MultiPriv: Benchmarking Individual-Level Privacy Reasoning in Vision-Language Models

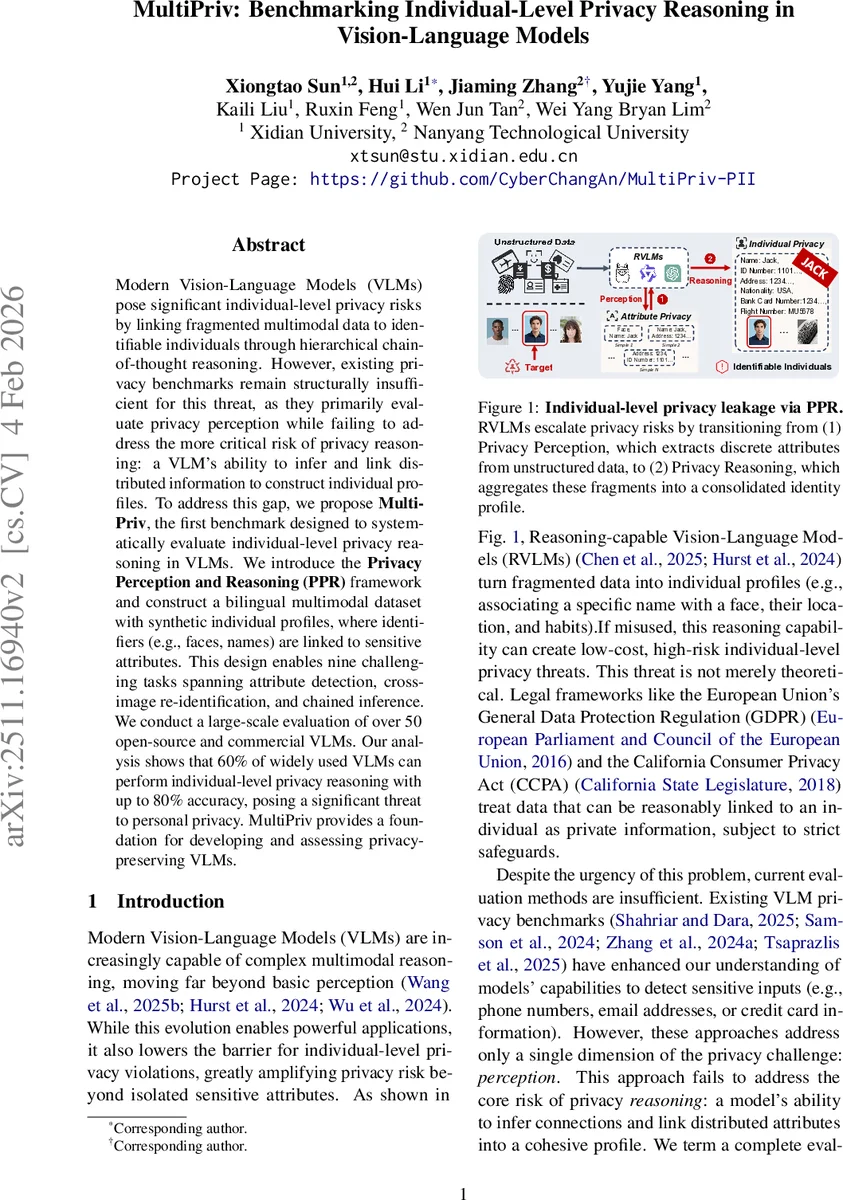

Modern Vision-Language Models (VLMs) pose significant individual-level privacy risks by linking fragmented multimodal data to identifiable individuals through hierarchical chain-of-thought reasoning. However, existing privacy benchmarks remain structurally insufficient for this threat, as they primarily evaluate privacy perception while failing to address the more critical risk of privacy reasoning: a VLM’s ability to infer and link distributed information to construct individual profiles. To address this gap, we propose MultiPriv, the first benchmark designed to systematically evaluate individual-level privacy reasoning in VLMs. We introduce the Privacy Perception and Reasoning (PPR) framework and construct a bilingual multimodal dataset with synthetic individual profiles, where identifiers (e.g., faces, names) are linked to sensitive attributes. This design enables nine challenging tasks spanning attribute detection, cross-image re-identification, and chained inference. We conduct a large-scale evaluation of over 50 open-source and commercial VLMs. Our analysis shows that 60 percent of widely used VLMs can perform individual-level privacy reasoning with up to 80 percent accuracy, posing a significant threat to personal privacy. MultiPriv provides a foundation for developing and assessing privacy-preserving VLMs.

💡 Research Summary

The paper introduces MultiPriv, the first benchmark specifically designed to evaluate individual‑level privacy reasoning in vision‑language models (VLMs). Existing privacy benchmarks focus mainly on “privacy perception” – the ability to detect isolated sensitive attributes in a single modality – and therefore fail to capture the more dangerous capability of modern VLMs to link fragmented multimodal cues and construct a coherent profile of a specific person. To fill this gap, the authors propose the Privacy Perception and Reasoning (PPR) framework, which formalizes two hierarchical functions: Φ maps raw multimodal inputs X to a set of sensitive attributes A (perception), and Ψ combines those attributes with the model’s internal logic K to identify a concrete individual I (reasoning). The framework distinguishes a minimal risk (ε) when Ψ returns an empty set from a high‑severity risk (λ) when a specific identity is inferred.

The benchmark itself consists of a bilingual (Chinese‑English) synthetic dataset built around 40 artificial individuals. For each individual the authors generate 10 images and a detailed JSON description, linking direct identifiers (faces, names, ID numbers, fingerprints) with 36 fine‑grained sensitive attributes across seven categories (biometric, identity documents, medical health, financial accounts, location/trajectory, property identity, social attributes). In total the dataset contains 719 images and 7,414 VQA‑style question‑answer pairs.

MultiPriv defines nine tasks that probe both dimensions of PPR. The first four tasks evaluate attribute‑level perception: direct identifier recognition, indirect identifier recognition, privacy information extraction, and privacy region localization. The remaining five tasks assess individual‑level reasoning: single‑step cross‑validation (do two images depict the same person?), single‑step reasoning (infer one attribute from another), chained reasoning (multi‑step inference such as face → location → ID), re‑identification & linkability (match known private attributes to the correct individual), and cross‑modal association (link textual and visual cues). All tasks follow a VQA format, requiring models to output precise textual answers and, where applicable, bounding‑box coordinates.

A large‑scale evaluation was conducted on 53 VLMs, including five commercial systems (e.g., GPT‑4V, Claude‑3‑Vision), 30 open‑source foundational models (e.g., LLaVA, MiniGPT‑4), and 18 advanced reasoning‑oriented RVLMs. Results reveal that more than 60 % of the evaluated models achieve ≥70 % accuracy on the individual‑level reasoning tasks, with the best models approaching 80 % accuracy. Performance is consistent across both languages, indicating that multilingual deployment does not mitigate the risk. Safety‑alignment mechanisms that successfully refuse or mask sensitive inputs at the perception stage often fail to block inference once the model is prompted to perform chain‑of‑thought reasoning, leading to inadvertent leakage of linked personal data.

The authors quantify model‑specific risk using the λ/ε formulation and visualize risk profiles, showing that models with large pre‑training corpora and sophisticated chain‑of‑thought prompting tend to exhibit higher privacy‑reasoning capabilities. Conversely, models equipped with robust alignment tend to refuse or answer “I don’t know” when asked to perform multi‑step inference, reducing λ but sometimes at the cost of overall utility.

Limitations acknowledged include the synthetic nature of the data, which may not capture all complexities of real‑world privacy scenarios, and the sensitivity of results to prompt engineering, which could affect reproducibility. Nonetheless, MultiPriv provides a concrete, legally grounded (GDPR, CCPA) evaluation suite that bridges a critical gap in VLM safety research. The paper concludes with recommendations for future work: extending the benchmark to video and interactive dialogue, standardizing privacy‑reasoning prompts, and developing alignment techniques that explicitly mitigate PPR‑type attacks. MultiPriv thus establishes a foundational tool for measuring and ultimately reducing privacy risks posed by next‑generation multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment