Hebrew Diacritics Restoration using Visual Representation

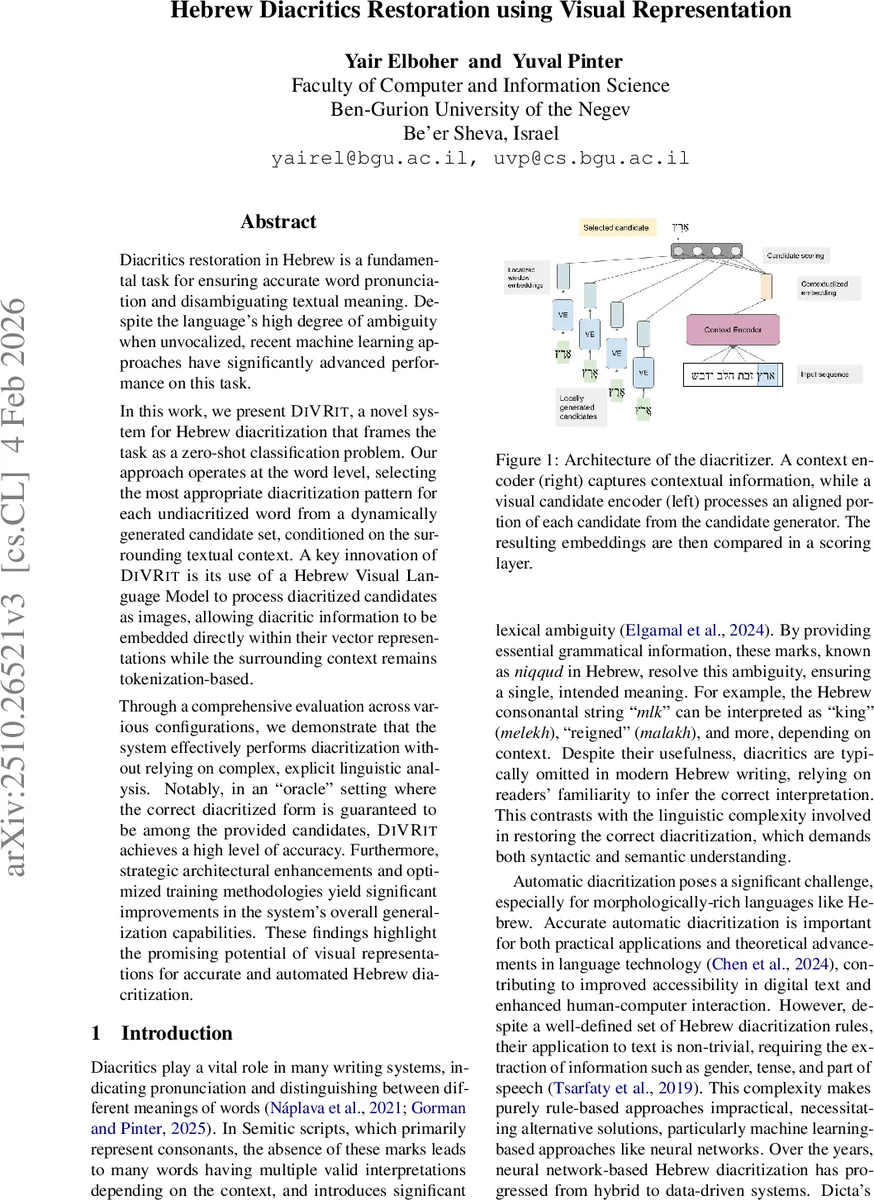

Diacritics restoration in Hebrew is a fundamental task for ensuring accurate word pronunciation and disambiguating textual meaning. Despite the language’s high degree of ambiguity when unvocalized, recent machine learning approaches have significantly advanced performance on this task. In this work, we present DiVRit, a novel system for Hebrew diacritization that frames the task as a zero-shot classification problem. Our approach operates at the word level, selecting the most appropriate diacritization pattern for each undiacritized word from a dynamically generated candidate set, conditioned on the surrounding textual context. A key innovation of DiVRit is its use of a Hebrew Visual Language Model to process diacritized candidates as images, allowing diacritic information to be embedded directly within their vector representations while the surrounding context remains tokenization-based. Through a comprehensive evaluation across various configurations, we demonstrate that the system effectively performs diacritization without relying on complex, explicit linguistic analysis. Notably, in an ``oracle’’ setting where the correct diacritized form is guaranteed to be among the provided candidates, DiVRit achieves a high level of accuracy. Furthermore, strategic architectural enhancements and optimized training methodologies yield significant improvements in the system’s overall generalization capabilities. These findings highlight the promising potential of visual representations for accurate and automated Hebrew diacritization.

💡 Research Summary

The paper introduces DiVRit, a novel system for Hebrew diacritics restoration that reframes the task as a zero‑shot classification problem at the word level. Instead of predicting niqqud symbols character‑by‑character, DiVRit first generates a small, context‑specific set of candidate diacritized forms for each undiacritized word using a k‑nearest‑neighbors (KNN) algorithm over a large diacritized corpus. Each candidate is rendered as an image and fed into a visual language model based on a Vision Transformer (ViT‑MAE) architecture, specifically a Hebrew‑adapted PIXEL model.

The visual candidate encoder is pretrained in two stages. The first stage uses masked image modeling on 2 GB of Hebrew Wikipedia and 9.8 GB of OSCAR data to learn generic visual text representations. The second stage continues masked modeling on a 3.4 M token diacritized corpus with a reduced masking ratio, allowing the model to capture the visual patterns of diacritic marks directly. Because the model operates on images, it naturally handles out‑of‑vocabulary words and avoids the need for a token vocabulary that would be unwieldy for diacritized forms.

Parallel to the visual encoder, a context encoder processes the surrounding undiacritized text. The authors experiment with both a standard token‑based transformer and hybrid visual‑text encoders. Both encoders output embeddings that are mean‑pooled and projected into a shared embedding space of identical dimensionality. Scoring is performed by a simple inner product between the context embedding and each candidate embedding; the candidate with the highest score is selected as the final diacritized word.

In an “oracle” setting—where the correct diacritized form is guaranteed to be among the generated candidates—DiVRit achieves 92.68 % word‑level accuracy, surpassing the previously reported 89.75 % of the state‑of‑the‑art Nakdimon system. In a realistic KNN‑based candidate generation scenario, the system reaches 87.87 % accuracy with a candidate set capped at five forms. The authors also explore architectural variations such as contrastive learning objectives, embedding normalization, and multimodal context encoders, all of which improve generalization.

The key contributions are: (1) framing diacritization as zero‑shot classification, allowing the model to treat each candidate as a distinct class; (2) leveraging visual representations of diacritized words so that diacritic information is embedded directly in the image space; (3) demonstrating that a vision‑language model can effectively rank candidates without explicit linguistic rules, handling noisy inputs, typographical errors, and OCR‑like distortions robustly.

Limitations include reliance on the KNN candidate generator, which depends on string similarity heuristics and may miss rare or novel diacritizations, leading to performance drops when the correct form is absent from the candidate set. Future work is suggested to replace the KNN step with neural generative models, expand multimodal pretraining, and increase the size and diversity of diacritized training data.

Overall, DiVRit showcases the promise of visual‑based, zero‑shot approaches for languages with omitted vowel markings, offering a flexible, language‑agnostic pipeline that could be extended to other Semitic scripts and low‑resource orthographies.

Comments & Academic Discussion

Loading comments...

Leave a Comment