UNO: Unifying One-stage Video Scene Graph Generation via Object-Centric Visual Representation Learning

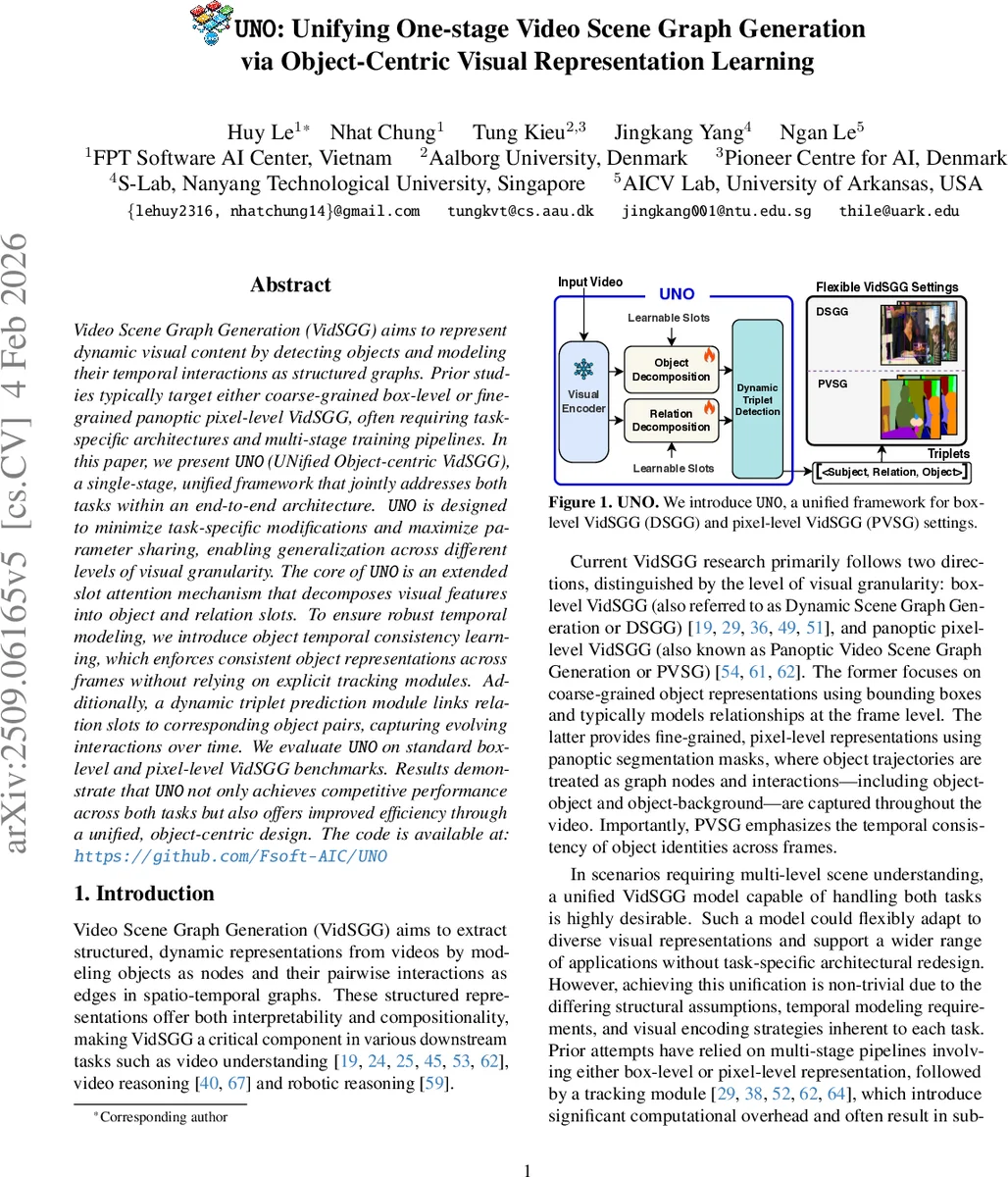

Video Scene Graph Generation (VidSGG) aims to represent dynamic visual content by detecting objects and modeling their temporal interactions as structured graphs. Prior studies typically target either coarse-grained box-level or fine-grained panoptic pixel-level VidSGG, often requiring task-specific architectures and multi-stage training pipelines. In this paper, we present UNO (UNified Object-centric VidSGG), a single-stage, unified framework that jointly addresses both tasks within an end-to-end architecture. UNO is designed to minimize task-specific modifications and maximize parameter sharing, enabling generalization across different levels of visual granularity. The core of UNO is an extended slot attention mechanism that decomposes visual features into object and relation slots. To ensure robust temporal modeling, we introduce object temporal consistency learning, which enforces consistent object representations across frames without relying on explicit tracking modules. Additionally, a dynamic triplet prediction module links relation slots to corresponding object pairs, capturing evolving interactions over time. We evaluate UNO on standard box-level and pixel-level VidSGG benchmarks. Results demonstrate that UNO not only achieves competitive performance across both tasks but also offers improved efficiency through a unified, object-centric design. Code is available at: https://github.com/Fsoft-AIC/UNO

💡 Research Summary

Video Scene Graph Generation (VidSGG) seeks to encode dynamic visual content as structured graphs, where nodes represent objects and edges capture their temporal interactions. Historically, research has split into two distinct streams. The first, Dynamic Scene Graph Generation (DSGG), works at a coarse granularity using bounding boxes; it typically processes each frame independently or with simple frame‑level tracking, and predicts relationships between detected objects. The second, Panoptic Video Scene Graph Generation (PVSG), operates at a fine granularity by representing each object with a pixel‑level mask tube that spans the entire video, thereby enforcing strong temporal identity consistency. Because these streams differ in visual granularity, temporal modeling, and required output formats, prior works have relied on multi‑stage pipelines (detection → tracking → relationship classification) and task‑specific architectures, leading to high computational cost and limited flexibility.

UNO (UNified Object‑centric VidSGG) proposes a single‑stage, end‑to‑end framework that simultaneously handles both DSGG and PVSG. Its design follows three guiding principles: (1) minimize task‑specific modules and share parameters across tasks; (2) adopt an object‑centric latent representation that can serve both bounding‑box and mask predictions; (3) enforce temporal consistency without an explicit tracking module. The backbone consists of a frozen pre‑trained visual encoder (e.g., ResNet‑101) that extracts a high‑level feature map fₜ for each frame Iₜ. These feature maps are fed into an extended slot attention module that learns two sets of slots: N object slots sₜ and M relation slots rₜ. Unlike classic slot attention, which samples slots from a prior, UNO initializes slots as learnable tokens and iteratively updates them via a Query‑Key‑Value attention mechanism followed by a GRU. The attention weights are normalized across slots, encouraging competition so that each slot specializes in a distinct semantic region.

To achieve temporal coherence, UNO introduces Object Temporal Consistency Learning (OTCL). OTCL comprises a “pull” loss that aligns each object slot in frame t with its counterpart in frame t‑1 using cosine similarity, and a “push” loss that penalizes abrupt changes when an object disappears, preventing a slot from being reassigned to a new object prematurely. This dual loss enforces smooth slot trajectories across time, effectively providing implicit tracking.

The dynamic triplet prediction module bridges relation slots with appropriate subject‑object pairs. Rather than enumerating all N² possible pairs, the module computes an attention score between each relation slot and every ordered object pair, then selects the highest‑scoring pairs. This reduces redundancy, improves efficiency, and yields a compact set of triplets {subject, relation, object} that evolve over the video.

Training optimizes a weighted sum of three components: (i) object detection loss (L1 + IoU for boxes, Dice + mask cross‑entropy for masks), (ii) relation classification cross‑entropy, and (iii) OTCL loss (pull + push). The overall loss is λ₁·L_obj + λ₂·L_rel + λ₃·L_OTCL. Task‑specific heads decode object slots into either bounding boxes or panoptic masks, while relation slots are decoded into predicate labels.

Experiments evaluate UNO on two benchmarks: Action Genome for DSGG and the PVSG dataset for panoptic scene graphs. Metrics include mean average precision (mAP@0.5), Recall@K, FLOPs, and inference latency. UNO outperforms state‑of‑the‑art one‑stage methods such as OED on DSGG, achieving a 2–3 % absolute gain in mAP, and surpasses leading PVSG approaches in mask IoU and relationship accuracy. Importantly, because UNO eliminates separate detection, tracking, and graph assembly stages, it reduces FLOPs by over 40 % and speeds up inference by roughly 30 % compared to multi‑stage pipelines, while also cutting total parameter count by about 25 %.

The paper acknowledges limitations: the number of slots (N, M) is fixed, which may constrain performance on scenes with many objects; very small or heavily occluded objects can be missed due to slot competition; and the current design assumes uniform frame sampling, potentially weakening robustness to rapid motion or abrupt scene cuts. Future work could explore adaptive slot allocation, more sophisticated temporal consistency mechanisms, and multimodal extensions (audio, text) to further enrich scene graph generation.

In summary, UNO demonstrates that a unified object‑centric representation, coupled with implicit temporal consistency learning and a dynamic triplet linking strategy, can bridge the gap between coarse box‑level and fine mask‑level video scene graph generation. It delivers competitive or superior accuracy while markedly improving computational efficiency and simplifying the overall pipeline, offering a promising foundation for downstream video understanding and robotic perception tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment