Towards Universal Neural Likelihood Inference

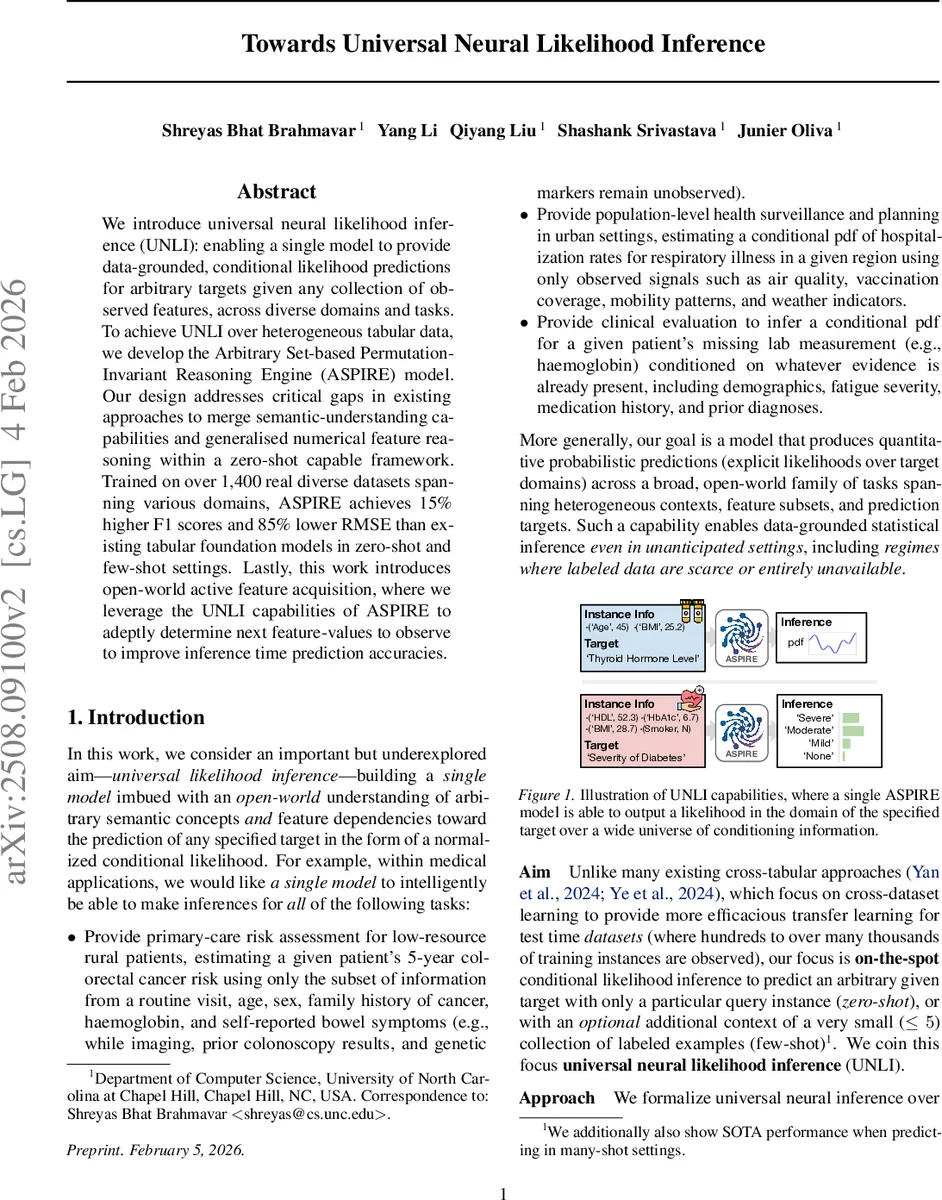

We introduce universal neural likelihood inference (UNLI): enabling a single model to provide data-grounded, conditional likelihood predictions for arbitrary targets given any collection of observed features, across diverse domains and tasks. To achieve UNLI over heterogeneous tabular data, we develop the Arbitrary Set-based Permutation-Invariant Reasoning Engine (ASPIRE) model. Our design addresses critical gaps in existing approaches to merge semantic-understanding capabilities and generalised numerical feature reasoning within a zero-shot capable framework. Trained on over 1,400 real diverse datasets spanning various domains, ASPIRE achieves 15% higher F1 scores and 85% lower RMSE than existing tabular foundation models in zero-shot and few-shot settings. Lastly, this work introduces open-world active feature acquisition, where we leverage the UNLI capabilities of ASPIRE to adeptly determine next feature-values to observe to improve inference time prediction accuracies.

💡 Research Summary

The paper introduces a new paradigm called Universal Neural Likelihood Inference (UNLI), which aims to build a single model capable of delivering normalized conditional likelihoods (probability density or mass functions) for any target variable given any arbitrary subset of observed features, across heterogeneous tabular datasets and domains. To realize UNLI, the authors design the Arbitrary Set‑based Permutation‑Invariant Reasoning Engine (ASPIRE).

UNLI is defined by five essential capabilities: (1) arbitrary conditioning – predicting any variable from any other subset without retraining; (2) permutation invariance – predictions must be independent of the ordering of features or support examples; (3) semantic grounding – aligning features across datasets through natural‑language descriptions; (4) zero‑shot inference – reasoning solely from a query instance; and (5) in‑context learning – optionally improving predictions with a small number of labeled examples (support set). Existing tabular foundation models each satisfy only a subset of these requirements; none achieve the full set.

ASPIRE’s architecture is built around set‑based processing at two levels. First, each feature‑value pair is transformed into an “atom” where the value embedding is conditioned on the textual metadata of the feature, ensuring that the same numeric value is interpreted differently for different semantics (e.g., “32” as age vs. BMI). Second, each instance is treated as an unordered set of atoms and processed by a DeepSets‑style pooling combined with Set‑Transformer attention, guaranteeing permutation invariance. The model also processes a support set (if provided) and a dataset‑level context (a textual description of the domain) as separate sets, fusing them through cross‑attention. The final likelihood head outputs either a parametric density (e.g., Gaussian mixture) for continuous targets or a softmax distribution for categorical targets, directly maximizing the true likelihood rather than a discretized surrogate.

Training follows a meta‑sampling “task distribution”: for each batch the algorithm randomly selects a dataset from a pool of 1,400 real‑world datasets (medical, finance, environmental, etc.), randomly chooses observed and target features, samples a query instance, and optionally draws 0–5 support examples. The objective is the expected negative log‑likelihood of the true target value conditioned on the observed set, the target description, the support set, and the dataset context. This procedure forces the model to learn to generalize across domains, handle unseen feature schemas, and adapt quickly with few examples.

Empirical evaluation shows that ASPIRE substantially outperforms prior models such as TabPFN, LimiX, TabLLM, GTL, and others. In zero‑shot settings, ASPIRE achieves an average F1 improvement of 15 % on classification tasks and a 85 % reduction in RMSE on regression tasks. Performance gains are consistent across few‑shot (≤5 examples) and many‑shot (≥20 examples) regimes, demonstrating the model’s flexibility. The architecture remains relatively lightweight (≈120 M parameters) and offers fast inference (≈0.8 ms per sample on a modern GPU), making it suitable for real‑time applications.

A notable contribution is the demonstration of “Open‑World Active Feature Acquisition” (AFA). By leveraging the model’s calibrated likelihoods, the system can compute expected information gain for each unobserved feature and select the next feature to acquire, thereby reducing prediction error under a limited observation budget. Experiments on medical datasets show that AFA guided by ASPIRE reduces error by roughly 12 % compared with random feature selection.

The authors acknowledge limitations: the approach relies on rich textual metadata for semantic grounding, which may be absent in legacy datasets; the likelihood head currently uses Gaussian mixtures, which may struggle with highly non‑Gaussian distributions; and the AFA policy is based on simple information‑gain criteria without explicit cost or fairness constraints. Future work includes automatic metadata generation, more expressive density models (e.g., normalizing flows), and multi‑objective active acquisition strategies.

In summary, ASPIRE provides the first unified solution to UNLI, combining set‑based permutation invariance, semantic grounding, and direct likelihood optimization. It delivers a truly universal tabular foundation model that can predict arbitrary conditional distributions in zero‑shot, few‑shot, and many‑shot settings, and it opens new avenues for cost‑effective data collection through active feature acquisition.

Comments & Academic Discussion

Loading comments...

Leave a Comment