QuantVSR: Low-Bit Post-Training Quantization for Real-World Video Super-Resolution

Diffusion models have shown superior performance in real-world video super-resolution (VSR). However, the slow processing speeds and heavy resource consumption of diffusion models hinder their practical application and deployment. Quantization offers a potential solution for compressing the VSR model. Nevertheless, quantizing VSR models is challenging due to their temporal characteristics and high fidelity requirements. To address these issues, we propose QuantVSR, a low-bit quantization model for real-world VSR. We propose a spatio-temporal complexity aware (STCA) mechanism, where we first utilize the calibration dataset to measure both spatial and temporal complexities for each layer. Based on these statistics, we allocate layer-specific ranks to the low-rank full-precision (FP) auxiliary branch. Subsequently, we jointly refine the FP and low-bit branches to achieve simultaneous optimization. In addition, we propose a learnable bias alignment (LBA) module to reduce the biased quantization errors. Extensive experiments on synthetic and real-world datasets demonstrate that our method obtains comparable performance with the FP model and significantly outperforms recent leading low-bit quantization methods. Code is available at: https://github.com/bowenchai/QuantVSR.

💡 Research Summary

QuantVSR tackles the pressing problem of deploying diffusion‑based video super‑resolution (VSR) models on resource‑constrained devices. While diffusion models such as MGLD‑VSR deliver state‑of‑the‑art visual quality, their multi‑step denoising pipeline is computationally heavy, making real‑time inference impractical. Existing post‑training quantization (PTQ) techniques, successful for image generation and large language models, fall short for VSR because (1) quantization introduces frame‑wise inconsistent errors that break temporal coherence, and (2) VSR features exhibit complex spatio‑temporal distributions that are difficult to represent with a limited set of integer levels.

The authors propose a two‑pronged solution: a Spatio‑Temporal Complexity Aware (STCA) mechanism and a Learnable Bias Alignment (LBA) module.

STCA first measures, on a calibration dataset, the temporal complexity (C_t) (average inter‑frame difference energy) and spatial complexity (C_s) (average channel‑wise spatial variance) for each layer’s input tensor. Based on upper and lower thresholds for both metrics, a layer‑specific rank (r) is assigned to a low‑rank full‑precision (FP) branch. The original weight matrix (W) is decomposed into (L_1L_2 + R), where (L_1\in\mathbb{R}^{m\times r}) and (L_2\in\mathbb{R}^{r\times n}) constitute the FP branch, while the residual (R) is processed by a low‑bit branch. Before quantization, inputs and weights of the low‑bit branch are smoothed with a randomized Hadamard transform to mitigate outliers, after which standard integer quantization (e.g., 4‑bit or 6‑bit) and de‑quantization are applied. By allocating higher ranks to layers that handle temporally or spatially complex features, STCA preserves crucial information while keeping the overall computational budget low.

Dual‑branch refinement jointly optimizes the FP and low‑bit branches using a mean‑squared‑error (MSE) loss between the full‑precision model output and the quantized model output. The straight‑through estimator (STE) is employed to back‑propagate through the non‑differentiable rounding operation.

Even with aggressive rank reduction, low‑bit quantization inevitably introduces a systematic bias because the scale and zero‑point are coarse approximations of the original distribution. To correct this, the LBA module learns an additive bias vector for each layer, trained in a final stage while freezing all other parameters. This bias aligns the quantized activations with their full‑precision counterparts, dramatically reducing the error especially in the 4‑bit regime.

The training pipeline consists of three stages: (1) compute (C_t) and (C_s) on a calibration set and allocate ranks, (2) fine‑tune the low‑rank matrices (L_1, L_2) and the residual (R) for both branches, and (3) train the LBA module.

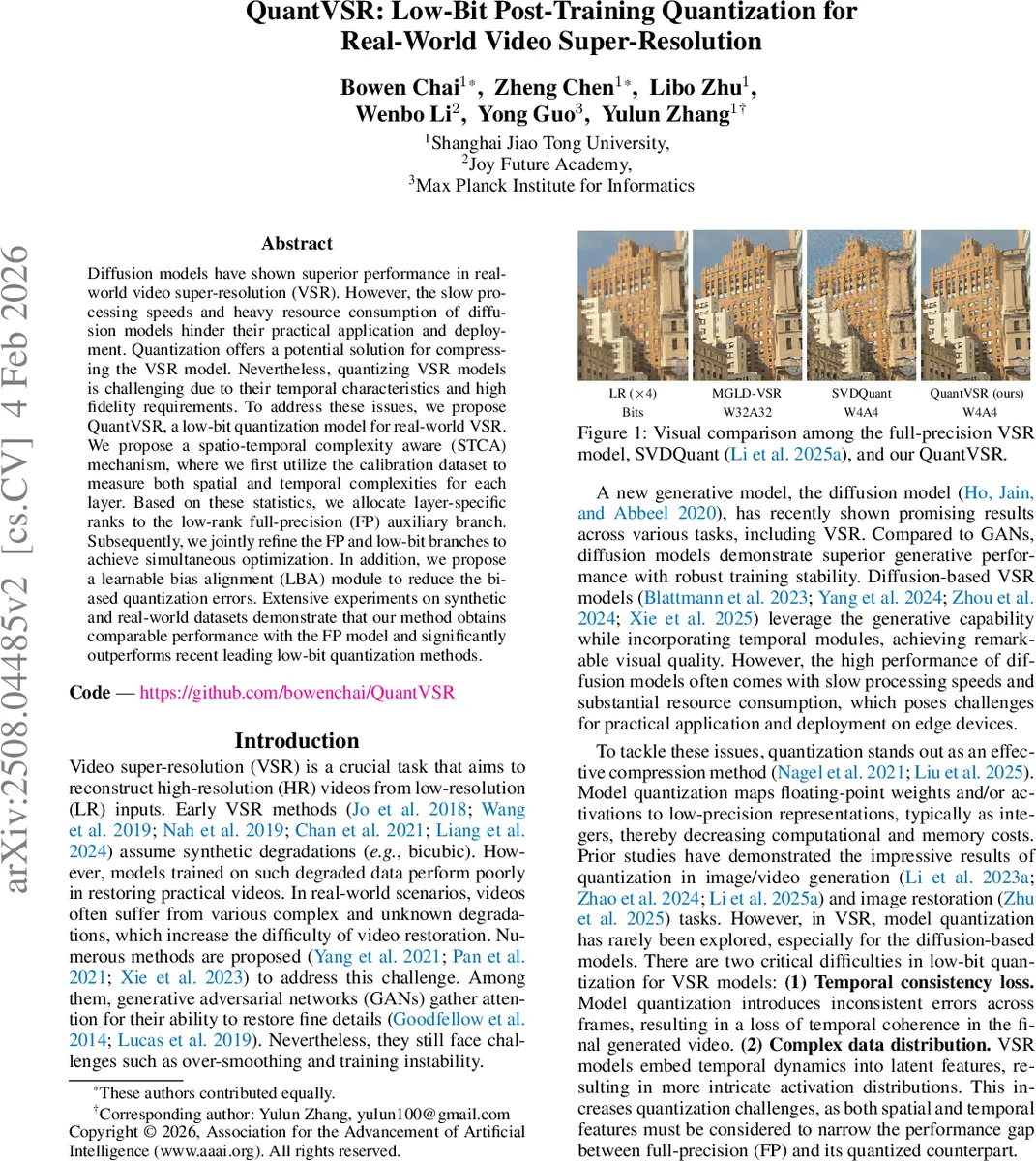

Extensive experiments are conducted on synthetic benchmarks and the real‑world MVSR4x dataset. QuantVSR with 4‑bit quantization achieves PSNR/SSIM drops of less than 0.1 dB/0.001 compared to the full‑precision baseline, while reducing parameters by 84.39 % and FLOPs by 82.56 %. Visual comparisons show negligible blurring or temporal flickering. Compared against recent low‑bit VSR quantizers such as ViDiT‑Q and SVDQuant, QuantVSR consistently outperforms them by 0.3–0.5 dB in PSNR. Ablation studies confirm that (a) removing the STCA rank‑allocation leads to a >0.6 dB PSNR loss, and (b) omitting LBA causes a significant bias increase, especially at 4‑bit.

The paper’s contributions are threefold: (1) introducing the first low‑bit PTQ framework tailored for diffusion‑based VSR, (2) devising a spatio‑temporal complexity‑driven rank allocation that balances accuracy and efficiency, and (3) proposing a learnable bias alignment to mitigate low‑bit quantization bias. QuantVSR demonstrates that high‑quality video super‑resolution can be brought to edge devices without sacrificing visual fidelity, opening the door for practical deployment of diffusion models in real‑time video applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment