DiaryPlay: AI-Assisted Authoring of Interactive Vignettes for Everyday Storytelling

An interactive vignette is a popular and immersive visual storytelling approach that invites viewers to role-play a character and influence the narrative in an interactive environment. However, everyday storytellers have not yet widely adopted it because its authoring complexity conflicts with the immediacy of everyday storytelling. We introduce DiaryPlay, an AI-assisted authoring system for interactive vignette creation in everyday storytelling. It takes a natural-language story as input and extracts the three core elements of an interactive vignette (environment, characters, and events), enabling authors to focus on refining these elements instead of constructing them from scratch. Then, an LLM-powered narrative planner automatically transforms the single-branch story input into a branch-and-bottleneck structure, which enables flexible viewer interactions while freeing the author from writing multiple branches. A technical evaluation (N=16) shows that DiaryPlay-generated character activities are on par with human-authored ones regarding believability. A user study (N=16) shows that DiaryPlay effectively supports authors in creating interactive vignette elements, maintains authorial intent while reacting to viewer interactions, and provides engaging viewing experiences.

💡 Research Summary

DiaryPlay addresses a clear gap in the current landscape of interactive storytelling: everyday users want to turn their casual, often single‑branch narratives into immersive, role‑playing experiences, yet existing authoring tools (e.g., Unity, RPGMaker, Roblox Studio) demand months of development, extensive asset creation, and complex branching logic. The authors first conducted a formative study with 13 active social‑media users, which confirmed a desire for interactive vignettes that preserve the immediacy of everyday storytelling while offering the audience a first‑person perspective. From this they derived three design goals: (1) lightweight authoring that matches the effort of everyday storytelling, (2) faithful conveyance of the author’s core message, and (3) engaging viewing experiences with situation‑aware NPCs in a branching narrative.

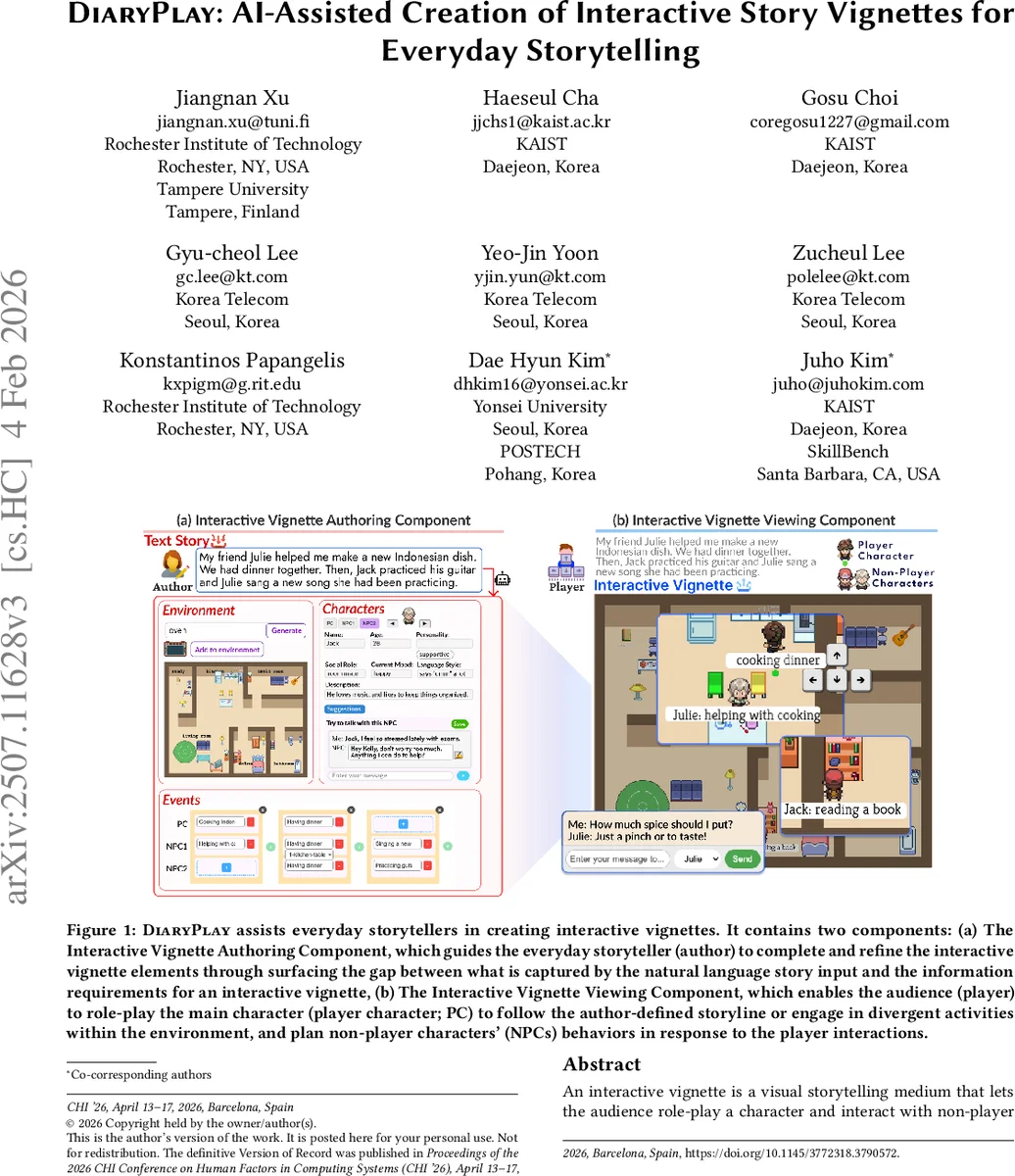

DiaryPlay implements these goals through two tightly coupled components. The Authoring Component takes a natural‑language story as input and, using a large language model (LLM), automatically extracts the three canonical vignette elements defined by Zhao et al.—environment, characters, and events. The system visualizes these elements (e.g., a scene layout populated with objects mentioned in the text, character avatars linked to events) and lets the author quickly edit, add, or remove items, thereby keeping the human‑in‑the‑loop paradigm while offloading the bulk of low‑level work to AI.
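To make the three-element decomposition concrete, the following Python sketch models an extracted vignette as plain data classes. The names `Vignette`, `Character`, `Event`, `remove_object`, and `unmentioned_objects` are hypothetical illustrations, not DiaryPlay's actual schema, and the LLM extraction step itself is omitted: the elements are filled in by hand as if an LLM had returned them. The `unmentioned_objects` helper is an assumed, deliberately crude substring check an author could use to spot extracted objects that the story never names.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Hypothetical schema for the three vignette elements; field names are
# illustrative and not taken from DiaryPlay itself.


@dataclass
class Character:
    name: str
    persona: str  # short free-text personality description


@dataclass
class Event:
    actor: str                    # name of the character performing the event
    action: str                   # natural-language description of the event
    target: Optional[str] = None  # object the event involves, if any


@dataclass
class Vignette:
    environment: list[str] = field(default_factory=list)  # object names in the scene
    characters: list[Character] = field(default_factory=list)
    events: list[Event] = field(default_factory=list)

    def remove_object(self, obj: str) -> None:
        """Author edit: drop an object and every event that targets it."""
        self.environment = [o for o in self.environment if o != obj]
        self.events = [e for e in self.events if e.target != obj]

    def unmentioned_objects(self, story: str) -> list[str]:
        """Crude review cue: objects whose names never appear verbatim in the story."""
        text = story.lower()
        return [o for o in self.environment if o.lower() not in text]


if __name__ == "__main__":
    story = "I brewed coffee in the kitchen while Mina read a novel on the sofa."
    # Elements filled in by hand, as if returned by an LLM extraction step.
    v = Vignette(
        environment=["coffee machine", "sofa", "novel"],
        characters=[Character("Me", "sleepy but cheerful"), Character("Mina", "quiet bookworm")],
        events=[Event("Me", "brew coffee", "coffee machine"), Event("Mina", "read", "novel")],
    )
    print(v.unmentioned_objects(story))  # ['coffee machine']
    v.remove_object("coffee machine")
    print(v.environment)  # ['sofa', 'novel']
```

Because the check is a literal substring match, "coffee machine" is flagged even though the story mentions coffee. The helper can therefore only surface candidates for the author to confirm or delete; it does not decide anything on its own.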

The Viewing Component delivers the interactive experience to the audience. Its core is the Controlled Divergence (CD) module, an LLM‑driven narrative planner that converts the single‑branch input story into a “branch‑and‑bottleneck” structure. The bottleneck represents the author’s intended main storyline; surrounding branches are small, optional divergences that the player may explore without derailing the core narrative. At runtime the CD module monitors player actions, evaluates their impact on the current bottleneck, and generates NPC behaviors that satisfy two constraints simultaneously: (a) persona consistency—NPC actions must align with the character’s predefined personality traits, and (b) narrative coherence—NPC actions must also make sense within the story’s logical flow. This is achieved through carefully engineered prompts and post‑processing rules that guide the LLM to balance these sometimes competing objectives.
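The branch-and-bottleneck idea can be sketched without an LLM by replacing the model's judgments with explicit checks. In this hypothetical Python sketch, bottlenecks are the author's main-story events in order, and a player action either reaches the next bottleneck or counts as an optional divergence. Candidate NPC actions are filtered by two assumed set-based tests that stand in for the CD module's LLM reasoning: persona consistency (the traits an action expresses must belong to the NPC's traits) and narrative coherence (the story facts an action presupposes must already hold). Preferring actions that steer back toward the next bottleneck is also an assumption. All names (`BranchBottleneckPlanner`, `Candidate`, `steers_to_bottleneck`) are illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Hypothetical, LLM-free sketch of a branch-and-bottleneck planner.
# Explicit set checks stand in for the judgments DiaryPlay delegates to an LLM.


@dataclass
class Candidate:
    npc: str
    action: str
    traits: set[str]            # personality traits this action expresses
    requires: set[str]          # story facts that must already hold
    steers_to_bottleneck: bool  # nudges the viewer back toward the main storyline


@dataclass
class BranchBottleneckPlanner:
    bottlenecks: list[str]         # author's main-story events, in order
    personas: dict[str, set[str]]  # NPC name -> traits consistent with its persona
    facts: set[str] = field(default_factory=set)
    next_idx: int = 0              # index of the next bottleneck to reach

    def observe(self, player_action: str) -> bool:
        """Record a player action; return True if it reaches the next bottleneck."""
        self.facts.add(player_action)
        if self.next_idx < len(self.bottlenecks) and player_action == self.bottlenecks[self.next_idx]:
            self.next_idx += 1
            return True
        return False  # a divergence: an optional branch off the main storyline

    def pick_npc_action(self, candidates: list[Candidate]) -> Optional[Candidate]:
        """Keep candidates passing both constraints; prefer ones that steer back."""
        valid = [
            c for c in candidates
            if c.traits <= self.personas.get(c.npc, set())  # persona consistency
            and c.requires <= self.facts                     # narrative coherence
        ]
        # Stable sort: steering candidates first, original order otherwise kept.
        valid.sort(key=lambda c: not c.steers_to_bottleneck)
        return valid[0] if valid else None


if __name__ == "__main__":
    planner = BranchBottleneckPlanner(
        bottlenecks=["enter cafe", "order coffee", "talk to Mina"],
        personas={"Mina": {"shy", "kind"}},
    )
    planner.observe("enter cafe")        # reaches bottleneck 0
    planner.observe("look at painting")  # divergence
    choice = planner.pick_npc_action([
        Candidate("Mina", "shout across the room", {"loud"}, set(), True),
        Candidate("Mina", "comment on the painting", {"kind"}, {"look at painting"}, False),
        Candidate("Mina", "wave shyly toward the counter", {"shy"}, {"enter cafe"}, True),
    ])
    print(choice.action if choice else None)  # wave shyly toward the counter
```

The shouting candidate is rejected on persona grounds, and of the two remaining candidates the one flagged as steering back toward the next bottleneck (here, "order coffee") wins. In DiaryPlay these judgments come from prompting an LLM, which can weigh free-text personas and story context rather than hand-labelled tags.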

The technical evaluation involved 16 participants who compared NPC activities generated by the CD module against human‑authored equivalents across eight pre‑designed stories. Participants rated believability, character consistency, and narrative fit on a 5‑point Likert scale; the AI‑generated content averaged 4.3/5, only 0.1 points below the human baseline, indicating that the CD module's NPC activities are on par with human‑authored ones in believability.

A subsequent user study (N = 16) measured the end‑to‑end authoring experience. Participants completed a full vignette in an average of 20 minutes, far less time than traditional development pipelines require. Post‑study surveys reported high satisfaction with the reduced effort (4.6/5) and with how well the intended story message came across (4.5/5). Qualitative feedback highlighted the value of “meaningful micro‑divergences”: when players deviated from the main plot, the system introduced small, context‑appropriate events that enriched the experience without breaking the author’s narrative intent. Viewing sessions indicated that audiences could still grasp the core story after exploring divergent paths, and that NPC reactions felt natural and responsive to player actions.

The authors acknowledge several limitations. First, LLMs can hallucinate, occasionally producing objects or dialogues that do not exist in the original story, requiring author oversight. Second, the current prototype targets 2D or low‑fidelity visual environments; scaling to high‑resolution 3D worlds would demand additional asset pipelines. Third, the participant pool is relatively small and culturally homogeneous, so broader generalization remains to be tested. Future work is proposed in three directions: (1) integrating multimodal inputs (images, audio) to enrich scene generation, (2) extending the system to support more sophisticated graphics engines, and (3) conducting large‑scale, cross‑cultural evaluations to validate robustness and appeal.

In sum, DiaryPlay demonstrates that a well‑designed human‑AI collaboration can dramatically lower the barrier for everyday storytellers to produce interactive, role‑playing vignettes. By automatically extracting story elements and employing a controlled‑divergence narrative planner, the system preserves authorial intent while offering audiences a compelling, immersive experience—opening a new avenue for personal digital storytelling beyond static text, photos, or videos.
