Language models can learn implicit multi-hop reasoning, but only if they have lots of training data

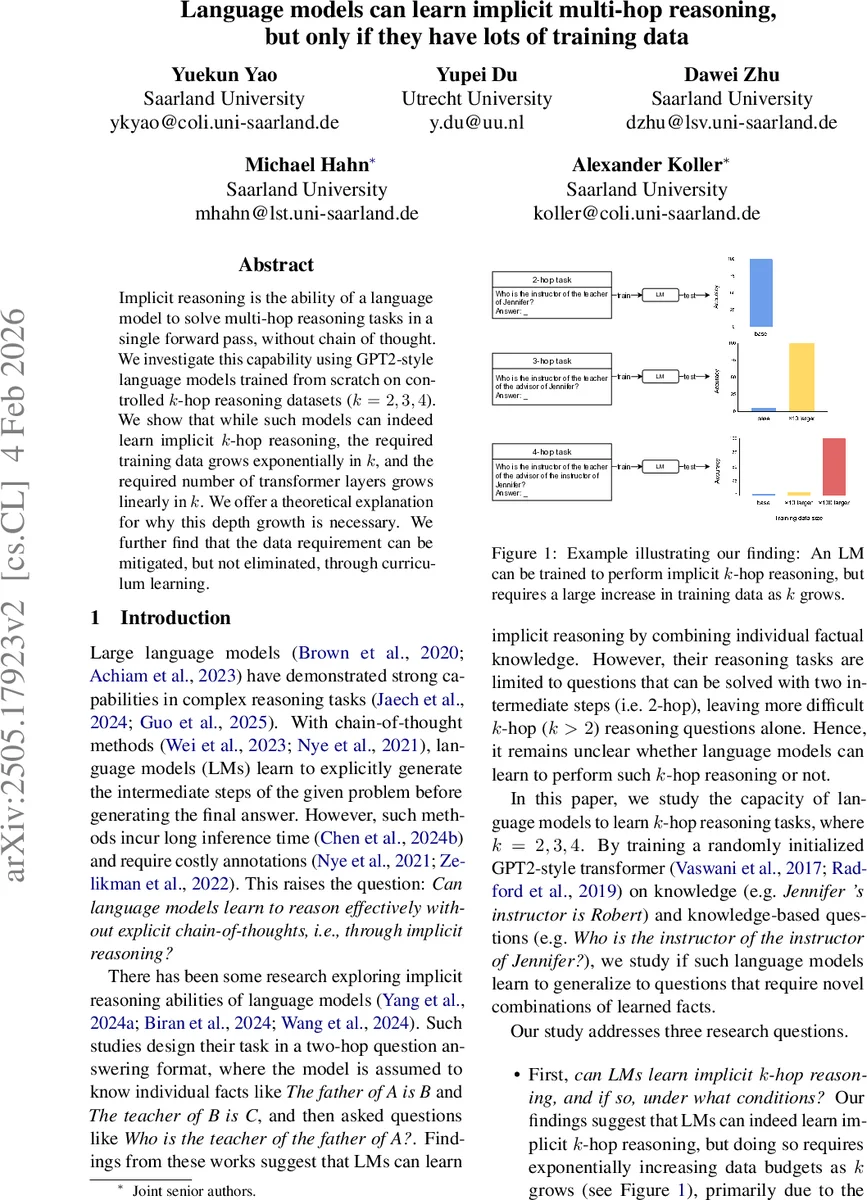

Implicit reasoning is the ability of a language model to solve multi-hop reasoning tasks in a single forward pass, without chain of thought. We investigate this capability using GPT2-style language models trained from scratch on controlled $k$-hop reasoning datasets ($k = 2, 3, 4$). We show that while such models can indeed learn implicit $k$-hop reasoning, the required training data grows exponentially in $k$, and the required number of transformer layers grows linearly in $k$. We offer a theoretical explanation for why this depth growth is necessary. We further find that the data requirement can be mitigated, but not eliminated, through curriculum learning.

💡 Research Summary

This paper investigates whether language models can perform multi‑hop reasoning implicitly, i.e., without generating an explicit chain‑of‑thought. The authors train GPT‑2‑style transformers from scratch on synthetic knowledge‑based datasets that require 2‑, 3‑, and 4‑hop reasoning. Each dataset consists of (1) an “entity profile” that lists all 1‑hop facts about a given entity in natural language, and (2) a question that asks for the result of composing k relations, e.g., “Who is the instructor of the instructor of Jennifer?”. The synthetic data are generated by sampling a set of entities and relations, arranging entities in five hierarchical layers, and linking each entity to a fixed number of randomly chosen upper‑layer entities via distinct relations. This construction guarantees that any k‑hop query starting from a bottom‑layer entity has a unique answer.

The models use the smallest GPT‑2 architecture (12 layers, ~124 M parameters) with Rotary Position Embeddings and an extended vocabulary that includes all entity names. Training is performed with a causal language‑modeling loss, a cosine learning‑rate schedule, and 20 k optimization steps (40 k for the hardest 4‑hop large setting). For each k‑hop task the authors vary the training data budget by scaling the number of reasoning questions relative to a base 2‑hop budget (×1, ×2, ×5, ×10, ×20, ×50, ×100). Test accuracy is measured on 3 000 held‑out questions per task (1 000 for the small 2‑hop set) using greedy decoding.

Key findings:

- Implicit multi‑hop reasoning is learnable – With a sufficiently large training budget, the models achieve near‑perfect (≈100 %) accuracy on 2‑, 3‑, and 4‑hop tasks, despite never being given explicit intermediate steps.

- Data requirement grows exponentially with the number of hops – For the small dataset, 3‑hop learning needs at least a 5× increase over the 2‑hop budget, and 4‑hop needs ≥20×. For the large dataset the requirements are even steeper: ≥10× for 3‑hop and ≥100× for 4‑hop. This mirrors the combinatorial explosion of possible fact chains (|R|^k).

- Model depth must increase linearly with k – Successful 4‑hop performance requires the full 12‑layer transformer; analysis of hidden states shows a layer‑wise progression where shallow layers encode 1‑hop information, deeper layers encode subsequent hops. The authors formalize a lower bound (Theorem 5.1) showing that, for the transformer architecture, depth proportional to the number of reasoning steps is unavoidable.

- Curriculum learning mitigates but does not eliminate the data burden – Training first on easier m‑hop tasks (m < k) and then gradually introducing the target k‑hop task dramatically reduces the data needed to reach high accuracy. Simply mixing m‑hop and k‑hop examples yields only modest gains.

- Interpretability aligns with prior work – Probing experiments reveal that each layer systematically predicts the intermediate “bridge” entity for its corresponding hop, confirming a step‑by‑step internal reasoning process similar to findings by Biran et al. (2024) and Wang et al. (2024).

Additional experiments with pretrained GPT‑2 and larger variants reproduce the same trends, reinforcing that the phenomenon is not an artifact of the tiny model. The authors release code and datasets to facilitate further study.

In summary, the paper demonstrates that implicit multi‑hop reasoning is theoretically possible for language models, but practical deployment demands exponentially more training examples as the reasoning depth grows and a linearly deeper architecture. Curriculum learning offers a useful strategy to lower the data ceiling, yet the fundamental scaling challenge remains. This work clarifies why current large language models often fail on complex implicit reasoning tasks and points toward data‑efficient training curricula as a promising direction for future research.

Comments & Academic Discussion

Loading comments...

Leave a Comment