Bringing Diversity from Diffusion Models to Semantic-Guided Face Asset Generation

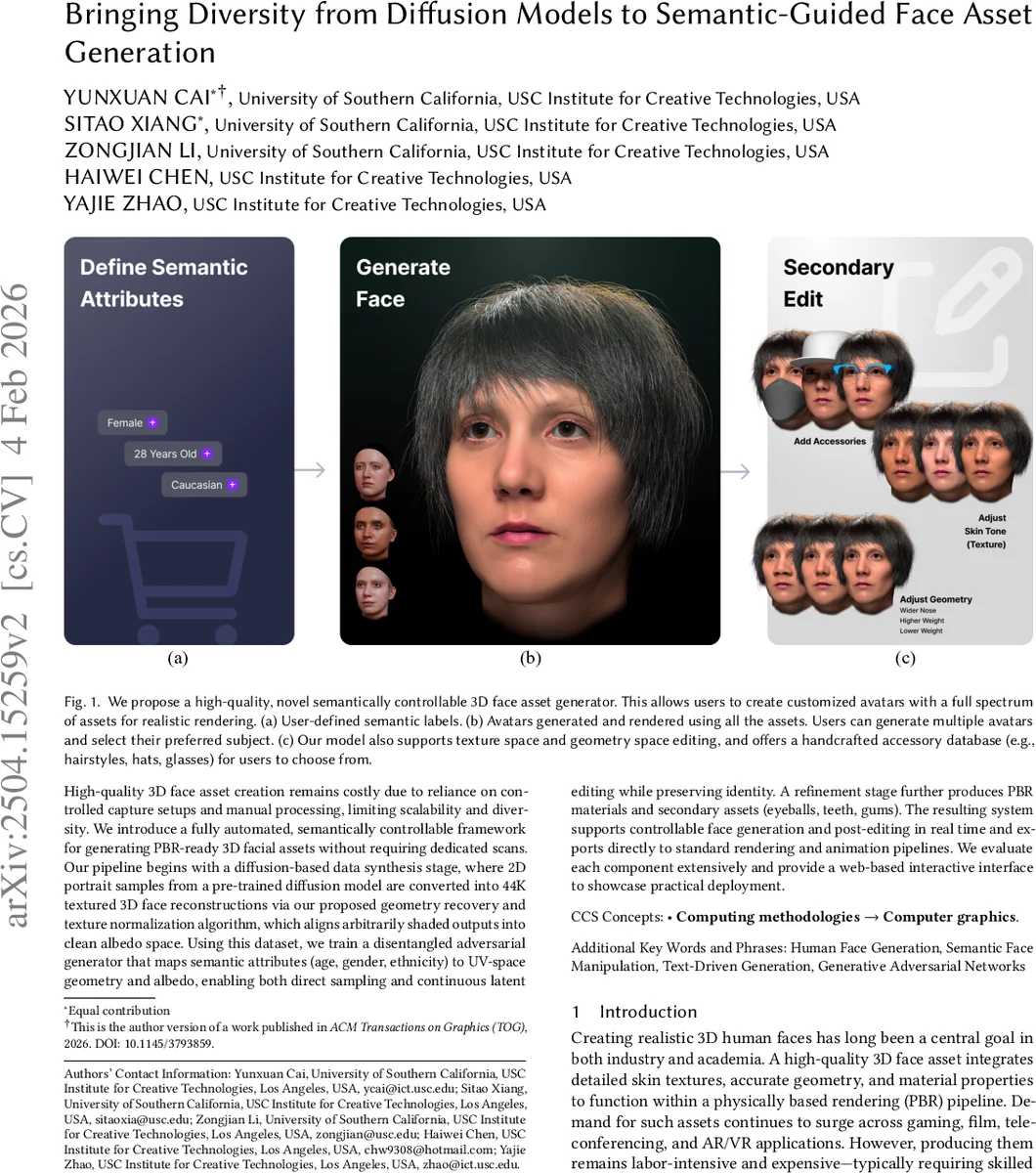

Digital modeling and reconstruction of human faces serve various applications. However, its availability is often hindered by the requirements of data capturing devices, manual labor, and suitable actors. This situation restricts the diversity, expressiveness, and control over the resulting models. This work aims to demonstrate that a semantically controllable generative network can provide enhanced control over the digital face modeling process. To enhance diversity beyond the limited human faces scanned in a controlled setting, we introduce a novel data generation pipeline that creates a high-quality 3D face database using a pre-trained diffusion model. Our proposed normalization module converts synthesized data from the diffusion model into high-quality scanned data. Using the 44,000 face models we obtained, we further developed an efficient GAN-based generator. This generator accepts semantic attributes as input, and generates geometry and albedo. It also allows continuous post-editing of attributes in the latent space. Our asset refinement component subsequently creates physically-based facial assets. We introduce a comprehensive system designed for creating and editing high-quality face assets. Our proposed model has undergone extensive experiment, comparison and evaluation. We also integrate everything into a web-based interactive tool. We aim to make this tool publicly available with the release of the paper.

💡 Research Summary

The paper presents a complete pipeline for generating high‑quality, semantically controllable 3D face assets without the need for costly capture studios. The authors first exploit a pre‑trained image diffusion model (e.g., Stable Diffusion) to synthesize a large variety of 2‑D portrait images conditioned on textual prompts that encode three demographic attributes: age, gender, and ethnicity. These images are fed into a state‑of‑the‑art 3‑D face reconstruction network, producing a 3‑D mesh together with UV‑mapped texture. Because diffusion outputs contain lighting, shading, and sometimes incomplete texture regions, the authors design a texture‑normalization pipeline that performs intrinsic image decomposition to separate albedo from illumination, and then fills missing regions to obtain clean, PBR‑ready albedo maps. A sanity‑check and label‑consistency filter automatically discards low‑quality samples, resulting in a curated dataset of 44 000 textured 3‑D faces at 4K resolution, each annotated with age, gender, and ethnicity.

Using this dataset, the second stage trains a conditional, disentangled GAN based on the DisUnknown architecture. The generator receives the three semantic attributes and outputs UV‑space geometry (displacement map), albedo, specular, and additional displacement maps. The training incorporates a disentanglement loss that forces each attribute to affect only its intended dimension, and an identity‑preservation loss that keeps the person’s core facial features unchanged during attribute manipulation. Compared with direct diffusion‑model inversion, the GAN can synthesize a full asset in 0.014 seconds on a single NVIDIA A6000 GPU, a speed‑up of three orders of magnitude over the ~40 seconds required for diffusion‑based generation.

The third stage refines the generated assets for physically‑based rendering. Separate networks predict specular and high‑frequency displacement maps, and a library of secondary assets (eyeballs, teeth, gums, hairstyles, glasses) is attached to the base mesh. The final output is a complete PBR‑ready asset (geometry, albedo, specular, displacement) exportable as FBX or GLTF, ready for game engines, film pipelines, or AR/VR applications.

A web‑based interactive UI demonstrates the system: users can type a description such as “a 40‑year‑old Hispanic male”, upload a portrait for inversion, or edit attributes continuously in the latent space while preserving identity. Extensive quantitative metrics (FID, LPIPS, diversity scores) and user studies show that the proposed method outperforms prior works like DreamFace, MetaHuman, and diffusion‑only pipelines in texture realism, geometric diversity, and controllability.

Key contributions are: (1) a novel data‑generation pipeline that leverages diffusion priors to create a large, diverse, high‑quality 3‑D face dataset; (2) a texture‑normalization framework that transfers unconstrained diffusion outputs into a clean albedo domain; (3) a conditional GAN that provides real‑time, attribute‑driven generation and inversion with identity preservation; (4) a full PBR asset creation workflow including secondary assets; and (5) an open‑source web interface for practical deployment.

Limitations include reliance on the quality of the underlying 3‑D reconstruction (which may miss fine details compared to professional scans), potential residual lighting artifacts after normalization, coarse demographic labeling (only three categories), and the current focus on static faces without expression dynamics. Future work could extend the pipeline to dynamic facial animation, finer skin‑tone granularity, and tighter integration with rigging systems. Overall, the paper delivers a practical, scalable solution that bridges the gap between generative diversity and production‑ready 3‑D face assets.

Comments & Academic Discussion

Loading comments...

Leave a Comment