Doppler-SLAM: Doppler-Aided Radar-Inertial and LiDAR-Inertial Simultaneous Localization and Mapping

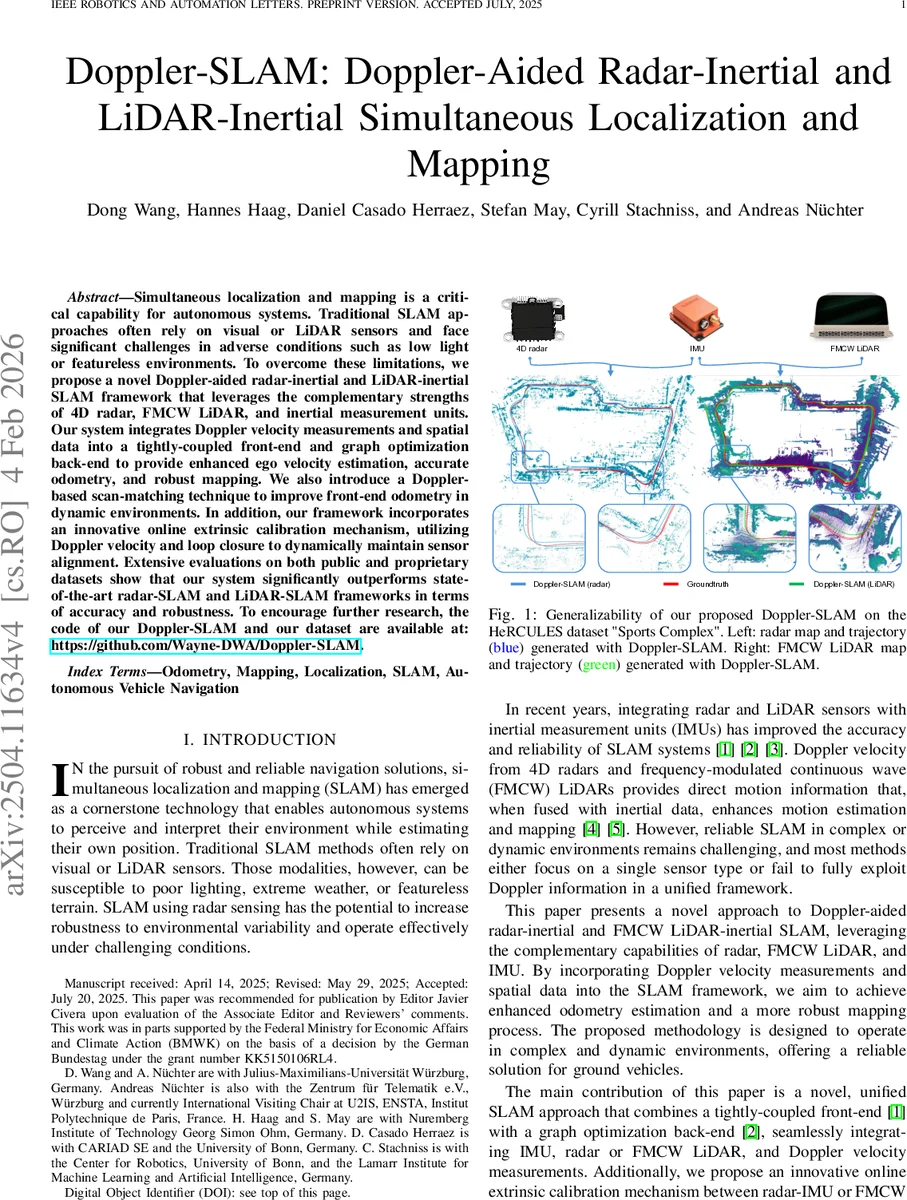

Simultaneous localization and mapping (SLAM) is a critical capability for autonomous systems. Traditional SLAM approaches, which often rely on visual or LiDAR sensors, face significant challenges in adverse conditions such as low light or featureless environments. To overcome these limitations, we propose a novel Doppler-aided radar-inertial and LiDAR-inertial SLAM framework that leverages the complementary strengths of 4D radar, FMCW LiDAR, and inertial measurement units. Our system integrates Doppler velocity measurements and spatial data into a tightly-coupled front-end and graph optimization back-end to provide enhanced ego velocity estimation, accurate odometry, and robust mapping. We also introduce a Doppler-based scan-matching technique to improve front-end odometry in dynamic environments. In addition, our framework incorporates an innovative online extrinsic calibration mechanism, utilizing Doppler velocity and loop closure to dynamically maintain sensor alignment. Extensive evaluations on both public and proprietary datasets show that our system significantly outperforms state-of-the-art radar-SLAM and LiDAR-SLAM frameworks in terms of accuracy and robustness. To encourage further research, the code of our Doppler-SLAM and our dataset are available at: https://github.com/Wayne-DWA/Doppler-SLAM.

💡 Research Summary

The paper introduces Doppler‑SLAM, a unified SLAM framework that tightly integrates Doppler velocity measurements from 4D radar and FMCW LiDAR with inertial data from an IMU. The authors first reformulate radar and LiDAR point clouds into a common representation that includes 3‑D coordinates and radial Doppler velocity for each point. A velocity‑filter module then uses the IMU‑predicted ego‑velocity to predict the Doppler value each point would show if it were static, and compares that prediction with the measured value; points whose predicted and measured Doppler differ by more than a preset threshold are rejected as dynamic outliers. This filter improves robustness in highly dynamic scenes, where least‑squares ego‑velocity estimators that use all points are corrupted by moving objects.
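The filtering step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the 0.5 m/s threshold, the sign convention (a static point ahead of a forward-moving sensor shows negative radial velocity), and the synthetic data are all hypothetical.

```python
import numpy as np

def predict_doppler(points, v_sensor):
    """Radial velocity each point would show if it were static (assumed sign convention)."""
    dirs = points / np.linalg.norm(points, axis=1, keepdims=True)  # unit line-of-sight vectors
    # A static point appears to move opposite to the sensor along the line of sight.
    return -dirs @ v_sensor, dirs

def filter_static(points, doppler, v_pred, threshold=0.5):
    """Keep points whose measured Doppler agrees with the IMU-based prediction."""
    predicted, dirs = predict_doppler(points, v_pred)
    static = np.abs(doppler - predicted) < threshold
    return static, dirs

def estimate_ego_velocity(dirs, doppler):
    """Least-squares ego-velocity from static points: solve -dirs @ v = doppler."""
    v, *_ = np.linalg.lstsq(-dirs, doppler, rcond=None)
    return v

# Synthetic scan: 200 points, the first 20 lie on moving objects.
rng = np.random.default_rng(0)
points = rng.uniform(-20.0, 20.0, size=(200, 3))
v_true = np.array([5.0, 0.3, 0.0])
doppler, _ = predict_doppler(points, v_true)
doppler[:20] += 4.0                                # extra radial speed from object motion

v_imu = v_true + np.array([0.1, -0.05, 0.02])      # IMU prediction, slightly off
static, dirs = filter_static(points, doppler, v_imu)
v_est = estimate_ego_velocity(dirs[static], doppler[static])
```

The IMU prediction only needs to be accurate to within the threshold: it gates the points, and the refined velocity then comes from the least-squares fit over the surviving static points.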

After dynamic points are removed, a motion‑compensation step uses the filtered ego‑velocity to correct scan distortion and applies a Doppler‑augmented ICP (Doppler‑ICP) for scan‑to‑map registration. The cost function combines geometric point‑to‑point distances with Doppler residuals, enabling accurate alignment even at high vehicle speeds or rapid rotations.
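One plausible form of such a combined objective is written out below. The summary does not give the authors' exact formulation, so the symbols and the weighting are assumptions: $\mathbf{p}_i$ is a scan point, $\mathbf{q}_i$ its matched map point, $\mathbf{d}_i$ the unit line-of-sight direction to $\mathbf{p}_i$, $v_{r,i}$ the measured radial velocity, $\mathbf{v}_s(\mathbf{T})$ the sensor velocity implied by the candidate transform $\mathbf{T} = (\mathbf{R}, \mathbf{t})$ over the scan interval, and $\lambda$ a hypothetical weight balancing the two terms.

$$
E(\mathbf{T}) = \sum_i \left\| \mathbf{R}\,\mathbf{p}_i + \mathbf{t} - \mathbf{q}_i \right\|^2 \;+\; \lambda \sum_i \left( v_{r,i} + \mathbf{d}_i^{\top}\, \mathbf{v}_s(\mathbf{T}) \right)^2
$$

The second term vanishes when every static point's measured Doppler equals $-\mathbf{d}_i^{\top}\mathbf{v}_s$, which is the value a stationary point would show under the assumed sign convention. Because this term constrains the motion directly, it still carries information where geometry alone is ambiguous, for example along a featureless corridor.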

State estimation is performed on a 24‑dimensional manifold that includes pose, velocity, IMU biases, and the extrinsic transformation between the sensor (radar or LiDAR) and the IMU. The factor graph incorporates three types of factors: (i) IMU pre‑integration, (ii) odometry (derived from the Doppler‑ICP), and (iii) a Doppler‑velocity residual factor. Loop closure detection triggers a global graph optimization that simultaneously refines the trajectory and the sensor‑IMU extrinsics, providing an online calibration mechanism that mitigates extrinsic drift over long missions.
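As a bookkeeping aid, the state blocks named above can be laid out as slices of a stacked error-state vector. This is an illustrative sketch only: the block names and their ordering are hypothetical, and the per-block dimensions are the usual tangent-space sizes (3 for a rotation, 3 for a vector).

```python
# Tangent-space dimension of each state block named in the summary.
STATE_BLOCKS = {
    "rotation": 3,               # pose, orientation part
    "position": 3,               # pose, translation part
    "velocity": 3,
    "gyro_bias": 3,              # IMU biases
    "accel_bias": 3,
    "extrinsic_rotation": 3,     # sensor-to-IMU extrinsics
    "extrinsic_translation": 3,
}

def block_slices(blocks):
    """Map each block name to its slice in the stacked error-state vector."""
    slices, start = {}, 0
    for name, dim in blocks.items():
        slices[name] = slice(start, start + dim)
        start += dim
    return slices, start

SLICES, LISTED_DIM = block_slices(STATE_BLOCKS)
UNLISTED_DIM = 24 - LISTED_DIM   # dimensions the summary does not itemize
```

The blocks listed in the summary account for 21 of the 24 dimensions. The summary does not say what the remaining three are; a gravity vector is a common choice in comparable LiDAR-inertial estimators, but that is a guess rather than something stated here.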

The system is evaluated on public benchmarks (Oxford Radar RobotCar, nuScenes) and a proprietary “HeRCULES Sports Complex” dataset that contains challenging dynamic objects and adverse weather. Compared with state‑of‑the‑art radar‑SLAM methods (e.g., DGR‑O, DRIO) and LiDAR‑SLAM approaches (e.g., LOAM, FAST‑LIO2, FMCW‑LIO), Doppler‑SLAM achieves up to 35 % lower absolute trajectory error and shows reduced drift in scenes with many moving obstacles. Real‑time performance is demonstrated on an 8‑core CPU, reaching 15 Hz for radar and 20 Hz for LiDAR processing.

Key contributions of the work are: (1) a Doppler‑based dynamic point filter that leverages IMU predictions, (2) a Doppler‑augmented scan‑matching algorithm that improves registration under high‑speed motion, (3) a tightly‑coupled front‑end/back‑end architecture that jointly optimizes pose, velocity, IMU biases, and sensor‑IMU extrinsics, and (4) an open‑source release of code and datasets to foster reproducibility. By exploiting the complementary strengths of radar (weather robustness) and FMCW LiDAR (high spatial resolution with velocity), Doppler‑SLAM offers a compelling solution for robust, accurate SLAM in environments where traditional visual or LiDAR‑only systems struggle.
