It's Not Just Timestamps: A Study on Docker Reproducibility

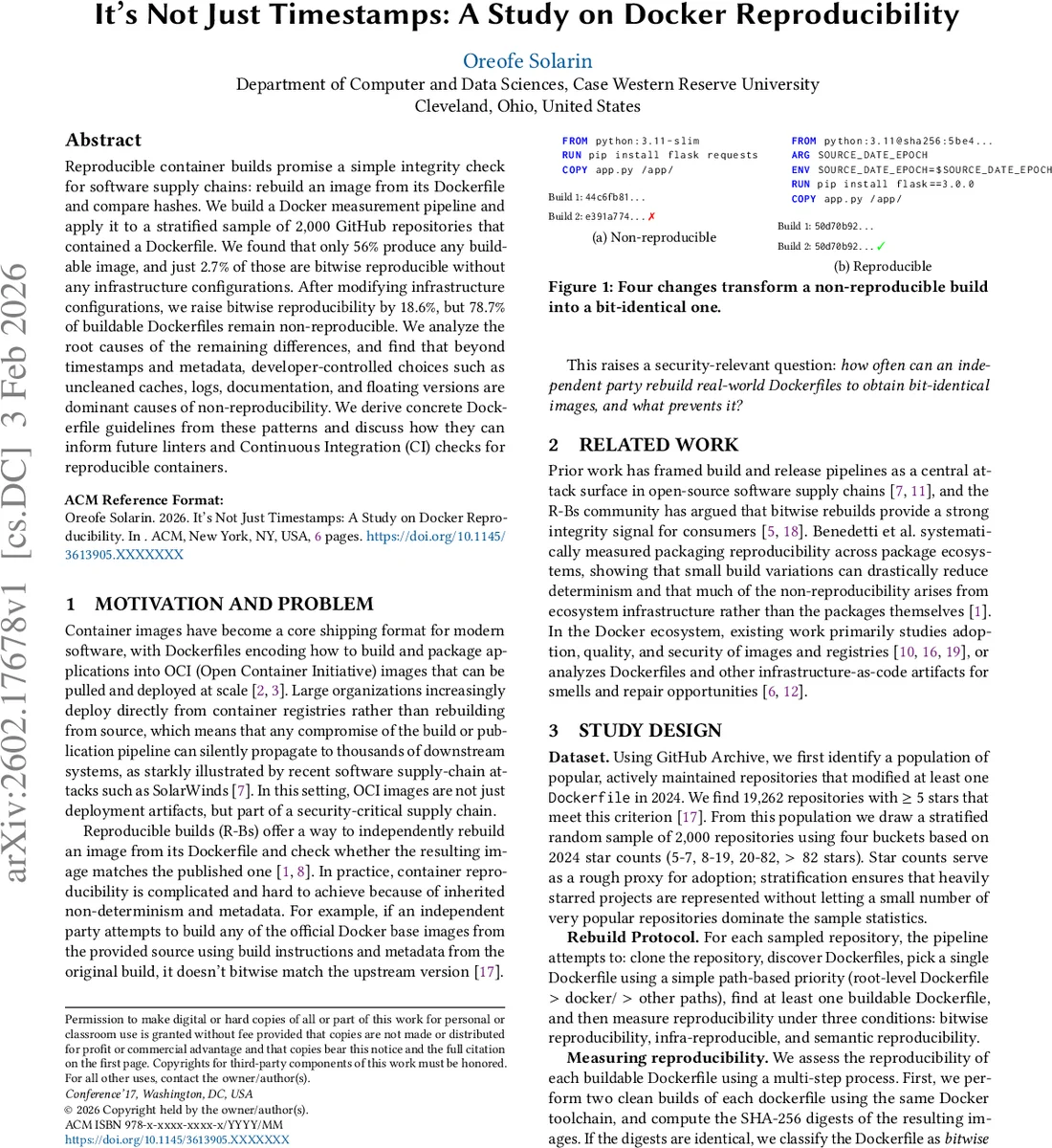

Reproducible container builds promise a simple integrity check for software supply chains: rebuild an image from its Dockerfile and compare hashes. We build a Docker measurement pipeline and apply it to a stratified sample of 2,000 GitHub repositories that contain a Dockerfile. We find that only 56% produce any buildable image, and just 2.7% of those are bitwise reproducible without any infrastructure configuration. After adjusting the build infrastructure configuration, we raise bitwise reproducibility by 18.6 percentage points, but 78.7% of buildable Dockerfiles remain non-reproducible. We analyze the root causes of the remaining differences and find that, beyond timestamps and metadata, developer-controlled choices such as uncleaned caches, logs, documentation, and floating versions are the dominant causes of non-reproducibility. We derive concrete Dockerfile guidelines from these patterns and discuss how they can inform future linters and Continuous Integration (CI) checks for reproducible containers.

💡 Research Summary

This paper presents a large‑scale empirical study of reproducible Docker container builds, focusing on how often real‑world Dockerfiles can be rebuilt to produce bit‑identical images and what factors prevent reproducibility. The authors first constructed a measurement pipeline that clones a repository, discovers Dockerfiles, selects a single Dockerfile per repository using a simple path‑priority rule, and then attempts two clean builds of that Dockerfile with the same Docker toolchain. The resulting images are compared by SHA‑256 digest; identical digests indicate “bitwise reproducibility as‑is.” If the digests differ, the pipeline hardens the build environment by fixing SOURCE_DATE_EPOCH to the Git commit timestamp and enabling Docker’s rewrite‑timestamp option, thereby removing known sources of nondeterminism in the Docker toolchain. After this “infrastructure hardening,” identical digests are labeled “infra‑reproducible.” Images that still differ are examined with diffoci and diffoscope to separate metadata differences from true content differences, yielding a classification of “semantically reproducible” or “non‑reproducible.” All scripts, datasets, and diffoscope reports are released for replication.
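The two-build procedure can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' pipeline: the helper names (`commit_timestamp`, `build_command`, `classify`) are hypothetical, reading image digests and running diffoci/diffoscope are omitted, and the hardened command assumes a BuildKit-based `docker buildx` setup recent enough to support the image exporter's `rewrite-timestamp=true` option. The commit timestamp is read with `git log -1 --pretty=%ct`.

```python
import subprocess
from typing import Optional


def commit_timestamp(repo_dir: str) -> str:
    """Return HEAD's commit time as Unix seconds, used as SOURCE_DATE_EPOCH."""
    result = subprocess.run(
        ["git", "-C", repo_dir, "log", "-1", "--pretty=%ct"],
        check=True, capture_output=True, text=True,
    )
    return result.stdout.strip()


def build_command(context: str, dockerfile: str, tag: str,
                  epoch: Optional[str] = None) -> list[str]:
    """Assemble one clean (cache-free) buildx invocation.

    Passing `epoch` yields the "hardened" variant: SOURCE_DATE_EPOCH is set as a
    build argument and the image exporter is asked to rewrite file timestamps.
    Hypothetical helper; the study's exact command line is not given.
    """
    cmd = ["docker", "buildx", "build", "--no-cache", "-f", dockerfile]
    output = f"type=image,name={tag}"
    if epoch is not None:
        cmd += ["--build-arg", f"SOURCE_DATE_EPOCH={epoch}"]
        output += ",rewrite-timestamp=true"
    return cmd + ["--output", output, context]


def classify(as_is: tuple[str, str],
             hardened: Optional[tuple[str, str]] = None,
             metadata_only: bool = False) -> str:
    """Map the digests of two builds (and a diff verdict) to the study's labels.

    `metadata_only` stands in for a diffoci/diffoscope finding that the hardened
    images differ only in metadata.
    """
    if as_is[0] == as_is[1]:
        return "bitwise reproducible as-is"
    if hardened is not None and hardened[0] == hardened[1]:
        return "infra-reproducible"
    if metadata_only:
        return "semantically reproducible"
    return "non-reproducible"
```

The labels are checked in the same order as the pipeline: a Dockerfile only reaches the hardened build if its as-is pair differs, and only reaches the diff step if the hardened pair also differs. A build would be launched with, for example, `subprocess.run(build_command(".", "Dockerfile", "repro:a", commit_timestamp(".")), check=True)`.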

To obtain a representative sample, the authors queried GitHub Archive for repositories that modified at least one Dockerfile in 2024 and had at least five stars, yielding 19,262 candidates. They performed stratified random sampling across four star‑count buckets (5‑7, 8‑19, 20‑82, >82) to select 2,000 repositories, ensuring that both popular and less‑popular projects are represented. This design mitigates the risk that a few highly‑starred projects dominate the statistics.
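Stratified sampling over these star buckets could look like the sketch below. Only the bucket boundaries come from the text; the rest is assumed: the summary does not say how the 2,000 slots were split across strata, so the code assumes equal allocation (2,000 / 4 = 500 per bucket), and `repos` is a hypothetical mapping from repository name to star count.

```python
import random
from collections import defaultdict

# Star-count strata from the study: 5-7, 8-19, 20-82, >82 (None = no upper bound).
BUCKETS = [(5, 7), (8, 19), (20, 82), (83, None)]


def bucket_of(stars: int) -> int:
    """Return the index of the stratum that contains `stars`."""
    for i, (lo, hi) in enumerate(BUCKETS):
        if stars >= lo and (hi is None or stars <= hi):
            return i
    raise ValueError(f"{stars} stars is below the 5-star threshold")


def stratified_sample(repos: dict[str, int], total: int, seed: int = 0) -> list[str]:
    """Draw `total` repositories, split evenly across strata (assumed allocation).

    Raises ValueError (from random.sample) if a stratum is smaller than its quota.
    """
    strata = defaultdict(list)
    for name, stars in repos.items():
        strata[bucket_of(stars)].append(name)
    rng = random.Random(seed)
    per_bucket = total // len(BUCKETS)
    sample = []
    for i in range(len(BUCKETS)):
        members = sorted(strata[i])  # sort so the draw depends only on the seed
        sample += rng.sample(members, per_bucket)
    return sample
```

Sorting each stratum before drawing makes the sample depend only on the seed, not on the order in which candidates were returned by the query.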

The empirical results are striking. Only 56.1% (1,123 of 2,000) of the sampled repositories produced a buildable Docker image under the default pipeline; the remaining 43.9% failed because of cloning errors, Docker build errors, inaccessible resources, or timeouts. Among the buildable Dockerfiles, bitwise reproducibility without any special configuration was extremely rare: just 2.7% (30 of 1,123). Infrastructure hardening (SOURCE_DATE_EPOCH and timestamp rewriting) made 209 additional Dockerfiles bitwise reproducible, an increase of 18.6 percentage points to 21.3% (239 of 1,123). Nevertheless, 78.7% (884 Dockerfiles) remained non-reproducible even after known infrastructure sources of nondeterminism were eliminated. None of these was semantically reproducible: every remaining pair differed in file content, not merely in metadata, indicating that developer-controlled factors dominate the remaining differences.
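Every rate after the first uses the 1,123 buildable Dockerfiles as its denominator, and the 18.6 figure is a gain in percentage points (209 of 1,123), not a relative increase. The headline numbers can therefore be recomputed from the raw counts; `pct` and `funnel` below are hypothetical helper names.

```python
def pct(part: int, whole: int) -> float:
    """Share of `whole` represented by `part`, in percent."""
    return 100.0 * part / whole


def funnel(sampled: int, buildable: int, as_is: int, infra: int) -> dict[str, float]:
    """Recompute the headline rates from raw counts (all but the first use `buildable`)."""
    still_differ = buildable - as_is - infra  # 1,123 - 30 - 209 = 884 in the study
    return {
        "buildable": pct(buildable, sampled),
        "bitwise_as_is": pct(as_is, buildable),
        "gain_pp": pct(infra, buildable),  # percentage points, not relative change
        "bitwise_after_hardening": pct(as_is + infra, buildable),
        "non_reproducible": pct(still_differ, buildable),
    }


for name, value in funnel(2000, 1123, 30, 209).items():
    print(f"{name}: {value:.1f}%")
```

The recomputed values agree, up to rounding, with the percentages quoted above.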

To understand the residual nondeterminism, the authors manually inspected diffoscope reports for the 884 non‑reproducible pairs. They identified several recurring categories: (1) filesystem metadata (timestamps, ownership, permissions) – present in every image; (2) file ordering/format differences – 78.1 % of images; (3) system logs (e.g., /var/log) – 43.3 %; (4) caches and on‑disk databases (apt, pip, npm caches) – 36.8 %; (5) compiled artifacts (ELF binaries, bytecode) – 20.0 %; (6) application‑specific files such as generated reports or downloaded models – 13.0 %; (7) random or nondeterministic data (UUIDs, timestamps generated at build time) – 9.4 %; and (8) package‑manager state inconsistencies – 5.6 %. The authors emphasize that while timestamps and metadata are ubiquitous, they are not the sole cause; developer decisions that embed runtime state, leave caches, or use floating version specifications are the dominant contributors to non‑reproducibility.
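The study assigned these categories by manually reading diffoscope reports. As a hypothetical first-pass triage, one could group the differing file paths from such a report using path patterns. The rules below are illustrative guesses that cover only path-identifiable categories (logs, caches, compiled artifacts, random data, package-manager state), not the authors' labeling scheme; metadata and ordering differences are not visible from a path alone and are left out, and extracting the paths from a report is assumed to have happened already.

```python
from fnmatch import fnmatchcase

# Hypothetical path rules, checked in order; the first matching category wins.
RULES = [
    ("system logs", ["/var/log/*"]),
    ("caches and on-disk databases",
     ["/var/cache/apt/*", "/var/lib/apt/lists/*", "/root/.cache/pip/*", "/root/.npm/*"]),
    ("compiled artifacts", ["*.pyc", "*.so", "*.o"]),
    ("random or nondeterministic data", ["/etc/machine-id"]),
    ("package-manager state", ["/var/lib/dpkg/*"]),
]


def triage(paths: list[str]) -> dict[str, list[str]]:
    """Group differing file paths under the first category whose pattern matches."""
    groups: dict[str, list[str]] = {}
    for path in paths:
        label = next(
            (name for name, patterns in RULES
             if any(fnmatchcase(path, p) for p in patterns)),
            "unclassified",
        )
        groups.setdefault(label, []).append(path)
    return groups
```

`fnmatchcase` is used instead of `fnmatch` so matching does not depend on the host's case or path-separator conventions; note that `*` also matches `/`, so `/var/log/*` covers nested log directories.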

Based on these findings, the paper proposes concrete Dockerfile guidelines: (i) combine package installations into a single RUN statement and use --no-install-recommends to avoid unnecessary packages; (ii) explicitly purge package manager caches after each installation (apt-get clean && rm -rf /var/lib/apt/lists/*, pip cache purge, etc.); (iii) pin base images and all installed package versions; (iv) avoid baking runtime state such as /etc/machine-id, logs, or generated reports into the final image; (v) prefer COPY of pre‑downloaded artifacts rather than downloading during the build; and (vi) eliminate nondeterministic commands or replace them with deterministic equivalents. The authors argue that these practices can be codified into linter rules and CI checks, providing developers with immediate feedback on reproducibility violations.
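Several of these guidelines are mechanical enough to check statically. The following is a hypothetical, deliberately small linter sketch covering a subset of them: digest-pinned base images, apt packages pinned as `pkg=version`, `--no-install-recommends`, apt list cleanup, pip caching, and network downloads during the build. It relies on substring and regex heuristics, so it misses other package managers and can misfire on unusual syntax; the rule wording is an assumption, not taken from the paper. Cleanup is checked within the same RUN instruction because files deleted by a later instruction still exist in the earlier layer, whose digest therefore still varies.

```python
import re

# Heuristic patterns (hypothetical rules, not taken from the paper).
APT_INSTALL = re.compile(r"apt-get(?:\s+-\S+)*\s+install([^&;|]*)")
PIP_INSTALL = re.compile(r"\bpip3?\s+install\b")
URL_DOWNLOAD = re.compile(r"\b(?:curl|wget)\b[^&;|]*https?://")


def logical_lines(dockerfile: str) -> list[str]:
    """Join backslash-continued lines into one instruction each; drop comments and blanks."""
    instructions, buf = [], ""
    for raw in dockerfile.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        if line.endswith("\\"):
            buf += line[:-1] + " "
        else:
            instructions.append(buf + line)
            buf = ""
    if buf:
        instructions.append(buf.strip())
    return instructions


def lint(dockerfile: str) -> list[str]:
    """Return reproducibility warnings, one string per finding."""
    warnings = []
    for n, instr in enumerate(logical_lines(dockerfile), start=1):
        keyword, _, args = instr.partition(" ")
        keyword = keyword.upper()
        if keyword == "FROM":
            tokens = [t for t in args.split() if not t.startswith("--")]  # skip --platform
            image = tokens[0] if tokens else ""
            if image != "scratch" and "@sha256:" not in image:
                warnings.append(f"#{n} FROM: base image not pinned by digest")
        elif keyword == "ADD" and "://" in args:
            warnings.append(f"#{n} ADD: remote URL; prefer COPY of a pre-fetched artifact")
        elif keyword == "RUN":
            apt = APT_INSTALL.search(args)
            if apt:
                pkgs = [t for t in apt.group(1).split() if not t.startswith("-")]
                if any("=" not in p for p in pkgs):
                    warnings.append(f"#{n} RUN: apt package without a pinned version")
                if "--no-install-recommends" not in args:
                    warnings.append(f"#{n} RUN: apt-get install without --no-install-recommends")
                if "rm -rf /var/lib/apt/lists" not in args:
                    warnings.append(f"#{n} RUN: apt lists not removed in the same RUN")
            if (PIP_INSTALL.search(args) and "--no-cache-dir" not in args
                    and "pip cache purge" not in args):
                warnings.append(f"#{n} RUN: pip cache not disabled or purged")
            if URL_DOWNLOAD.search(args):
                warnings.append(f"#{n} RUN: network download during the build")
    return warnings
```

A CI step could run `lint(open("Dockerfile").read())` and fail the job when the returned list is non-empty.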

From a supply‑chain security perspective, the study reveals a significant verification gap: even with hardened infrastructure, only about one in five buildable Dockerfiles supports a simple hash‑based rebuild‑and‑compare integrity check. This limits the practicality of reproducible builds as a routine defense mechanism. Moreover, the results suggest a shift in responsibility: deterministic build infrastructure is necessary but not sufficient; developers must also adopt reproducibility‑aware Dockerfile authoring practices.
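Why a single stray byte defeats this check follows from how image digests are computed: a single-platform image's digest is the SHA-256 of its manifest, and the manifest records the digests of the config and of every layer blob. The sketch below uses a simplified, hypothetical manifest (real ones are produced by the builder and reference compressed layer tarballs) to illustrate a rebuild-and-compare check; the helper names are illustrative.

```python
import hashlib
import json


def digest(data: bytes) -> str:
    """OCI-style content digest: algorithm prefix plus hex SHA-256."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def manifest_for(config: bytes, layers: list[bytes]) -> bytes:
    """Build a simplified image manifest that references blobs by digest."""
    manifest = {
        "schemaVersion": 2,
        "mediaType": "application/vnd.oci.image.manifest.v1+json",
        "config": {"mediaType": "application/vnd.oci.image.config.v1+json",
                   "digest": digest(config), "size": len(config)},
        "layers": [{"mediaType": "application/vnd.oci.image.layer.v1.tar+gzip",
                    "digest": digest(layer), "size": len(layer)} for layer in layers],
    }
    # Fixed key order and separators keep the serialization deterministic.
    return json.dumps(manifest, separators=(",", ":")).encode()


def rebuild_matches(published_digest: str, config: bytes, layers: list[bytes]) -> bool:
    """Rebuild-and-compare: does the rebuilt image hash to the published digest?"""
    return digest(manifest_for(config, layers)) == published_digest
```

Changing one byte in one layer, such as a timestamp inside a log file, changes that layer's digest, hence the manifest bytes, hence the image digest, so the comparison fails even if the rebuilt image behaves identically.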

The paper acknowledges several limitations. First, content fetched from remote registries may change between the two builds (version drift), which could affect the reproducibility measurements. Second, the root-cause labels derived from diffoci/diffoscope reports are approximate and may miss subtle differences. Third, the study examines only a single Dockerfile per repository, selected by path priority, and performs only two builds on a single CI platform and architecture, so it primarily captures same-host nondeterminism. Future work includes building automated tools that detect the identified non-reproducibility patterns and recommend fixes, and extending the evaluation to multiple architectures, CI environments, and multi-Dockerfile projects.

In summary, this work provides the first large‑scale quantification of Docker reproducibility, demonstrates that the majority of real‑world Dockerfiles are non‑reproducible even after infrastructure hardening, and offers actionable guidance for developers, tool builders, and security practitioners to improve the determinism of container builds.
