ST-Raptor: An Agentic System for Semi-Structured Table QA

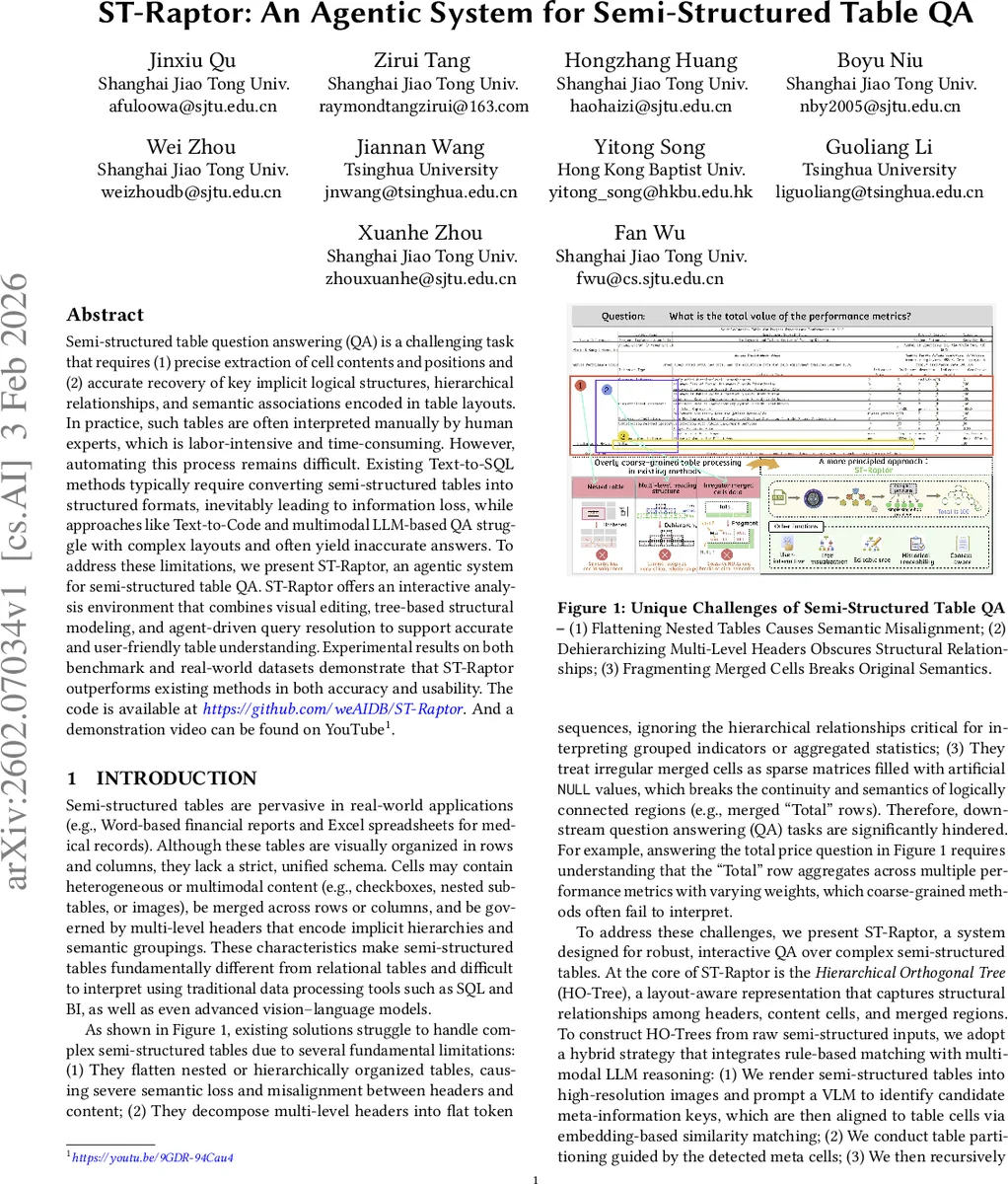

Semi-structured table question answering (QA) is a challenging task that requires (1) precise extraction of cell contents and positions and (2) accurate recovery of key implicit logical structures, hierarchical relationships, and semantic associations encoded in table layouts. In practice, such tables are often interpreted manually by human experts, which is labor-intensive and time-consuming. However, automating this process remains difficult. Existing Text-to-SQL methods typically require converting semi-structured tables into structured formats, inevitably leading to information loss, while approaches like Text-to-Code and multimodal LLM-based QA struggle with complex layouts and often yield inaccurate answers. To address these limitations, we present ST-Raptor, an agentic system for semi-structured table QA. ST-Raptor offers an interactive analysis environment that combines visual editing, tree-based structural modeling, and agent-driven query resolution to support accurate and user-friendly table understanding. Experimental results on both benchmark and real-world datasets demonstrate that ST-Raptor outperforms existing methods in both accuracy and usability. The code is available at https://github.com/weAIDB/ST-Raptor, and a demonstration video is available at https://youtu.be/9GDR-94Cau4.

💡 Research Summary

ST‑Raptor is an agentic system designed to tackle the challenging problem of question answering over semi‑structured tables, which often contain merged cells, multi‑level headers, nested sub‑tables, and multimodal content. Traditional Text‑to‑SQL pipelines lose critical layout information when flattening such tables, while existing Text‑to‑Code or multimodal LLM approaches struggle to correctly interpret complex visual structures, leading to inaccurate answers.

The core of ST‑Raptor is the Hierarchical Orthogonal Tree (HO‑Tree), a layout‑aware representation that captures the hierarchical relationships among headers, content cells, and merged regions. HO‑Tree construction follows a hybrid pipeline: raw tables (Excel, CSV, HTML, Markdown, or screenshots) are rendered into high‑resolution images; a vision‑language model (Gemini‑3.0‑preview) extracts candidate meta‑information cells as JSON key‑value pairs; these candidates are aligned to actual table cells using embedding‑based similarity; then, based on layout heuristics (top‑level header detection, merged‑region identification, coordinate alignment), the system recursively builds the HO‑Tree, linking each content cell to its appropriate semantic header. When multiple sheets are present, they are unified under a single root, enabling global reasoning across documents.

To make the automatically generated trees editable, ST‑Raptor provides a web‑based Tree Editor. Users can drag‑and‑drop nodes, rename or delete them, change node types, or even construct a tree from scratch. Edits are serialized as JSON and immediately fed back to the backend, allowing the downstream QA component to operate on the corrected structure. This human‑in‑the‑loop capability mitigates model errors and adapts the system to domain‑specific quirks.

The Answer Generator receives a natural‑language query, tags columns by type (numeric, categorical, free‑text), and automatically decomposes the query into a sequence of sub‑operations that map onto the nine core tree operations (sub‑tree retrieval, aggregation, filtering, etc.). For example, “What is the average completion rate across all KPI categories?” becomes: locate KPI subtree → extract completion rates → compute average. A two‑stage verification mechanism ensures answer fidelity: forward verification checks logical constraints and execution traces of the sub‑operations, while backward verification re‑phrases the original question and validates that the generated answer is semantically consistent. This dramatically reduces hallucinations common in pure LLM pipelines.

The Orchestration Agent orchestrates multi‑turn, multimodal interactions. It maintains a dynamic memory of previous turns, resolves ambiguous references (“this product”), routes image‑based queries to the VLM for table localization, and dispatches text‑based queries to a general LLM (Gemini‑2.0). The agent also handles cross‑file and cross‑sheet contexts, enabling seamless switching between different tables within a single session.

Experimental evaluation was conducted on two benchmarks: SSTQA (102 real‑world semi‑structured tables covering 19 scenarios) and WikiTQ‑ST (Wikipedia tables converted to semi‑structured form). 25 % of the tables were presented as images to simulate realistic usage. ST‑Raptor was compared against strong baselines including OpenSearch‑SQL, TableLLaMA, TableLLM, ReAct‑table, TAT‑LLM, mPLUG‑DocOwl 1.5, GPT‑4o, and DeepSeek‑V3. The system achieved the highest accuracy, surpassing the best baseline by 11.2 percentage points. Ablation studies attribute this gain to three factors: (1) the HO‑Tree abstraction that decouples layout parsing from logical reasoning and allows user‑driven correction, (2) the question‑decomposition module that aligns complex natural‑language queries with tree operations, and (3) the agent that coordinates vision‑based extraction and context tracking across multi‑turn dialogs. Even on the simpler WikiTQ‑ST dataset, ST‑Raptor maintained an edge, demonstrating that preserving hierarchical layout information benefits both complex and relatively flat tables.

In summary, the paper contributes (i) a novel pipeline that integrates visual extraction, hierarchical tree modeling, and agent‑driven reasoning; (ii) a robust method for extracting meta‑information from table images using VLMs and embedding similarity; (iii) a set of nine tree operations that serve as a lingua franca for table reasoning; (iv) a dual‑phase verification scheme that improves answer faithfulness; and (v) an interactive UI that empowers users to edit and refine the underlying structural representation. Limitations include dependence on VLM quality for low‑resolution or noisy images and the rule‑based nature of layout heuristics, which may not capture all domain‑specific nuances. Future work will explore domain‑adaptive layout learning, lighter‑weight vision models, and meta‑reinforcement learning to automatically discover optimal tree operations.

Comments & Academic Discussion

Loading comments...

Leave a Comment