LISA: Laplacian In-context Spectral Analysis

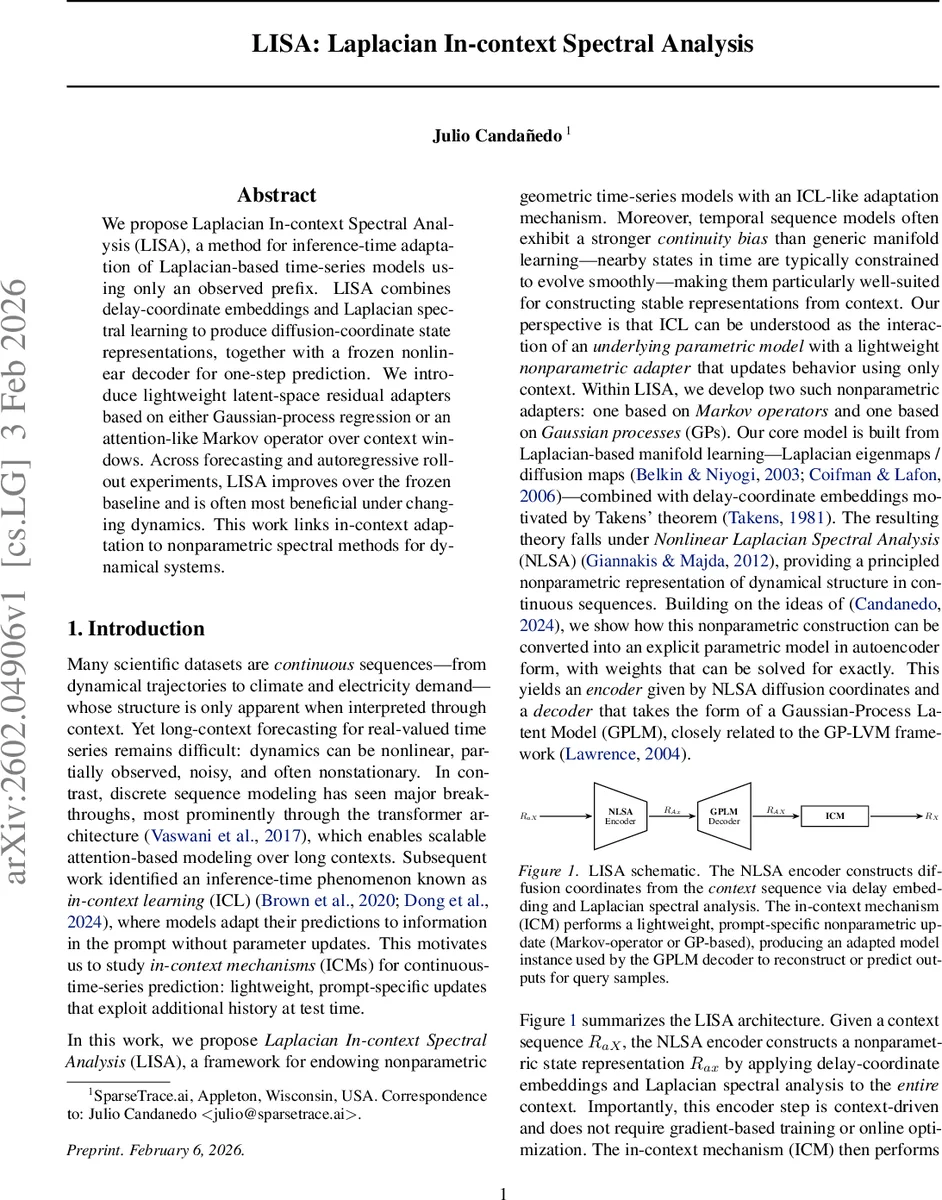

We propose Laplacian In-context Spectral Analysis (LISA), a method for inference-time adaptation of Laplacian-based time-series models using only an observed prefix. LISA combines delay-coordinate embeddings and Laplacian spectral learning to produce diffusion-coordinate state representations, together with a frozen nonlinear decoder for one-step prediction. We introduce lightweight latent-space residual adapters based on either Gaussian-process regression or an attention-like Markov operator over context windows. Across forecasting and autoregressive rollout experiments, LISA improves over the frozen baseline and is often most beneficial under changing dynamics. This work links in-context adaptation to nonparametric spectral methods for dynamical systems.

💡 Research Summary


The paper introduces LISA (Laplacian In‑context Spectral Analysis), a novel framework that equips non‑parametric, Laplacian‑based time‑series models with an inference‑time adaptation mechanism that relies solely on the observed prefix (context) at test time. The core idea is to combine Takens‑style delay‑coordinate embeddings with Laplacian eigen‑decomposition (diffusion maps) to obtain a set of diffusion coordinates that capture the low‑dimensional manifold structure of the underlying dynamical system. These coordinates are produced by a frozen encoder (the NLSA encoder) and fed into a frozen nonlinear decoder that implements a Gaussian‑Process Latent Model (GPLM) for one‑step prediction.
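The encoder stage described above can be sketched roughly as follows. This is a minimal illustration, not the paper's NLSA implementation: it assumes a plain fixed-bandwidth RBF kernel and a dense eigendecomposition, and the names `delay_embed`, `diffusion_coordinates`, `eps`, and `n_coords` are hypothetical.

```python
import numpy as np

def delay_embed(x, L):
    """Stack L consecutive samples into delay vectors, shape (N - L + 1, L * d)."""
    x = np.asarray(x, dtype=float)
    if x.ndim == 1:
        x = x[:, None]
    n = x.shape[0] - L + 1
    return np.stack([x[i:i + L].ravel() for i in range(n)])

def diffusion_coordinates(X, eps, n_coords):
    """Diffusion-map coordinates from an RBF kernel on the rows of X.

    Assumption: fixed bandwidth eps and row normalisation P = D^{-1} K;
    the paper's kernel and normalisation may differ.
    """
    sq = np.sum(X**2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    K = np.exp(-d2 / eps)
    deg = K.sum(axis=1)
    # Symmetric matrix S = D^{-1/2} K D^{-1/2} shares eigenvalues with P.
    S = K / np.sqrt(np.outer(deg, deg))
    vals, vecs = np.linalg.eigh(S)
    order = np.argsort(vals)[::-1]          # sort eigenvalues in descending order
    vals, vecs = vals[order], vecs[:, order]
    psi = vecs / np.sqrt(deg)[:, None]      # right eigenvectors of P
    # Skip the trivial constant eigenvector (eigenvalue 1).
    lam = vals[1:n_coords + 1]
    return lam, psi[:, 1:n_coords + 1] * lam

# Example: embed a scalar sine wave with window length L = 10.
x = np.sin(0.1 * np.arange(200))
X = delay_embed(x, L=10)
lam, z = diffusion_coordinates(X, eps=1.0, n_coords=3)
```

Each row of `z` is the diffusion-coordinate state for one delay window; in the framework summarised here, such states would be passed to the frozen decoder.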

The adaptation layer, called the In‑Context Mechanism (ICM), operates without any gradient updates. Two variants are proposed:

- ICGP (In‑Context Gaussian Process) – For each delay window in the prefix, the residual between the decoder’s prediction and the true next observation is computed. These residuals are regressed in diffusion‑coordinate space using an RBF kernel Gaussian process. The posterior mean provides a residual correction for the query window, while the posterior variance is used to gate the correction strength (γ_var). An additional context‑size gate (γ_ctx) scales the correction based on the number of context windows. The final prediction is the baseline decoder output plus the gated residual.

- ICNW (In‑Context Nadaraya‑Watson) – Instead of a GP, a kernel‑weighted average (Nadaraya‑Watson estimator) of the context residuals is used. The RBF kernel defines a row‑stochastic matrix (attention‑like weights) that linearly combines the residuals to produce the correction. This variant is computationally lighter and resembles an attention mechanism over context windows.
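The two residual adapters can be sketched as below, assuming the context diffusion coordinates `z_ctx` and decoder residuals `r_ctx` have already been computed. The function names, the unit-variance GP prior, the `noise` term, and in particular the functional forms of the gates γ_var and γ_ctx are assumptions made for illustration; the summary does not specify them.

```python
import numpy as np

def sqdist(A, B):
    """Pairwise squared Euclidean distances between rows of A and rows of B."""
    d2 = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2.0 * A @ B.T
    return np.maximum(d2, 0.0)

def icnw_correction(z_ctx, r_ctx, z_query, beta):
    """Nadaraya-Watson residual: row-stochastic RBF weights over context windows."""
    logits = -beta * sqdist(z_query, z_ctx)
    logits -= logits.max(axis=1, keepdims=True)   # numerically stable normalisation
    W = np.exp(logits)
    W /= W.sum(axis=1, keepdims=True)             # each row sums to 1
    return W @ r_ctx, W

def icgp_correction(z_ctx, r_ctx, z_query, beta, noise=1e-3):
    """GP posterior mean and variance of the residual (unit prior variance assumed)."""
    K = np.exp(-beta * sqdist(z_ctx, z_ctx)) + noise * np.eye(len(z_ctx))
    Ks = np.exp(-beta * sqdist(z_query, z_ctx))
    mean = Ks @ np.linalg.solve(K, r_ctx)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks.T).T, axis=1)
    return mean, np.clip(var, 0.0, 1.0)

def gated_prediction(y_base, mean, var, n_ctx, k0):
    """Baseline output plus gated residual.

    Hypothetical gate forms: gamma_var shrinks the correction where the GP is
    uncertain, gamma_ctx shrinks it when few context windows are available.
    """
    gamma_var = 1.0 - var
    gamma_ctx = n_ctx / (n_ctx + k0)
    return y_base + (gamma_ctx * gamma_var)[:, None] * mean

# Example with synthetic context data (3 diffusion coordinates, 2 outputs).
rng = np.random.default_rng(0)
z_ctx = rng.normal(size=(20, 3))
r_ctx = rng.normal(size=(20, 2))
z_query = z_ctx[:5]
nw_corr, W = icnw_correction(z_ctx, r_ctx, z_query, beta=5.0)
gp_mean, gp_var = icgp_correction(z_ctx, r_ctx, z_query, beta=5.0)
y_hat = gated_prediction(np.zeros((5, 2)), gp_mean, gp_var, n_ctx=20, k0=5.0)
```

Neither function takes a gradient step: both only read the context residuals, which matches the description of the mechanism as operating without parameter updates.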

Both ICMs are applied after the frozen encoder and decoder have been trained on a large training set. At test time, only the observed prefix of length ℓ (which can be larger than the minimal window length L) is used to compute the adaptation; no model parameters are changed.

Experiments cover three scenarios:

- Stationary chaotic attractors (e.g., Rössler, Lorenz). LISA and its attention‑based variant ALSA consistently improve over the baseline NLSA across metrics such as mean‑squared error (MSE), autocorrelation‑function MSE, spectral divergence (JS/KL‑style), and maximum mean discrepancy (MMD). Performance gains increase with longer context, confirming that additional recent history refines the diffusion‑coordinate representation.

- Forced/non‑stationary dynamics – A regime‑switch Lorenz‑63 experiment where training data come from regime A and testing from a distinct regime B. The baseline fails to adapt to the shifted dynamics, while LISA’s GP‑based correction rapidly re‑centers the predictor, yielding markedly lower forecast errors and better statistical fidelity.

- Real‑world electricity load data – Compared against a strong supervised neural baseline (PTST). Despite being unsupervised, LISA attains comparable MSE and statistical metrics, and demonstrates robustness to scaling and noise.
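Two of the metrics named in the experiments, MSE and MMD, can be computed roughly as follows. This is a generic sketch and not the paper's evaluation code: it assumes a Gaussian kernel with a hand-picked `bandwidth` and the biased (V-statistic) MMD estimator, and the function names are hypothetical.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean-squared error between two arrays of the same shape."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(diff**2))

def mmd2_rbf(X, Y, bandwidth):
    """Biased estimate of squared MMD between samples X and Y (Gaussian kernel)."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    if X.ndim == 1:
        X = X[:, None]
    if Y.ndim == 1:
        Y = Y[:, None]

    def k(A, B):
        d2 = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2.0 * A @ B.T
        return np.exp(-np.maximum(d2, 0.0) / (2.0 * bandwidth**2))

    return float(k(X, X).mean() + k(Y, Y).mean() - 2.0 * k(X, Y).mean())

# Example: compare a rollout against a reference trajectory sample.
rng = np.random.default_rng(0)
reference = rng.normal(size=(200, 3))
rollout = reference + 0.1 * rng.normal(size=(200, 3))
print(mse(reference, rollout), mmd2_rbf(reference, rollout, bandwidth=1.0))
```

MSE measures pointwise forecast error, while MMD compares the distributions of visited states, which is why a long autoregressive rollout can score poorly on MSE yet still match the attractor statistics.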

Key insights include: (i) diffusion coordinates provide a natural space for kernel‑based residual correction because they preserve the manifold geometry of the dynamics; (ii) lightweight, non‑gradient adaptation can bridge the gap between global generalization (learned from large data) and local, rapid adjustment to distribution shifts; (iii) the length of the prefix and kernel bandwidth parameters (β, ε, k₀) control the trade‑off between adaptation speed and stability.

Overall, LISA successfully merges classical spectral methods (Laplacian eigenmaps, NLSA) with modern in‑context learning ideas, offering a principled, computationally efficient way to achieve test‑time adaptation for complex, possibly non‑stationary time‑series. The framework opens avenues for real‑time forecasting systems that need to remain accurate under evolving dynamics without costly retraining.
