Data Verification is the Future of Quantum Computing Copilots

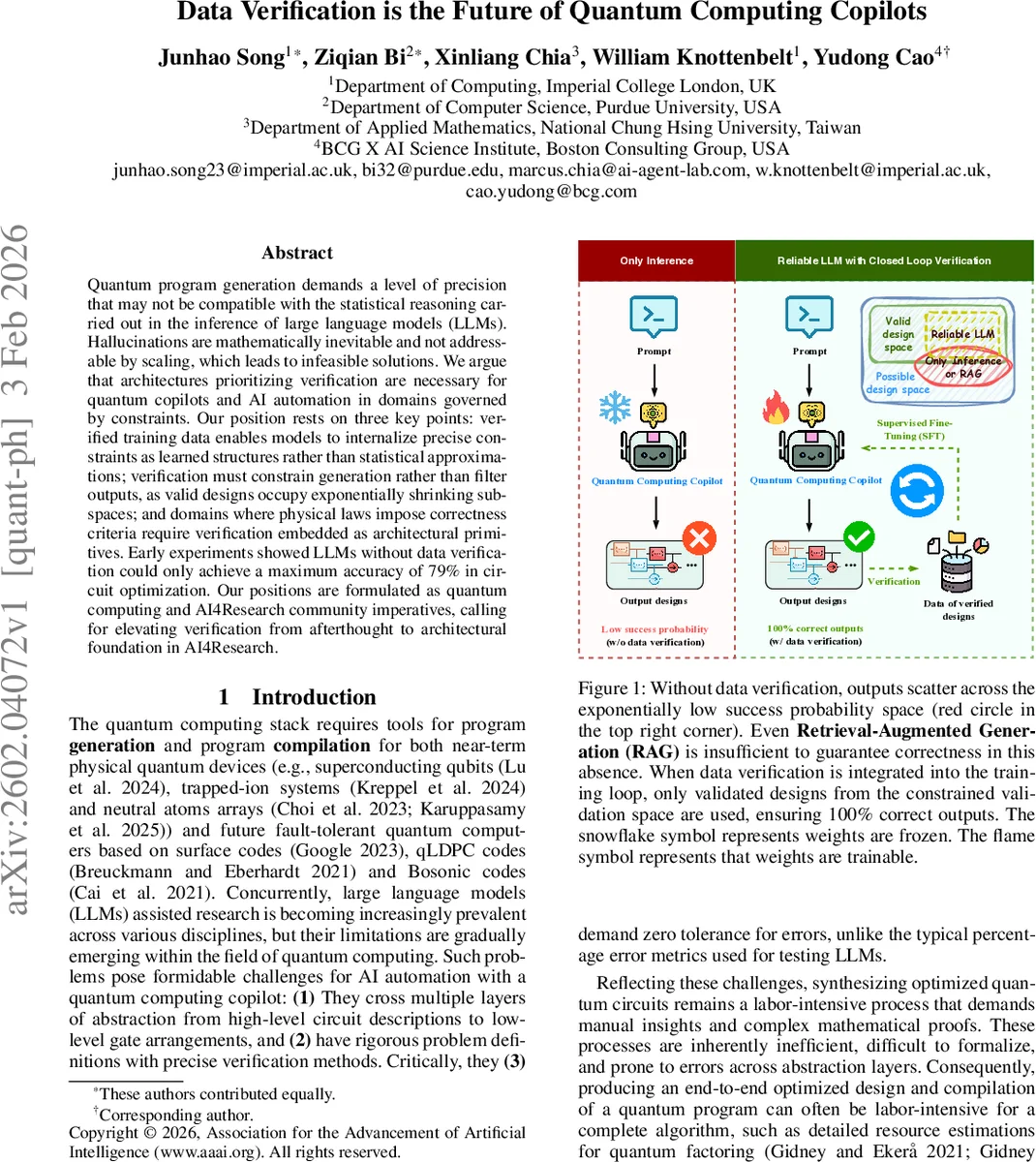

Quantum program generation demands a level of precision that may not be compatible with the statistical reasoning carried out in the inference of large language models (LLMs). Hallucinations are mathematically inevitable and not addressable by scaling, which leads to infeasible solutions. We argue that architectures prioritizing verification are necessary for quantum copilots and AI automation in domains governed by constraints. Our position rests on three key points: verified training data enables models to internalize precise constraints as learned structures rather than statistical approximations; verification must constrain generation rather than filter outputs, as valid designs occupy exponentially shrinking subspaces; and domains where physical laws impose correctness criteria require verification embedded as architectural primitives. Early experiments showed LLMs without data verification could only achieve a maximum accuracy of 79% in circuit optimization. Our positions are formulated as quantum computing and AI4Research community imperatives, calling for elevating verification from afterthought to architectural foundation in AI4Research.

💡 Research Summary

The paper “Data Verification is the Future of Quantum Computing Copilots” argues that the generation of quantum programs demands a level of mathematical precision that cannot be reliably achieved by large language models (LLMs) that rely on statistical inference. Hallucinations—outputs that violate the strict physical and logical constraints of quantum circuits—are mathematically inevitable in purely statistical models, and scaling model size or data volume does not eliminate them. The authors propose three core positions. First (P1), verified training data is a minimum requirement: only datasets that have been formally proven (e.g., with theorem provers such as Lean or Z3) to satisfy domain‑specific constraints (unitarity, reversibility, hardware topology) should be used for training. This enables the model to internalize constraints as learned structures rather than as noisy approximations. Second (P2), verification must be embedded as a priori constraints during generation, not as a posteriori filtering step. The design space of quantum circuits grows exponentially (O(dⁿ)), while the fraction of valid designs shrinks exponentially (O((δ/d)ⁿ)). Consequently, filtering generated outputs after the fact incurs exponential computational cost and remains infeasible. Third (P3), a verification‑first architecture should become a standard paradigm for AI‑4‑Research in any scientific domain governed by hard physical laws (e.g., drug discovery, materials science, engineering design).

To substantiate these claims, the authors conducted an extensive benchmark. They evaluated 34 publicly available LLMs ranging from 270 M to 120 B parameters on 70 000 multiple‑choice questions derived from a verified dataset of Cuccaro adder circuits (bit‑widths 2–8). Each question presented four candidate circuits that all satisfied the functional specification but differed in gate count, depth, and T‑gate usage; the correct answer was the circuit minimizing a weighted cost function. Models that had been fine‑tuned on verified data (gemma3:12 B, gpt‑oss:120 B) achieved accuracies between 60 % and 79 %, while generic models hovered near random performance (21 %–29 %). Calibration analysis showed that verification‑aware models assigned 60 %–80 % confidence to correct answers, whereas generic models were poorly calibrated (20 %–35 %). Some models even produced malformed outputs, further reducing their effective accuracy. These results demonstrate that simply increasing model size does not guarantee correctness in highly constrained domains.

The paper also discusses practical challenges. Generating verified quantum datasets is resource‑intensive (e.g., the QDataSet required three months of HPC time to produce 14 TB of validated data for only one‑ and two‑qubit systems). Nevertheless, the authors argue that the long‑term benefits—reliable quantum copilots, reduced human expert labor, and accelerated discovery—justify the investment. They call for the community to adopt verification‑first pipelines, develop automated theorem‑proving tools for dataset creation, and explore constraint‑aware sampling methods. In conclusion, the authors position data verification not as an afterthought but as the architectural foundation necessary for trustworthy AI assistants in quantum computing and other physics‑driven scientific fields.

Comments & Academic Discussion

Loading comments...

Leave a Comment