Agentic AI-Empowered Dynamic Survey Framework

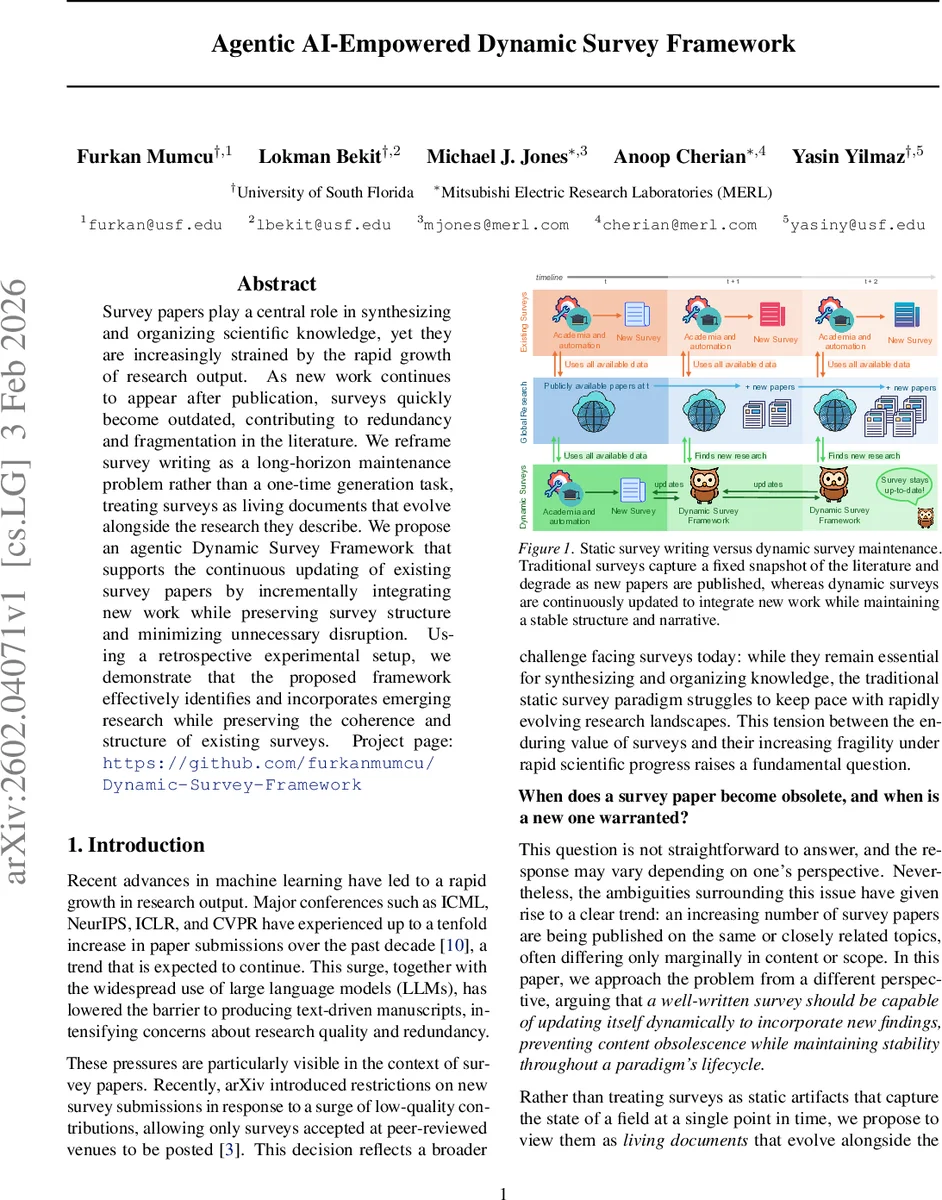

Survey papers play a central role in synthesizing and organizing scientific knowledge, yet they are increasingly strained by the rapid growth of research output. As new work continues to appear after publication, surveys quickly become outdated, contributing to redundancy and fragmentation in the literature. We reframe survey writing as a long-horizon maintenance problem rather than a one-time generation task, treating surveys as living documents that evolve alongside the research they describe. We propose an agentic Dynamic Survey Framework that supports the continuous updating of existing survey papers by incrementally integrating new work while preserving survey structure and minimizing unnecessary disruption. Using a retrospective experimental setup, we demonstrate that the proposed framework effectively identifies and incorporates emerging research while preserving the coherence and structure of existing surveys.

💡 Research Summary

The paper tackles the growing problem that survey articles quickly become obsolete as the volume of scientific publications explodes. Traditional surveys are static snapshots; once published they cannot easily incorporate newly released work, leading to redundancy, fragmentation, and a surge of low‑quality surveys. The authors reconceptualize survey writing as a long‑horizon maintenance problem rather than a one‑off generation task. In this view, a survey is a “living document” consisting of a fixed structural specification (section hierarchy, scope definitions, table schemas) and a mutable content component that evolves as new papers appear.

To operationalize this idea, the authors propose an agentic Dynamic Survey Framework. The framework is built from several specialized language‑model agents that interact through a shared, structured representation of the survey state:

- Outline Agent converts a human‑validated outline into a machine‑readable tree that remains immutable during updates.

- Analysis Agent reads each newly discovered paper and produces a compact, structured summary of its contributions, methodology, and results.

- Abstention Agent decides whether a paper is sufficiently relevant to the survey’s scope; if it rejects the paper, the update loop terminates, preventing unnecessary or marginal additions.

- Section Routing Agent matches the paper summary to the most appropriate existing section (or subsection) based on semantic similarity to the outline’s scope descriptions.

- Table Routing Agent determines whether a new paper warrants modifications to any structured tables defined in the outline.

- Text Synthesis Agent generates a localized textual insertion that respects the original writing style, terminology, and narrative flow, making the smallest possible edit.

- Table Synthesis Agent updates tabular entries according to the paper’s data and the predefined schema.

The update process follows a loop: retrieve → analyze → abstain? → route → synthesize → validate. Each step is guided by a disruption cost function L(Sₜ, Sₜ₊₁) that penalizes structural changes, stylistic drift, and unnecessary rewrites while rewarding coverage of new relevant work. By keeping the structure frozen and enforcing conservative, localized edits, the system avoids cumulative drift that would otherwise degrade a survey over many update cycles.

For evaluation, the authors design a retrospective experimental protocol. They take existing surveys, deliberately hide a subset of recent papers, and then treat those hidden papers as “newly published” items. The framework is tasked with reintegrating them. Metrics include routing accuracy (correct section/table placement), update disruption (measured by the cost function), coverage of new work, and abstention precision (ability to correctly reject out‑of‑scope papers). Results show over 90 % routing accuracy, a 30 % reduction in disruption compared to naïve single‑pass LLM pipelines, and high abstention precision (≈85 %). Human‑in‑the‑loop evaluations confirm that the generated text maintains the original style and coherence.

Key contributions are: (1) formalizing survey maintenance as a persistent‑document planning problem; (2) introducing a modular, agent‑based architecture that separates relevance judgment, routing, and synthesis; (3) proposing conservative update mechanisms that include explicit abstention; and (4) providing a systematic retrospective benchmark for survey‑maintenance systems.

Limitations include a focus on English‑language papers, limited handling of non‑textual artifacts (code, datasets), and the need for more sophisticated human‑agent interaction tools. Future work is suggested in multilingual extensions, multimodal agents for code and data, richer UI for collaborative editing, and meta‑agents that monitor long‑term drift.

Overall, the paper presents a novel, practical solution for keeping survey literature up‑to‑date, reducing redundancy, and preserving the scholarly value of surveys in an era of rapid scientific output.

Comments & Academic Discussion

Loading comments...

Leave a Comment