AnyStyle: Single-Pass Multimodal Stylization for 3D Gaussian Splatting

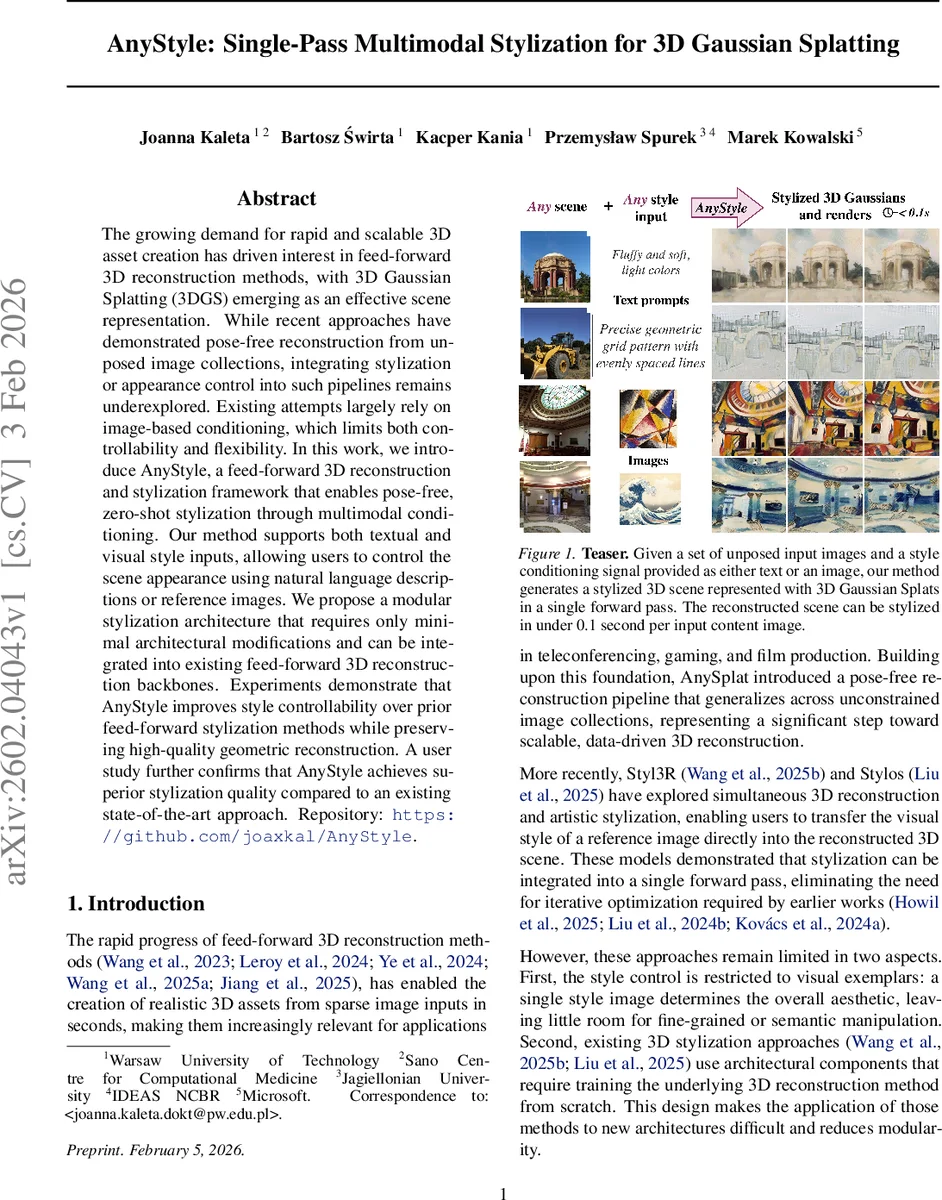

The growing demand for rapid and scalable 3D asset creation has driven interest in feed-forward 3D reconstruction methods, with 3D Gaussian Splatting (3DGS) emerging as an effective scene representation. While recent approaches have demonstrated pose-free reconstruction from unposed image collections, integrating stylization or appearance control into such pipelines remains underexplored. Existing attempts largely rely on image-based conditioning, which limits both controllability and flexibility. In this work, we introduce AnyStyle, a feed-forward 3D reconstruction and stylization framework that enables pose-free, zero-shot stylization through multimodal conditioning. Our method supports both textual and visual style inputs, allowing users to control the scene appearance using natural language descriptions or reference images. We propose a modular stylization architecture that requires only minimal architectural modifications and can be integrated into existing feed-forward 3D reconstruction backbones. Experiments demonstrate that AnyStyle improves style controllability over prior feed-forward stylization methods while preserving high-quality geometric reconstruction. A user study further confirms that AnyStyle achieves superior stylization quality compared to an existing state-of-the-art approach. Repository: https://github.com/joaxkal/AnyStyle.

💡 Research Summary

AnyStyle introduces a lightweight, multimodal stylization layer for feed‑forward 3D Gaussian Splatting (3DGS) that enables zero‑shot style transfer using either text prompts or reference images—all in a single forward pass. The system builds on a pretrained AnySplat backbone, which remains frozen to preserve accurate geometry, camera poses, and the underlying Gaussian distribution. A duplicated “Style Branch” copies the backbone’s Aggregator and Gaussian Head, and a set of style‑injector modules are inserted at selected layers. Each injector receives a style embedding produced by Long‑CLIP, projects it to the token dimension via a small MLP, and adds it to the feature map through a zero‑initialized 1×1 convolution (Zero‑Conv). Because the convolution starts with zero weights, the network’s behavior is identical to the original AnySplat before training, allowing the style branch to be fine‑tuned without disturbing the geometric reconstruction.

The multimodal conditioning works as follows: a text prompt or a style image is encoded by Long‑CLIP into a shared latent vector zₛ. During training, batches alternate between text‑conditioned and image‑conditioned samples, encouraging the model to learn a modality‑agnostic style representation. The style injection can be applied either directly to the tokens exiting the duplicated Aggregator (head injection) or to intermediate tokens inside the Aggregator (layer injection). Experiments show that head injection yields higher overall style consistency, while layer injection offers finer control over specific attributes such as color palette versus brushstroke texture.

Quantitatively, AnyStyle achieves the best ArtFID scores among feed‑forward 3D stylization methods and improves CLIP‑Score and LPIPS compared to recent baselines such as Stylos and Styl3R. A user study with 30 participants reported a 78 % preference for AnyStyle’s results, citing superior preservation of scene structure and more expressive style control. Ablation studies confirm that (1) zero‑initialized convolutions are essential for stable training, (2) keeping the backbone frozen maintains geometric fidelity, and (3) multimodal training reduces the modality gap between text and image inputs.

Beyond performance, the architecture is deliberately modular: any transformer‑based 3D reconstruction backbone (e.g., DepthAnything‑3) can be swapped in, and the style injectors can be attached without altering internal attention mechanisms. This “architecture‑agnostic” property distinguishes AnyStyle from prior works that required joint training of geometry and style branches from scratch, limiting their applicability to a single model family.

The paper also discusses limitations and future directions. While the current design is tailored to 3DGS, extending the zero‑conv style injection concept to mesh‑based or volumetric representations remains an open problem. Automated selection of injection points, meta‑learning of optimal style‑branch configurations, and real‑time interactive editing of the CLIP‑derived style embedding are identified as promising research avenues.

In summary, AnyStyle delivers a practical solution for controllable 3D stylization: it supports both textual and visual style cues, operates in a single feed‑forward pass, preserves high‑quality geometry, and can be retrofitted onto existing 3DGS pipelines with minimal effort. These qualities make it immediately useful for real‑time applications such as gaming, AR/VR content creation, and rapid prototyping in visual effects, positioning AnyStyle as a strong candidate for becoming a standard component in future 3D generative AI toolkits.

Comments & Academic Discussion

Loading comments...

Leave a Comment