C-IDS: Solving Contextual POMDP via Information-Directed Objective

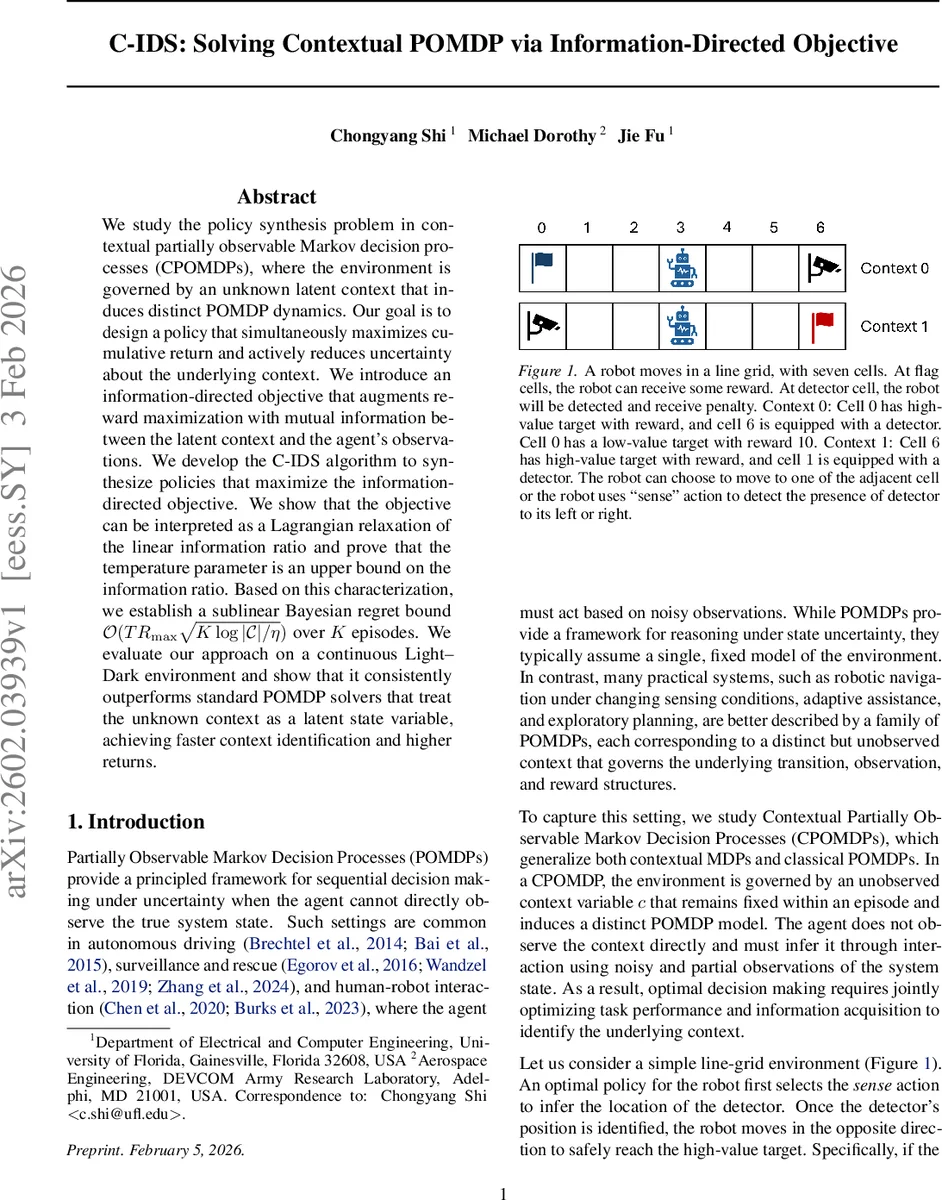

We study the policy synthesis problem in contextual partially observable Markov decision processes (CPOMDPs), where the environment is governed by an unknown latent context that induces distinct POMDP dynamics. Our goal is to design a policy that simultaneously maximizes cumulative return and actively reduces uncertainty about the underlying context. We introduce an information-directed objective that augments reward maximization with mutual information between the latent context and the agent’s observations. We develop the C-IDS algorithm to synthesize policies that maximize the information-directed objective. We show that the objective can be interpreted as a Lagrangian relaxation of the linear information ratio and prove that the temperature parameter is an upper bound on the information ratio. Based on this characterization, we establish a sublinear Bayesian regret bound over K episodes. We evaluate our approach on a continuous Light-Dark environment and show that it consistently outperforms standard POMDP solvers that treat the unknown context as a latent state variable, achieving faster context identification and higher returns.

💡 Research Summary

The paper tackles the policy synthesis problem in contextual partially observable Markov decision processes (CPOMDPs), a setting where an unknown latent context determines which of several POMDP models governs the environment during an episode. Unlike a standard POMDP, which assumes a single fixed model, a CPOMDP requires the agent both to maximize cumulative reward and to actively infer the hidden context from noisy observations.
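To make the context-inference half of the problem concrete, here is a minimal sketch of a Bayesian belief update over a finite set of contexts. It is an illustration only, not the paper's algorithm: the two-context setup, the Gaussian observation model, and the names `update_context_belief`, `entropy`, `gaussian_pdf`, and `context_means` are all assumptions introduced for this example.

```python
import math


def update_context_belief(prior, likelihoods):
    """Apply Bayes' rule over a finite set of contexts.

    prior[i]       -- current probability assigned to context i
    likelihoods[i] -- probability (or density) of the new observation under context i
    """
    unnormalized = [p * l for p, l in zip(prior, likelihoods)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("observation has zero likelihood under every context")
    return [u / total for u in unnormalized]


def entropy(belief):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in belief if p > 0.0)


def gaussian_pdf(y, mean, std):
    """Density of a scalar Gaussian observation."""
    z = (y - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


# Hypothetical toy setup: two contexts that differ only in the observation mean.
context_means = [0.0, 1.0]
belief = [0.5, 0.5]
for y in [0.9, 1.2, 0.7]:  # made-up noisy observations
    likelihoods = [gaussian_pdf(y, m, 0.5) for m in context_means]
    belief = update_context_belief(belief, likelihoods)
```

In this toy run every observation lies closer to the second context's mean, so the belief shifts toward that context and its entropy falls below that of the uniform prior. Reducing this uncertainty quickly is what the mutual-information term is described as rewarding.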

To address this dual objective, the authors introduce an information-directed objective that augments the expected reward with a mutual-information term between the latent context C and the trajectory Y generated by the policy. Formally, for episode k the objective is

\
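As a sketch only, an objective of the kind described above could be written as follows. The notation is assumed rather than taken from the paper: $\lambda$ stands for the temperature parameter, $r_t$ for the per-step reward, $H$ for the episode horizon, and $\mathcal{D}_k$ for the data gathered before episode $k$. Placing $\lambda$ as a multiplier on the mutual-information term is also an assumption, chosen to match the Lagrangian-relaxation reading given in the abstract.

```latex
J_k(\pi) \;=\;
\mathbb{E}^{\pi}\!\left[\, \sum_{t=1}^{H} r_t \;\middle|\; \mathcal{D}_k \right]
\;+\; \lambda \, I^{\pi}\!\left( C ;\, Y \mid \mathcal{D}_k \right)
```

Under this reading, $\lambda = 0$ recovers plain expected-return maximization, and a larger $\lambda$ puts more weight on trajectories that are informative about the context $C$.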
