Audit After Segmentation: Reference-Free Mask Quality Assessment for Language-Referred Audio-Visual Segmentation

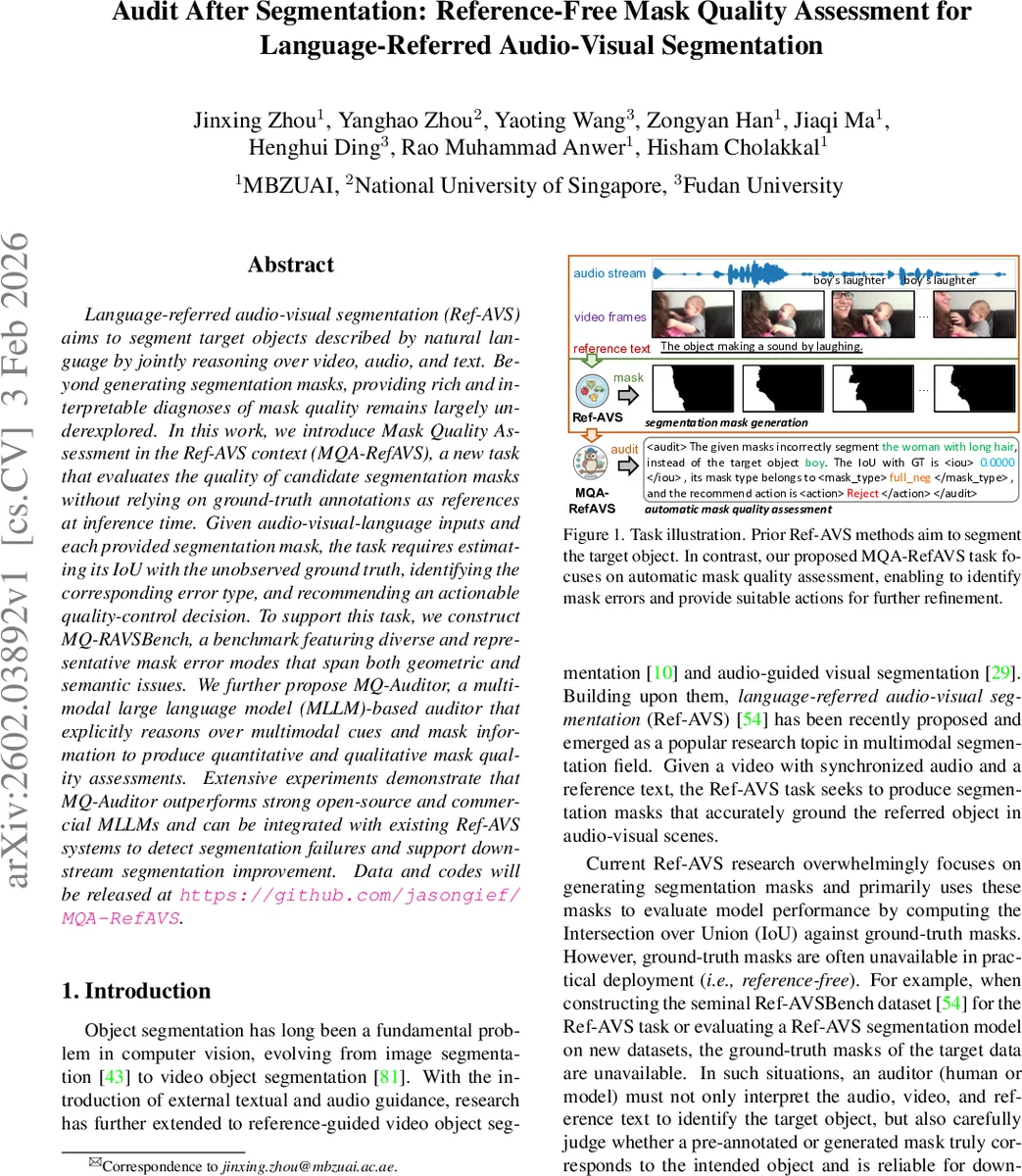

Language-referred audio-visual segmentation (Ref-AVS) aims to segment target objects described by natural language by jointly reasoning over video, audio, and text. Beyond generating segmentation masks, providing rich and interpretable diagnoses of mask quality remains largely underexplored. In this work, we introduce Mask Quality Assessment in the Ref-AVS context (MQA-RefAVS), a new task that evaluates the quality of candidate segmentation masks without relying on ground-truth annotations as references at inference time. Given audio-visual-language inputs and a candidate segmentation mask, the task requires estimating the mask's IoU with the unobserved ground truth, identifying the corresponding error type, and recommending an actionable quality-control decision. To support this task, we construct MQ-RAVSBench, a benchmark featuring diverse and representative mask error modes that span both geometric and semantic issues. We further propose MQ-Auditor, a multimodal large language model (MLLM)-based auditor that explicitly reasons over multimodal cues and mask information to produce quantitative and qualitative mask quality assessments. Extensive experiments demonstrate that MQ-Auditor outperforms strong open-source and commercial MLLMs and can be integrated with existing Ref-AVS systems to detect segmentation failures and support downstream segmentation improvement. Data and code will be released at https://github.com/jasongief/MQA-RefAVS.
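The three outputs listed above are an IoU estimate, an error type, and a quality-control decision. Together they fit naturally into a small structured record.

As a minimal sketch, assume the auditor is prompted to answer with a JSON object holding the keys `iou`, `error_type`, and `action`. That schema, the names `MaskAudit` and `parse_audit`, and the lowercase label strings are all hypothetical. None of them is taken from MQ-Auditor's released code.

```python
import json
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MaskAudit:
    est_iou: float   # predicted IoU with the unseen ground-truth mask, in [0, 1]
    error_type: str  # diagnosed error mode (hypothetical labels, e.g. "perfect", "full_neg")
    action: str      # quality-control decision (e.g. "accept" or "reject")


def parse_audit(raw: str) -> MaskAudit:
    """Parse an auditor reply containing a JSON object with keys iou, error_type, action.

    Free text around the object is tolerated: the span from the first "{"
    to the last "}" is decoded. The schema itself is an assumption.
    """
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in auditor reply")
    data = json.loads(raw[start:end + 1])
    iou = float(data["iou"])
    if not math.isfinite(iou):
        raise ValueError(f"non-finite IoU estimate: {iou}")
    return MaskAudit(
        est_iou=min(max(iou, 0.0), 1.0),  # clamp slightly out-of-range guesses into [0, 1]
        error_type=str(data["error_type"]).strip().lower(),
        action=str(data["action"]).strip().lower(),
    )


if __name__ == "__main__":
    reply = 'Verdict: {"iou": 0.0, "error_type": "Full_Neg", "action": "reject"}'
    print(parse_audit(reply))
    # MaskAudit(est_iou=0.0, error_type='full_neg', action='reject')
```

Clamping keeps a slightly out-of-range numeric guess usable, while a missing key or a non-finite value fails loudly. A stricter variant could also check the labels against the benchmark's error-type vocabulary.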

💡 Research Summary

This paper introduces a new task, Mask Quality Assessment for language‑referred audio‑visual segmentation (MQA‑RefAVS). While existing Ref‑AVS research focuses on generating segmentation masks and evaluating them against ground‑truth masks using a single scalar metric (IoU), real‑world deployment often lacks any ground‑truth reference. Consequently, there is a need for an “auditor” that can judge the reliability of a candidate mask solely from the multimodal inputs (video, synchronized audio, and referring text).
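The score the auditor has to predict is ordinary mask IoU. Computing it directly requires exactly the ground truth that is missing at inference time.

The sketch below uses NumPy and assumes binary masks stacked as (frames, height, width), with pixels pooled over the whole clip. Two of its choices are assumptions:

- Whether the benchmark pools pixels or averages per-frame IoU is not stated here.
- Scoring two empty masks as 1.0 is an assumed convention.

```python
import numpy as np


def mask_iou(pred: np.ndarray, gt: np.ndarray) -> float:
    """IoU of two binary masks of equal shape, e.g. (T, H, W) for a video clip.

    Pixels are pooled over all frames before dividing (an assumption; a
    per-frame mean IoU is a common alternative).
    """
    if pred.shape != gt.shape:
        raise ValueError(f"shape mismatch: {pred.shape} vs {gt.shape}")
    pred = pred.astype(bool)
    gt = gt.astype(bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    inter = np.logical_and(pred, gt).sum()
    return float(inter / union)


if __name__ == "__main__":
    gt = np.zeros((2, 4, 4), dtype=bool)
    gt[:, :2, :2] = True       # 8 ground-truth pixels across 2 frames
    pred = np.zeros_like(gt)
    pred[:, :2, :] = True      # 16 predicted pixels that cover all of gt
    print(mask_iou(pred, gt))  # 0.5
```

When a benchmark is built, `gt` is available, so labels such as IoU = 1 for an unmodified mask come for free. An auditor must approximate the same number from video, audio, and text alone.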

To enable systematic study, the authors construct MQ‑RAVSBench, a benchmark built on the Ref‑AVSBench dataset. It contains 1,840 videos and 2,046 referring expressions, from which 26,061 mask instances are generated. For each video‑reference pair, six mask types are automatically created:

- Perfect – the original ground‑truth mask (IoU = 1, action = accept).

- Full‑Neg – masks that segment completely irrelevant objects, generated by prompting a large vision‑language model (Qwen2.5‑VL) for negative object phrases, grounding them with Rex‑Omni, and segmenting with SAM2 (IoU = 0, action = reject).

- Cutout – interior pixels are removed from the perfect mask using OpenCV structuring elements, yielding two difficulty levels (medium and hard) with IoU ranges
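The Cutout construction can be approximated with binary erosion. Eroding the perfect mask with a square structuring element yields its interior, and subtracting that interior leaves a hollow rim.

This is a hypothetical sketch rather than the benchmark's recipe:

- It emulates `cv2.erode` with a rectangular kernel in plain NumPy to stay dependency-light.
- The kernel sizes are illustrative.
- The medium and hard IoU ranges are not given in this excerpt.

Because a cutout is a subset of the perfect mask, its IoU reduces to the remaining pixels divided by the original pixels.

```python
import numpy as np


def erode_square(mask: np.ndarray, k: int) -> np.ndarray:
    """Binary erosion of a 2-D mask with a k x k square structuring element.

    Hypothetical stand-in for cv2.erode with a cv2.MORPH_RECT kernel. Pixels
    outside the image are treated as background, so objects touching the
    image border are eroded there as well.
    """
    if k < 1 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    r = k // 2
    h, w = mask.shape
    padded = np.pad(mask.astype(bool), r, mode="constant", constant_values=False)
    out = np.ones((h, w), dtype=bool)
    # A pixel survives only if every pixel under its k x k window is foreground.
    for dy in range(k):
        for dx in range(k):
            out &= padded[dy:dy + h, dx:dx + w]
    return out


def make_cutout(perfect: np.ndarray, k: int) -> tuple[np.ndarray, float]:
    """Remove the eroded interior of `perfect`; return (cutout_mask, IoU vs perfect)."""
    perfect = perfect.astype(bool)
    area = perfect.sum()
    if area == 0:
        raise ValueError("perfect mask is empty")
    interior = erode_square(perfect, k)
    cutout = perfect & ~interior
    # cutout is a subset of perfect, so IoU = |cutout| / |perfect|.
    return cutout, float(cutout.sum() / area)


if __name__ == "__main__":
    perfect = np.zeros((12, 12), dtype=bool)
    perfect[1:11, 1:11] = True  # a 10 x 10 object
    for k in (3, 5):
        _, iou = make_cutout(perfect, k)
        print(k, round(iou, 2))
    # 3 0.36
    # 5 0.64
```

Smaller kernels carve out more of the interior and therefore yield lower IoU. Kernel size is thus one plausible knob for separating medium from hard cutouts.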
