Do We Need Asynchronous SGD? On the Near-Optimality of Synchronous Methods

Modern distributed optimization methods mostly rely on traditional synchronous approaches, despite substantial recent progress in asynchronous optimization. We revisit Synchronous SGD and its robust variant, called $m$-Synchronous SGD, and theoretically show that they are nearly optimal in many heterogeneous computation scenarios, which is somewhat unexpected. We analyze the synchronous methods under random computation times and adversarial partial participation of workers, and prove that their time complexities are optimal in many practical regimes, up to logarithmic factors. While synchronous methods are not universal solutions and there exist tasks where asynchronous methods may be necessary, we show that they are sufficient for many modern heterogeneous computation scenarios.

💡 Research Summary

The paper revisits the classic synchronous stochastic gradient descent (SGD) algorithm and its robust variant, m‑synchronous SGD, in the context of distributed optimization with heterogeneous worker speeds. While asynchronous methods have been promoted as a solution to the “straggler” problem—where the slowest worker dictates the iteration time—the authors demonstrate that synchronous approaches can be nearly optimal in a wide range of realistic settings.
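The straggler effect can be illustrated with a small Monte Carlo sketch. It assumes, purely for illustration, that each worker's compute time is exponentially distributed with a worker-specific mean. It also assumes that the robust variant waits only for the first m gradients of an iteration, a rule the summary does not spell out. The function name `iteration_times` is hypothetical.

```python
import random
import statistics


def iteration_times(means, m, trials, seed=0):
    """Average per-iteration wait when the server needs all n gradients
    versus only the first m to arrive (assumed m-synchronous rule)."""
    rng = random.Random(seed)
    wait_all, wait_m = [], []
    for _ in range(trials):
        # Hypothetical model: worker i's compute time is exponential with mean means[i].
        times = sorted(rng.expovariate(1.0 / mu) for mu in means)
        wait_all.append(times[-1])   # Synchronous SGD: wait for the slowest worker
        wait_m.append(times[m - 1])  # first m gradients: wait for the m-th arrival
    return statistics.mean(wait_all), statistics.mean(wait_m)


# Nine workers with mean time 1 and one straggler with mean time 100.
means = [1.0] * 9 + [100.0]
t_all, t_m = iteration_times(means, m=9, trials=10_000)
print(f"wait for all 10: {t_all:.1f}   wait for the first 9: {t_m:.1f}")
```

Under this toy model a single slow worker dominates the fully synchronous wait, while dropping just that one worker per iteration keeps the wait close to that of the fast group.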

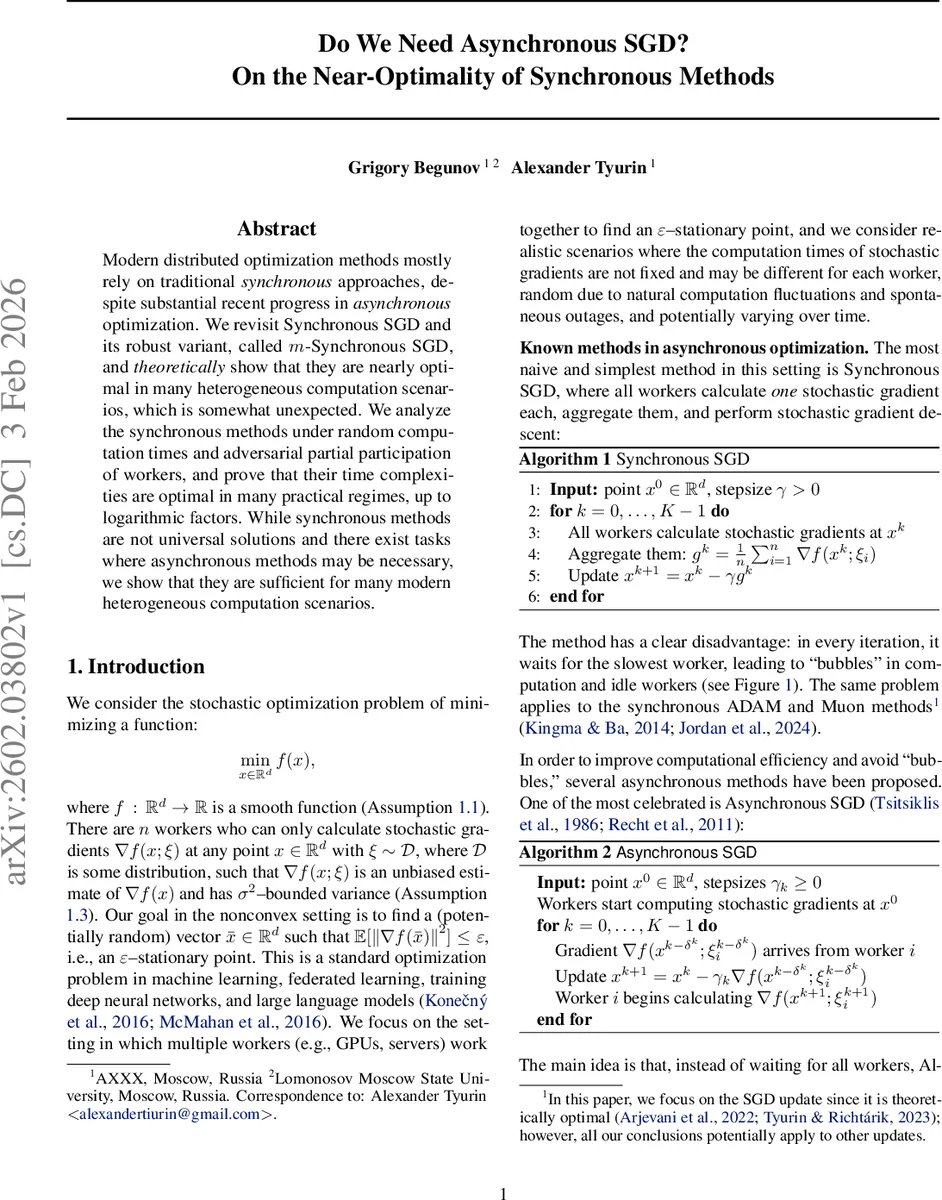

First, the authors formalize the optimization problem: minimize an L‑smooth, possibly non‑convex function f over ℝ^d using unbiased stochastic gradients whose variance is bounded by σ². The goal is to find an ε‑stationary point, i.e., a point whose expected squared gradient norm is at most ε. Let Δ = f(x⁰) − inf f denote the initial suboptimality, and order the workers' compute times as τ_1 ≤ τ_2 ≤ … ≤ τ_n. The standard synchronous SGD (Algorithm 1) aggregates gradients from all n workers in every iteration, so each iteration lasts as long as the slowest worker's compute time τ_n. As a result, its naïve time complexity is O(τ_n·max{LΔ/ε, σ²LΔ/(nε²)}), which is clearly sub‑optimal compared with the known optimal bound attained by asynchronous methods:
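To make Algorithm 1 concrete, here is a minimal sketch of synchronous SGD with a simulated clock. The toy objective f(x) = ½‖x‖², the Gaussian gradient noise, the step size `gamma`, and the fixed per-worker compute times `taus` are illustrative assumptions, not the paper's experimental setup. The only point is that the clock advances by max τ_i = τ_n per iteration, however fast the other workers are.

```python
import math
import random


def synchronous_sgd(taus, x0, gamma, sigma, iters, seed=0):
    """Synchronous SGD on the toy objective f(x) = 0.5 * ||x||^2 (so grad f(x) = x).

    Returns the final iterate, the simulated wall-clock time, and ||grad f(x)||^2.
    """
    rng = random.Random(seed)
    d, n = len(x0), len(taus)
    x = list(x0)
    clock = 0.0
    for _ in range(iters):
        # Every worker returns grad f(x) = x plus Gaussian noise; the per-coordinate
        # std sigma / sqrt(d) makes the total noise variance equal to sigma^2.
        grads = [[xj + rng.gauss(0.0, sigma / math.sqrt(d)) for xj in x] for _ in range(n)]
        # The server waits for all n workers, so the iteration lasts max(taus) = tau_n.
        clock += max(taus)
        # Average the n stochastic gradients and take one SGD step.
        avg = [sum(g[j] for g in grads) / n for j in range(d)]
        x = [xj - gamma * aj for xj, aj in zip(x, avg)]
    grad_sq = sum(xj * xj for xj in x)
    return x, clock, grad_sq


x, clock, grad_sq = synchronous_sgd(
    taus=[1.0, 1.0, 2.0, 10.0], x0=[5.0, -3.0], gamma=0.5, sigma=1.0, iters=50
)
print(f"simulated time: {clock:.0f}, squared gradient norm: {grad_sq:.4f}")
```

In this simulation the iterates do not depend on `taus` at all: replacing the straggler's 10.0 with 2.0 would leave `x` unchanged and cut the simulated time fivefold, which is exactly the waste the straggler problem refers to.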

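$$T_{\text{optimal}} = \Theta\left(\min_{m\in[n]}\left[\left(\frac{1}{m}\sum_{i=1}^{m}\frac{1}{\tau_i}\right)^{-1}\max\left\{\frac{L\Delta}{\varepsilon},\ \frac{\sigma^2 L\Delta}{m\varepsilon^2}\right\}\right]\right).$$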
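As a back-of-the-envelope check, the sketch below evaluates the naïve synchronous bound and the optimal bound with all constants dropped, for hypothetical compute times. It also evaluates min_m τ_m·max{LΔ/ε, σ²LΔ/(mε²)}, which is our own assumed cost model for waiting on the fastest m workers with batch size m, not a formula quoted from the summary. Because each 1/τ_i with i ≤ m is at least 1/τ_m, the harmonic-mean factor never exceeds τ_m, so that estimate can never fall below the optimal bound.

```python
import math


def iteration_bound(L, delta, sigma, eps, batch):
    """max{L*Delta/eps, sigma^2*L*Delta/(batch*eps^2)}: iteration count up to constants."""
    return max(L * delta / eps, sigma**2 * L * delta / (batch * eps**2))


def synchronous_time(taus, L, delta, sigma, eps):
    """Naive Synchronous SGD: every iteration waits for the slowest worker."""
    return max(taus) * iteration_bound(L, delta, sigma, eps, len(taus))


def m_synchronous_time(taus, L, delta, sigma, eps):
    """Assumed cost model: wait for the fastest m workers, choosing the best m."""
    ts = sorted(taus)
    return min(ts[m - 1] * iteration_bound(L, delta, sigma, eps, m)
               for m in range(1, len(ts) + 1))


def optimal_time(taus, L, delta, sigma, eps):
    """The optimal bound above, with the Theta constants dropped."""
    ts = sorted(taus)
    best = math.inf
    for m in range(1, len(ts) + 1):
        harmonic = m / sum(1.0 / t for t in ts[:m])  # (1/m * sum_{i<=m} 1/tau_i)^{-1}
        best = min(best, harmonic * iteration_bound(L, delta, sigma, eps, m))
    return best


taus = [1.0] * 9 + [1000.0]  # nine fast workers and one extreme straggler
args = dict(L=1.0, delta=1.0, sigma=1.0, eps=0.01)
t_sync = synchronous_time(taus, **args)
t_msync = m_synchronous_time(taus, **args)
t_opt = optimal_time(taus, **args)
print(f"sync: {t_sync:.0f}  m-sync: {t_msync:.0f}  optimal: {t_opt:.0f}")
```

With these made-up numbers the naïve synchronous estimate is roughly 900 times the optimal one, while waiting for the fastest m = 9 workers essentially matches it.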
