FOVI: A biologically-inspired foveated interface for deep vision models

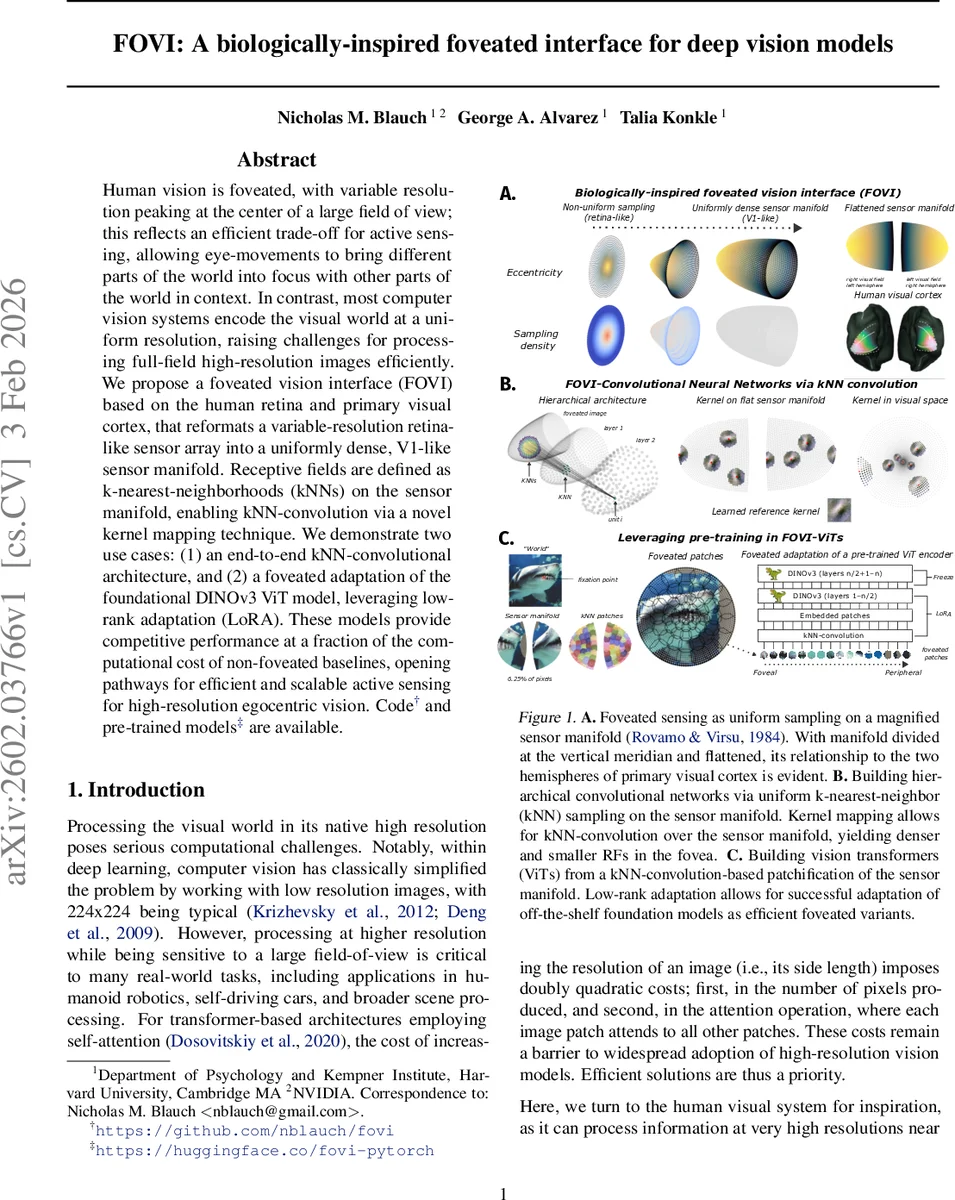

Human vision is foveated, with variable resolution peaking at the center of a large field of view; this reflects an efficient trade-off for active sensing, allowing eye-movements to bring different parts of the world into focus with other parts of the world in context. In contrast, most computer vision systems encode the visual world at a uniform resolution, raising challenges for processing full-field high-resolution images efficiently. We propose a foveated vision interface (FOVI) based on the human retina and primary visual cortex, that reformats a variable-resolution retina-like sensor array into a uniformly dense, V1-like sensor manifold. Receptive fields are defined as k-nearest-neighborhoods (kNNs) on the sensor manifold, enabling kNN-convolution via a novel kernel mapping technique. We demonstrate two use cases: (1) an end-to-end kNN-convolutional architecture, and (2) a foveated adaptation of the foundational DINOv3 ViT model, leveraging low-rank adaptation (LoRA). These models provide competitive performance at a fraction of the computational cost of non-foveated baselines, opening pathways for efficient and scalable active sensing for high-resolution egocentric vision. Code and pre-trained models are available at https://github.com/nblauch/fovi and https://huggingface.co/fovi-pytorch.

💡 Research Summary

The paper introduces FOVI (Foveated Vision Interface), a biologically‑inspired front‑end that transforms a retina‑like, eccentricity‑dependent sampling pattern into a uniformly dense sensor manifold, enabling conventional deep‑learning operations on foveated data. Drawing on the cortical magnification function (CMF) described by Rovamo & Virsu (1984), the authors model sampling density as M(r)=1/(r+a), where r is eccentricity and a controls foveation strength. By integrating this function they obtain a cortical distance w(r)=log(r+a)+C; uniformly sampling w yields radii that, when combined with an isotropy constraint on angular spacing, produce points that are evenly spaced on a 3‑D manifold yet correspond to a log‑polar distribution in visual space. Cutting the manifold along the vertical meridian and flattening it mirrors the left/right hemispheric layout of human V1.

To process data on this manifold the authors define receptive fields as k‑nearest‑neighbor (kNN) neighborhoods around output units tiled on the manifold. They introduce a kernel‑mapping technique that takes a reference 2‑D convolutional kernel learned in a Cartesian grid, transforms it into the polar coordinate system of each kNN (using geodesic distance r and visual angle θ), and samples the reference kernel at higher resolution (typically s=2√k). This yields weight‑shared, orientation‑aligned filters whose effective size varies with eccentricity, achieving a true foveated convolution while preserving the efficiency of standard convolutions.

Two use cases are presented. First, FOVI‑CNNs stack multiple kNN‑convolution layers, forming a hierarchical network analogous to AlexNet but with foveated receptive fields. Experiments on ImageNet‑1K show that moderate foveation (a≈0.5) outperforms uniform‑resolution baselines, while excessive foveation degrades performance due to loss of peripheral information—mirroring known biological trade‑offs.

Second, the authors adapt a state‑of‑the‑art DINOv3 Vision Transformer (ViT) by replacing the standard patch embedding with a foveated patch embedding generated via kNN‑convolution. Low‑rank adaptation (LoRA) is used to fine‑tune the pre‑trained ViT weights without full retraining. The resulting FOVI‑ViT models achieve near‑baseline accuracy (within 1–2 % Top‑1) while reducing FLOPs to roughly 30 % of the full‑resolution ViT, and they consistently beat non‑foveated ViTs constrained to the same computational budget.

Overall contributions include: (1) a mathematically grounded foveated sensor interface aligned with primate retino‑cortical mapping; (2) a novel kNN‑convolution and kernel‑mapping method enabling weight‑sharing on irregular sampling; (3) demonstration of both CNN and transformer architectures that leverage the interface for efficient high‑resolution vision; (4) open‑source code and pretrained models. Limitations noted are the static nature of the manifold (fixed a) and the lack of dynamic gaze‑driven sampling; future work could integrate real‑time eye‑tracking or event‑based sensors to realize fully active foveated perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment