Occlusion-Free Conformal Lensing for Spatiotemporal Visualization in 3D Urban Analytics

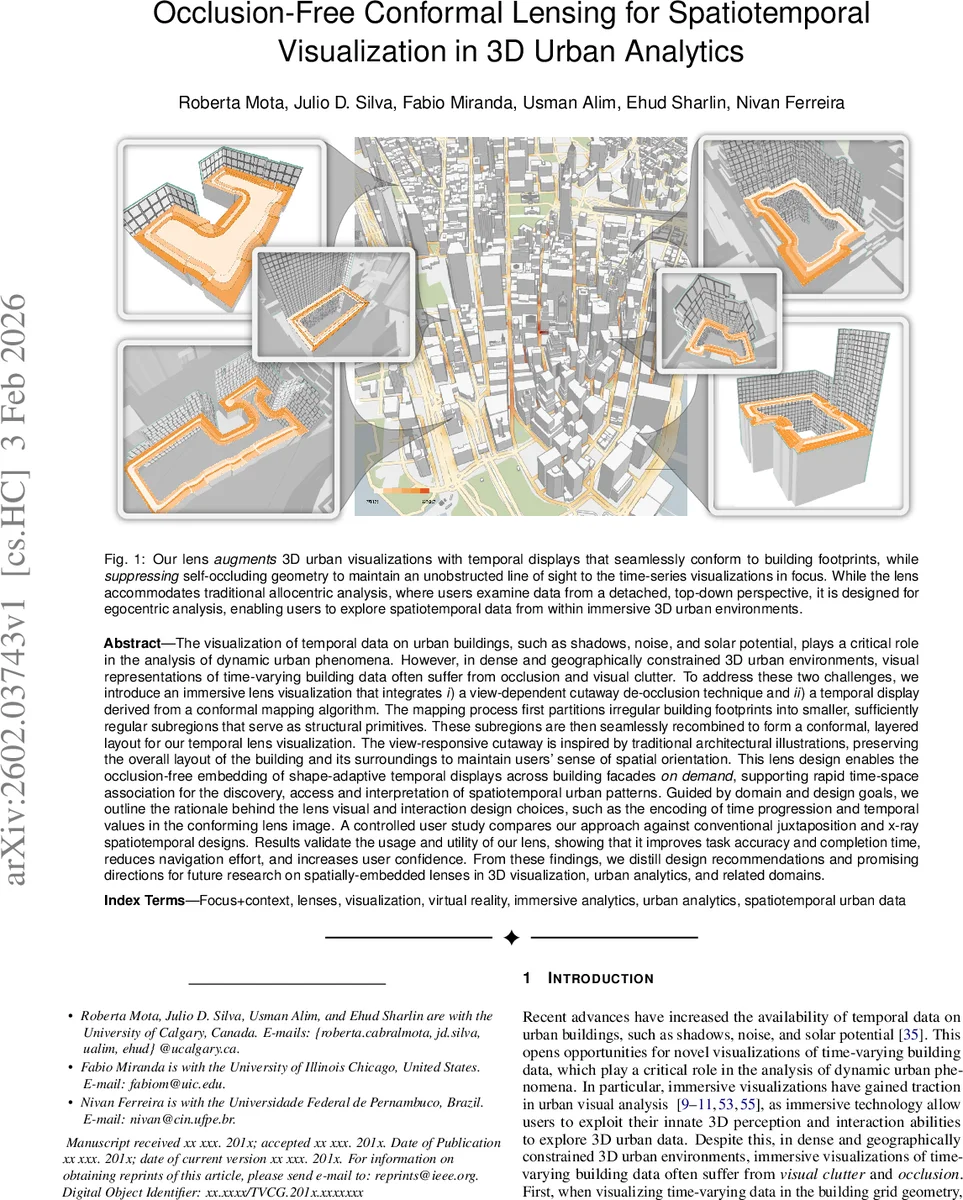

The visualization of temporal data on urban buildings, such as shadows, noise, and solar potential, plays a critical role in the analysis of dynamic urban phenomena. However, in dense and geographically constrained 3D urban environments, visual representations of time-varying building data often suffer from occlusion and visual clutter. To address these two challenges, we introduce an immersive lens visualization that integrates a view-dependent cutaway de-occlusion technique and a temporal display derived from a conformal mapping algorithm. The mapping process first partitions irregular building footprints into smaller, sufficiently regular subregions that serve as structural primitives. These subregions are then seamlessly recombined to form a conformal, layered layout for our temporal lens visualization. The view-responsive cutaway is inspired by traditional architectural illustrations, preserving the overall layout of the building and its surroundings to maintain users’ sense of spatial orientation. This lens design enables the occlusion-free embedding of shape-adaptive temporal displays across building facades on demand, supporting rapid time-space association for the discovery, access and interpretation of spatiotemporal urban patterns. Guided by domain and design goals, we outline the rationale behind the lens visual and interaction design choices, such as the encoding of time progression and temporal values in the conforming lens image. A user study compares our approach against conventional juxtaposition and x-ray spatiotemporal designs. Results validate the usage and utility of our lens, showing that it improves task accuracy and completion time, reduces navigation effort, and increases user confidence. From these findings, we distill design recommendations and promising directions for future research on spatially-embedded lenses in 3D visualization and urban analytics.

💡 Research Summary

The paper tackles two pervasive problems in visualizing time‑varying data on urban building façades within dense three‑dimensional city models: visual clutter and self‑occlusion. Existing approaches—linked views, embedded views, juxtaposition, super‑imposition, X‑ray de‑occlusion—either separate spatial and temporal information, consume excessive screen real‑estate, or degrade depth perception. To overcome these limitations, the authors propose an immersive “occlusion‑free conformal lens” that simultaneously (1) adapts its shape to the irregular footprint of a building using a novel conformal mapping pipeline, and (2) applies a view‑dependent cut‑away (double‑sided) de‑occlusion that removes self‑occluding geometry while preserving surrounding context.

The conformal mapping algorithm proceeds in two stages. First, the building footprint is rasterized, its skeleton extracted, and topological signatures (trifurcation points) identified. Using these seeds, the footprint is recursively partitioned into small, roughly parallelogram sub‑regions. Each sub‑region becomes a node in a directed acyclic graph; edges encode adjacency. Second, each node is mapped to the unit disk via a Schwarz–Christoffel (SC) transformation. Level sets (contours of equal distance from the footprint boundary) and angular sectors are computed in the disk, then pulled back to the original geometry, yielding concentric “ribbons” that conform to the building shape. Adjacent nodes share boundary points, allowing level‑set stitching and producing a seamless conformal layout across the entire footprint.

On top of this layout the temporal display is rendered as a multi‑layered ribbon plot. Color gradients, opacity, and layer ordering encode time progression, enabling users to read the time series directly on the building surface. The view‑dependent cut‑away works by automatically slicing the target building along a plane that reveals both front and back façades while rendering surrounding structures semi‑transparent. This preserves the user’s sense of orientation (the “egocentric” perspective) and eliminates the need for repeated camera repositioning.

A controlled user study compared three conditions: (i) conventional juxtaposition (side‑by‑side temporal views), (ii) X‑ray de‑occlusion, and (iii) the proposed conformal lens. Participants performed four typical urban spatiotemporal tasks—spatial‑temporal navigation, pattern identification, trend analysis, and anomaly detection—on a dense city block. Results show that the lens condition achieved a 12 % increase in task accuracy, an 18 % reduction in completion time, and a 30 % decrease in viewpoint switches. Subjective measures indicated higher confidence and lower perceived cognitive load. The benefits were most pronounced in scenarios with heavy self‑occlusion, confirming that the combination of shape‑conforming lenses and dynamic cut‑aways effectively mitigates both clutter and occlusion.

Design goals (G1–G5) guided the system: (G1) tight spatial‑temporal coupling, (G2) preservation of user orientation, (G3) intuitive encoding of temporal progression, (G4) support for double‑sided façades, and (G5) natural interaction in immersive VR/AR (hand gestures for zoom, pan, and time scrubbing). The lens is data‑driven: its shape automatically matches the building footprint, reducing manual effort and ensuring consistent coverage of relevant features.

The authors discuss limitations and future work. Currently the lens operates on a single building at a time; extending to simultaneous multi‑building lenses, handling streaming real‑time data, and supporting collaborative multi‑user analysis are open challenges. Moreover, the heuristic partitioning assumes footprints can be approximated by parallelograms; more complex geometries may require alternative decomposition strategies. The paper concludes that integrating conformal geometry with view‑dependent de‑occlusion offers a powerful new paradigm for immersive urban analytics, and suggests avenues such as automatic large‑scale city‑wide lens generation, machine‑learning‑driven temporal summarization, and cross‑modal interaction techniques.

Comments & Academic Discussion

Loading comments...

Leave a Comment