Rethinking the Reranker: Boundary-Aware Evidence Selection for Robust Retrieval-Augmented Generation

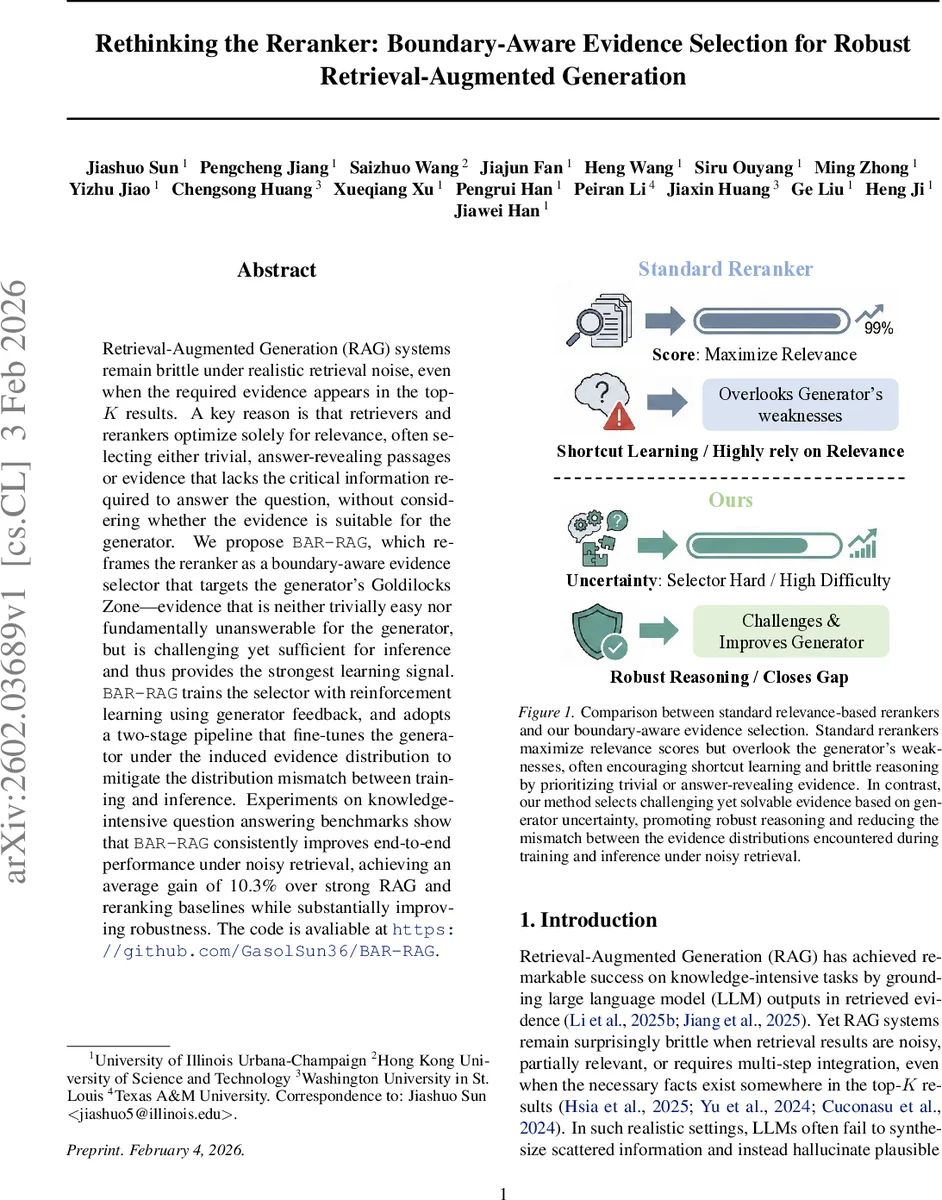

Retrieval-Augmented Generation (RAG) systems remain brittle under realistic retrieval noise, even when the required evidence appears in the top-K results. A key reason is that retrievers and rerankers optimize solely for relevance without considering whether the evidence is suitable for the generator, so they often select either trivial, answer-revealing passages or evidence that lacks the critical information required to answer the question. We propose BAR-RAG, which reframes the reranker as a boundary-aware evidence selector that targets the generator’s Goldilocks Zone: evidence that is neither trivially easy nor fundamentally unanswerable for the generator, but is challenging yet sufficient for inference and thus provides the strongest learning signal. BAR-RAG trains the selector with reinforcement learning using generator feedback, and adopts a two-stage pipeline that fine-tunes the generator under the induced evidence distribution to mitigate the distribution mismatch between training and inference. Experiments on knowledge-intensive question answering benchmarks show that BAR-RAG consistently improves end-to-end performance under noisy retrieval, achieving an average gain of 10.3 percent over strong RAG and reranking baselines while substantially improving robustness. Code is publicly available at https://github.com/GasolSun36/BAR-RAG.

💡 Research Summary

Retrieval‑augmented generation (RAG) has become a cornerstone for leveraging external knowledge in large language models (LLMs). Despite impressive results on curated benchmarks, real‑world deployments suffer from noisy or partially relevant retrieval results: the correct evidence may be present in the top‑K documents, yet the system often fails to synthesize it, leading to hallucinations or trivial answers. The authors identify a fundamental mismatch: traditional rerankers are trained solely to maximize relevance scores, ignoring the downstream generator’s needs. Consequently, rerankers either select passages that directly contain the answer (making the task trivial and encouraging shortcut learning) or choose evidence that lacks the crucial information, rendering the question effectively unsolvable for the generator.

BAR‑RAG reframes the reranker as a “boundary‑aware evidence selector”. The central insight is to target the generator’s “Goldilocks Zone”: evidence that is neither too easy (probability of a correct answer ≈ 1) nor too hard (≈ 0), but lies near a target correctness rate $c$ (e.g., 0.5). To operationalize this, the selector policy $\pi_r$ samples candidate evidence sets $S$ from the initial retrieval pool $C$. For each $S$, the frozen generator $\pi_g$ performs $K$ roll‑outs, producing answers $a_k$. The empirical correctness probability $\hat{p}(S)$ is the fraction of roll‑outs whose reward exceeds a threshold $\delta$. A triangular boundary reward, $R_{\text{bdy}}(S) = \min\{\hat{p}(S)/c,\ (1-\hat{p}(S))/(1-c)\}$, reaches its maximum of 1 when $\hat{p}(S) = c$ and falls linearly to 0 as $\hat{p}(S)$ approaches 0 or 1, encouraging selections that sit at the generator’s competence boundary.
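The correctness estimate and the triangular boundary reward can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes each roll‑out has already been scored with a scalar reward (e.g. token F1 against the gold answer), treats "exceeds δ" as a strict inequality, and uses the hypothetical function names `estimate_correctness` and `boundary_reward`.

```python
from typing import Sequence


def estimate_correctness(rollout_rewards: Sequence[float], delta: float) -> float:
    """Empirical p_hat(S): share of the K roll-outs whose reward strictly exceeds delta."""
    if not rollout_rewards:
        raise ValueError("need at least one roll-out")
    hits = sum(1 for r in rollout_rewards if r > delta)
    return hits / len(rollout_rewards)


def boundary_reward(p_hat: float, c: float = 0.5) -> float:
    """Triangular reward: 1 at p_hat == c, falling linearly to 0 at p_hat == 0 and p_hat == 1."""
    if not 0.0 < c < 1.0:
        raise ValueError("target correctness c must lie strictly between 0 and 1")
    return min(p_hat / c, (1.0 - p_hat) / (1.0 - c))


if __name__ == "__main__":
    # Hypothetical per-roll-out rewards (e.g. token F1) for three evidence sets, K = 4.
    candidates = {
        "trivial": [1.0, 1.0, 0.9, 1.0],
        "boundary": [1.0, 0.2, 0.8, 0.0],
        "unanswerable": [0.0, 0.1, 0.0, 0.0],
    }
    for name, rewards in candidates.items():
        p_hat = estimate_correctness(rewards, delta=0.5)
        print(name, p_hat, boundary_reward(p_hat, c=0.5))
```

In this toy run only the "boundary" set, answered correctly in two of four roll‑outs, earns the full reward of 1; the always‑correct and never‑correct sets both score 0, which is exactly the behavior the triangular shape is designed to produce.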

Four reward components guide the selector: (1) a boundary reward $R_{\text{bdy}}$ for difficulty alignment; (2) a relevance reward $R_{\text{rel}}$, equal to the average initial retrieval score of the selected documents, which keeps the evidence topically appropriate; (3) a format reward $R_{\text{fmt}}$ that checks for well‑formed selector output (correct structure, no duplicate indices); and (4) a count penalty $P_{\text{cnt}}$ that penalizes deviation from a target number of documents $k^*$. The final selector reward is $R(S) = R_{\text{fmt}} \cdot \left(\lambda_{\text{bdy}} R_{\text{bdy}} + \lambda_{\text{rel}} R_{\text{rel}} - P_{\text{cnt}}\right)$, so the format reward acts as a multiplicative gate on the other terms. This composite reward is optimized with Group Relative Policy Optimization (GRPO), which computes each sampled evidence set's advantage by normalizing its reward against the other sets sampled for the same query, stabilizing training without a learned value function.
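The composite selector reward and GRPO's group‑relative normalization can be sketched as follows. The weights `lam_bdy` and `lam_rel`, the target `k_star`, and the linear form of the count penalty (`alpha_cnt * |k - k*|`) are illustrative assumptions, since the functional form of $P_{\text{cnt}}$ and the weight values are not specified here. The advantage function follows the standard GRPO recipe of standardizing rewards within the group of samples drawn for one query.

```python
import statistics
from typing import List, Sequence


def format_reward(selected: Sequence[int], pool_size: int) -> float:
    """R_fmt: 1.0 for a well-formed selection (non-empty, in range, no duplicates), else 0.0."""
    well_formed = (
        len(selected) > 0
        and len(set(selected)) == len(selected)
        and all(0 <= i < pool_size for i in selected)
    )
    return 1.0 if well_formed else 0.0


def selector_reward(
    selected: Sequence[int],
    retrieval_scores: Sequence[float],
    r_bdy: float,
    k_star: int = 3,  # hypothetical target evidence-set size
    lam_bdy: float = 1.0,  # hypothetical weight
    lam_rel: float = 0.5,  # hypothetical weight
    alpha_cnt: float = 0.1,  # hypothetical penalty slope
) -> float:
    """R(S) = R_fmt * (lam_bdy * R_bdy + lam_rel * R_rel - P_cnt)."""
    r_fmt = format_reward(selected, len(retrieval_scores))
    if r_fmt == 0.0:
        return 0.0  # the multiplicative format gate zeroes everything else
    # R_rel: mean initial retrieval score of the selected documents.
    r_rel = sum(retrieval_scores[i] for i in selected) / len(selected)
    # P_cnt: assumed linear penalty on deviation from k_star.
    p_cnt = alpha_cnt * abs(len(selected) - k_star)
    return r_fmt * (lam_bdy * r_bdy + lam_rel * r_rel - p_cnt)


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages: standardize rewards within the group sampled for one query."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    scores = [0.9, 0.7, 0.4, 0.2, 0.1]  # initial retrieval scores for a 5-document pool
    group = [[0, 1, 2], [0, 0, 1], [2, 3, 4, 1]]  # three sampled selections for one query
    bdy = [1.0, 1.0, 0.5]  # boundary rewards from the generator roll-outs
    rewards = [selector_reward(s, scores, b) for s, b in zip(group, bdy)]
    print(rewards, group_relative_advantages(rewards))
```

Because `R_fmt` multiplies the rest of the reward, the selection with a duplicate index receives 0 no matter how relevant its documents are, so its group‑relative advantage is negative whenever the group mean is positive.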

Training proceeds in two stages. Stage 1 focuses on the selector: the generator is frozen, and the selector learns via reinforcement learning to produce evidence sets that maximize the composite reward, thereby aligning with the generator’s current competence boundary. Stage 2 freezes the selector and fine‑tunes the generator on the evidence distribution induced by the selector. The generator receives a composite reward $R_g(a)$ comprising (i) a format component (zero reward if output tags are malformed), (ii) an accuracy component $R_{\text{acc}}$ that blends token‑level F1 and exact match (EM) against gold answers, and (iii) a citation component $R_{\text{cite}}$ that rewards appropriate citation of a target number of documents within a
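A sketch of the Stage 2 generator reward follows. The accuracy term uses SQuAD‑style answer normalization for EM and token‑level F1, a common convention on these benchmarks. Everything else is a labeled assumption for illustration: the `<answer>...</answer>` tag format, the `[i]` citation markers, the blend weight `beta`, the citation weight `w_cite`, the additive combination of terms, and the hypothetical citation rule that gives full credit only when exactly `target_cites` distinct documents are cited.

```python
import re
import string
from collections import Counter
from typing import Optional, Sequence

PUNCT = set(string.punctuation)


def normalize(text: str) -> str:
    """SQuAD-style normalization: lowercase, strip punctuation and articles, collapse whitespace."""
    text = "".join(ch for ch in text.lower() if ch not in PUNCT)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(pred: str, golds: Sequence[str]) -> float:
    return float(any(normalize(pred) == normalize(g) for g in golds))


def token_f1(pred: str, golds: Sequence[str]) -> float:
    """Best token-level F1 of the prediction against any gold answer."""
    pred_toks = normalize(pred).split()
    best = 0.0
    for g in golds:
        gold_toks = normalize(g).split()
        overlap = sum((Counter(pred_toks) & Counter(gold_toks)).values())
        if overlap == 0:
            continue
        precision, recall = overlap / len(pred_toks), overlap / len(gold_toks)
        best = max(best, 2 * precision * recall / (precision + recall))
    return best


def extract_answer(output: str) -> Optional[str]:
    """Hypothetical output format: the final answer is wrapped in <answer>...</answer>."""
    m = re.search(r"<answer>(.*?)</answer>", output, flags=re.DOTALL)
    return m.group(1).strip() if m else None


def generator_reward(
    output: str,
    golds: Sequence[str],
    target_cites: int = 2,  # hypothetical target number of cited documents
    beta: float = 0.5,  # hypothetical F1/EM blend weight
    w_cite: float = 0.2,  # hypothetical citation weight
) -> float:
    answer = extract_answer(output)
    if answer is None:
        return 0.0  # format gate: malformed tags give zero reward
    r_acc = beta * token_f1(answer, golds) + (1 - beta) * exact_match(answer, golds)
    cited = set(re.findall(r"\[(\d+)\]", output))  # hypothetical [i] citation markers
    r_cite = 1.0 if len(cited) == target_cites else 0.0  # hypothetical exact-count rule
    return r_acc + w_cite * r_cite
```

Blending F1 with EM gives the generator partial credit for near‑miss answers while still rewarding exact matches most.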

Experiments span a diverse suite of knowledge‑intensive QA benchmarks: Natural Questions (NQ), TriviaQA, PopQA, HotpotQA, and 2Wiki, covering both single‑hop and multi‑hop reasoning. Three backbone LLMs are evaluated: Qwen‑2.5‑3B‑Instruct, Qwen‑2.5‑7B‑Instruct, and LLaMA‑3.1‑8B‑Instruct. Baselines include vanilla RAG, RAG with a standard relevance‑based reranker, IRCoT, and RAG with supervised fine‑tuning (RAG SFT). Performance is measured by exact match (EM) and F1, with particular attention to the gap between retrieval recall (Recall@5/10) and final QA accuracy.
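The recall‑accuracy gap can be made concrete with a small sketch. Here Recall@k follows the answer‑containment convention common in open‑domain QA (a question counts as a hit if any of its top‑k passages contains a gold answer string); whether the paper uses this exact definition is an assumption, and `recall_accuracy_gap` is a hypothetical helper.

```python
from typing import Sequence


def hit_at_k(passages: Sequence[str], golds: Sequence[str], k: int) -> bool:
    """True if any of the top-k passages contains a gold answer string (case-insensitive)."""
    top = [p.lower() for p in passages[:k]]
    return any(g.lower() in p for p in top for g in golds)


def recall_at_k(
    all_passages: Sequence[Sequence[str]], all_golds: Sequence[Sequence[str]], k: int
) -> float:
    """Fraction of questions with at least one answer-bearing passage in the top k."""
    hits = [hit_at_k(p, g, k) for p, g in zip(all_passages, all_golds)]
    return sum(hits) / len(hits)


def recall_accuracy_gap(
    all_passages: Sequence[Sequence[str]],
    all_golds: Sequence[Sequence[str]],
    em_scores: Sequence[float],
    k: int = 5,
) -> float:
    """Hypothetical helper: Recall@k minus mean EM over the same questions."""
    return recall_at_k(all_passages, all_golds, k) - sum(em_scores) / len(em_scores)


if __name__ == "__main__":
    passages = [
        ["Paris is the capital of France."],
        ["Berlin is in Germany."],
        ["Everest is 8,849 m tall."],
    ]
    golds = [["Paris"], ["Madrid"], ["8,849 m"]]
    em = [1.0, 0.0, 0.0]  # hypothetical EM of the generator on each question
    print(recall_at_k(passages, golds, k=5), recall_accuracy_gap(passages, golds, em))
```

In the toy data the third question has its answer in the retrieved passage but is still answered incorrectly, so Recall@5 is 2/3 while EM is 1/3; that kind of gap is what boundary‑aware selection aims to close.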

BAR‑RAG consistently outperforms all baselines, delivering an average gain of 10.3% in QA accuracy over strong RAG and reranking baselines. Notably, the method narrows the recall‑accuracy gap: even when recall is high, standard RAG still suffers from low accuracy because of poor evidence quality, whereas BAR‑RAG’s boundary‑aware selection favors evidence that actually contributes to reasoning, translating recall into answer quality. Ablation studies reveal that (1) training‑time filtering of trivially easy or fundamentally unsolvable queries is crucial, as removing it drops performance by several points; (2) omitting either Stage 1 (selector training) or Stage 2 (generator fine‑tuning) reduces accuracy by 3–5 pp, confirming that both phases are necessary; (3) excluding either the boundary reward or the relevance reward harms performance, underscoring their complementary roles; and (4) removing the citation reward from the generator weakens evidence attribution and slightly lowers overall accuracy.

The analysis shows that the selector learns to choose evidence sets whose estimated correctness probability $\hat{p}(S)$ clusters around the target $c$ (≈ 0.5), encouraging the generator to engage in genuine multi‑step inference rather than copying an answer straight from the evidence. The two‑stage pipeline mitigates the train‑test mismatch that affects conventional RAG pipelines: during fine‑tuning, the generator is exposed to the same selector‑induced, noisy, and challenging evidence it will encounter at inference time.

In summary, BAR‑RAG makes three key contributions: (1) recasting the reranker’s objective from pure relevance maximization to generator‑centric evidence selection; (2) introducing a reinforcement‑learning framework that explicitly models the generator’s competence boundary and integrates relevance, format, and size constraints; and (3) demonstrating that a two‑stage training regime (selector → generator) substantially improves robustness to retrieval noise across multiple datasets and model sizes. The authors release code and data at https://github.com/GasolSun36/BAR-RAG, facilitating reproducibility and future extensions such as sentence‑level selection, dynamic adjustment of the target correctness c, and multimodal evidence handling.
