Use Graph When It Needs: Efficiently and Adaptively Integrating Retrieval-Augmented Generation with Graphs

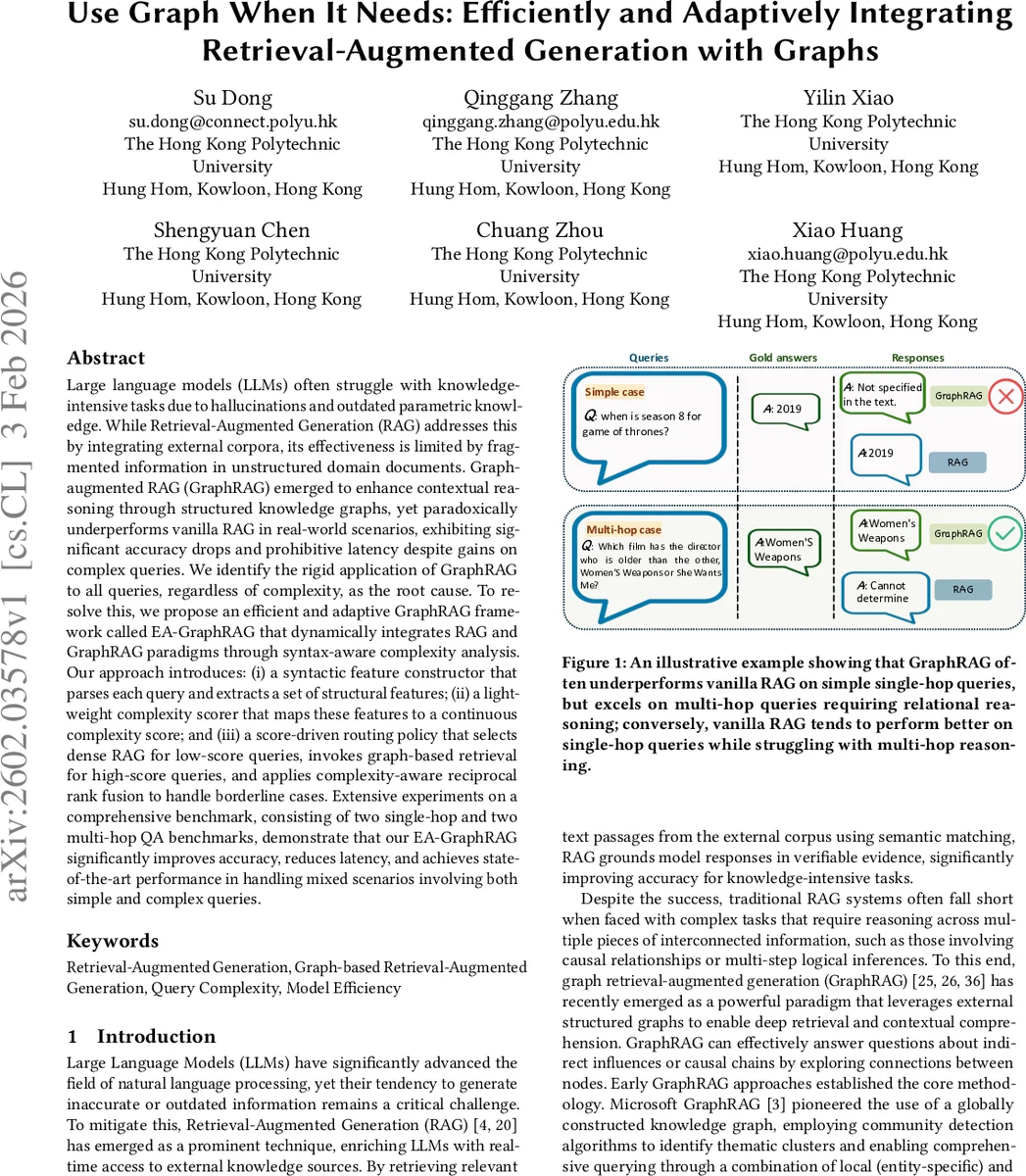

Large language models (LLMs) often struggle with knowledge-intensive tasks due to hallucinations and outdated parametric knowledge. While Retrieval-Augmented Generation (RAG) addresses this by integrating external corpora, its effectiveness is limited by fragmented information in unstructured domain documents. Graph-augmented RAG (GraphRAG) emerged to enhance contextual reasoning through structured knowledge graphs, yet paradoxically underperforms vanilla RAG in real-world scenarios, exhibiting significant accuracy drops and prohibitive latency despite gains on complex queries. We identify the rigid application of GraphRAG to all queries, regardless of complexity, as the root cause. To resolve this, we propose an efficient and adaptive GraphRAG framework called EA-GraphRAG that dynamically integrates RAG and GraphRAG paradigms through syntax-aware complexity analysis. Our approach introduces: (i) a syntactic feature constructor that parses each query and extracts a set of structural features; (ii) a lightweight complexity scorer that maps these features to a continuous complexity score; and (iii) a score-driven routing policy that selects dense RAG for low-score queries, invokes graph-based retrieval for high-score queries, and applies complexity-aware reciprocal rank fusion to handle borderline cases. Extensive experiments on a comprehensive benchmark, consisting of two single-hop and two multi-hop QA benchmarks, demonstrate that our EA-GraphRAG significantly improves accuracy, reduces latency, and achieves state-of-the-art performance in handling mixed scenarios involving both simple and complex queries.

💡 Research Summary

The paper addresses a critical limitation of current Retrieval‑Augmented Generation (RAG) and Graph‑augmented RAG (GraphRAG) systems: each applies a single retrieval strategy to every query, regardless of how much reasoning the query requires. GraphRAG excels at multi‑hop, relational reasoning, but it incurs substantial latency and often underperforms dense RAG on simple, single‑hop questions. To resolve this, the authors propose EA‑GraphRAG, an Efficient and Adaptive GraphRAG framework that chooses among dense retrieval, graph‑based retrieval, and a hybrid fusion of the two, based on a syntactic complexity score computed for each query.

The framework consists of three main components. First, a syntactic feature constructor parses each input question with constituency and dependency parsers (Stanza and spaCy). It extracts nine basic syntactic units (words, sentences, clauses, dependent clauses, T‑units, complex T‑units, coordinate phrases, complex nominals, and verb phrases) via Tregex patterns, then computes ratio‑based, depth‑based, and interaction features such as mean clause length, subordination ratios, dependency distance statistics, entity density, lexical diversity, and tree depth and width. Second, a lightweight complexity scorer, an MLP with feature attention and residual connections, maps the high‑dimensional feature vector ϕ(q) to a continuous score s(q) ∈ (0,1). Third, a score‑driven routing policy uses two thresholds, τ_L and τ_H. If s(q) < τ_L, the system invokes a dense retriever (standard RAG) and returns the top‑k passages. If s(q) ≥ τ_H, it activates a graph‑based retriever that traverses a pre‑built heterogeneous knowledge graph (nodes = entities + passages; edges = OpenIE triples, occurrence links, and synonym links). For intermediate scores (τ_L ≤ s(q) < τ_H), the system merges the dense and graph retrieval results using weighted Reciprocal Rank Fusion (wRRF), with weights derived directly from s(q) and 1‑s(q). The final answer is generated by a fixed LLM conditioned on the selected context.
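The routing policy and the fusion step can be illustrated with a minimal sketch. Several details here are assumptions rather than the paper's implementation: the threshold values 0.3 and 0.7, the smoothing constant 60 (a common RRF default), the function names, and the choice to give the graph ranking weight s(q) and the dense ranking weight 1‑s(q), which the summary does not spell out.

```python
from typing import Dict, List, Tuple

RRF_K = 60  # common RRF smoothing constant; assumed, not taken from the paper


def weighted_rrf(dense: List[str], graph: List[str], score: float,
                 top_k: int = 5) -> List[str]:
    """Fuse two ranked lists of passage ids with weighted reciprocal rank fusion.

    Assumption: the graph ranking is weighted by s(q), the dense one by 1 - s(q).
    """
    fused: Dict[str, float] = {}
    for weight, ranking in ((1.0 - score, dense), (score, graph)):
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + weight / (RRF_K + rank)
    # Sort by fused score (descending); break ties by id for determinism.
    return sorted(fused, key=lambda d: (-fused[d], d))[:top_k]


def route(score: float, dense: List[str], graph: List[str],
          tau_low: float = 0.3, tau_high: float = 0.7,
          top_k: int = 5) -> Tuple[str, List[str]]:
    """Pick a retrieval path from the complexity score s(q).

    `dense` and `graph` are precomputed rankings here only to keep the sketch
    self-contained; a real system would call just the retriever(s) it needs.
    """
    if score < tau_low:
        return "dense", dense[:top_k]
    if score >= tau_high:
        return "graph", graph[:top_k]
    return "hybrid", weighted_rrf(dense, graph, score, top_k)
```

Because the hybrid branch is the only one that needs both rankings, a query routed to the dense path never pays the graph traversal cost, which is where the latency saving on simple queries would come from.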

The graph index follows the design of prior GraphRAG works: entities are extracted via a two‑stage LLM prompting pipeline, and the graph includes three edge types (relation, occurrence, synonym). This ensures that EA‑GraphRAG can reuse existing graph construction pipelines without additional overhead.
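As a rough illustration of this index layout, the sketch below stores entity and passage nodes in one adjacency structure with the three edge types named above. The class name, method names, and the breadth-first lookup are hypothetical; the LLM-based extraction of triples is not shown and is replaced by hand-written triples.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


@dataclass
class HeteroGraph:
    """Hypothetical container: entity and passage nodes, three edge types."""
    # node -> set of (neighbor, edge_type, label)
    adj: Dict[str, Set[Tuple[str, str, str]]] = field(
        default_factory=lambda: defaultdict(set))
    passages: Set[str] = field(default_factory=set)

    def add_edge(self, u: str, v: str, edge_type: str, label: str = "") -> None:
        # Edges are stored in both directions so traversal is undirected.
        self.adj[u].add((v, edge_type, label))
        self.adj[v].add((u, edge_type, label))

    def add_passage(self, passage_id: str, triples: List[Triple]) -> None:
        """Register a passage and the triples extracted from it."""
        self.passages.add(passage_id)
        for subj, rel, obj in triples:
            self.add_edge(subj, obj, "relation", rel)
            self.add_edge(subj, passage_id, "occurrence")
            self.add_edge(obj, passage_id, "occurrence")

    def add_synonym(self, a: str, b: str) -> None:
        self.add_edge(a, b, "synonym")

    def passages_within(self, seed: str, hops: int) -> List[str]:
        """Breadth-first search: passages reachable within `hops` edges."""
        seen = {seed}
        frontier = deque([(seed, 0)])
        found: List[str] = []
        while frontier:
            node, dist = frontier.popleft()
            if node in self.passages:
                found.append(node)
                continue  # passages are treated as leaves in this sketch
            if dist == hops:
                continue
            for neighbor, _, _ in sorted(self.adj[node]):
                if neighbor not in seen:
                    seen.add(neighbor)
                    frontier.append((neighbor, dist + 1))
        return found


g = HeteroGraph()
g.add_passage("p1", [("Marie Curie", "born in", "Warsaw")])
g.add_passage("p2", [("Warsaw", "capital of", "Poland")])
g.add_synonym("Marie Curie", "Maria Sklodowska")
```

In this toy graph, a one-hop lookup from "Marie Curie" reaches only `p1`, while a two-hop lookup also reaches `p2` through the shared entity "Warsaw", which is the kind of multi-hop link that dense retrieval alone can miss.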

Experiments were conducted on a comprehensive benchmark comprising two single‑hop QA datasets (Natural Questions, TriviaQA) and two multi‑hop QA datasets (HotpotQA, ComplexWebQuestions). On the overall mixed test set, EA‑GraphRAG achieved a consistent accuracy gain of 7–9 percentage points over vanilla GraphRAG and 3–5 points over dense RAG. Latency remained comparable to dense RAG, and in high‑complexity scenarios the adaptive routing reduced response time by over 30% relative to pure GraphRAG. Ablation studies confirmed that the syntactic complexity features, the MLP scorer, and the dual‑threshold routing each contribute significantly to the performance gains.

In summary, EA‑GraphRAG introduces a “complexity‑aware adaptive routing” paradigm that reconciles the depth of graph‑based reasoning with the efficiency of dense retrieval. By using inexpensive syntactic analysis to estimate query difficulty, the system automatically selects the most appropriate retrieval path, improving both accuracy and runtime efficiency in realistic mixed‑query environments. The approach is lightweight, scalable, and readily integrable with existing RAG or GraphRAG pipelines, making it a promising direction for production‑grade knowledge‑intensive AI services. Future work may explore richer complexity estimators (e.g., meta‑learning or LLM prompting) or dynamic graph restructuring based on the predicted complexity.
