Asymmetric Hierarchical Anchoring for Audio-Visual Joint Representation: Resolving Information Allocation Ambiguity for Robust Cross-Modal Generalization

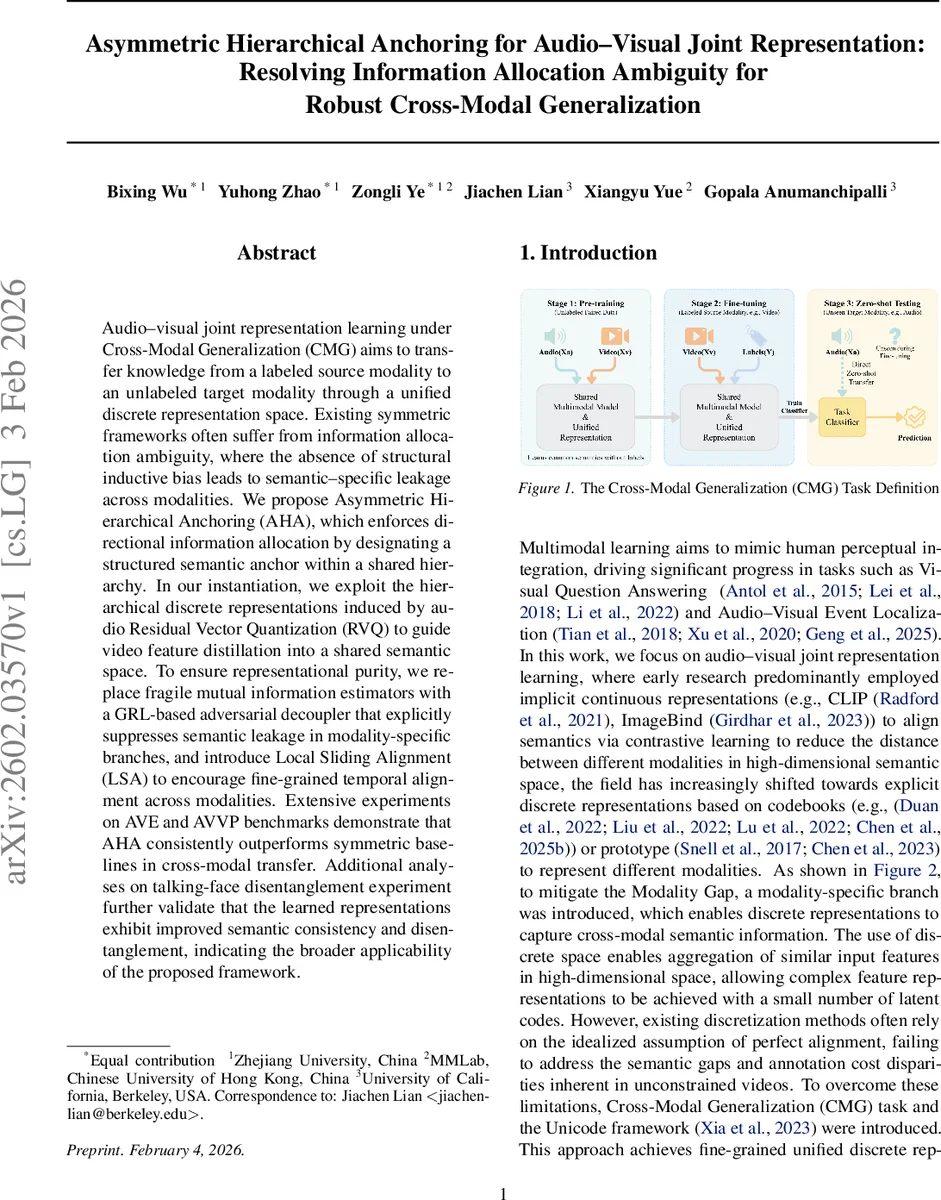

Audio-visual joint representation learning under Cross-Modal Generalization (CMG) aims to transfer knowledge from a labeled source modality to an unlabeled target modality through a unified discrete representation space. Existing symmetric frameworks often suffer from information allocation ambiguity, where the absence of structural inductive bias leads to semantic-specific leakage across modalities. We propose Asymmetric Hierarchical Anchoring (AHA), which enforces directional information allocation by designating a structured semantic anchor within a shared hierarchy. In our instantiation, we exploit the hierarchical discrete representations induced by audio Residual Vector Quantization (RVQ) to guide video feature distillation into a shared semantic space. To ensure representational purity, we replace fragile mutual information estimators with a GRL-based adversarial decoupler that explicitly suppresses semantic leakage in modality-specific branches, and introduce Local Sliding Alignment (LSA) to encourage fine-grained temporal alignment across modalities. Extensive experiments on AVE and AVVP benchmarks demonstrate that AHA consistently outperforms symmetric baselines in cross-modal transfer. Additional analyses on talking-face disentanglement experiment further validate that the learned representations exhibit improved semantic consistency and disentanglement, indicating the broader applicability of the proposed framework.

💡 Research Summary

The paper tackles the problem of Cross‑Modal Generalization (CMG) in audio‑visual learning, where a model trained on a labeled source modality must be transferred to an unlabeled target modality using a shared discrete representation space. Existing symmetric frameworks suffer from “information allocation ambiguity”: without structural bias, semantic information tends to leak into modality‑specific branches, causing codebook collapse and poor disentanglement.

To resolve this, the authors propose Asymmetric Hierarchical Anchoring (AHA). The key idea is to treat the hierarchical Residual Vector Quantization (RVQ) of audio as a structural semantic anchor. RVQ naturally decomposes a signal into multiple layers; the first k layers capture high‑level semantics while later layers encode residual, modality‑specific details. By designating the first k layers as a shared codebook, both audio and video semantic encoders are forced to map their high‑level representations onto the same discrete tokens. This asymmetric design ensures that the shared space contains only pure semantics, while modality‑specific information is pushed to higher‑level residual layers.

Two auxiliary mechanisms reinforce the anchor. First, instead of unstable mutual‑information estimators (e.g., CLUB), the paper employs a Gradient Reversal Layer (GRL)‑based adversarial decoupler. A discriminator tries to distinguish paired (video‑specific feature, semantic token) from mismatched pairs, while the video‑specific encoder is trained via GRL to fool the discriminator, effectively removing semantic content from the specific branch. The authors further introduce velocity‑aware anchor sampling, giving higher probability to time steps where the semantic token changes rapidly, thus focusing the adversarial game on dynamic content.

Second, Local Sliding Alignment (LSA) replaces global alignment with a windowed matching scheme. For each time step t, a local search window Ωₜ (|t‑j| ≤ R) is defined, and a positive tolerance set Pₜ (|t‑j| ≤ nₚₒₛ) specifies acceptable matches. A soft target distribution is uniform over Pₜ, and the model predicts alignment probabilities via a masked softmax over the audio and video units within Ωₜ. A bidirectional cross‑entropy loss encourages both audio‑to‑video and video‑to‑audio alignment, handling temporal asynchrony common in unconstrained videos.

The overall training objective combines (i) reconstruction losses for audio and video decoders, (ii) the LSA alignment loss, (iii) the GRL adversarial loss, and (iv) a Cross‑Modal Contrastive Predictive Coding (Cross‑CPC) loss that further refines fine‑grained temporal correspondence.

Experiments on two standard benchmarks—Audio‑Visual Event (AVE) localization and Audio‑Visual Video Parsing (AVVP)—show that AHA consistently outperforms symmetric baselines. In zero‑shot transfer (unlabeled target modality), the gain reaches 4–5 percentage points in mean average precision (mAP) or accuracy. An additional talking‑face disentanglement study demonstrates that the audio anchor yields clean separation between lip motion and background visual cues, improving both quantitative metrics (L1 distance, SSIM) and qualitative visualizations.

Ablation studies vary the number of shared RVQ layers (k), the GRL strength (λ), and the LSA window size (R). Results indicate that a single shared layer (k = 1) offers the best trade‑off between semantic purity and reconstruction fidelity, while moderate λ and R values maximize adversarial decoupling without destabilizing training. t‑SNE visualizations confirm that audio and video tokens cluster together in the shared space, whereas modality‑specific features occupy distinct regions.

In summary, AHA introduces three synergistic components—(1) a hierarchical audio‑driven semantic anchor, (2) GRL‑based adversarial disentanglement, and (3) local sliding temporal alignment—to eliminate information leakage and achieve robust cross‑modal generalization. The approach is broadly applicable to multimodal generation, controllable synthesis, and unsupervised cross‑modal learning, and opens avenues for extending the asymmetric anchoring principle to additional modalities such as text or depth.

Comments & Academic Discussion

Loading comments...

Leave a Comment