AffordanceGrasp-R1:Leveraging Reasoning-Based Affordance Segmentation with Reinforcement Learning for Robotic Grasping

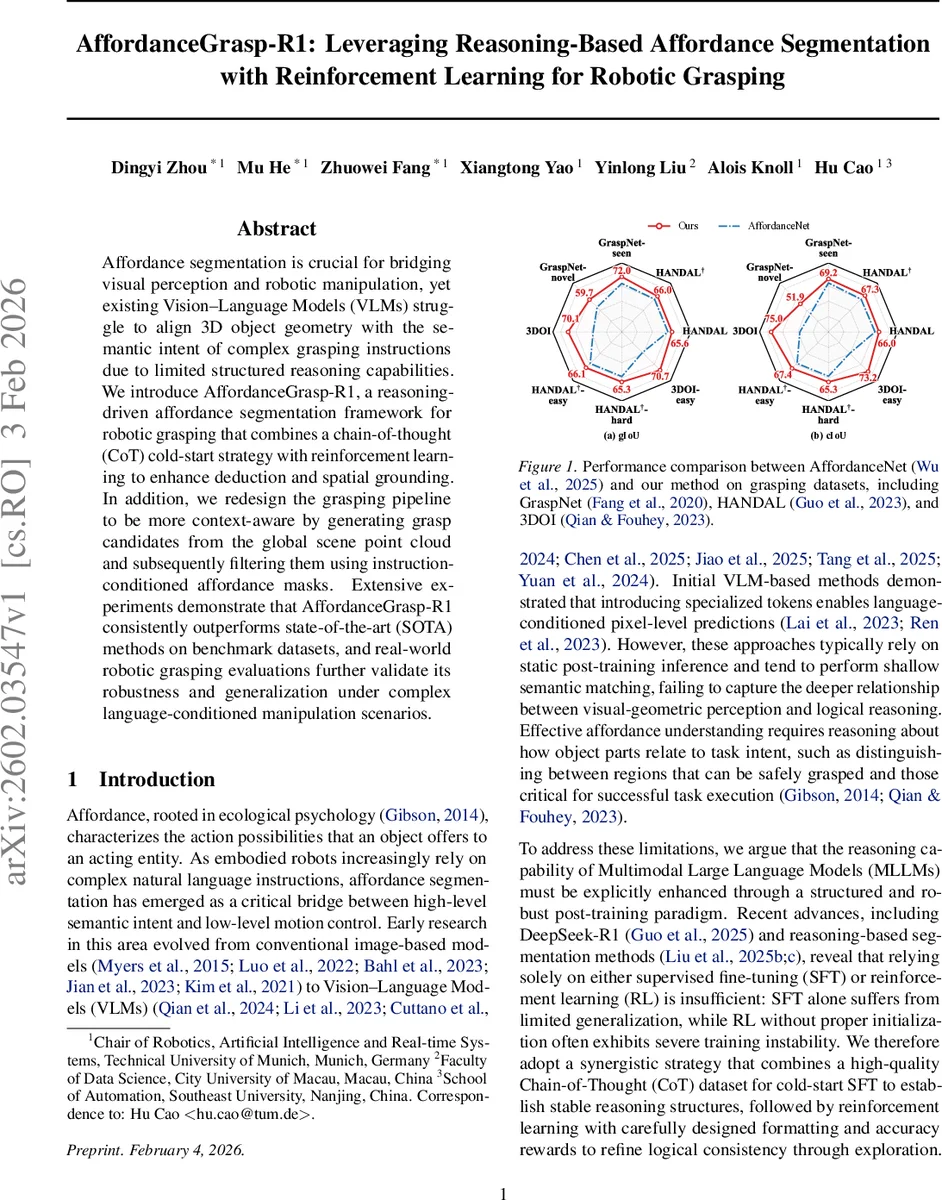

We introduce AffordanceGrasp-R1, a reasoning-driven affordance segmentation framework for robotic grasping that combines a chain-of-thought (CoT) cold-start strategy with reinforcement learning to enhance deduction and spatial grounding. In addition, we redesign the grasping pipeline to be more context-aware by generating grasp candidates from the global scene point cloud and subsequently filtering them using instruction-conditioned affordance masks. Extensive experiments demonstrate that AffordanceGrasp-R1 consistently outperforms state-of-the-art (SOTA) methods on benchmark datasets, and real-world robotic grasping evaluations further validate its robustness and generalization under complex language-conditioned manipulation scenarios.

💡 Research Summary

AffordanceGrasp‑R1 tackles the longstanding gap between language‑conditioned affordance perception and 3‑D grasp execution in robotic manipulation. Existing vision‑language models (VLMs) struggle to align complex natural‑language instructions with the geometry of objects, largely because they lack structured reasoning capabilities and discard global scene context when generating grasp candidates. The proposed system introduces a three‑module architecture—an MLLM for multimodal reasoning, a segmentation module based on SAM‑2, and a grasping module that operates on the full‑scene point cloud.

The MLLM (Qwen2.5‑VL‑7B) is post‑trained in two stages. First, a “Chain‑of‑Thought (CoT) cold‑start” stage uses a curated dataset of 3,313 samples containing high‑quality reasoning traces. Supervised fine‑tuning (SFT) on this data endows the model with stable logical chains, mitigating the cold‑start problem that typically hampers pure reinforcement learning. Second, a reinforcement‑learning (RL) stage employs 39,087 samples and the Group Relative Policy Optimization (GRPO) algorithm, which forgoes a learned value function and instead normalizes rewards within each batch to compute advantages. The reward function explicitly measures logical consistency, reliance on visual evidence, and completeness of the inference steps, ensuring that the model’s output aligns with both language semantics and visual cues.

Instead of having the MLLM directly output pixel‑wise masks (a source of noise), it generates structured spatial prompts: a bounding box and a central point derived from the original affordance mask. These prompts are fed to a LoRA‑fine‑tuned SAM‑2, which produces high‑fidelity affordance masks. This decoupled design leverages the strong segmentation capabilities of SAM while allowing the MLLM to focus solely on reasoning.

Crucially, the grasping pipeline preserves the global scene point cloud. Candidate grasps are generated by a pre‑trained GA‑Grasp model on the entire point cloud, after which the affordance mask filters out unsuitable candidates. This approach maintains consistency with the grasp model’s pre‑training distribution and retains contextual information that would otherwise be lost if the point cloud were cropped to the mask (as in prior AffordanceNet).

The authors built a post‑training dataset on top of RAGNet, extracting bounding boxes and inscribed‑circle centers from each mask, discarding samples with IoU < 0.6 between the reconstructed mask and the ground truth. CoT annotations were generated automatically using a visual‑reasoning model (Qwen‑QVQ‑72B‑Preview) and distilled into concise reasoning rules, which were then used to synthesize new QA pairs with CoT. An automatic quality check evaluates each CoT on the three reward dimensions, correcting or regenerating low‑quality samples.

Extensive evaluation on three public grasp datasets (GraspNet, HANDAL, 3DOI) shows that AffordanceGrasp‑R1 outperforms the current state‑of‑the‑art in both generalized IoU (gIoU) and class‑specific IoU (cIoU). On the RAGNet benchmark, it achieves a 12 % absolute gain over the previous best method, AffordanceNet. Real‑world robot experiments further validate the approach: under “easy” language commands (e.g., “pick up the cup”) the system attains an 80 % success rate, while under “hard” commands requiring nuanced reasoning (e.g., “grasp the handle of the cup and pour water”) it reaches 72 % success, all in a zero‑shot setting without task‑specific fine‑tuning.

In summary, AffordanceGrasp‑R1 makes three key contributions: (1) a high‑quality CoT dataset that stabilizes MLLM reasoning; (2) a synergistic SFT + RL post‑training pipeline that enhances logical deduction and spatial grounding; and (3) a redesigned grasping pipeline that integrates instruction‑conditioned affordance masks with the global scene point cloud, preserving context and improving robustness. The work demonstrates that combining structured reasoning, reinforcement learning, and decoupled segmentation can bridge the semantic gap between language instructions and precise 3‑D robot actions, opening avenues for more adaptable and intelligent manipulation systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment