Decoupling Skeleton and Flesh: Efficient Multimodal Table Reasoning with Disentangled Alignment and Structure-aware Guidance

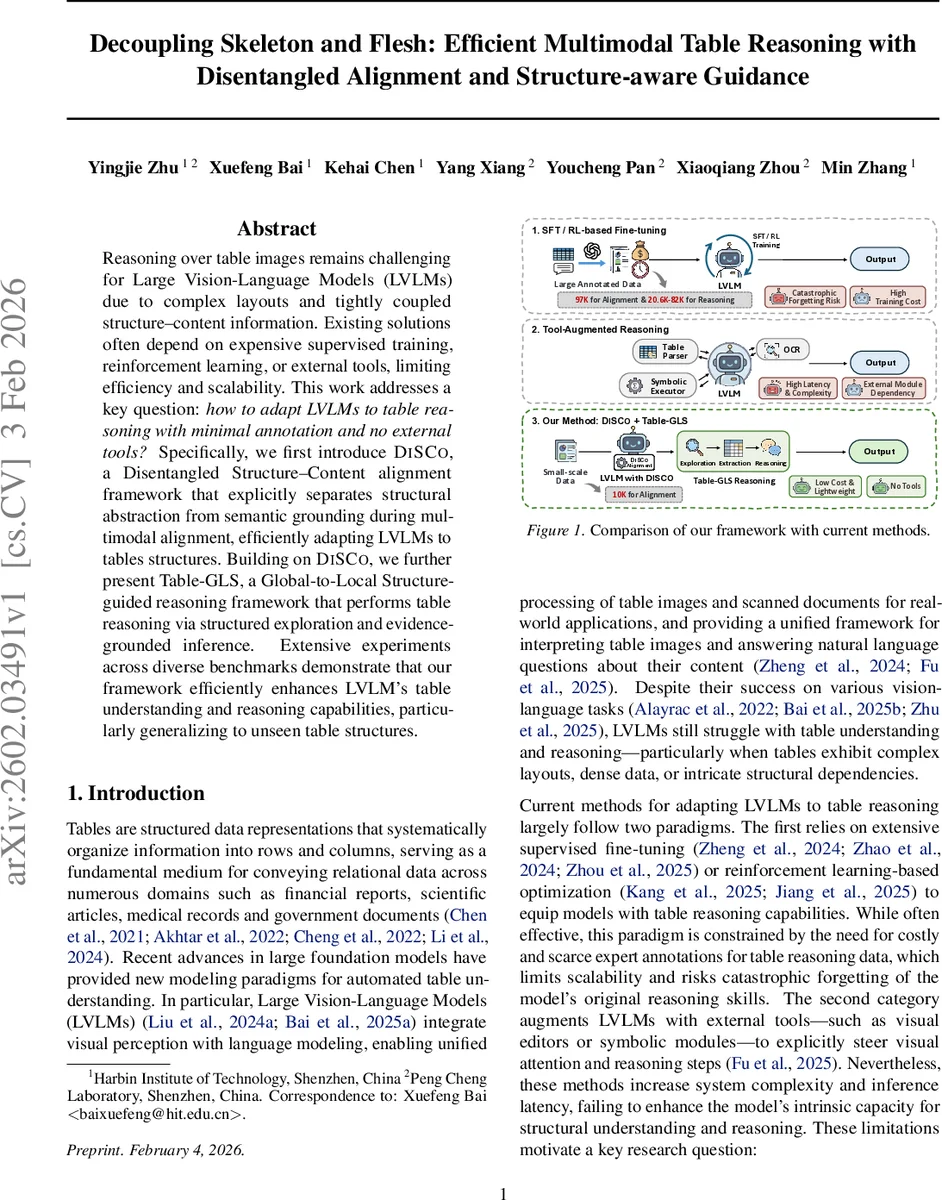

Reasoning over table images remains challenging for Large Vision-Language Models (LVLMs) due to complex layouts and tightly coupled structure-content information. Existing solutions often depend on expensive supervised training, reinforcement learning, or external tools, limiting efficiency and scalability. This work addresses a key question: how to adapt LVLMs to table reasoning with minimal annotation and no external tools? Specifically, we first introduce DiSCo, a Disentangled Structure-Content alignment framework that explicitly separates structural abstraction from semantic grounding during multimodal alignment, efficiently adapting LVLMs to tables structures. Building on DiSCo, we further present Table-GLS, a Global-to-Local Structure-guided reasoning framework that performs table reasoning via structured exploration and evidence-grounded inference. Extensive experiments across diverse benchmarks demonstrate that our framework efficiently enhances LVLM’s table understanding and reasoning capabilities, particularly generalizing to unseen table structures.

💡 Research Summary

This paper tackles the problem of adapting large vision‑language models (LVLMs) to reason over table images without relying on costly supervised fine‑tuning, reinforcement learning, or external tools such as OCR or visual editors. The authors propose a two‑stage framework: (1) DiSCo (Disentangled Structure‑Content Alignment) and (2) Table‑GLS (Global‑to‑Local Structure‑guided reasoning).

DiSCo addresses the entanglement of table layout and cell semantics that is common in existing LVLM‑based table alignment methods. It splits the alignment task into two complementary objectives. For structure alignment, all cell contents in a textual representation (HTML, Markdown, LaTeX) are replaced with a placeholder token, preserving only layout tokens (row/column delimiters, header hierarchy, span markers). The LVLM is trained to predict this anonymized structure sequence conditioned on the table image, forcing it to learn pure visual layout cues. For content alignment, two levels are introduced: a global content task where the model generates a concise description of the whole table (number of rows/columns and a brief summary of each), and a local content task where the model is queried with a specific row‑column index and must output the exact cell text. By separating these objectives, DiSCo enables the LVLM to reuse its existing semantic knowledge while efficiently learning structural representations with as few as 10 K annotated table images.

Building on the disentangled representations, Table‑GLS provides a training‑free inference pipeline that guides the LVLM through three steps. First, Global Structure Exploration: given a question and the table image, the model is prompted to identify which rows and columns are relevant, producing a short reasoning trace and lists of target row labels and column headers. Second, Self‑Refined Sub‑table Extraction: the model self‑checks the adequacy of the identified rows/columns, revises the plan if needed, and extracts a minimal sub‑table expressed in a semi‑structured textual format. This verification step mitigates error propagation from the first stage. Third, Evidence‑Grounded Reasoning: the model answers the original question using only the extracted sub‑table as evidence, ensuring that the final answer is grounded in verified visual content. No additional fine‑tuning or external modules are required, and the computational overhead remains low.

The authors evaluate the combined DiSCo + Table‑GLS pipeline on 21 diverse table understanding and reasoning benchmarks, covering tasks such as cell‑level QA, aggregation, logical inference, and multimodal document comprehension. Compared with strong baselines that rely on supervised fine‑tuning (SFT) or reinforcement learning (RL), the proposed approach achieves consistent improvements of 3–5 percentage points in accuracy, with even larger gains (7–9 pp) on “unseen layout” splits where test tables have novel structural patterns. Importantly, the performance boost is obtained with only 10 K table images (≈97 K structure alignment samples and 20.6 K–82 K reasoning samples), demonstrating high data efficiency. Ablation studies confirm that both the disentangled alignment and the global‑to‑local reasoning contribute additively to the final performance.

In summary, the paper introduces (1) a novel disentangled alignment strategy that cleanly separates visual layout learning from semantic grounding, and (2) a lightweight, tool‑free reasoning framework that leverages the learned structure to guide evidence‑based answer generation. Together they enable LVLMs to handle complex, real‑world table images with minimal annotation cost and strong generalization to new table designs, offering a practical pathway for deploying multimodal table reasoning in downstream applications such as financial report analysis, scientific literature mining, and automated document processing.

Comments & Academic Discussion

Loading comments...

Leave a Comment