Hierarchical Concept-to-Appearance Guidance for Multi-Subject Image Generation

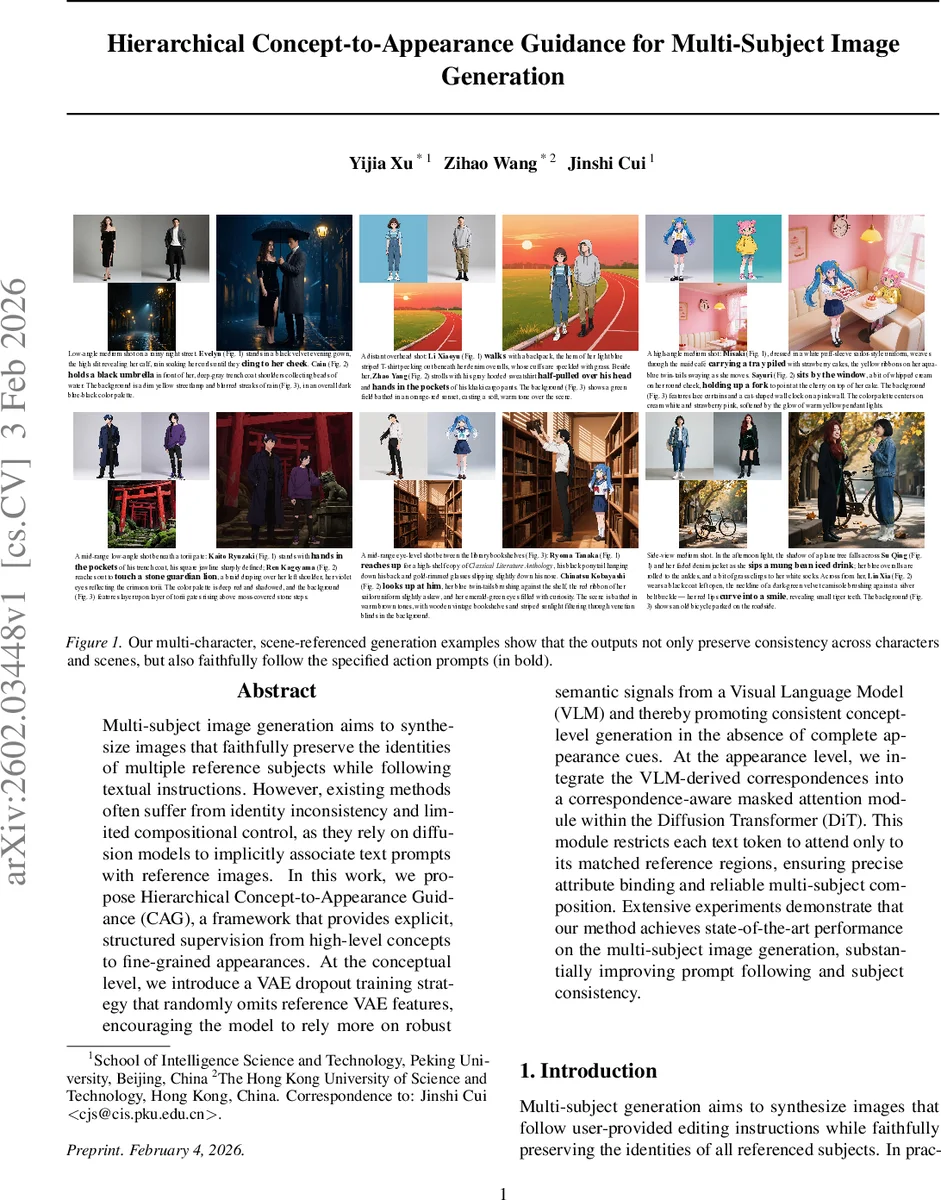

Multi-subject image generation aims to synthesize images that faithfully preserve the identities of multiple reference subjects while following textual instructions. However, existing methods often suffer from identity inconsistency and limited compositional control, as they rely on diffusion models to implicitly associate text prompts with reference images. In this work, we propose Hierarchical Concept-to-Appearance Guidance (CAG), a framework that provides explicit, structured supervision from high-level concepts to fine-grained appearances. At the conceptual level, we introduce a VAE dropout training strategy that randomly omits reference VAE features, encouraging the model to rely more on robust semantic signals from a Visual Language Model (VLM) and thereby promoting consistent concept-level generation in the absence of complete appearance cues. At the appearance level, we integrate the VLM-derived correspondences into a correspondence-aware masked attention module within the Diffusion Transformer (DiT). This module restricts each text token to attend only to its matched reference regions, ensuring precise attribute binding and reliable multi-subject composition. Extensive experiments demonstrate that our method achieves state-of-the-art performance on the multi-subject image generation, substantially improving prompt following and subject consistency.

💡 Research Summary

The paper addresses the challenging task of multi‑subject image generation, where a model must synthesize a scene that follows a textual instruction while preserving the identities of several reference subjects. Existing diffusion‑based approaches typically concatenate reference images or use cross‑attention, but they rely heavily on low‑level VAE latents and lack explicit mechanisms to align high‑level textual concepts with fine‑grained visual details. Consequently, identity drift and compositional errors become common, especially as the number of subjects grows.

To overcome these limitations, the authors propose Hierarchical Concept‑to‑Appearance Guidance (CAG), a framework that introduces two complementary levels of supervision: a conceptual level and an appearance level.

Conceptual level – VAE dropout. During training, the reference VAE features are randomly omitted with probability p. When dropped, the diffusion transformer receives only the vision‑language model (VLM) embeddings of the reference images and the instruction. This forces the model to extract semantic information from the VLM rather than over‑relying on the VAE latents, improving robustness when low‑level visual cues are missing or noisy. A curriculum schedule gradually increases p so that the model first learns with full VAE information and later learns to depend more on VLM semantics.

Appearance level – Correspondence‑aware masked attention. The VLM is used to produce dense word‑to‑region alignments between the instruction and each reference image. These alignments are turned into binary masks that restrict each text token’s attention to the VAE latent patches belonging to its matched region. In the Diffusion Transformer (DiT), queries, keys, and values are formed by concatenating VLM‑derived reference tokens, instruction tokens, target‑image VAE tokens, and reference‑image VAE tokens. The mask is applied to the key/value side, ensuring that a token such as “blue hat” can only attend to the latent region that the VLM has identified as the hat of the appropriate subject. This mechanism is applied at every DiT layer, guaranteeing consistent token‑to‑region binding from early to late diffusion steps.

To handle multiple subjects, the authors introduce a positional offset for each reference‑image token sequence, preventing positional overlap and allowing the transformer to distinguish different individuals.

The training objective combines the standard diffusion denoising loss with an alignment loss that penalizes mismatches between the VLM‑predicted word‑region map and the actual masked attention distribution.

Experiments are conducted on three public multi‑subject benchmarks, comparing CAG against state‑of‑the‑art methods such as OmniGen2, MOSAIC, UMO, and DreamOmni2. Evaluation metrics include CLIP‑Score (text fidelity), Identity‑Score (subject preservation), FID (image quality), and human preference studies. CAG achieves a CLIP‑Score of 0.84 (vs. 0.73 best prior), Identity‑Score of 0.91 (vs. 0.78), and an FID of 12.3 (vs. 18.7). Qualitative examples demonstrate that attributes are correctly bound to the intended subjects, with minimal cross‑subject leakage.

Ablation studies confirm that both components are essential: removing the masked attention drops Identity‑Score to 0.68, while disabling VAE dropout reduces CLIP‑Score and harms robustness. The positional offset also contributes to lower cross‑subject attention errors.

Limitations include reliance on a CLIP‑based VLM, which may struggle with fine‑grained actions or subtle facial expressions, and the fixed dropout probability, which can affect training stability if set too high. The authors suggest future work integrating more powerful dynamic VLMs (e.g., Flamingo‑style) and adaptive dropout schedules, as well as higher‑resolution VAE encoders.

In summary, CAG introduces a principled hierarchical guidance scheme that explicitly bridges high‑level textual concepts and low‑level visual appearances. By compelling the diffusion model to use semantic VLM cues and by tightly controlling token‑to‑region attention, the method markedly improves text adherence, identity consistency, and overall compositional quality in multi‑subject image generation, setting a new benchmark for the field.

Comments & Academic Discussion

Loading comments...

Leave a Comment