When control meets large language models: From words to dynamics

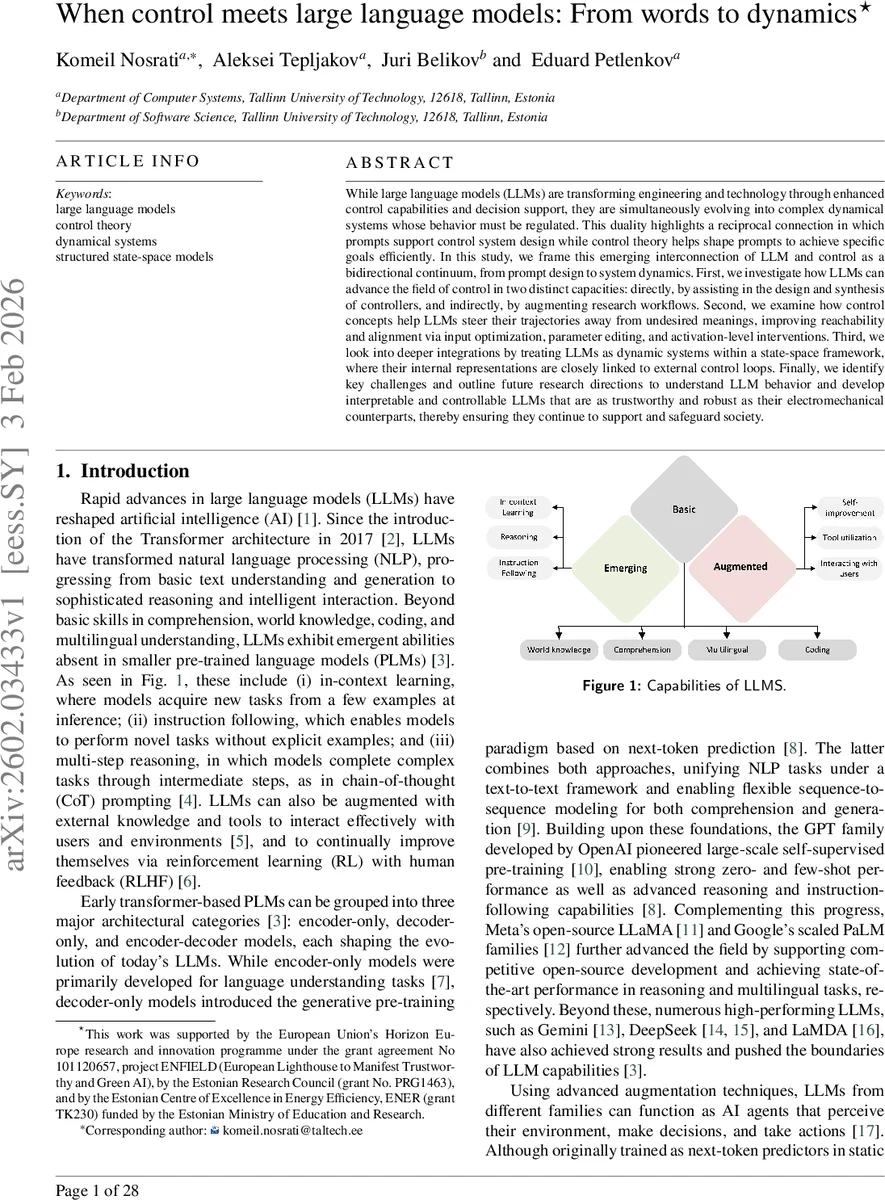

While large language models (LLMs) are transforming engineering and technology through enhanced control capabilities and decision support, they are simultaneously evolving into complex dynamical systems whose behavior must be regulated. This duality highlights a reciprocal connection in which prompts support control system design while control theory helps shape prompts to achieve specific goals efficiently. In this study, we frame this emerging interconnection of LLMs and control as a bidirectional continuum, from prompt design to system dynamics. First, we investigate how LLMs can advance the field of control in two distinct capacities: directly, by assisting in the design and synthesis of controllers, and indirectly, by augmenting research workflows. Second, we examine how control concepts help LLMs steer their trajectories away from undesired meanings, improving reachability and alignment via input optimization, parameter editing, and activation-level interventions. Third, we look into deeper integrations by treating LLMs as dynamical systems within a state-space framework, where their internal representations are closely linked to external control loops. Finally, we identify key challenges and outline future research directions to understand LLM behavior and develop interpretable and controllable LLMs that are as trustworthy and robust as their electromechanical counterparts, thereby ensuring they continue to support and safeguard society.

💡 Research Summary

This paper presents a comprehensive examination of the emerging bidirectional relationship between large language models (LLMs) and control theory, framing it as a continuum that stretches from prompt design to system dynamics. The authors identify three principal paradigms that capture the ways in which LLMs and control concepts interact.

1. LLMs Supporting Control

The first paradigm is divided into indirect and direct contributions. Indirectly, LLMs act as intelligent assistants that automate many routine but essential research tasks: literature reviews, summarization of patents, generation of simulation code, extraction and organization of mathematical formulations, conversion of raw experimental logs into coherent technical reports, and preprocessing of heterogeneous datasets. These capabilities accelerate the research workflow, allowing engineers to focus on higher‑level design questions. Directly, LLMs can participate in the technical aspects of controller synthesis. They can suggest control structures (PID, LQR, MPC, nonlinear strategies), formulate optimization problems, explore parameter spaces, guide experiment design, and interpret simulation results, effectively extending human expertise and reducing time‑to‑solution.

2. Control Theory Shaping LLM Behavior

The second paradigm flips the direction of influence: control concepts are employed to steer LLM outputs away from undesired meanings and improve alignment. The authors discuss three concrete mechanisms. (a) Input optimization and prompt engineering treat the prompt as a control input; iterative feedback adjusts the prompt until the model’s trajectory reaches a desired output region. (b) Parameter editing and activation‑level interventions edit internal weights or intermediate activations, akin to model‑editing techniques that suppress or amplify specific token behaviors. (c) Reinforcement Learning from Human Feedback (RLHF) is interpreted as a closed‑loop supervisory control system, where the reward model provides a supervisory signal and the RL algorithm acts as a controller that corrects errors and adapts the policy. This view highlights that alignment is not a peripheral fine‑tuning step but a core feedback‑driven regulation process.
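Mechanism (a) can be pictured as an ordinary feedback loop. The sketch below is a toy illustration only, not the authors' method: `toy_model_score` is a hypothetical stand-in for "run the LLM with a prompt setting `u` and score the output", and the linear response, the gain, and the tolerance are all assumed values chosen so the loop converges.

```python
def toy_model_score(u: float) -> float:
    """Hypothetical plant: maps a scalar prompt 'knob' u to an output score.

    In a real setting this would call an LLM and an evaluator; here it is
    an assumed linear response that the controller does not know.
    """
    return 0.6 * u + 0.1


def tune_prompt_knob(target: float, gain: float = 0.8,
                     steps: int = 50, tol: float = 1e-3):
    """Integral-style feedback: nudge u in proportion to the output error."""
    u = 0.0
    y = toy_model_score(u)
    history = [(u, y)]
    for _ in range(steps):
        error = target - y
        if abs(error) < tol:
            break                  # output is inside the desired region
        u += gain * error          # accumulate the correction (integral action)
        y = toy_model_score(u)
        history.append((u, y))
    return u, y, history


u_final, y_final, history = tune_prompt_knob(target=0.7)
print(f"knob={u_final:.3f} score={y_final:.4f} iterations={len(history) - 1}")
```

With these assumed numbers the error shrinks by a constant factor each iteration, so the loop settles quickly. A real prompt is discrete and the model response is nonlinear and stochastic, which is why the convergence seen here should not be expected to carry over unchanged.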

3. Treating LLMs as Dynamical Systems

The third paradigm adopts a deeper integration by modeling LLMs themselves as structured state-space models (SSMs). Recent architectures such as Mamba embed state-space equations directly into the model's computation graph, making the hidden state evolution explicit. Within this framework, controllability, observability, and stability, the cornerstones of control theory, can be applied to the internal representations of LLMs. Controllability assesses whether a prompt (the input) can drive the hidden state to any desired region; observability examines whether external probes (e.g., probing queries) can infer the hidden state; stability analysis (Lyapunov methods, input-output gain) checks that small perturbations in prompts or external disturbances do not cause divergent or unsafe outputs. This perspective enables rigorous, mathematically grounded analysis of LLM behavior and opens the door to designing external controllers that monitor and correct LLM outputs in real time.
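For intuition, the three properties have simple textbook tests when the system is linear and time-invariant, x[k+1] = A x[k] + B u[k], y[k] = C x[k]. The sketch below applies the Kalman rank tests and a spectral-radius check to a small toy system; the matrices are made-up values, and an actual LLM is nonlinear and far higher-dimensional, so this only illustrates the definitions.

```python
import numpy as np


def controllability_matrix(A: np.ndarray, B: np.ndarray) -> np.ndarray:
    """Stack [B, AB, ..., A^(n-1) B] column-wise."""
    blocks = [B]
    for _ in range(A.shape[0] - 1):
        blocks.append(A @ blocks[-1])
    return np.hstack(blocks)


def observability_matrix(A: np.ndarray, C: np.ndarray) -> np.ndarray:
    """Stack [C; CA; ...; C A^(n-1)] row-wise."""
    blocks = [C]
    for _ in range(A.shape[0] - 1):
        blocks.append(blocks[-1] @ A)
    return np.vstack(blocks)


def analyze(A: np.ndarray, B: np.ndarray, C: np.ndarray) -> dict:
    n = A.shape[0]
    return {
        # Full rank: inputs can steer the state anywhere in n steps.
        "controllable": bool(np.linalg.matrix_rank(controllability_matrix(A, B)) == n),
        # Full rank: the state can be reconstructed from outputs.
        "observable": bool(np.linalg.matrix_rank(observability_matrix(A, C)) == n),
        # Discrete-time asymptotic stability: all eigenvalues inside the unit circle.
        "stable": bool(np.max(np.abs(np.linalg.eigvals(A))) < 1.0),
    }


# Toy 2-state system (assumed values, for illustration only).
A = np.array([[0.9, 0.2],
              [0.0, 0.5]])
B = np.array([[0.0],
              [1.0]])
C = np.array([[1.0, 0.0]])

print(analyze(A, B, C))
```

In this analogy the input `u` plays the role of the prompt, `x` the hidden state, and `y` whatever an external probe can read out.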

Historical Context

The paper traces the lineage from early cybernetics and the feedback concepts of the 1940s through the birth of reinforcement learning in the 1960s, the rise of deep learning, and the 2017 introduction of the Transformer. It emphasizes that self-attention can be viewed as a dynamical system, and that RLHF (2022) explicitly embeds a feedback loop into LLM fine-tuning. The recent convergence of control and LLMs (2023 onward) is illustrated by state-space-inspired architectures, controllability studies, and real-world deployments in traffic management, autonomous vehicles, and secure control system design.

Current Challenges

The authors enumerate four major challenges: (1) lack of scalable methods to observe and manipulate the hidden states of massive LLMs in real time; (2) computational complexity of applying classic control algorithms to billion-parameter models, limiting real-time applicability; (3) insufficient control-theoretic verification frameworks for safety and ethical alignment; (4) handling temporal uncertainty, latency, and non-stationarity when LLMs are coupled with physical control loops.

Future Research Directions

To address these gaps, the paper proposes: (i) designing LLMs with native state‑space structures and training objectives that promote controllability; (ii) developing automated, control‑theoretic prompt optimization pipelines that can be integrated into existing development environments; (iii) creating robust stability and safety analysis tools (e.g., Lyapunov‑based certificates) for generative models; (iv) exploring cooperative control mechanisms in multi‑modal, multi‑agent settings where several LLMs and physical controllers interact.

Conclusion

Overall, the study argues that viewing LLMs not merely as language generators but as components of a broader control ecosystem yields a powerful new research paradigm. By leveraging control theory to both harness LLM capabilities for controller design and to regulate LLM behavior for alignment and safety, the community can move toward trustworthy, robust AI systems that are as reliable as traditional electromechanical controllers.
