Verified Critical Step Optimization for LLM Agents

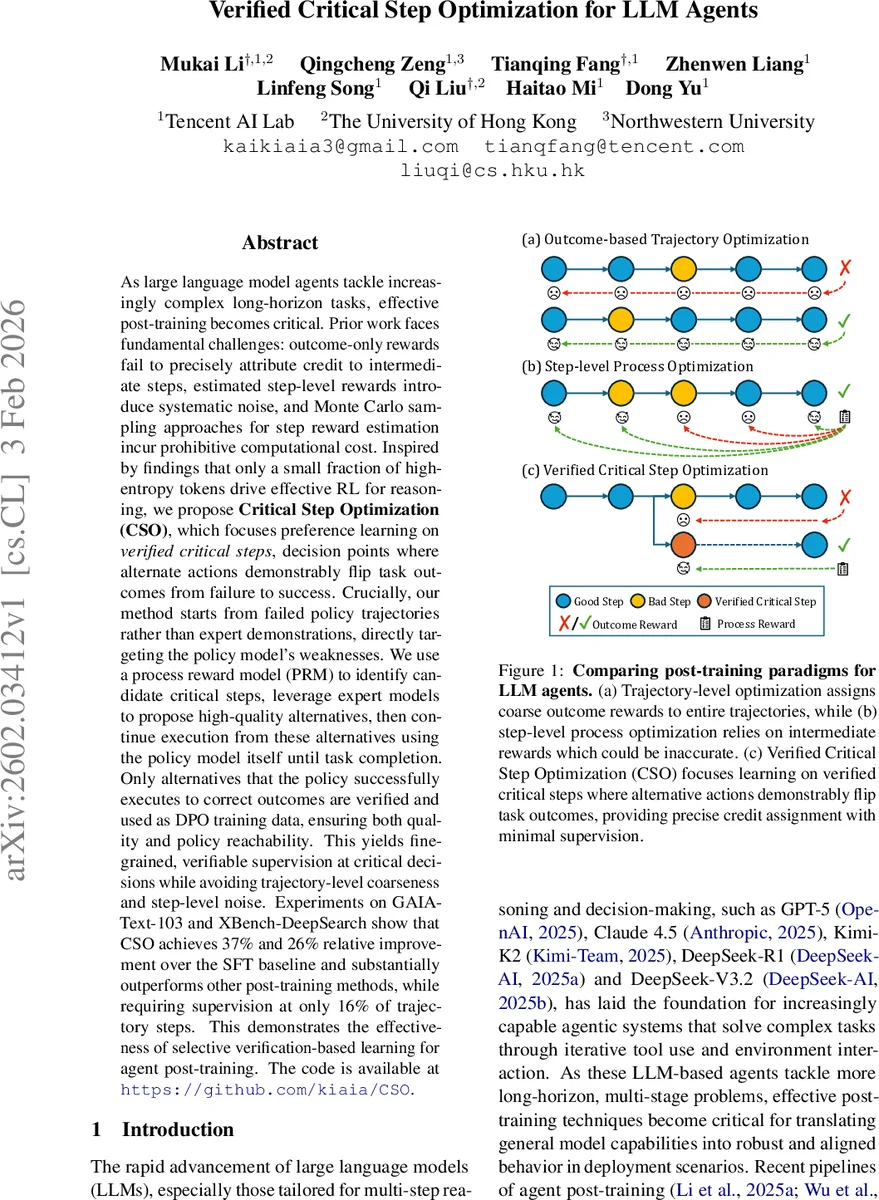

As large language model agents tackle increasingly complex long-horizon tasks, effective post-training becomes critical. Prior work faces fundamental challenges: outcome-only rewards fail to precisely attribute credit to intermediate steps, estimated step-level rewards introduce systematic noise, and Monte Carlo sampling approaches for step reward estimation incur prohibitive computational cost. Inspired by findings that only a small fraction of high-entropy tokens drive effective RL for reasoning, we propose Critical Step Optimization (CSO), which focuses preference learning on verified critical steps, decision points where alternate actions demonstrably flip task outcomes from failure to success. Crucially, our method starts from failed policy trajectories rather than expert demonstrations, directly targeting the policy model’s weaknesses. We use a process reward model (PRM) to identify candidate critical steps, leverage expert models to propose high-quality alternatives, then continue execution from these alternatives using the policy model itself until task completion. Only alternatives that the policy successfully executes to correct outcomes are verified and used as DPO training data, ensuring both quality and policy reachability. This yields fine-grained, verifiable supervision at critical decisions while avoiding trajectory-level coarseness and step-level noise. Experiments on GAIA-Text-103 and XBench-DeepSearch show that CSO achieves 37% and 26% relative improvement over the SFT baseline and substantially outperforms other post-training methods, while requiring supervision at only 16% of trajectory steps. This demonstrates the effectiveness of selective verification-based learning for agent post-training.

💡 Research Summary

The paper introduces Critical Step Optimization (CSO), a novel post‑training framework for large language model (LLM) agents that tackles the shortcomings of existing trajectory‑level and step‑level learning approaches. Traditional outcome‑only methods assign a single reward to an entire trajectory, which blurs credit for the few decisive actions that actually determine success. Step‑level process reward models provide finer granularity but rely on noisy, estimated rewards and often require expensive Monte‑Carlo rollouts. CSO sidesteps both problems by focusing on “verified critical steps”: decision points where an alternative action can flip a failed execution into a successful one, and where that alternative is confirmed to work when the policy itself continues the rollout.

The pipeline works as follows. First, the current policy is run on a set of tasks and only the failed trajectories are retained. For each step in a failed trajectory, an expert model (Claude‑3.7‑Sonnet) generates a small set of candidate alternative actions. A Process Reward Model (PRM), implemented via prompting a strong closed‑source model with a rubric, scores both the policy’s original action and each expert alternative. Steps where the policy’s score falls below a low threshold (γ_low) and at least one expert alternative exceeds a high threshold (γ_high) are marked as candidate critical steps.

Each candidate is then verified by constructing a branch: the high‑scoring expert alternative replaces the original action, and the policy itself proceeds from that point onward until termination. If the branched trajectory ends in success, the step is deemed a verified critical step, and a preference pair is created consisting of (state, successful alternative, original failed action). These pairs form the DPO training set. The policy is fine‑tuned using Direct Preference Optimization with a KL regularizer, concentrating learning exclusively on the verified critical decisions rather than the whole trajectory.

CSO is applied iteratively. After each round of DPO training, the updated policy is redeployed to collect fresh failures, identify new critical steps, and repeat the process. This creates an implicit curriculum: as the model improves, the remaining critical steps become increasingly challenging, driving continual refinement without the need for full reinforcement learning.

Experiments use an 8‑billion‑parameter CK‑Pro‑8B model (SFT‑fine‑tuned from Qwen‑3‑8B) on two benchmarks: GAIA‑Text‑103, which involves multi‑step web search and document generation, and XBench‑DeepSearch, a deep‑search task suite. CSO achieves relative improvements of 37 % on GAIA‑Text‑103 and 26 % on XBench‑DeepSearch compared to the supervised‑fine‑tuned baseline. Notably, supervision is required for only about 16 % of trajectory steps, yet CSO outperforms baseline methods that use full‑trajectory DPO, step‑level reward models, and Monte‑Carlo‑based IPR. The approach also dramatically reduces computational overhead because PRM scoring is cheap and only a limited number of branch rollouts are performed for verification.

Key contributions include: (1) a failure‑centric data collection strategy that keeps training data within the policy’s reachable distribution; (2) a hybrid use of expert alternatives and a process reward model to efficiently pinpoint high‑impact decision points; (3) a verification step that guarantees the supervision is grounded in actual task success, eliminating reward‑estimation noise; and (4) empirical evidence that focusing learning on a small set of verified critical steps yields large performance gains with modest supervision cost.

Overall, CSO demonstrates that selective, verification‑based preference learning can substantially improve the robustness and alignment of LLM agents on long‑horizon tasks, offering a scalable alternative to full reinforcement learning while preserving the benefits of fine‑grained credit assignment.

Comments & Academic Discussion

Loading comments...

Leave a Comment