Accurate Failure Prediction in Agents Does Not Imply Effective Failure Prevention

Proactive interventions by LLM critic models are often assumed to improve reliability, yet their effects at deployment time are poorly understood. We show that a binary LLM critic with strong offline accuracy (AUROC 0.94) can nevertheless cause severe performance degradation: it induces a 26 percentage point (pp) collapse on one model while leaving another almost unaffected (near 0 pp). This variability demonstrates that critic accuracy alone is insufficient to determine whether intervention is safe. We identify a disruption-recovery tradeoff: interventions may recover failing trajectories, but they may also disrupt trajectories that would have succeeded. Based on this insight, we propose a pre-deployment test that uses a small pilot of 50 tasks to estimate whether intervention is likely to help or harm, without requiring full deployment. Across benchmarks, the test correctly anticipates outcomes: intervention leaves unchanged or degrades performance on high-success tasks (0 to −26 pp), while yielding a modest improvement on the high-failure ALFWorld benchmark (+2.8 pp, p = 0.014). The primary value of our framework is therefore identifying when not to intervene, preventing severe regressions before deployment.

💡 Research Summary

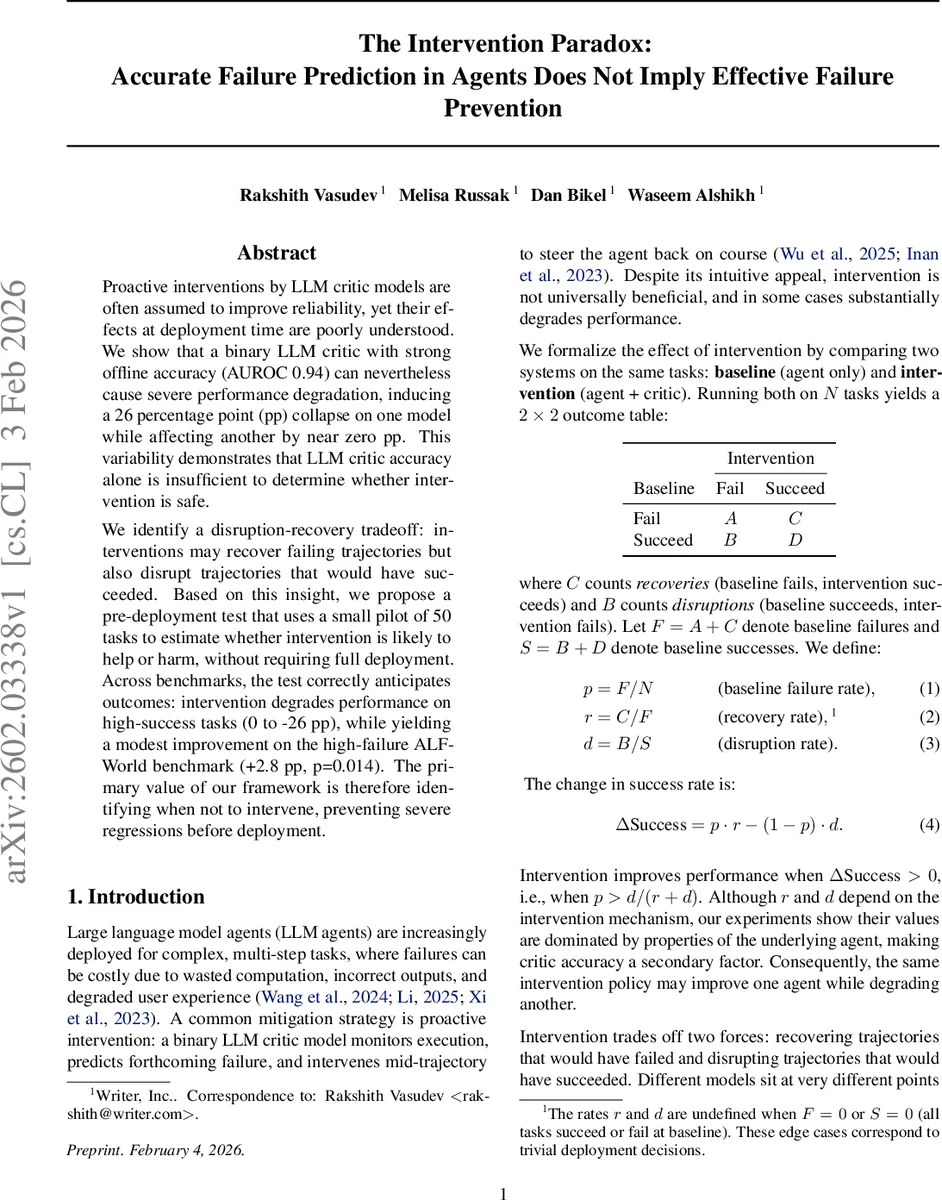

The paper investigates the practical impact of execution‑time interventions for large language model (LLM) agents, focusing on the gap between strong offline failure prediction and real‑world performance improvement. The authors formalize the interaction between a baseline agent and an intervening binary LLM critic using a 2 × 2 outcome table (baseline success or failure × success or failure after intervention) and define three key rates: the baseline failure rate p, the recovery rate r (the proportion of baseline failures that the intervention turns into successes), and the disruption rate d (the proportion of baseline successes that become failures after intervention). From these quantities they derive a simple expression for the change in overall success rate: ΔSuccess = p·r − (1 − p)·d. Requiring ΔSuccess > 0 yields a clear condition for when intervention is beneficial: p > d/(r + d). The condition shows that even perfect recovery (r = 1) cannot guarantee gains if the disruption rate d is high relative to the baseline failure rate.
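Written out step by step, the derivation makes the break-even condition and the r = 1 case explicit. Here n_FS counts tasks that fail at baseline but succeed with intervention, n_SF counts baseline successes that fail with intervention, and N is the number of tasks; these count symbols are introduced only for this illustration, and the division assumes r + d > 0. The numbers in the last line are illustrative, not results from the paper.

```latex
\begin{aligned}
\Delta\mathrm{Success} &= \frac{n_{\mathrm{FS}} - n_{\mathrm{SF}}}{N} = p\,r - (1-p)\,d \\
\Delta\mathrm{Success} > 0 &\iff p\,r > (1-p)\,d \iff p\,(r+d) > d \iff p > \frac{d}{r+d} \\
r = 1:\quad \Delta\mathrm{Success} &= p - (1-p)\,d < 0 \iff d > \frac{p}{1-p} \\
p = 0.3,\ r = 1,\ d = 0.5:\quad \Delta\mathrm{Success} &= 0.3 - 0.7 \times 0.5 = -0.05
\end{aligned}
```

So even when every baseline failure is recovered, a 50 % disruption rate on an agent that already succeeds 70 % of the time produces a net loss of 5 pp.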

Empirically, the authors train a binary LLM critic based on Qwen‑3‑0.6B with LoRA adapters on 7,636 trajectory steps from diverse tasks. The critic achieves high offline discrimination (AUROC 0.936, F1 0.963) across several backbone agents (Qwen‑3‑8B, GLM‑4.7, MiniMax‑M2.1). They evaluate two minimal intervention mechanisms: ROLLBACK, which undoes the most recent action and allows a retry, and APPEND, which simply adds a warning message before the action proceeds. Both calibrated and uncalibrated versions are tested.
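The two mechanisms can be sketched on a chat-style message history. This is a minimal illustration, not the authors' implementation: the message format, the warning text `WARNING`, the function `intervene`, the use of a `"user"` role for the warning, and the `"retry"`/`"continue"` return values are all hypothetical. A real ROLLBACK would also need to restore environment state, which is not modeled here.

```python
from typing import Literal

# Hypothetical warning text; the paper's actual prompt wording is not reproduced here.
WARNING = "Warning: a critic predicts this action is likely to lead to task failure."


def intervene(messages: list, failure_prob: float, tau: float,
              mode: Literal["ROLLBACK", "APPEND"]) -> str:
    """Apply one critic-triggered intervention to a chat history, in place.

    Returns "retry" if the agent should regenerate its last action,
    otherwise "continue" (the proposed action is executed as usual).
    """
    if failure_prob < tau:
        return "continue"  # critic not triggered: trajectory left untouched
    if mode == "ROLLBACK":
        # Undo the most recent action so the agent retries from the prior state.
        if not messages or messages[-1]["role"] != "assistant":
            raise ValueError("ROLLBACK expects the last message to be the agent's action")
        messages.pop()
        return "retry"
    if mode == "APPEND":
        # Keep the action, but add a warning to the context before it proceeds.
        messages.append({"role": "user", "content": WARNING})
        return "continue"
    raise ValueError(f"unknown mode: {mode!r}")
```

The sketch makes the asymmetry visible: ROLLBACK discards the agent's choice and forces a new one, while APPEND only adds context, so both can disrupt a trajectory that was on track, just in different ways.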

Three benchmark suites are used, representing distinct baseline success regimes: HotPotQA (high‑success, 57‑70 % baseline), GAIA (medium‑success, 19‑47 % baseline), and ALFWorld (low‑success, 5.8‑14.7 % baseline). In the high‑ and medium‑success settings, every intervention configuration either leaves performance essentially unchanged or degrades it. For example, MiniMax‑M2.1 suffers a 25‑30 percentage‑point drop on HotPotQA, while Qwen‑3‑8B and GLM‑4.7 see smaller declines of 2‑4 pp. The authors attribute these harms to disruption rates d that are high relative to recovery rates r for these agents: with low baseline failure rates, p falls below the threshold d/(r + d), so the condition p > d/(r + d) is systematically violated.

In contrast, the low‑success ALFWorld scenario exhibits the opposite pattern. A pilot study of 50 tasks estimates p ≈ 89 %, r ≈ 12 %, and d ≈ 56 %, yielding a break-even threshold p* = d/(r + d) ≈ 82 %. Since the estimated baseline failure rate exceeds this threshold, the theory predicts a net gain, which the full evaluation confirms: all intervention variants produce modest but statistically significant improvements (e.g., +2.8 pp for uncalibrated APPEND, p-value 0.014). The pilot's estimates of r and d correctly anticipate the sign of ΔSuccess, demonstrating the practical utility of the pre‑deployment test.
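A pilot test along these lines only needs paired outcomes per task: whether the task succeeded without intervention and whether it succeeded with it. The sketch below estimates p, r and d from such pairs and applies the break-even rule; the function names (`estimate_rates`, `break_even`, `predicted_delta`) are hypothetical and the pilot counts are invented for illustration. On the pilot itself, the estimated ΔSuccess equals (recovered − disrupted) / N.

```python
def estimate_rates(pairs):
    """Estimate (p, r, d) from paired pilot outcomes.

    pairs: one (baseline_success, intervened_success) tuple of bools per task,
    i.e. every pilot task is run once without and once with the critic.
    """
    pairs = list(pairs)
    after_fail = [iv for base, iv in pairs if not base]  # outcomes of baseline failures
    after_succ = [iv for base, iv in pairs if base]      # outcomes of baseline successes
    if not after_fail or not after_succ:
        raise ValueError("pilot needs at least one baseline failure and one baseline success")
    p = len(after_fail) / len(pairs)                        # baseline failure rate
    r = sum(after_fail) / len(after_fail)                   # failures turned into successes
    d = sum(not iv for iv in after_succ) / len(after_succ)  # successes turned into failures
    return p, r, d


def break_even(r, d):
    """Failure rate above which intervention is expected to help: d / (r + d)."""
    if r + d == 0:
        raise ValueError("r = d = 0: intervention never changes an outcome")
    return d / (r + d)


def predicted_delta(p, r, d):
    """Expected change in success rate: p*r - (1 - p)*d."""
    return p * r - (1 - p) * d


# Made-up 50-task pilot: 44 baseline failures (5 recovered), 6 baseline successes (3 disrupted).
pilot = ([(False, True)] * 5 + [(False, False)] * 39
         + [(True, False)] * 3 + [(True, True)] * 3)
p, r, d = estimate_rates(pilot)
delta = predicted_delta(p, r, d)  # equals (5 - 3) / 50 = 0.04
enable = p > break_even(r, d)     # True here: 0.88 > 0.8148...
```

Plugging in the rounded ALFWorld pilot estimates (p ≈ 0.89, r ≈ 0.12, d ≈ 0.56) gives `break_even(0.12, 0.56)` ≈ 0.82 and `predicted_delta(0.89, 0.12, 0.56)` ≈ +0.045. With rounded inputs and only 50 tasks, this is best read as a sign check rather than a point forecast of the observed +2.8 pp.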

Calibration via temperature scaling reduces the over‑confidence of the critic's softmax scores but does not materially alter the disruption‑recovery balance; its main effect is to shift the effective trigger threshold τ, i.e., which steps trigger an intervention. As a result, its impact is regime‑dependent: the authors show that calibration applied too conservatively can suppress interventions that would have been beneficial.
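Temperature scaling for a binary critic can be sketched as fitting one scalar T on held-out steps, assuming the critic exposes a single failure logit z per step (an assumption; the paper's exact parameterization is not given here). Grid search stands in for the gradient-based fit that is more commonly used, and the helper names are hypothetical. The sketch also makes the threshold point concrete: because sigmoid(z / T) is monotone in z, a calibrated trigger at τ fires on exactly the same steps as an uncalibrated trigger at sigmoid(T · logit(τ)).

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_temperature(logits, labels, grid=None):
    """Choose T > 0 minimizing mean binary NLL of sigmoid(z / T) on held-out steps.

    labels: 1 if the step really led to failure, else 0.
    """
    if grid is None:
        grid = [0.25 * k for k in range(1, 41)]  # candidate T values 0.25 .. 10.0

    def nll(T):
        total = 0.0
        for z, y in zip(logits, labels):
            q = min(max(sigmoid(z / T), 1e-12), 1.0 - 1e-12)  # clip to avoid log(0)
            total -= y * math.log(q) + (1 - y) * math.log(1.0 - q)
        return total / len(logits)

    return min(grid, key=nll)


def equivalent_raw_threshold(T, tau):
    """Raw-probability threshold that triggers on exactly the same steps as
    the calibrated rule sigmoid(z / T) >= tau (valid for T > 0, 0 < tau < 1)."""
    logit_tau = math.log(tau / (1.0 - tau))
    return sigmoid(T * logit_tau)


# Over-confident toy critic: |z| = 6 everywhere, but only 4 of 6 predictions are right.
logits = [6.0, 6.0, -6.0, -6.0, 6.0, -6.0]
labels = [1, 0, 0, 0, 1, 1]
T = fit_temperature(logits, labels)  # T > 1: the critic's scores get softened
```

At τ = 0.5 the equivalent raw threshold is 0.5 for every T, so calibration changes nothing; for τ > 0.5 and an over-confident critic (T > 1) the effective threshold rises, which is one way calibration can suppress interventions.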

The central contribution is a lightweight, 50‑task pilot that estimates p, r, and d before full deployment, allowing practitioners to decide whether to enable an LLM‑critic‑based intervention. This approach reframes the problem from “improve failure detection” to “understand the agent’s susceptibility to mid‑trajectory corrections.” The paper argues that scaling the critic (up to 14B parameters) yields diminishing returns because the ceiling on ΔSuccess is fundamentally limited by the disruption‑recovery ratio, not by predictor accuracy.

In summary, the work demonstrates that high offline AUROC does not guarantee deployment‑time gains; the disruption‑recovery trade‑off dominates. Effective deployment requires measuring the baseline failure rate and the agent‑specific disruption and recovery probabilities. When p exceeds the derived threshold d/(r + d), intervention is expected to yield a net gain; otherwise, it risks severe performance collapses. This insight guides future research toward designing agents that are more robust to corrective feedback rather than focusing solely on more accurate critics.
