Lookahead Sample Reward Guidance for Test-Time Scaling of Diffusion Models

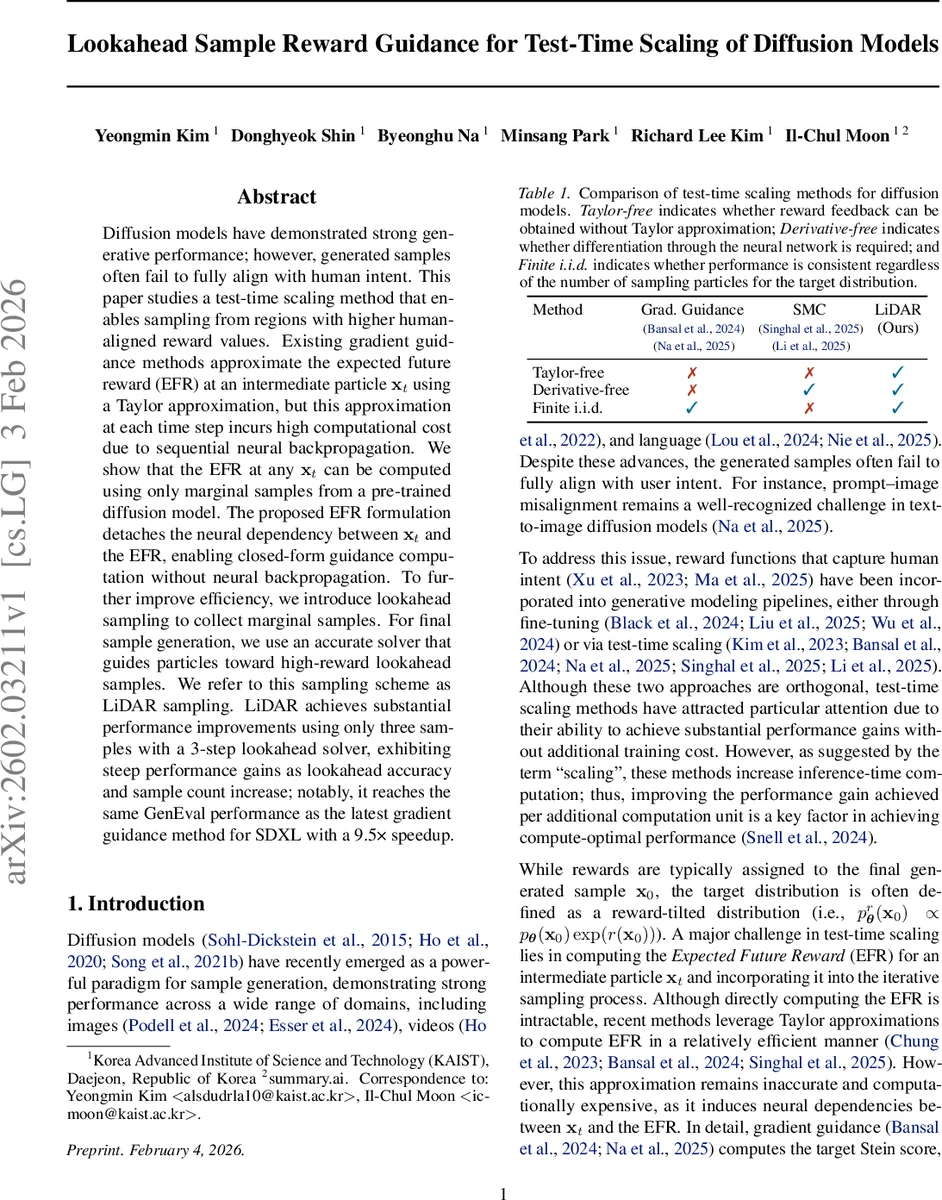

Diffusion models have demonstrated strong generative performance; however, generated samples often fail to fully align with human intent. This paper studies a test-time scaling method that enables sampling from regions with higher human-aligned reward values. Existing gradient guidance methods approximate the expected future reward (EFR) at an intermediate particle $\mathbf{x}_t$ using a Taylor approximation, but this approximation at each time step incurs high computational cost due to sequential neural backpropagation. We show that the EFR at any $\mathbf{x}_t$ can be computed using only marginal samples from a pre-trained diffusion model. The proposed EFR formulation detaches the neural dependency between $\mathbf{x}_t$ and the EFR, enabling closed-form guidance computation without neural backpropagation. To further improve efficiency, we introduce lookahead sampling to collect marginal samples. For final sample generation, we use an accurate solver that guides particles toward high-reward lookahead samples. We refer to this sampling scheme as LiDAR sampling. LiDAR achieves substantial performance improvements using only three samples with a 3-step lookahead solver, exhibiting steep performance gains as lookahead accuracy and sample count increase; notably, it reaches the same GenEval performance as the latest gradient guidance method for SDXL with a 9.5x speedup.

💡 Research Summary

The paper tackles the persistent problem that diffusion‑generated images often fail to align with human intent, especially in text‑to‑image scenarios. Existing test‑time scaling methods improve alignment by sampling from a reward‑tilted distribution pᵣθ(x₀|c) ∝ pθ(x₀|c) exp(λ·r(x₀,c)), but they rely on a first‑order Taylor approximation of the Expected Future Reward (EFR) and require back‑propagation through the score network and the reward model at every diffusion step. This leads to two major drawbacks: (i) the approximation error grows with the reward strength λ, degrading performance, and (ii) the need for differentiable rewards and costly gradient computations makes the approach inefficient.

The authors propose a fundamentally different formulation of the EFR that eliminates any dependence on the intermediate particle xₜ for neural network evaluation. The key insight (Theorem 3.1) shows that the EFR can be expressed as

r̂_λₜ(xₜ,c) = log E_{x₀∼pθ(x₀|c)}

Comments & Academic Discussion

Loading comments...

Leave a Comment