VIRAL: Visual In-Context Reasoning via Analogy in Diffusion Transformers

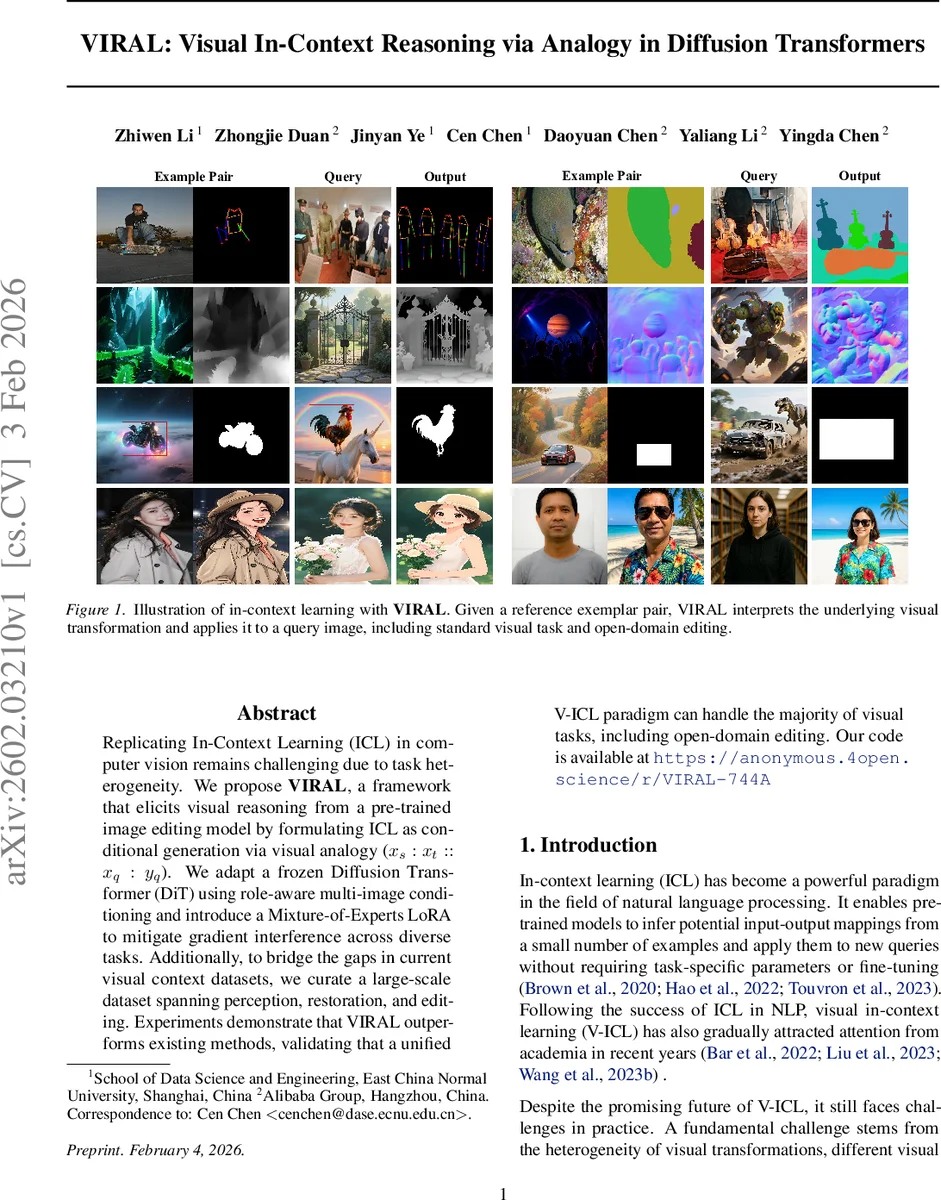

Replicating In-Context Learning (ICL) in computer vision remains challenging due to task heterogeneity. We propose \textbf{VIRAL}, a framework that elicits visual reasoning from a pre-trained image editing model by formulating ICL as conditional generation via visual analogy ($x_s : x_t :: x_q : y_q$). We adapt a frozen Diffusion Transformer (DiT) using role-aware multi-image conditioning and introduce a Mixture-of-Experts LoRA to mitigate gradient interference across diverse tasks. Additionally, to bridge the gaps in current visual context datasets, we curate a large-scale dataset spanning perception, restoration, and editing. Experiments demonstrate that VIRAL outperforms existing methods, validating that a unified V-ICL paradigm can handle the majority of visual tasks, including open-domain editing. Our code is available at https://anonymous.4open.science/r/VIRAL-744A

💡 Research Summary

The paper introduces VIRAL (Visual In‑Context Reasoning via Analogy in Diffusion Transformers), a novel framework that brings in‑context learning (ICL) to computer vision by treating every visual task as a conditional generation problem grounded in visual analogy. Instead of training separate models for each task or stitching image pairs into a grid, VIRAL formulates the problem as xₛ : xₜ :: x_q : y_q, where (xₛ, xₜ) is an exemplar pair that exemplifies an underlying transformation T, and the model must infer the same transformation for a query image x_q to produce y_q.

Key technical contributions are threefold. First, the authors adapt a frozen Diffusion Transformer (DiT) backbone for multi‑image conditioning. Each image (exemplar source, exemplar target, and query source) is encoded by a VAE‑encoder and patchified into a sequence of latent tokens. A 3‑D extension of the MSRoPE positional encoding is applied to embed role information (source, target, query) while preserving spatial geometry, enabling the transformer’s cross‑attention to distinguish between the different images and correctly infer the transformation logic.

Second, to handle the heterogeneity of tasks without catastrophic interference, the paper proposes a Mixture‑of‑Experts LoRA (MoE‑LoRA). Standard low‑rank LoRA adapters are replicated into N experts; a differentiable router selects the top‑k experts for each token, and an auxiliary load‑balancing loss encourages uniform expert utilization. This design allows a single set of adapters to serve many tasks while keeping the massive DiT backbone frozen, dramatically reducing trainable parameters and memory footprint.

Third, the authors construct a large‑scale in‑context dataset comprising millions of exemplar‑query quadruplets. The dataset has two streams: (1) “standard visual tasks” such as edge detection, depth estimation, surface normal prediction, object detection, key‑point detection, segmentation, and various restoration/enhancement tasks. These are generated by sampling prompts from DiffusionDB, synthesizing source images with Qwen‑Image, and automatically annotating with ControlNet‑Aux, SAM, COCO, and other tools. (2) “open‑domain editing” where transformations are unstructured (style transfer, background replacement, etc.). Here, analogous editing pairs are mined from instruction‑driven corpora and verified for consistency. The resulting dataset provides diverse, high‑quality examples that enable the model to learn generic transformation patterns.

Training follows the standard diffusion objective: the target y_q is noised to a random timestep t, and the DiT predicts the noise component ϵ̂ conditioned on the fixed concatenated token sequence Z_cond =

Comments & Academic Discussion

Loading comments...

Leave a Comment