Hand3R: Online 4D Hand-Scene Reconstruction in the Wild

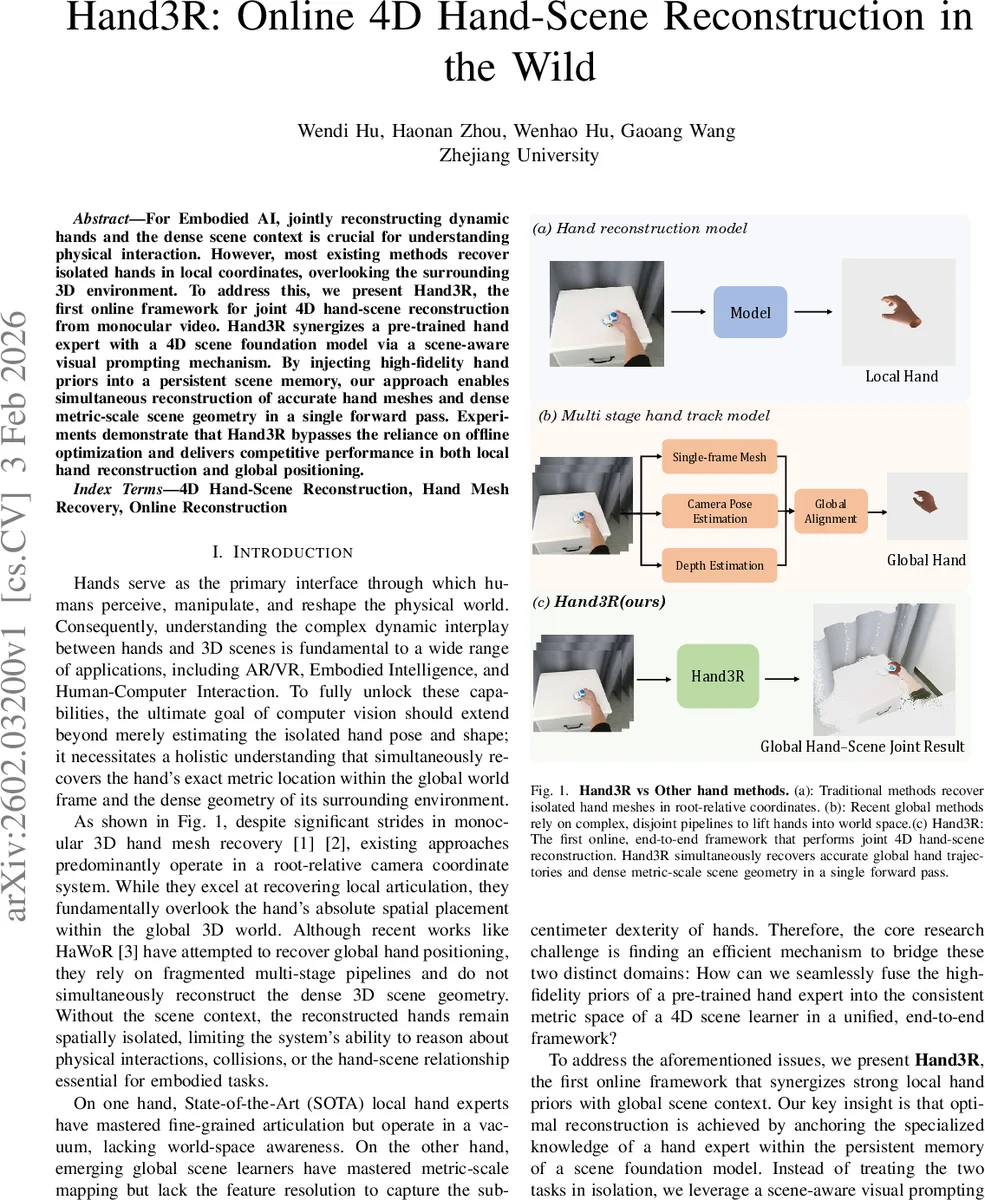

For Embodied AI, jointly reconstructing dynamic hands and the dense scene context is crucial for understanding physical interaction. However, most existing methods recover isolated hands in local coordinates, overlooking the surrounding 3D environment. To address this, we present Hand3R, the first online framework for joint 4D hand-scene reconstruction from monocular video. Hand3R synergizes a pre-trained hand expert with a 4D scene foundation model via a scene-aware visual prompting mechanism. By injecting high-fidelity hand priors into a persistent scene memory, our approach enables simultaneous reconstruction of accurate hand meshes and dense metric-scale scene geometry in a single forward pass. Experiments demonstrate that Hand3R bypasses the reliance on offline optimization and delivers competitive performance in both local hand reconstruction and global positioning.

💡 Research Summary

Hand3R introduces the first online, end‑to‑end framework that jointly reconstructs high‑fidelity hand meshes and dense metric‑scale scene geometry from a monocular video stream. The method tackles a fundamental limitation of prior work: most hand‑reconstruction systems operate in a root‑relative camera frame and ignore the surrounding 3D environment, while recent global hand‑tracking approaches either rely on multi‑stage pipelines or do not reconstruct the scene at all. Hand3R bridges this gap by fusing a frozen, large‑scale hand expert (the HaMeR model) with a 4D scene foundation model (CUT3R) through a scene‑aware visual prompting mechanism.

The architecture consists of two parallel encoders. The scene encoder extracts dense, metric‑scaled feature maps (F_s) from the full RGB frame. Simultaneously, a hand detector provides a bounding box; the cropped region is fed to the frozen hand expert to obtain high‑resolution hand tokens (f_h). Within the same bounding box, the scene feature map is average‑pooled to produce a local context vector (f_s). Concatenating f_h and f_s and passing them through a lightweight MLP yields a visual prompt (p_h) that encodes both “what the hand looks like” and “where it is in the scene”.

The prompt is injected into the scene decoder, which maintains a persistent spatiotemporal state S_{t‑1}. By merging p_h with the current scene tokens and the previous state, the decoder updates the state to S_t and produces fused tokens (f_fused). Two decoupled heads then operate on distinct representations: a MANO head directly regresses pose (θ) and shape (β) from the original hand tokens f_h, preserving the fine‑grained articulation learned by the hand expert; a translation head predicts the absolute hand translation T from f_fused, leveraging the global context to resolve depth ambiguities. This separation ensures that hand mesh quality is not degraded by the global fusion process while still achieving accurate world‑space placement.

Training proceeds in two stages. Stage 1 focuses on robust hand pose learning using the DexYCB dataset; only the MANO head is fine‑tuned while the scene branches remain frozen, and losses are applied to root‑relative joint and vertex positions. Stage 2 fine‑tunes the entire fused network on the HOI4D dataset to learn precise global positioning; a combination of translation, absolute 3D joint, 2D reprojection, and scene reconstruction losses (weighted to avoid catastrophic forgetting) is used.

Evaluation on DexYCB shows that Hand3R attains competitive PA‑MPJPE and AUC scores, even under severe occlusion (75‑100% occlusion MPJPE ≈ 5 mm), matching or surpassing dedicated local hand regressors. On HOI4D, the method achieves substantially lower C‑MPJPE and W‑MPJPE than offline multi‑stage baselines such as HaWor and WiLoR‑SLAM, despite being a single‑stage online system (e.g., 42.6 mm C‑MPJPE on short videos). Qualitative results demonstrate accurate hand‑scene interaction modeling, collision handling, and consistent multi‑hand tracking within a unified world coordinate system.

In summary, Hand3R’s contributions are threefold: (1) the first online framework that simultaneously reconstructs hand meshes and dense scene geometry in a single forward pass, (2) a novel scene‑aware visual prompting mechanism that fuses high‑fidelity hand priors with spatially aligned scene context, and (3) a decoupled decoding design that preserves hand articulation detail while delivering metric‑scale global positioning. By eliminating the need for separate SLAM, depth estimation, and hand‑tracking modules, Hand3R offers a streamlined solution with real‑time capability, opening new possibilities for embodied AI, AR/VR, and human‑computer interaction applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment