Human-in-the-loop Adaptation in Group Activity Feature Learning for Team Sports Video Retrieval

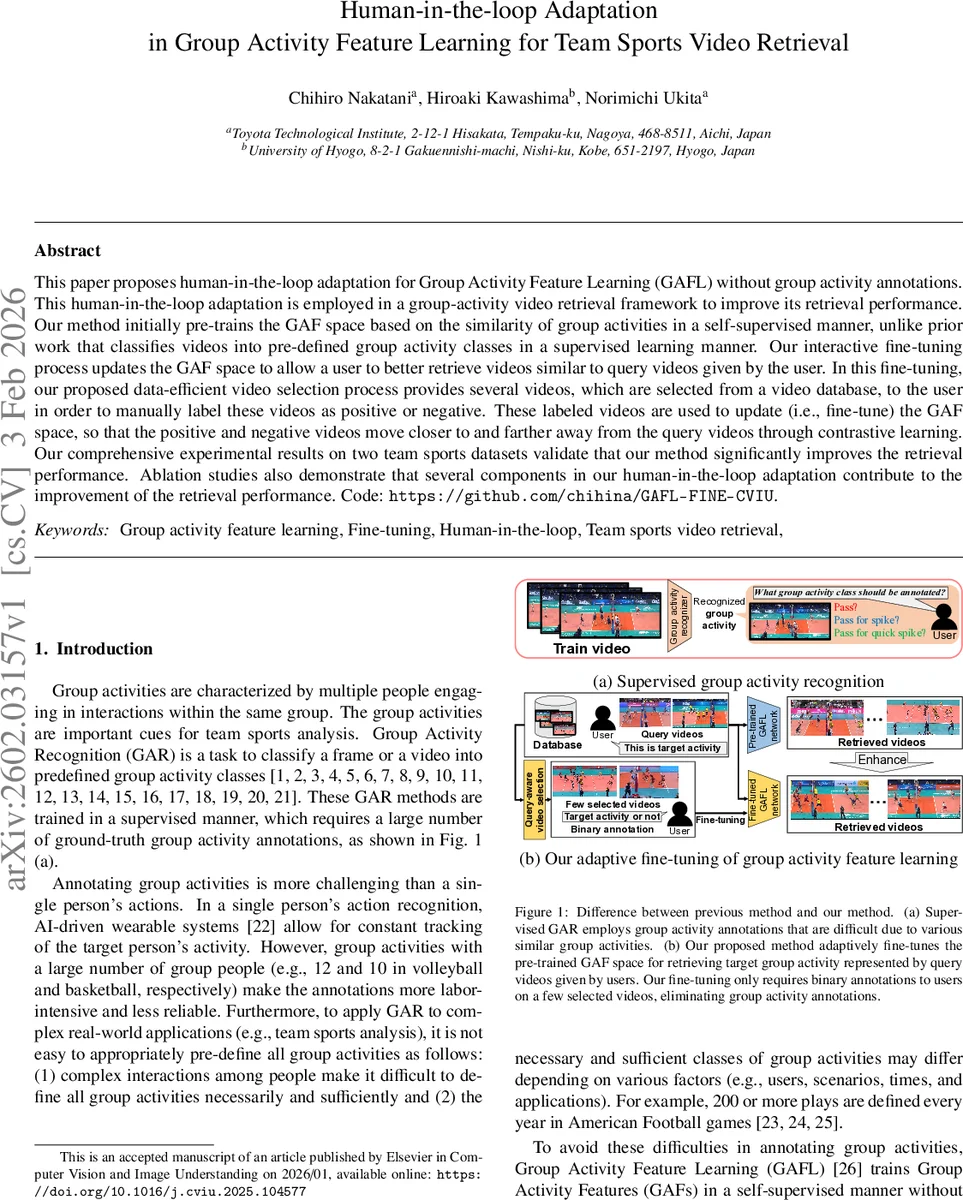

This paper proposes human-in-the-loop adaptation for Group Activity Feature Learning (GAFL) without group activity annotations. The adaptation is employed in a group-activity video retrieval framework to improve retrieval performance. Unlike prior work that classifies videos into pre-defined group activity classes through supervised learning, our method first pre-trains the Group Activity Feature (GAF) space in a self-supervised manner based on the similarity of group activities. An interactive fine-tuning process then updates the GAF space so that a user can better retrieve videos similar to the query videos the user provides. During fine-tuning, our data-efficient video selection process picks several videos from a video database and presents them to the user, who manually labels each as positive or negative. These labeled videos are used to fine-tune the GAF space through contrastive learning, so that positive videos move closer to the query videos and negative videos move farther away. Comprehensive experiments on two team sports datasets validate that our method significantly improves retrieval performance. Ablation studies also show that several components of our human-in-the-loop adaptation contribute to this improvement. Code: https://github.com/chihina/GAFL-FINE-CVIU.

💡 Research Summary

The paper tackles the problem of retrieving specific group‑activity clips from large collections of team‑sports videos without relying on costly, predefined activity annotations. It builds upon the Group Activity Feature Learning (GAFL) framework introduced by Nakatani et al., which learns a high‑level “Group Activity Feature” (GAF) in a self‑supervised manner by predicting masked person attributes from location cues. While GAFL produces useful representations, visualizations show that the learned space does not cleanly separate different activities, limiting its usefulness for fine‑grained retrieval.

To overcome this limitation, the authors propose a human‑in‑the‑loop (HITL) adaptation pipeline. First, a GAFL model is pre‑trained on a large, unannotated video set using the original self‑supervised objective. Then, at retrieval time, a user supplies a handful of query videos that exemplify the target activity (e.g., a successful spike in volleyball). The system selects a small set of candidate videos from the database for user feedback. Candidate selection is “query‑aware”: it combines (i) global similarity to the query (based on the full set of person features) and (ii) local dissimilarity obtained by masking subsets of people, ensuring that selected videos are similar overall but contain subtle differences that are informative for contrastive learning.
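The query-aware selection step can be illustrated with a small sketch. It is not the authors' implementation: the two-stage design (shortlist by global similarity, then rank by local dissimilarity), the mean pooling, the leave-one-person-out masking, and all function names are assumptions made here for illustration only.

```python
import math


def mean_pool(person_feats):
    """Average per-person feature vectors into one video-level vector."""
    n = len(person_feats)
    dim = len(person_feats[0])
    return [sum(p[d] for p in person_feats) / n for d in range(dim)]


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def global_similarity(query, cand):
    """Similarity computed from the full set of person features."""
    return cosine(mean_pool(query), mean_pool(cand))


def local_dissimilarity(query, cand):
    """Largest gap seen when one person is masked out of both videos.

    Assumption: people are listed in a comparable order in both videos.
    """
    n = min(len(query), len(cand))
    if n < 2:
        return 0.0  # nothing left to compare after masking
    gaps = []
    for i in range(n):
        q_masked = query[:i] + query[i + 1:]
        c_masked = cand[:i] + cand[i + 1:]
        gaps.append(1.0 - cosine(mean_pool(q_masked), mean_pool(c_masked)))
    return max(gaps)


def select_candidates(query, database, k, shortlist_size):
    """Return indices of k videos: globally similar, locally different."""
    by_global = sorted(
        range(len(database)),
        key=lambda i: global_similarity(query, database[i]),
        reverse=True,
    )
    shortlist = by_global[:shortlist_size]
    shortlist.sort(
        key=lambda i: local_dissimilarity(query, database[i]), reverse=True
    )
    return shortlist[:k]
```

Here each video is a list of per-person feature vectors. Shortlisting by global similarity first keeps clearly unrelated videos out of the labeling budget, and ranking by local dissimilarity then favors the near-misses that are most informative to label.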

The user simply labels each candidate as positive (contains the target activity) or negative (does not). These binary labels are then used to fine‑tune the GAF space with a contrastive loss that pulls query‑positive pairs together and pushes query‑negative pairs apart. To prevent catastrophic forgetting of the original self‑supervised knowledge, an L2 regularization term keeps the fine‑tuned parameters close to the pre‑trained ones. This combination yields a compact, data‑efficient adaptation that requires only a few labeled videos (typically five) while dramatically improving retrieval performance.
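A minimal sketch of such an objective follows, assuming a cosine-based margin loss and a squared-L2 penalty toward the pre-trained parameters. The exact loss form, the `margin` and `lam` values, and the flat parameter lists are illustrative assumptions; the summary does not specify the paper's formulation.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def contrastive_loss(query, positives, negatives, margin=0.5):
    """Pull positives toward the query, push negatives below a margin.

    margin is a hypothetical hyperparameter: negatives are penalized
    only while their similarity to the query exceeds it.
    """
    pull = sum(1.0 - cosine(query, p) for p in positives)
    push = sum(max(0.0, cosine(query, n) - margin) for n in negatives)
    count = len(positives) + len(negatives)
    return (pull + push) / count if count else 0.0


def l2_anchor(params, pretrained_params):
    """Squared L2 distance between current and pre-trained parameters."""
    return sum((a - b) ** 2 for a, b in zip(params, pretrained_params))


def total_loss(query, positives, negatives, params, pretrained_params,
               margin=0.5, lam=0.01):
    """Contrastive term plus a regularizer that limits parameter drift."""
    return (contrastive_loss(query, positives, negatives, margin)
            + lam * l2_anchor(params, pretrained_params))
```

In a real fine-tuning loop the feature vectors would be produced by the network being updated, and the loss would be minimized with an autograd framework; plain lists are used here only to keep the arithmetic visible.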

Experiments are conducted on two public team‑sports datasets: the Volleyball dataset (Ibrahim et al.) and a Basketball dataset. Retrieval metrics such as mean Average Precision (mAP) and Recall@K are reported. The HITL‑adapted model outperforms the vanilla GAFL by 12–18% mAP and shows especially strong gains on “hard” cases where the query and distractor videos are visually similar but belong to different activities. Ablation studies dissect the contributions of (a) query‑aware selection versus a generic core‑set approach, (b) the inclusion of local dissimilarity, and (c) the regularization term. Each component provides measurable benefits, confirming the design choices.
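For readers unfamiliar with the reported metrics, the sketch below shows one common way to compute them from ranked retrieval results. Each result list holds 1 for a relevant video and 0 otherwise, in ranked order. Two assumptions are made here: every relevant video appears somewhere in its ranked list, and Recall@K is the fraction of relevant videos found in the top K (some papers instead use a hit-or-miss variant). The paper's exact evaluation protocol may differ.

```python
def average_precision(ranked_relevance):
    """Average of precision values taken at each relevant rank."""
    hits = 0
    precision_sum = 0.0
    for rank, relevant in enumerate(ranked_relevance, start=1):
        if relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


def mean_average_precision(all_ranked_relevance):
    """Mean of per-query average precision."""
    if not all_ranked_relevance:
        return 0.0
    scores = [average_precision(r) for r in all_ranked_relevance]
    return sum(scores) / len(scores)


def recall_at_k(ranked_relevance, k):
    """Fraction of all relevant items that appear in the top k."""
    total_relevant = sum(1 for r in ranked_relevance if r)
    if total_relevant == 0:
        return 0.0
    found = sum(1 for r in ranked_relevance[:k] if r)
    return found / total_relevant
```

For example, a ranking `[1, 0, 1, 0]` has precision 1/1 at the first hit and 2/3 at the second, so its average precision is (1 + 2/3) / 2, and its Recall@2 is 1/2 because only one of the two relevant videos is in the top two.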

Key contributions are: (1) demonstrating that a self‑supervised group‑activity representation can be specialized to arbitrary user‑defined activities with minimal binary feedback, (2) introducing a novel query‑aware video selection strategy tailored to multi‑person activity retrieval, and (3) integrating contrastive learning with regularization to achieve stable fine‑tuning. Limitations include dependence on the representativeness of the initial query videos and the current focus on single‑user, single‑activity scenarios. Future work is suggested on automatic query generation from metadata, collaborative multi‑user labeling, and online adaptation for streaming video.

In summary, the paper provides a practical, low‑cost solution for team‑sports analysts who need to locate specific tactical patterns without exhaustive annotation, advancing the state of the art in activity‑based video retrieval.
