Self-Hinting Language Models Enhance Reinforcement Learning

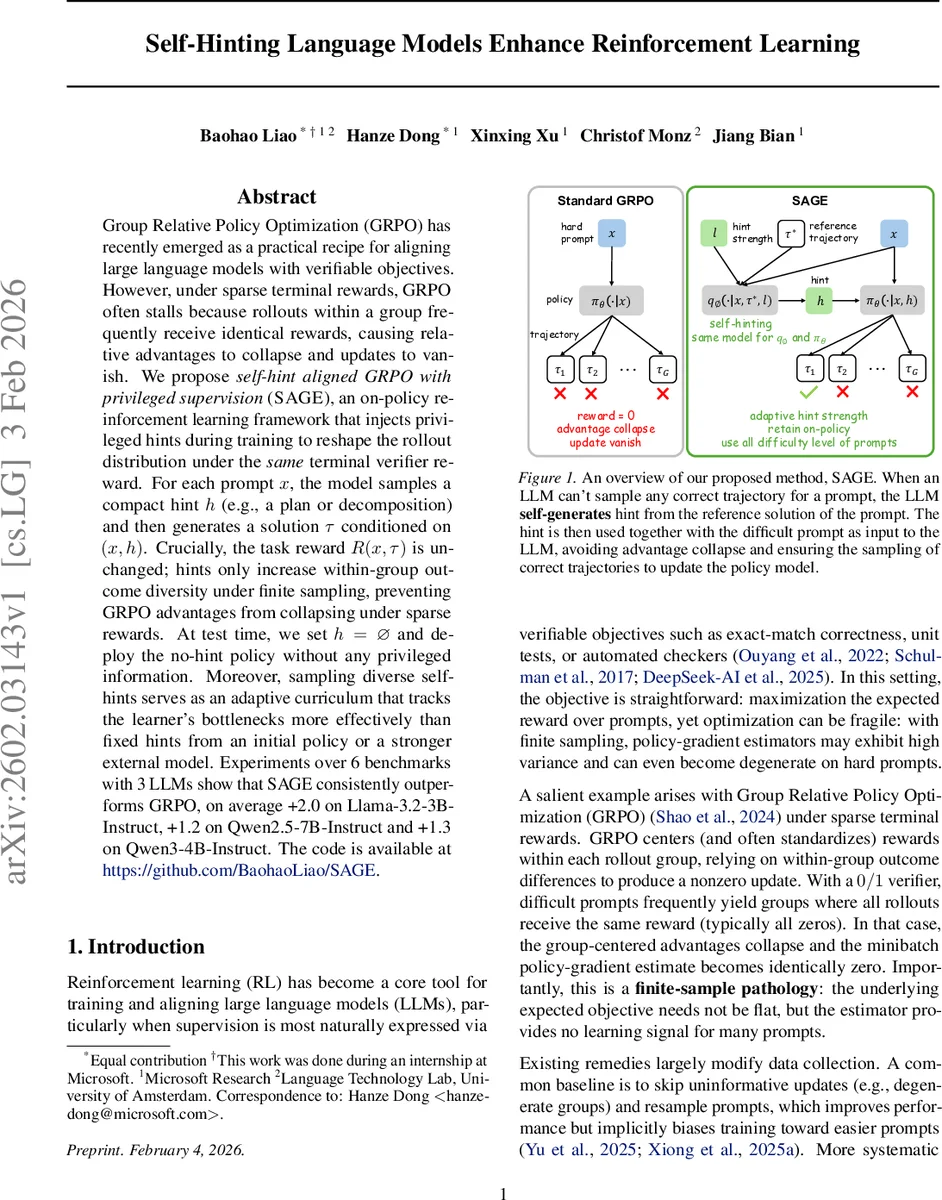

Group Relative Policy Optimization (GRPO) has recently emerged as a practical recipe for aligning large language models with verifiable objectives. However, under sparse terminal rewards, GRPO often stalls because rollouts within a group frequently receive identical rewards, causing relative advantages to collapse and updates to vanish. We propose self-hint aligned GRPO with privileged supervision (SAGE), an on-policy reinforcement learning framework that injects privileged hints during training to reshape the rollout distribution under the same terminal verifier reward. For each prompt $x$, the model samples a compact hint $h$ (e.g., a plan or decomposition) and then generates a solution $τ$ conditioned on $(x,h)$. Crucially, the task reward $R(x,τ)$ is unchanged; hints only increase within-group outcome diversity under finite sampling, preventing GRPO advantages from collapsing under sparse rewards. At test time, we set $h=\varnothing$ and deploy the no-hint policy without any privileged information. Moreover, sampling diverse self-hints serves as an adaptive curriculum that tracks the learner’s bottlenecks more effectively than fixed hints from an initial policy or a stronger external model. Experiments over 6 benchmarks with 3 LLMs show that SAGE consistently outperforms GRPO, on average +2.0 on Llama-3.2-3B-Instruct, +1.2 on Qwen2.5-7B-Instruct and +1.3 on Qwen3-4B-Instruct. The code is available at https://github.com/BaohaoLiao/SAGE.

💡 Research Summary

The paper addresses a critical failure mode of Group Relative Policy Optimization (GRPO) when applied to large language models (LLMs) under sparse terminal rewards. In GRPO, a batch of G rollouts for each prompt is centered and standardized using the group’s mean and variance, and the resulting advantages drive policy updates. With binary (0/1) rewards, especially on hard prompts, most groups consist entirely of failures, causing the variance to be zero and the standardized advantages to collapse. Consequently, the gradient estimate becomes zero and learning stalls. Existing mitigations—such as skipping degenerate groups, resampling prompts, or allocating more rollouts to difficult examples—either bias the training distribution or require external models, and they do not fundamentally solve the collapse problem.

To overcome this, the authors propose SAGE (Self‑Hint Aligned GRPO with Privileged Supervision). The key idea is to inject privileged hints h during training while keeping the external task reward unchanged. For each prompt x, a compact hint (e.g., a plan, decomposition, or partial solution) is sampled from a hint generator qϕ(h | x, τ⋆, ℓ), where τ⋆ is a reference solution and ℓ∈{0,…,L} denotes hint strength. The policy is then conditioned on both the prompt and the hint, πθ(· | x, h), and rollouts are drawn from this conditioned distribution. Because the hint increases the probability of generating a successful trajectory, the within‑group reward variance becomes non‑zero, preventing the advantage collapse. Crucially, at test time ℓ is set to 0, h is empty, and the model reverts to the original no‑hint policy, so deployment incurs no extra information or overhead.

SAGE introduces a policy‑dependent scheduler that activates hints only when a prompt’s recent rollouts show zero variance (i.e., a “collapse indicator”). This creates an automatic curriculum: easy prompts receive no hint, while hard prompts receive increasingly informative hints until the group’s reward variance reappears. The scheduler can be a simple epoch‑level accuracy threshold (SAGE‑LIGHT) or a more fine‑grained per‑prompt rule.

The authors provide a theoretical analysis showing that GRPO’s update magnitude can be expressed as a gate‑opening probability u(p)=1−(1−p)^G−p^G, where p is the success probability under the current hint configuration. u(p) is maximized at p=½ and is approximately G·p when p≪1. Therefore, hints should be calibrated to move p toward ½; too weak hints leave p≈0 (gate closed), while overly strong hints push p≈1 (gate closes again). They prove that the optimal hint distribution is policy‑dependent: the hint generator must adapt as the policy evolves to keep p≈½. Moreover, because u(p) is concave, sampling many different hints per prompt would actually reduce the expected gate‑opening probability, justifying the design choice of a single hint per prompt per epoch.

Experimentally, SAGE is evaluated on six benchmark suites covering reasoning, code generation, and mathematics, using three LLMs: Llama‑3.2‑3B‑Instruct, Qwen2.5‑7B‑Instruct, and Qwen3‑4B‑Instruct. Group sizes range from 8 to 32 rollouts, and hint strengths ℓ=2 are found to be most effective. Results show consistent improvements over vanilla GRPO: +2.0 points on Llama‑3.2‑3B‑Instruct, +1.2 on Qwen2.5‑7B‑Instruct, and +1.3 on Qwen3‑4B‑Instruct. The gains are especially pronounced on prompts that would otherwise never see a successful rollout under sparse rewards; SAGE dramatically reduces the fraction of “never‑sampled” prompts.

Ablation studies confirm several design choices: (1) conditioning the log‑probability on the hint is essential; dropping the hint from the loss makes the update off‑policy and unstable. (2) Using hints indiscriminately (without the scheduler) can over‑strengthen the policy, pushing p close to 1 and re‑closing the gate. (3) Online self‑hinting—periodically refreshing the hint generator from the current policy—outperforms fixed hints extracted once at the start, and even matches or exceeds hints generated by a stronger external teacher model.

In summary, SAGE offers a principled, on‑policy method to alleviate GRPO’s collapse under sparse rewards by leveraging self‑generated privileged hints. It preserves the simplicity of GRPO, requires no extra supervision at deployment, and automatically adapts hint strength through a lightweight scheduler. The work opens avenues for extending privileged hinting to continuous rewards, multi‑objective settings, and larger-scale LLMs.

Comments & Academic Discussion

Loading comments...

Leave a Comment