De-conflating Preference and Qualification: Constrained Dual-Perspective Reasoning for Job Recommendation with Large Language Models

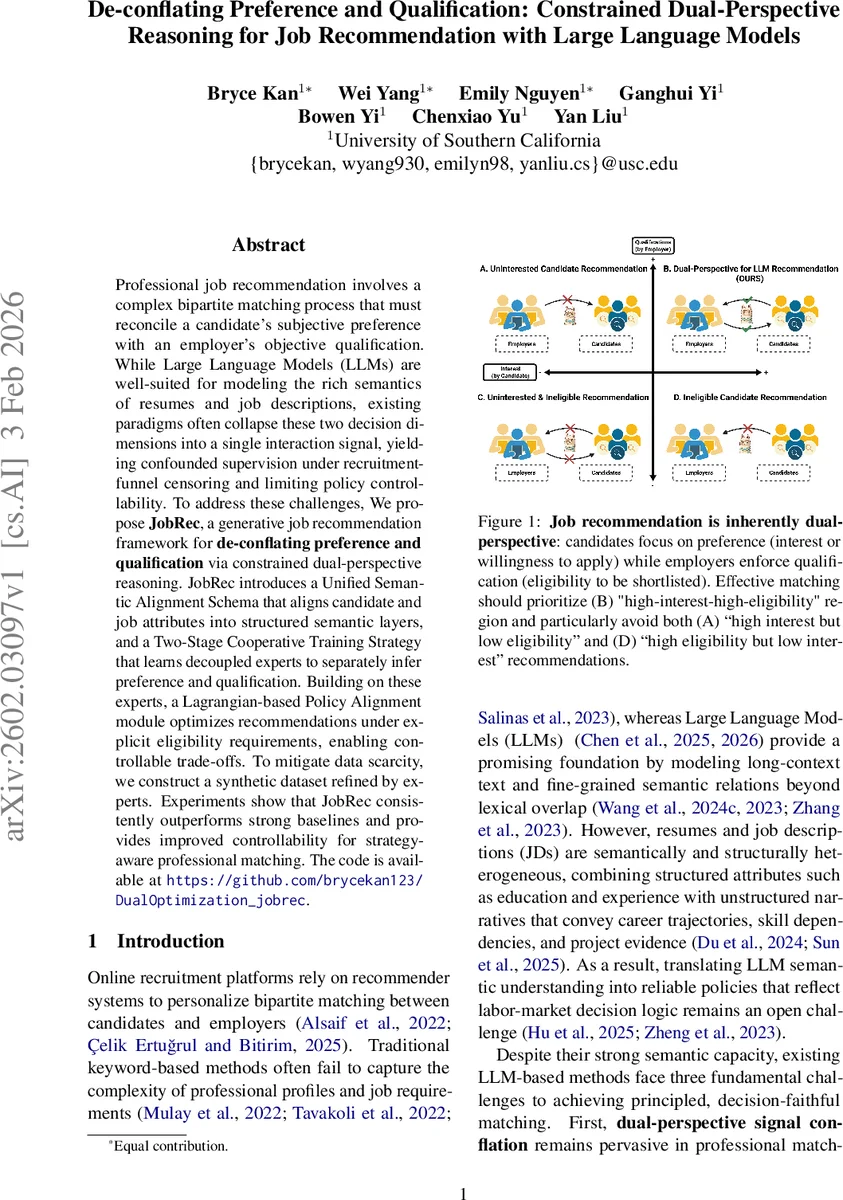

Professional job recommendation involves a complex bipartite matching process that must reconcile a candidate’s subjective preference with an employer’s objective qualification. While Large Language Models (LLMs) are well-suited for modeling the rich semantics of resumes and job descriptions, existing paradigms often collapse these two decision dimensions into a single interaction signal, yielding confounded supervision under recruitment-funnel censoring and limiting policy controllability. To address these challenges, We propose JobRec, a generative job recommendation framework for de-conflating preference and qualification via constrained dual-perspective reasoning. JobRec introduces a Unified Semantic Alignment Schema that aligns candidate and job attributes into structured semantic layers, and a Two-Stage Cooperative Training Strategy that learns decoupled experts to separately infer preference and qualification. Building on these experts, a Lagrangian-based Policy Alignment module optimizes recommendations under explicit eligibility requirements, enabling controllable trade-offs. To mitigate data scarcity, we construct a synthetic dataset refined by experts. Experiments show that JobRec consistently outperforms strong baselines and provides improved controllability for strategy-aware professional matching.

💡 Research Summary

The paper tackles the fundamental tension in online recruitment between a candidate’s subjective interest in a job (preference) and an employer’s objective eligibility criteria (qualification). Existing approaches typically collapse these two dimensions into a single interaction label such as “match” or “hire,” which leads to confounded supervision, especially under recruitment‑funnel censoring where negative outcomes are ambiguous. To resolve this, the authors introduce JobRec, a generative job‑recommendation framework that explicitly de‑conflates preference and qualification and aligns them through a constrained optimization layer.

The first technical contribution is the Unified Semantic Alignment Schema (USAS), a hierarchical representation that symmetrically organizes both candidate profiles and job descriptions into four layers: (1) basic metadata, (2) competency & qualification, (3) operational constraint & commitment, and (4) deep semantic & experience. This schema allows a large language model (LLM) to ingest structured fields (e.g., education, GPA, required majors) together with unstructured narratives (project descriptions, persona embeddings) in a consistent, side‑by‑side fashion.

Building on USAS, the problem is decomposed into two independent prediction tasks. The preference module produces a score s_pref(u,i) that estimates the probability a candidate would apply to a job, driven mainly by semantic alignment and practical constraints. The qualification module yields s_qual(u,i) that estimates the probability the candidate meets the employer’s screening thresholds, primarily based on eligibility‑related attributes. By modeling these scores separately, the framework avoids the diagnostic ambiguity of a single “success” label.

The core of the recommendation engine is a Lagrangian‑based joint optimization. For each candidate the objective is to maximize s_pref subject to a minimum eligibility constraint s_qual ≥ ε. Introducing a non‑negative multiplier λ, the Lagrangian L = s_pref + λ·(s_qual − ε) is optimized in a saddle‑point fashion (min λ ≥ 0 max θ L). At inference time the final ranking score is s_final = s_pref + λ*·(s_qual − ε), where λ* is the converged multiplier. Adjusting ε or λ provides a controllable trade‑off between candidate‑centric and employer‑centric policies, effectively suppressing high‑interest‑low‑eligibility or high‑eligibility‑low‑interest recommendations.

Training proceeds in two stages. Stage I jointly pre‑trains a shared transformer encoder with two task‑specific heads, using adaptive loss weighting to balance the dense preference signal against the sparser qualification signal. Stage II freezes the encoder and fine‑tunes only the policy layer and the dual variable λ via projected gradient ascent, aligning the ranking policy with explicit eligibility requirements.

Because labeled dual‑perspective data are scarce, the authors construct a synthetic dataset using an expert‑in‑the‑loop pipeline. USAS guides the generation of coherent candidate–job pairs; a rule‑based scorer provides initial (Y_pref, Y_qual) labels, which domain experts then review and correct. This yields high‑quality supervision for both modules and serves as a benchmark.

Extensive experiments on real‑world recruitment logs demonstrate that JobRec consistently outperforms strong baselines (keyword‑based matching, single‑objective LLM recommenders, prior multi‑task models) in Top‑K accuracy, NDCG, and controllability metrics. Moreover, varying λ and ε smoothly shifts the system between preference‑driven and qualification‑driven regimes without sacrificing performance. The paper releases code and the refined synthetic dataset, facilitating reproducibility and practical adoption. In sum, JobRec presents a principled, LLM‑powered solution that disentangles preference from qualification, embeds explicit eligibility constraints via Lagrangian optimization, and offers flexible, controllable job recommendation for professional matching.

Comments & Academic Discussion

Loading comments...

Leave a Comment