VOILA: Value-of-Information Guided Fidelity Selection for Cost-Aware Multimodal Question Answering

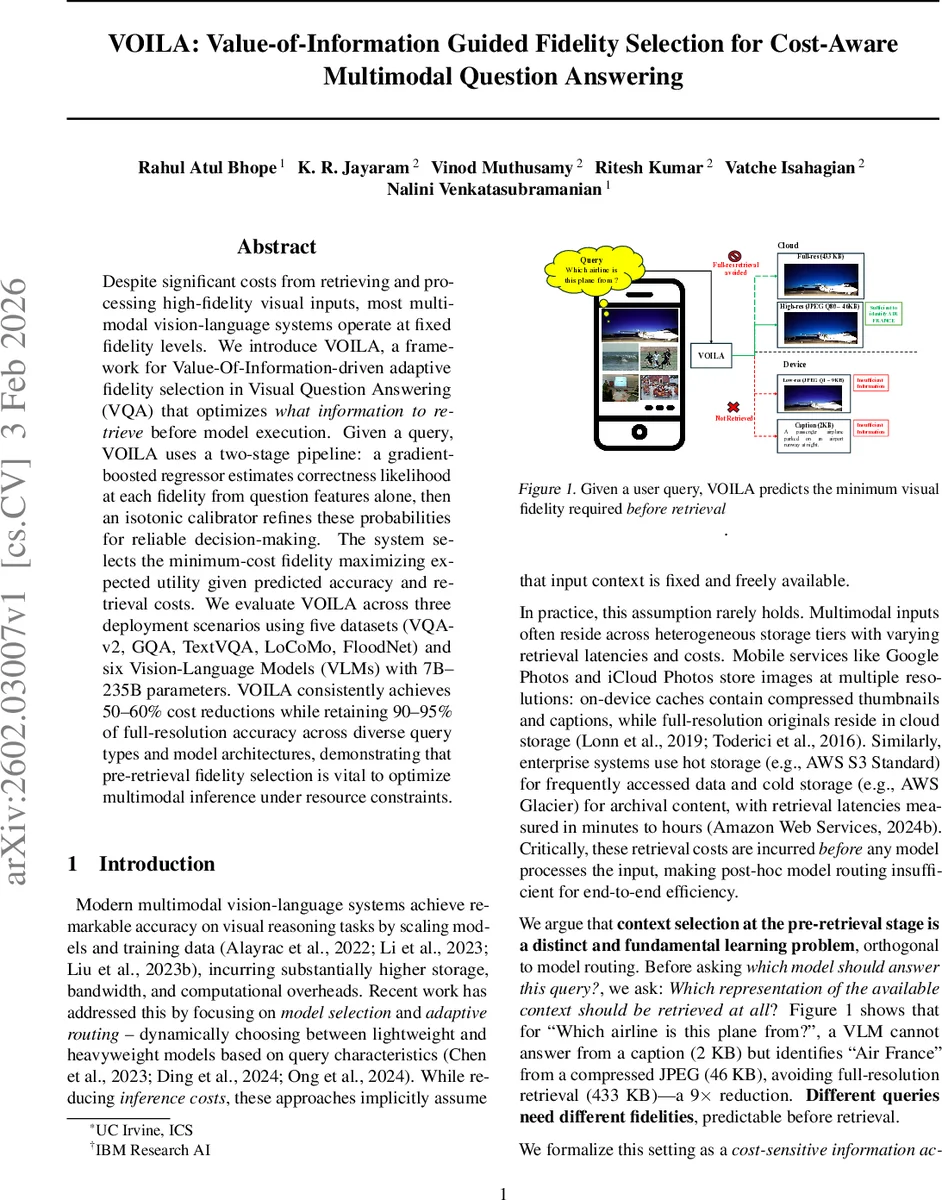

Despite significant costs from retrieving and processing high-fidelity visual inputs, most multimodal vision-language systems operate at fixed fidelity levels. We introduce VOILA, a framework for Value-Of-Information-driven adaptive fidelity selection in Visual Question Answering (VQA) that optimizes what information to retrieve before model execution. Given a query, VOILA uses a two-stage pipeline: a gradient-boosted regressor estimates correctness likelihood at each fidelity from question features alone, then an isotonic calibrator refines these probabilities for reliable decision-making. The system selects the minimum-cost fidelity maximizing expected utility given predicted accuracy and retrieval costs. We evaluate VOILA across three deployment scenarios using five datasets (VQA-v2, GQA, TextVQA, LoCoMo, FloodNet) and six Vision-Language Models (VLMs) with 7B-235B parameters. VOILA consistently achieves 50-60% cost reductions while retaining 90-95% of full-resolution accuracy across diverse query types and model architectures, demonstrating that pre-retrieval fidelity selection is vital to optimize multimodal inference under resource constraints.

💡 Research Summary

The paper tackles a previously under‑explored problem in multimodal vision‑language systems: deciding what visual information to retrieve before running a model, rather than merely how to compute the answer. Modern VQA pipelines typically fetch the highest‑resolution image regardless of the question, incurring substantial storage, bandwidth, and compute costs, especially in edge‑cloud, agentic memory, and mission‑critical cyber‑physical settings where latency and cost constraints dominate. The authors propose VOILA (Value‑Of‑Information Guided Fidelity Selection), a lightweight, two‑stage framework that predicts, from the question alone, the probability that a given visual fidelity (e.g., caption, thumbnail, low‑resolution JPEG, full‑resolution image) will lead to a correct answer.

Technical pipeline

-

Question‑conditioned success scoring – For each fidelity f in a predefined ordered set F, a Gradient‑Boosted Regressor (GBR) is trained on features extracted from the question text (TF‑IDF, length, presence of numbers, coarse type such as counting, color, spatial). The regressor outputs a raw score r_f(q) that correlates with the likelihood of a correct answer when the VLM is run on fidelity f. Training data are generated by actually executing the target VLM on all fidelities for a large labeled set and recording binary correctness y_f.

-

Fidelity‑specific probability calibration – Raw scores are not calibrated probabilities, so isotonic regression is applied per fidelity to learn a monotonic mapping ψ_f from r_f(q) to a calibrated probability \hat p_f(q) ≈ Pr(correct | q, f). Isotonic regression is non‑parametric, preserves the rank ordering, and provides strong convergence guarantees, ensuring that higher scores always map to higher probabilities.

-

Value‑of‑Information (VOI) based selection – With calibrated probabilities, the system computes the expected accuracy gain of moving from a lower‑cost fidelity f_i to a higher‑cost fidelity f_j: VOI(f_j | f_i) =

Comments & Academic Discussion

Loading comments...

Leave a Comment