RPL: Learning Robust Humanoid Perceptive Locomotion on Challenging Terrains

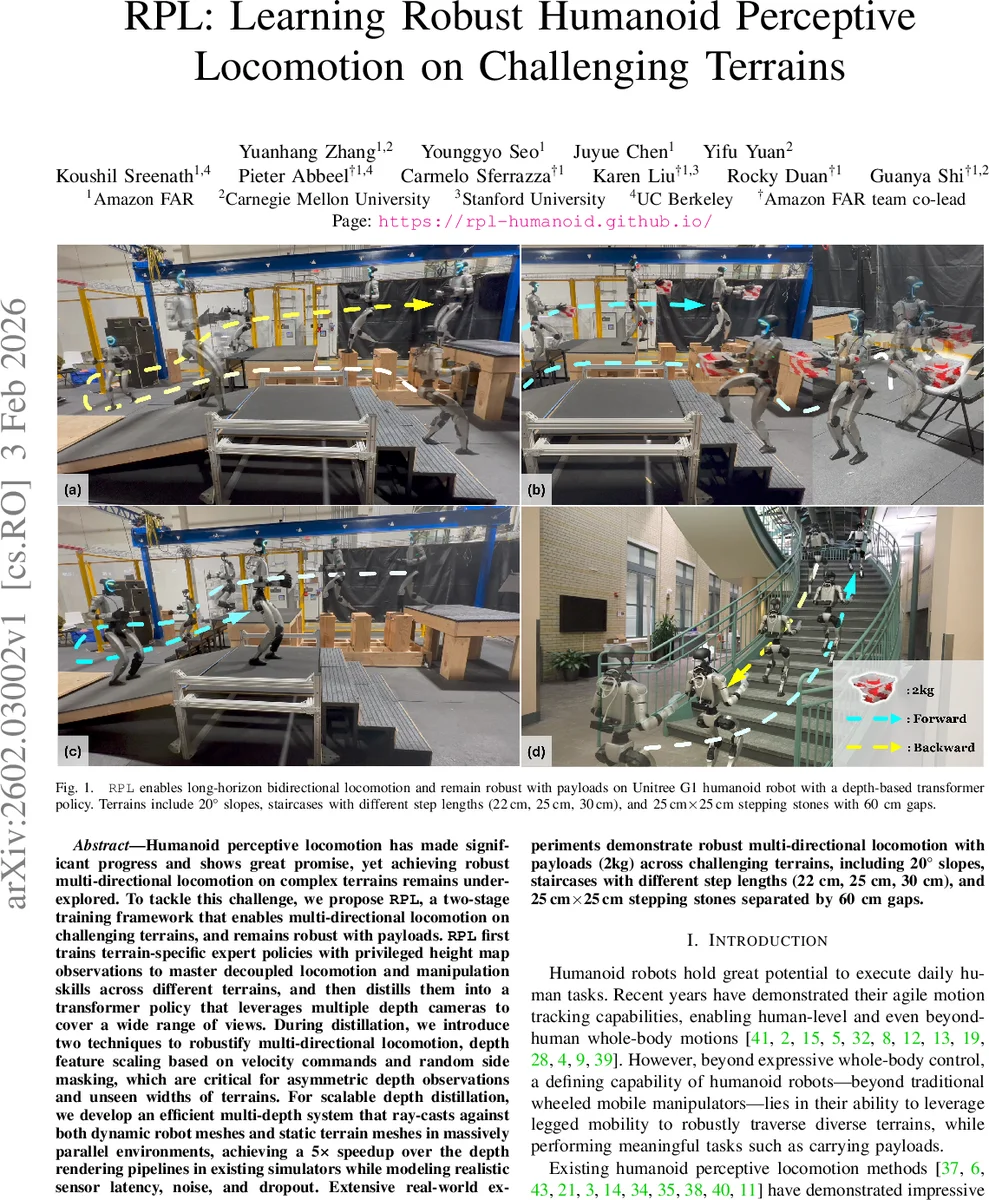

Humanoid perceptive locomotion has made significant progress and shows great promise, yet achieving robust multi-directional locomotion on complex terrains remains underexplored. To tackle this challenge, we propose RPL, a two-stage training framework that enables multi-directional locomotion on challenging terrains, and remains robust with payloads. RPL first trains terrain-specific expert policies with privileged height map observations to master decoupled locomotion and manipulation skills across different terrains, and then distills them into a transformer policy that leverages multiple depth cameras to cover a wide range of views. During distillation, we introduce two techniques to robustify multi-directional locomotion, depth feature scaling based on velocity commands and random side masking, which are critical for asymmetric depth observations and unseen widths of terrains. For scalable depth distillation, we develop an efficient multi-depth system that ray-casts against both dynamic robot meshes and static terrain meshes in massively parallel environments, achieving a 5-times speedup over the depth rendering pipelines in existing simulators while modeling realistic sensor latency, noise, and dropout. Extensive real-world experiments demonstrate robust multi-directional locomotion with payloads (2kg) across challenging terrains, including 20° slopes, staircases with different step lengths (22 cm, 25 cm, 30 cm), and 25 cm by 25 cm stepping stones separated by 60 cm gaps.

💡 Research Summary

RPL (Robust Perceptive Locomotion) introduces a two‑stage learning pipeline that enables a humanoid robot to traverse complex, uneven terrains in both forward and backward directions while carrying a payload. In the first stage, terrain‑specific expert policies are trained using privileged height‑map observations. These experts learn decoupled locomotion and manipulation skills under a force‑curriculum that applies vertical forces to the end‑effector, thereby preparing the robot for payload disturbances. The experts are trained with PPO, asymmetric actor‑critic, and symmetry data augmentation, and receive specialized rewards such as foot‑edge penalty, dense foothold penalty, and torso‑orientation tracking to ensure precise foot placement and stable upper‑body posture.

The second stage distills the collection of expert policies into a single visual policy that consumes multi‑view depth images from front and rear cameras. Distillation follows a DAgger‑style regression loss, where the student policy receives noisy proprioceptive data and depth observations, while the teachers operate on clean proprioception and height maps. Two novel augmentation techniques are introduced to handle the asymmetric and variable nature of multi‑camera inputs: (1) Depth Feature Scaling based on Velocity commands (DFSV) dynamically rescales depth features according to the commanded linear and angular velocities, mitigating distribution shift when the robot changes direction; (2) Random Side Masking (RSM) randomly occludes lateral portions of the depth images with varying widths during training, improving robustness to unseen terrain widths.

A key engineering contribution is an efficient GPU‑based multi‑depth rendering system built on NVIDIA Warp. The system performs massive parallel ray‑casting against both dynamic robot meshes and a shared static terrain mesh. By keeping robot body meshes in their local frames and transforming rays into those frames, the method avoids costly per‑frame mesh refitting. All ray‑mesh queries are fused into a single kernel and executed across environments, cameras, and pixels, achieving a five‑fold speedup over conventional simulators. The renderer also injects realistic sensor latency, Gaussian noise, and dropout to narrow the sim‑to‑real gap.

Real‑world experiments were conducted on a Unitree G1 humanoid equipped with a 2 kg payload. The robot successfully performed long‑horizon bidirectional locomotion on 20° slopes, staircases with step lengths of 22 cm, 25 cm, and 30 cm, and on a field of 25 cm × 25 cm stepping stones separated by 60 cm gaps. Importantly, the policy generalized to terrain widths and step dimensions not seen during training, demonstrating the effectiveness of DFSV and RSM.

In summary, RPL’s contributions are: (1) a two‑stage framework that leverages high‑fidelity height‑map experts to generate robust visual policies; (2) depth feature scaling and random side masking to handle asymmetric multi‑view perception and unseen terrain geometries; (3) a fast, parallel multi‑depth simulation pipeline that models dynamic self‑occlusions and realistic sensor artifacts; and (4) extensive real‑world validation showing stable, payload‑carrying locomotion across diverse, challenging terrains. This work advances the practical deployment of humanoid robots in unstructured environments by tightly integrating perception, control, and simulation efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment