CPMobius: Iterative Coach-Player Reasoning for Data-Free Reinforcement Learning

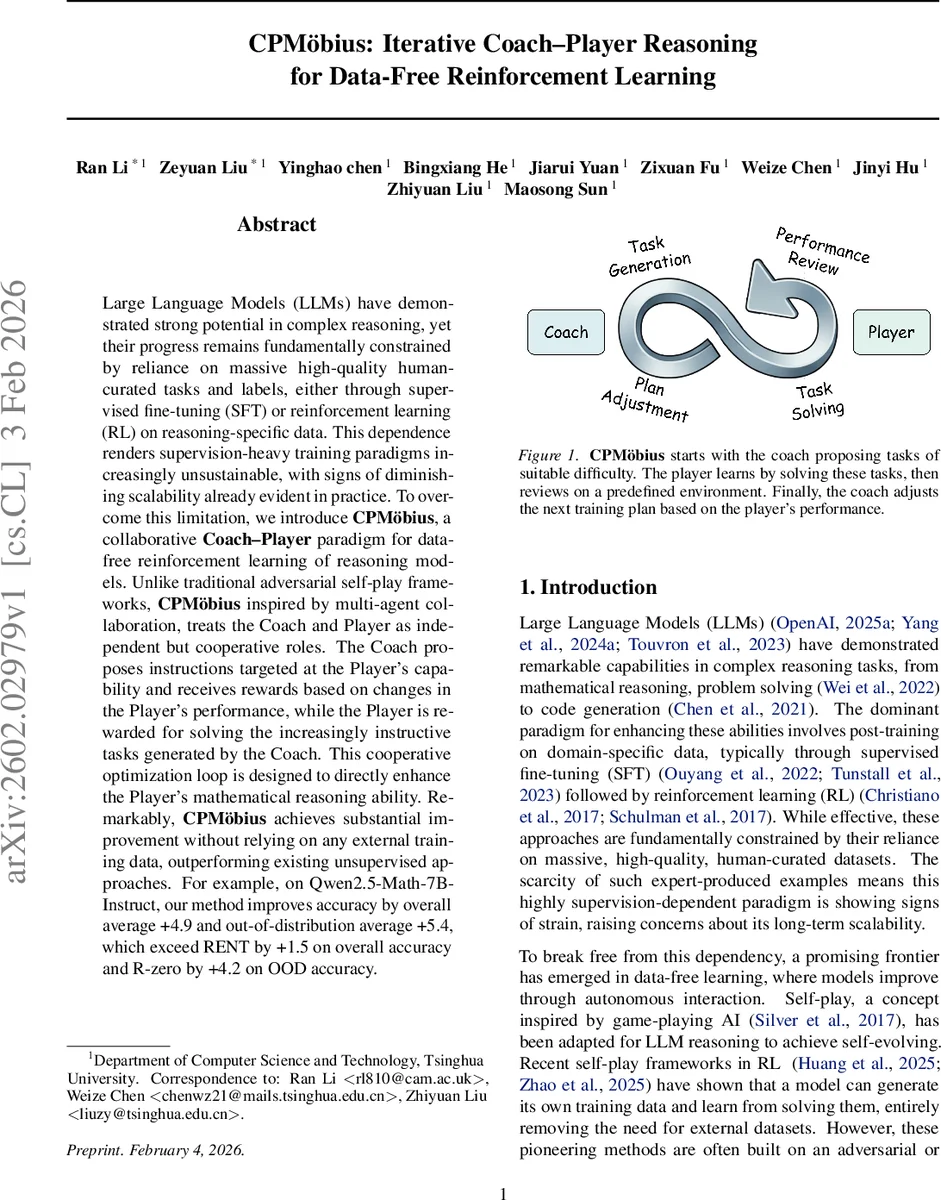

Large Language Models (LLMs) have demonstrated strong potential in complex reasoning, yet their progress remains fundamentally constrained by reliance on massive high-quality human-curated tasks and labels, either through supervised fine-tuning (SFT) or reinforcement learning (RL) on reasoning-specific data. This dependence renders supervision-heavy training paradigms increasingly unsustainable, with signs of diminishing scalability already evident in practice. To overcome this limitation, we introduce CPMöbius (CPMobius), a collaborative Coach-Player paradigm for data-free reinforcement learning of reasoning models. Unlike traditional adversarial self-play, CPMöbius, inspired by real world human sports collaboration and multi-agent collaboration, treats the Coach and Player as independent but cooperative roles. The Coach proposes instructions targeted at the Player’s capability and receives rewards based on changes in the Player’s performance, while the Player is rewarded for solving the increasingly instructive tasks generated by the Coach. This cooperative optimization loop is designed to directly enhance the Player’s mathematical reasoning ability. Remarkably, CPMöbius achieves substantial improvement without relying on any external training data, outperforming existing unsupervised approaches. For example, on Qwen2.5-Math-7B-Instruct, our method improves accuracy by an overall average of +4.9 and an out-of-distribution average of +5.4, exceeding RENT by +1.5 on overall accuracy and R-zero by +4.2 on OOD accuracy.

💡 Research Summary

CPMobius introduces a cooperative Coach‑Player paradigm for data‑free reinforcement learning (RL) of large language models (LLMs) focused on mathematical reasoning. Unlike prior self‑play approaches that pit two agents against each other, CPMobius treats the Coach and the Player as independent but collaborative partners. The Coach acts as a curriculum designer: at each iteration it samples a batch of m problem instructions x_i from its policy π_C θ. The Player, governed by policy π_P ϕ, attempts each instruction n times, producing answers y_{i,j}. A majority‑vote yields a pseudo‑label y*i, and each answer receives a verifiable binary reward r{i,j}=𝟙

Comments & Academic Discussion

Loading comments...

Leave a Comment