Aligning Forest and Trees in Images and Long Captions for Visually Grounded Understanding

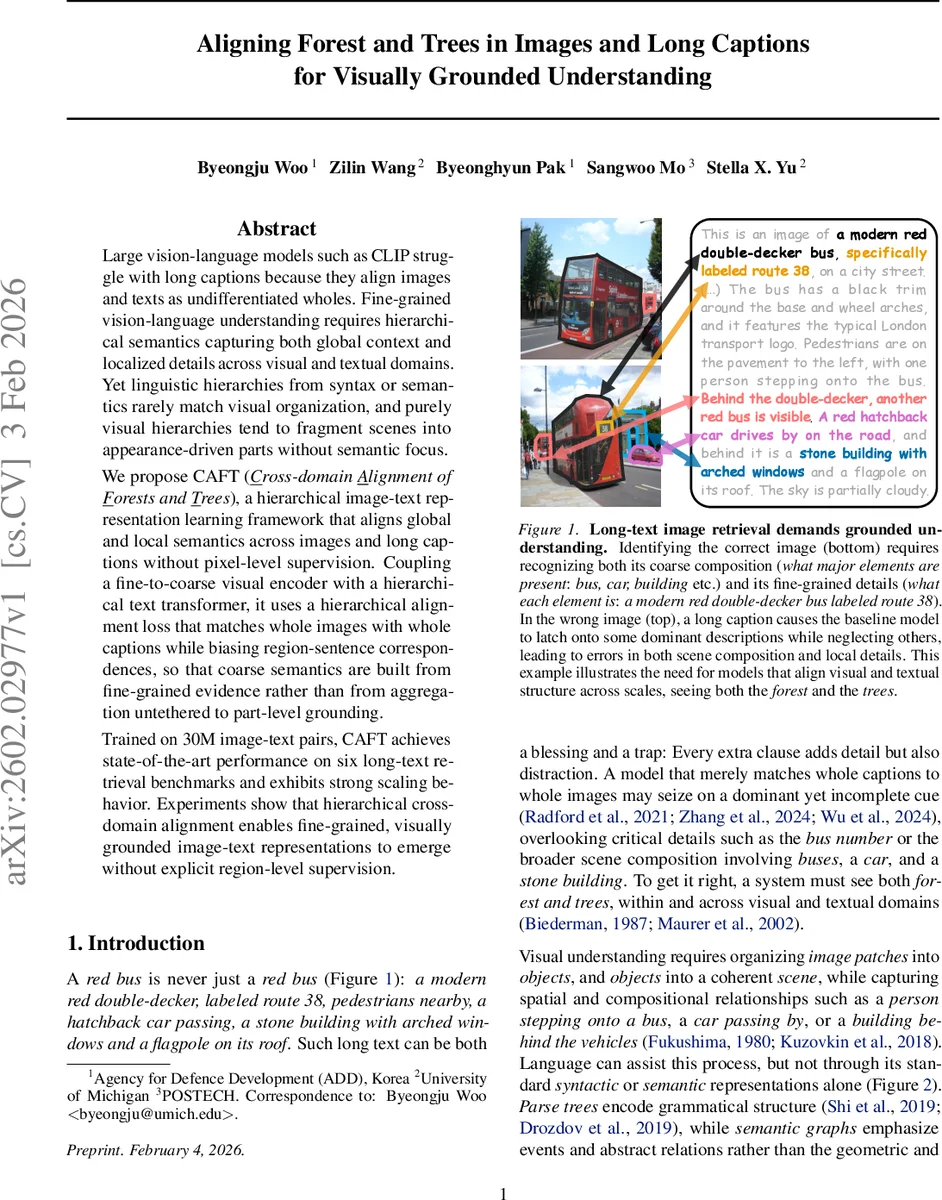

Large vision-language models such as CLIP struggle with long captions because they align images and texts as undifferentiated wholes. Fine-grained vision-language understanding requires hierarchical semantics capturing both global context and localized details across visual and textual domains. Yet linguistic hierarchies from syntax or semantics rarely match visual organization, and purely visual hierarchies tend to fragment scenes into appearance-driven parts without semantic focus. We propose CAFT (Cross-domain Alignment of Forests and Trees), a hierarchical image-text representation learning framework that aligns global and local semantics across images and long captions without pixel-level supervision. Coupling a fine-to-coarse visual encoder with a hierarchical text transformer, it uses a hierarchical alignment loss that matches whole images with whole captions while biasing region-sentence correspondences, so that coarse semantics are built from fine-grained evidence rather than from aggregation untethered to part-level grounding. Trained on 30M image-text pairs, CAFT achieves state-of-the-art performance on six long-text retrieval benchmarks and exhibits strong scaling behavior. Experiments show that hierarchical cross-domain alignment enables fine-grained, visually grounded image-text representations to emerge without explicit region-level supervision.

💡 Research Summary

The paper introduces CAFT (Cross‑domain Alignment of Forests and Trees), a hierarchical representation learning framework designed to overcome the limitations of current vision‑language models (e.g., CLIP) when dealing with long textual descriptions. Traditional models compress an image and its caption into single vectors, which works for short captions but fails to capture the myriad of fine‑grained details present in lengthy, richly structured sentences. Moreover, linguistic hierarchies such as syntactic parse trees or semantic graphs rarely align with the spatial organization of visual scenes, while purely visual hierarchies tend to fragment scenes into appearance‑driven parts without semantic coherence.

Key ideas

- Spatially structured long captions – The authors observe that many long captions naturally decompose into sentences, each describing a distinct region or object. They treat each sentence (or a small group of consecutive sentences) as a “sub‑caption,” forming a textual hierarchy that mirrors the part‑whole structure of the image.

- Coherent within‑domain hierarchies – On the visual side, a fine‑to‑coarse encoder starts from 196 super‑pixel tokens and progressively pools them (via graph‑pooling similar to CAST) into 64, 32, and finally 16 segment tokens. The mid‑level tokens (v_fine) retain localized object information, while the final CLS token (v_coarse) encodes the whole scene. On the language side, a hierarchical transformer first encodes each sub‑caption independently (Sub‑caption Transformer) and then aggregates the resulting embeddings with a Whole‑caption Transformer, producing a global caption embedding (t_whole). Residual MLP adapters refine sub‑caption embeddings before aggregation.

- Hierarchical cross‑domain alignment loss – The training objective combines two contrastive losses: (a) a global image‑caption loss aligning v_coarse with t_whole, and (b) a part‑level loss aligning v_fine with each t_sub. A weighting parameter λ balances the two terms, encouraging the model to construct global semantics from grounded fine‑grained evidence rather than from undifferentiated aggregation.

Training regime – CAFT is pretrained on 30 million image‑text pairs, where long captions are either harvested from the web or synthetically generated by large language models. No pixel‑level annotations or explicit region‑sentence correspondences are provided; the model discovers visual regions solely through language supervision.

Empirical results – The method is evaluated on six long‑text image‑retrieval benchmarks. CAFT consistently outperforms CLIP‑based extensions (LoTLIP, FLAIR, etc.) in Recall@1/5, especially on queries where subtle details (e.g., bus route numbers, specific object attributes) are crucial. Attention visualizations show that each sub‑caption attends to the appropriate image region, confirming emergent grounding. Moreover, the mid‑level visual tokens serve as unsupervised segmentation masks. In zero‑shot referring segmentation, CAFT surpasses GroupViT and MaskCLIP, demonstrating that meaningful region parsing can be learned without any segmentation labels.

Contributions

- Identification and exploitation of a spatial hierarchy inherent in long captions.

- Design of matched part‑whole hierarchies for both vision and language, ensuring that global representations are built from fine‑grained, grounded components.

- Introduction of a dual‑level contrastive alignment objective that jointly learns whole‑whole and part‑part correspondences without explicit supervision.

Impact – By aligning “forests” (global scene semantics) and “trees” (local details) across modalities, CAFT provides a new paradigm for visually grounded language understanding. The approach opens avenues for more complex tasks such as video‑text alignment, multimodal reasoning, and large‑scale retrieval where long, descriptive texts are the norm.

Comments & Academic Discussion

Loading comments...

Leave a Comment