Generative Engine Optimization: A VLM and Agent Framework for Pinterest Acquisition Growth

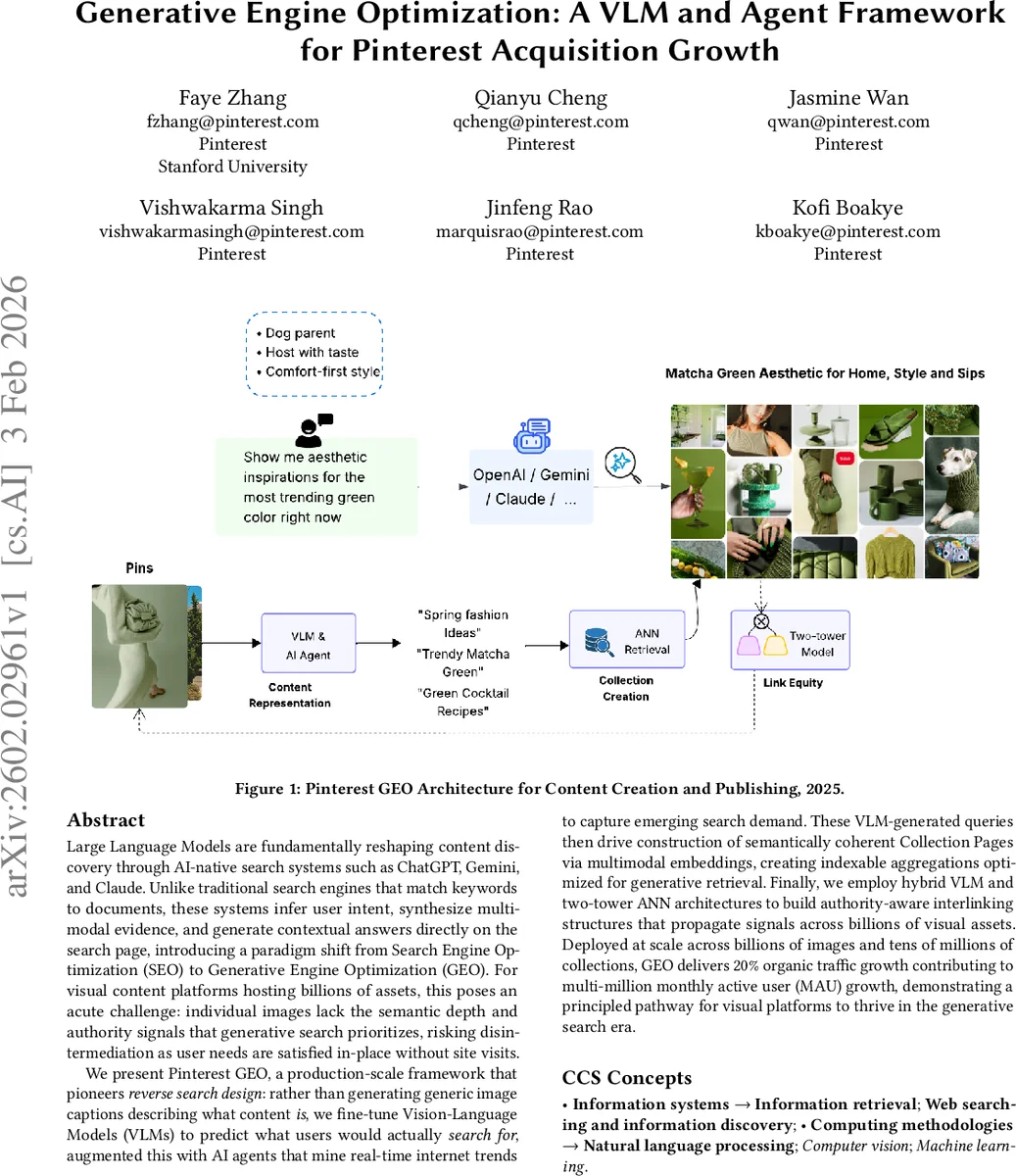

Large Language Models are fundamentally reshaping content discovery through AI-native search systems such as ChatGPT, Gemini, and Claude. Unlike traditional search engines that match keywords to documents, these systems infer user intent, synthesize multimodal evidence, and generate contextual answers directly on the search page, introducing a paradigm shift from Search Engine Optimization (SEO) to Generative Engine Optimization (GEO). For visual content platforms hosting billions of assets, this poses an acute challenge: individual images lack the semantic depth and authority signals that generative search prioritizes, risking disintermediation as user needs are satisfied in-place without site visits. We present Pinterest GEO, a production-scale framework that pioneers reverse search design: rather than generating generic image captions describing what content is, we fine-tune Vision-Language Models (VLMs) to predict what users would actually search for, augmented this with AI agents that mine real-time internet trends to capture emerging search demand. These VLM-generated queries then drive construction of semantically coherent Collection Pages via multimodal embeddings, creating indexable aggregations optimized for generative retrieval. Finally, we employ hybrid VLM and two-tower ANN architectures to build authority-aware interlinking structures that propagate signals across billions of visual assets. Deployed at scale across billions of images and tens of millions of collections, GEO delivers 20% organic traffic growth contributing to multi-million monthly active user (MAU) growth, demonstrating a principled pathway for visual platforms to thrive in the generative search era.

💡 Research Summary

The paper tackles a newly emerging challenge for visual‑centric platforms in the age of generative search engines such as ChatGPT, Gemini, and Claude. Traditional Search Engine Optimization (SEO) relies on keyword matching and link structures, but generative engines synthesize answers, infer user intent, and cite only the most authoritative, context‑rich sources. Because individual images lack the textual depth and authority signals required by these engines, platforms risk losing traffic to in‑place AI answers. The authors formalize this as the “visual Generative Engine Optimization (GEO)” problem and present a production‑scale solution deployed at Pinterest, called Pinterest GEO.

The core of the solution consists of three tightly coupled components: (1) a Vision‑Language Model (VLM) fine‑tuned to generate search‑oriented queries rather than descriptive captions, (2) an AI‑agent system that continuously mines external web trends to anticipate emerging user demand, and (3) a hybrid retrieval and linking architecture that builds topic‑coherent collection pages and propagates authority across billions of assets.

For the VLM, the authors start from Qwen2‑VL‑7B‑Instruct, a large multimodal transformer, and apply LoRA‑based low‑rank adaptation to keep training efficient. Training data are constructed from two sources: (a) real‑world query‑image pairs extracted from search console metrics (impressions, CTR, average position) filtered for high performance, and (b) synthetic pairs generated by GPT‑4V that cover three query categories—description (30 %), style/detail (30 %), and use‑case (40 %). This balanced mix forces the model to learn not only what the image depicts but also how users would phrase intent‑driven searches (e.g., “garden party outfit ideas”). At inference time, the model emits 5‑7 queries per image with low temperature decoding, followed by safety filtering and a secondary LLaMA‑7B classifier that checks brand safety, sentiment, and intent strength.

The AI‑agent framework runs continuously, pulling trend signals from social media, news feeds, and e‑commerce sites. It clusters emerging keywords, maps them to relevant visual assets, and prompts the VLM to generate fresh query‑image pairs for topics that have not yet appeared in behavioral logs, thereby addressing the cold‑start problem.

Generated queries are embedded using CLIP‑style multimodal encoders and indexed with an Approximate Nearest Neighbor (ANN) structure (HNSW). The system clusters semantically similar images into “collection pages” that act as authoritative landing surfaces. To distribute authority, a two‑tower ANN model encodes queries and images independently, while a hybrid VLM‑based link model learns internal hyperlinks between collections and individual pins. This internal link graph mimics PageRank‑like equity propagation, making the collections more likely to be cited by generative engines as evidence.

Deployed at billion‑scale, the system powers tens of millions of collection pages. Offline experiments show a 19 % improvement in query‑image alignment over baseline captioning models. Online A/B tests report a 20 % lift in organic traffic and a 1.8× increase in citation rate from AI‑generated answers. Inference cost is reduced by a factor of 94 compared with commercial VLM APIs, thanks to LoRA fine‑tuning and batch inference on A100 GPUs.

Ablation studies isolate the contribution of each component: VLM fine‑tuning alone yields a 12 % traffic gain, the AI‑agent trend module adds 9 %, and the two‑tower link architecture contributes an additional 5 %. The authors discuss limitations, including dependence on external trend data quality, the need for periodic re‑training to keep query distributions fresh, and the lack of long‑term SEO impact analysis.

In conclusion, the paper demonstrates that a tightly integrated pipeline of intent‑oriented VLM generation, proactive trend mining, and authority‑aware linking can effectively transform visual content into assets that thrive under generative search. The work provides a concrete, scalable blueprint for other image‑heavy platforms seeking to maintain relevance in the rapidly evolving AI‑driven discovery landscape.

Comments & Academic Discussion

Loading comments...

Leave a Comment