RANKVIDEO: Reasoning Reranking for Text-to-Video Retrieval

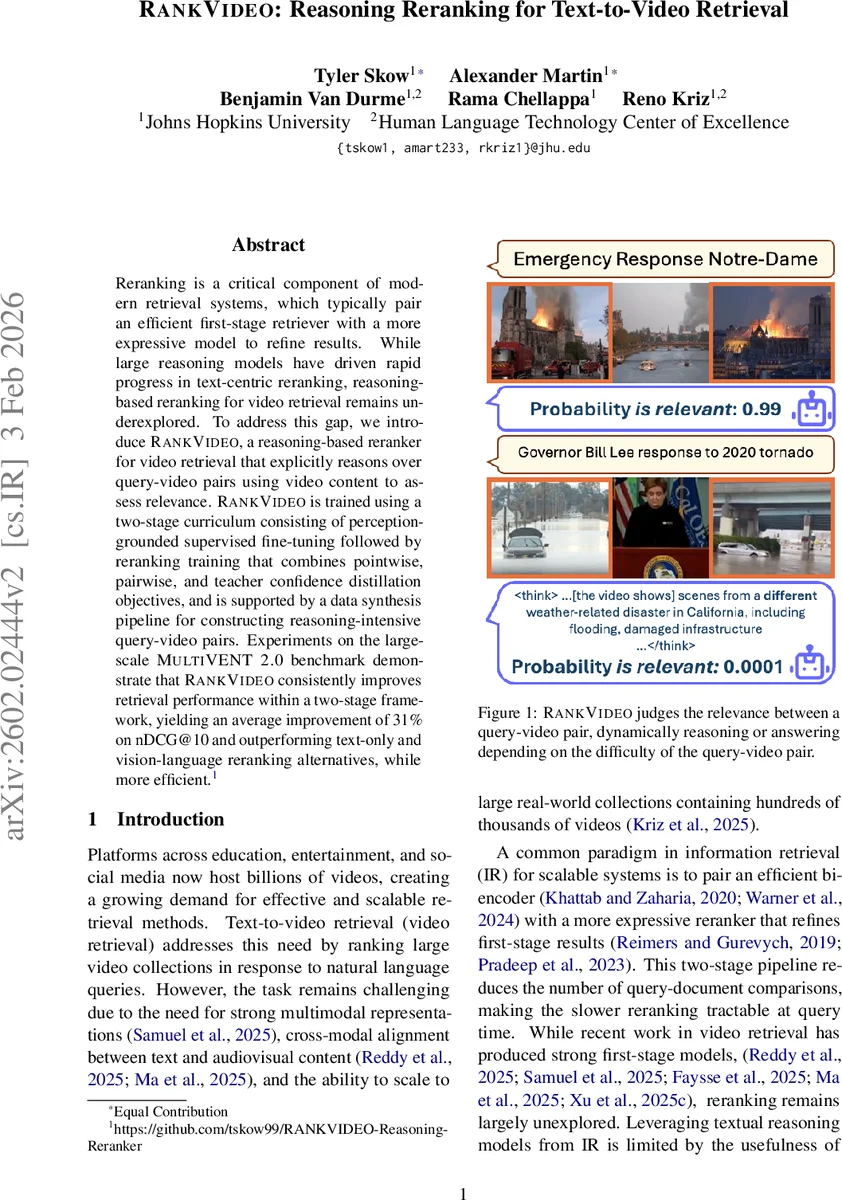

Reranking is a critical component of modern retrieval systems, which typically pair an efficient first-stage retriever with a more expressive model to refine results. While large reasoning models have driven rapid progress in text-centric reranking, reasoning-based reranking for video retrieval remains underexplored. To address this gap, we introduce RANKVIDEO, a reasoning-based reranker for video retrieval that explicitly reasons over query-video pairs using video content to assess relevance. RANKVIDEO is trained using a two-stage curriculum consisting of perception-grounded supervised fine-tuning followed by reranking training that combines pointwise, pairwise, and teacher confidence distillation objectives, and is supported by a data synthesis pipeline for constructing reasoning-intensive query-video pairs. Experiments on the large-scale MultiVENT 2.0 benchmark demonstrate that RANKVIDEO consistently improves retrieval performance within a two-stage framework, yielding an average improvement of 31% on nDCG@10 and outperforming text-only and vision-language reranking alternatives, while more efficient.

💡 Research Summary

RANKVIDEO introduces a reasoning‑based reranker specifically designed for text‑to‑video retrieval, addressing the gap where most existing reranking approaches rely solely on textual information or treat video as a set of extracted captions and transcripts. The system operates in a classic two‑stage retrieval pipeline: a fast, high‑recall first‑stage retriever (e.g., OMNI‑EMBED, MM‑MORRF, CLIP‑based, LANGUAGE‑BIND, or VIDEO‑COLBERT) produces a shortlist of up to 1,000 candidates per query, and RANKVIDEO then refines this list using a large multimodal reasoning model that directly consumes raw video frames, audio, on‑screen text, and metadata.

The core technical contribution is a two‑stage curriculum. Stage 1 (Perception‑Grounded Supervised Fine‑Tuning) trains the model to generate teacher‑provided captions for each video, forcing it to learn fine‑grained visual and auditory grounding before any ranking supervision is introduced. Stage 2 (Ranking Fine‑Tuning) adds a unified loss that blends three components: (i) a pointwise binary cross‑entropy loss that stabilizes calibration, (ii) a pairwise softmax loss that pushes the true positive to the top of each query‑specific batch, and (iii) a teacher‑distillation loss that transfers the calibrated confidence of a large reasoning teacher (Reason‑Rank‑32B) into the student’s logits. The relevance score is computed as the logit difference between “yes” and “no” tokens, eliminating the need for full text generation at inference time and enabling fast scoring.

To obtain training data that reflects real‑world reasoning demands, the authors synthesize a large set of query‑video pairs from the MultiVENT 2.0 corpus. They automatically generate captions (Qwen‑3‑Omni‑30B‑A3B‑INSTRUCT), transcribe audio (Whisper‑Large‑V2), extract OCR text, and collect metadata. Using a text‑only reasoning model (Qwen‑3‑32B), they create five query variants (caption‑only, audio‑only, OCR‑only, metadata‑only, and all‑modalities combined). After filtering based on first‑stage retrieval scores and teacher judgments, they retain 35,684 high‑quality pairs (9,267 positives, 26,258 negatives) with an average of 3.85 candidates per query. Hard negative mining further categorizes candidates into trusted negatives, suspected positives, and hard negatives based on the teacher’s confidence margin, ensuring that the model focuses on the most confusing distractors.

Experiments on the MultiVENT 2.0 test set demonstrate that RANKVIDEO consistently improves retrieval metrics across all four first‑stage retrievers. The average gain is 31 % in nDCG@10, with similar lifts in recall at 10, 20, 50, and 100. Compared to strong baselines—Reason‑Rank (text‑only reasoning reranker), Qwen‑3‑VL‑8B‑INSTRUCT (state‑of‑the‑art video understanding), and Qwen‑3‑VL‑8B‑THINKING (reasoning‑augmented video model)—RANKVIDEO achieves higher effectiveness while being 2–3× faster at inference because it avoids generating long rationales and only evaluates a single logit difference per candidate. Ablation studies confirm that each loss component and the hard‑negative strategy contribute meaningfully; removing teacher distillation or the pairwise term reduces performance by several points. Moreover, the model exhibits dynamic reasoning depth: for easy queries it terminates after a shallow pass, while for complex queries it allocates more computation, further improving efficiency without sacrificing accuracy.

Limitations include reliance on synthetic queries that may not capture the full diversity of user intent, and the fact that the teacher model itself is a large LLM, which adds computational overhead during training. Future work could incorporate real user logs for query generation, explore lighter teacher models, and extend the approach to streaming video or multimodal conversational retrieval.

In summary, RANKVIDEO successfully brings large‑scale multimodal reasoning into the reranking stage of video retrieval, delivering substantial gains in relevance while maintaining practical efficiency, and opens new avenues for research at the intersection of video understanding, reasoning, and information retrieval.

Comments & Academic Discussion

Loading comments...

Leave a Comment