IRIS: Implicit Reward-Guided Internal Sifting for Mitigating Multimodal Hallucination

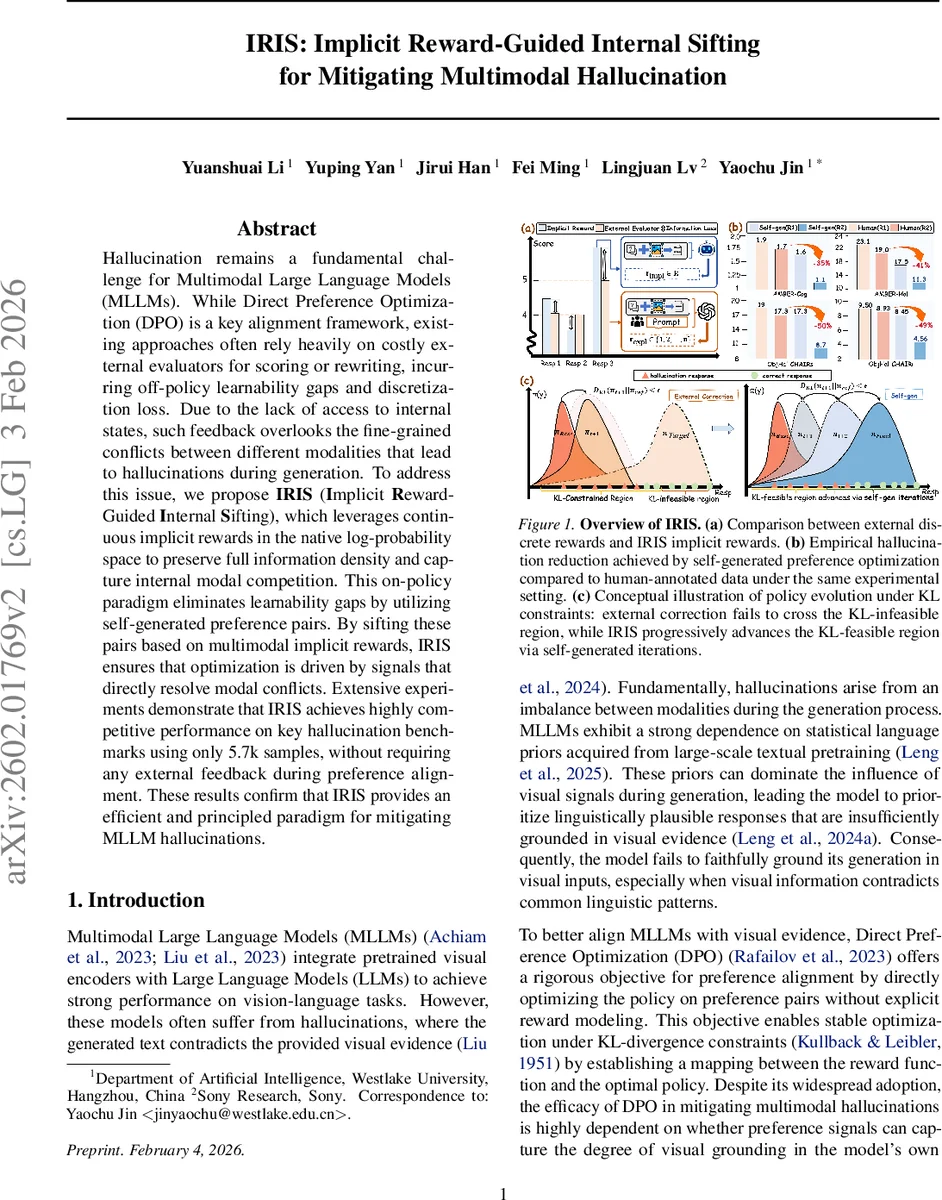

Hallucination remains a fundamental challenge for Multimodal Large Language Models (MLLMs). While Direct Preference Optimization (DPO) is a key alignment framework, existing approaches often rely heavily on costly external evaluators for scoring or rewriting, incurring off-policy learnability gaps and discretization loss. Due to the lack of access to internal states, such feedback overlooks the fine-grained conflicts between different modalities that lead to hallucinations during generation. To address this issue, we propose IRIS (Implicit Reward-Guided Internal Sifting), which leverages continuous implicit rewards in the native log-probability space to preserve full information density and capture internal modal competition. This on-policy paradigm eliminates learnability gaps by utilizing self-generated preference pairs. By sifting these pairs based on multimodal implicit rewards, IRIS ensures that optimization is driven by signals that directly resolve modal conflicts. Extensive experiments demonstrate that IRIS achieves highly competitive performance on key hallucination benchmarks using only 5.7k samples, without requiring any external feedback during preference alignment. These results confirm that IRIS provides an efficient and principled paradigm for mitigating MLLM hallucinations.

💡 Research Summary

The paper tackles the persistent problem of hallucination in Multimodal Large Language Models (MLLMs), where generated text contradicts the visual evidence. Existing mitigation approaches typically pair Direct Preference Optimization (DPO) with external evaluators such as GPT‑4V, which produce discrete scores or corrective rewrites. The authors identify two fundamental shortcomings of this paradigm: (1) discretization discards the fine‑grained information present in the model’s continuous probability distribution, and (2) externally generated preference data is off‑policy, which creates a “learnability gap” under DPO’s KL‑divergence constraint and often leads to vanishing gradients because the reference policy assigns near‑zero probability to off‑policy samples.

To overcome these limitations, the authors propose IRIS (Implicit Reward‑Guided Internal Sifting), an on‑policy framework that leverages the model’s own implicit reward signal derived from log‑probability ratios between the current policy πθ and a reference policy πref (typically the policy from the previous iteration). The implicit reward is defined as r(y)=β·log
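The formula above is cut off in this summary, so the following is only a minimal sketch of the standard DPO-style implicit reward, β·(log πθ(y|x) − log πref(y|x)), which matches the "log-probability ratio" description. Whether IRIS uses exactly this form, and how it conditions on the image, is an assumption here. The function names `implicit_reward` and `pick_pair`, the default `beta`, and the "highest versus lowest reward" selection rule are hypothetical illustrations, not the authors' sifting procedure.

```python
from typing import Sequence, Tuple


def implicit_reward(policy_token_logps: Sequence[float],
                    ref_token_logps: Sequence[float],
                    beta: float = 0.1) -> float:
    """Standard DPO-style implicit reward for one response y.

    Computes beta * (log pi_theta(y|x) - log pi_ref(y|x)), where each
    sequence log-probability is the sum of per-token log-probabilities.
    The default beta is an arbitrary illustrative value.
    """
    if len(policy_token_logps) != len(ref_token_logps):
        raise ValueError("token log-prob sequences must have equal length")
    return beta * (sum(policy_token_logps) - sum(ref_token_logps))


def pick_pair(rewards: Sequence[float]) -> Tuple[int, int]:
    """Hypothetical selection rule (an assumption, not the paper's method).

    Returns (chosen_index, rejected_index): the self-generated candidates
    with the highest and the lowest implicit reward, respectively.
    """
    if len(rewards) < 2:
        raise ValueError("need at least two candidate responses")
    indices = range(len(rewards))
    chosen = max(indices, key=lambda i: rewards[i])
    rejected = min(indices, key=lambda i: rewards[i])
    return chosen, rejected
```

In practice the per-token log-probabilities would come from scoring each sampled response under the current policy and under the reference policy; here they are plain Python floats so the sketch stays self-contained.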
