ObjEmbed: Towards Universal Multimodal Object Embeddings

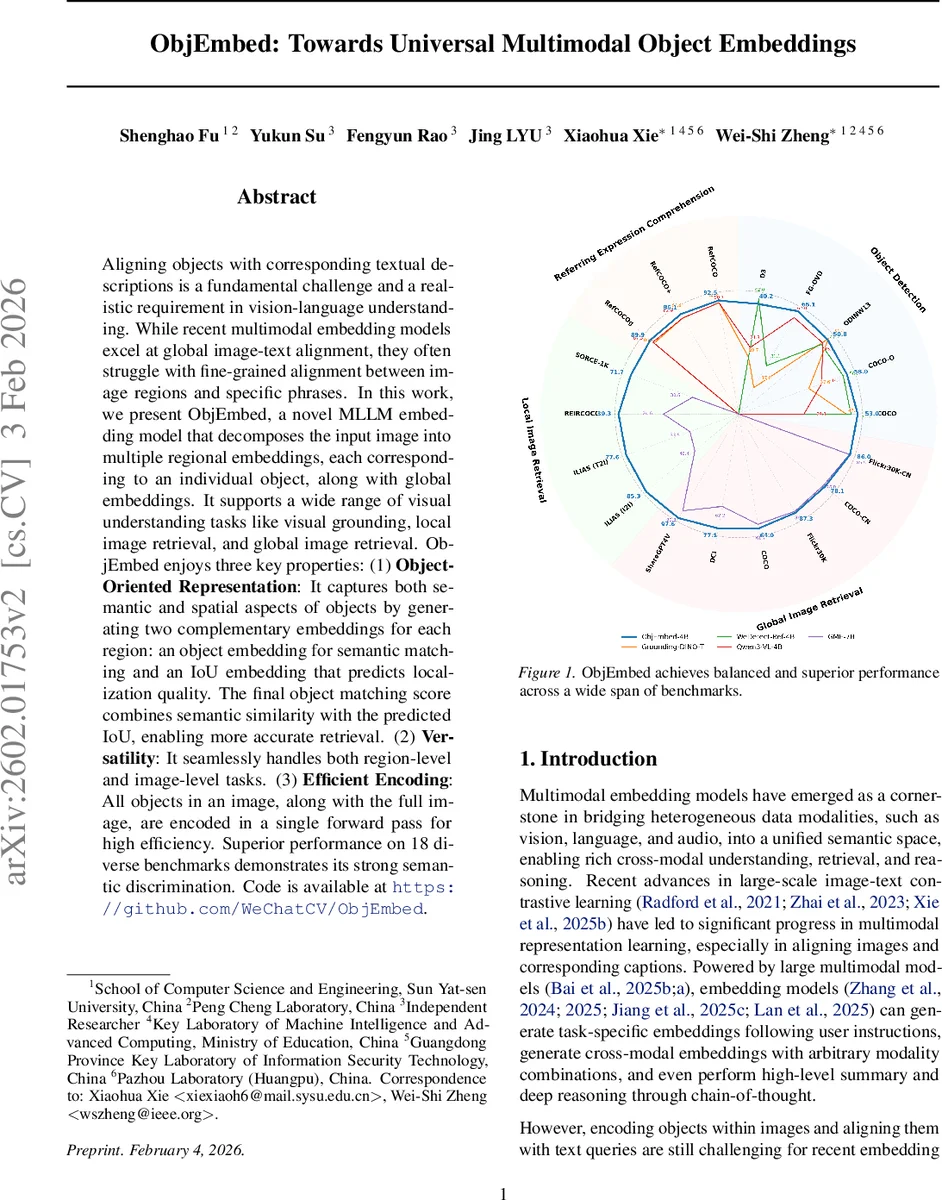

Aligning objects with corresponding textual descriptions is a fundamental challenge and a realistic requirement in vision-language understanding. While recent multimodal embedding models excel at global image-text alignment, they often struggle with fine-grained alignment between image regions and specific phrases. In this work, we present ObjEmbed, a novel MLLM embedding model that decomposes the input image into multiple regional embeddings, each corresponding to an individual object, along with global embeddings. It supports a wide range of visual understanding tasks like visual grounding, local image retrieval, and global image retrieval. ObjEmbed enjoys three key properties: (1) Object-Oriented Representation: It captures both semantic and spatial aspects of objects by generating two complementary embeddings for each region: an object embedding for semantic matching and an IoU embedding that predicts localization quality. The final object matching score combines semantic similarity with the predicted IoU, enabling more accurate retrieval. (2) Versatility: It seamlessly handles both region-level and image-level tasks. (3) Efficient Encoding: All objects in an image, along with the full image, are encoded in a single forward pass for high efficiency. Superior performance on 18 diverse benchmarks demonstrates its strong semantic discrimination.

💡 Research Summary

ObjEmbed introduces a novel multimodal large language model (MLLM)‑based framework that produces object‑level embeddings together with a global image embedding in a single forward pass. The system first generates a set of region proposals (100 in the paper) using an off‑the‑shelf detector (WeDetect‑Uni). Each proposal is processed by RoIAlign, compressed by an object projector, and inserted into a sequence of special tokens that are fed to a pretrained multimodal LLM (Qwen‑3‑VL‑Instruct). Five special tokens are defined: ⟨object⟩ (semantic object embedding), ⟨iou⟩ (a token that predicts the Intersection‑over‑Union quality of the bounding box), ⟨global⟩ (full‑image embedding), ⟨local text⟩ (text token for object‑level matching) and ⟨global text⟩ (text token for image‑level matching). Because all visual tokens and the text tokens share the same transformer backbone, the model can simultaneously output embeddings for every object, the IoU quality scores, and the global image representation without any autoregressive decoding, achieving high throughput (≈30 fps on a single RTX‑4090 with FlashAttention‑2).

Training combines three losses: (1) region‑level contrastive learning using sigmoid focal loss to align each object description with its corresponding proposals, handling many‑to‑one and missing‑annotation cases; (2) image‑level contrastive learning that aligns short and long captions with the global image token; and (3) IoU regression, where a linear head on the ⟨iou⟩ token predicts the IoU of the proposal with the ground‑truth box, also optimized with sigmoid focal loss. The total loss is a weighted sum of these three components.

During inference, the matching score between a text query and an object is computed as the product of the cosine similarity of the object embedding and the local‑text embedding, and the predicted IoU from the IoU token. This product naturally balances semantic relevance with spatial accuracy. For local image retrieval, the system takes the maximum matching score across all objects, enabling fine‑grained “part‑aware” search even when the target occupies a small region. For global image retrieval, the ⟨global⟩ embedding is used directly, preserving compatibility with standard image‑text retrieval benchmarks.

The authors assembled a training corpus of 1.3 M image‑text pairs (≈1.29 M individual annotations) by aggregating public detection and referring‑expression datasets (COCO, LVIS, V3Det, RefCOCO/+/g, FG‑OVD, etc.) and adding 300 k self‑collected images with captions. Experiments on 18 diverse benchmarks demonstrate that ObjEmbed outperforms prior global‑embedding models by up to 20 percentage points on local image retrieval, achieves 53.0 mAP on COCO detection (competitive with specialist detectors), and reaches 89.5 % accuracy on RefCOCO/+/g referring expression comprehension. Global image retrieval performance is on par with state‑of‑the‑art CLIP‑based models despite using a relatively modest training set.

Key strengths of ObjEmbed include: (i) object‑oriented representation that jointly encodes semantics and localization quality; (ii) versatility across region‑level tasks (detection, grounding, referring expression) and image‑level tasks (global retrieval); (iii) efficient single‑pass encoding that scales to hundreds of objects per image. Limitations are the reliance on the quality of the upstream proposal generator and the fact that the IoU token predicts only a quality score rather than directly refining box coordinates. Future work may explore end‑to‑end proposal‑free detection, multi‑head designs that output both IoU and refined boxes, and scaling the pre‑training to larger, possibly noisy, image‑text corpora to further improve generalization.

In summary, ObjEmbed establishes a unified, efficient, and high‑performing paradigm for multimodal object embeddings, bridging the gap between fine‑grained visual grounding and global image‑text alignment, and opening new possibilities for object‑centric applications such as autonomous driving, robotic manipulation, and content moderation.

Comments & Academic Discussion

Loading comments...

Leave a Comment