Morphis: SLO-Aware Resource Scheduling for Microservices with Time-Varying Call Graphs

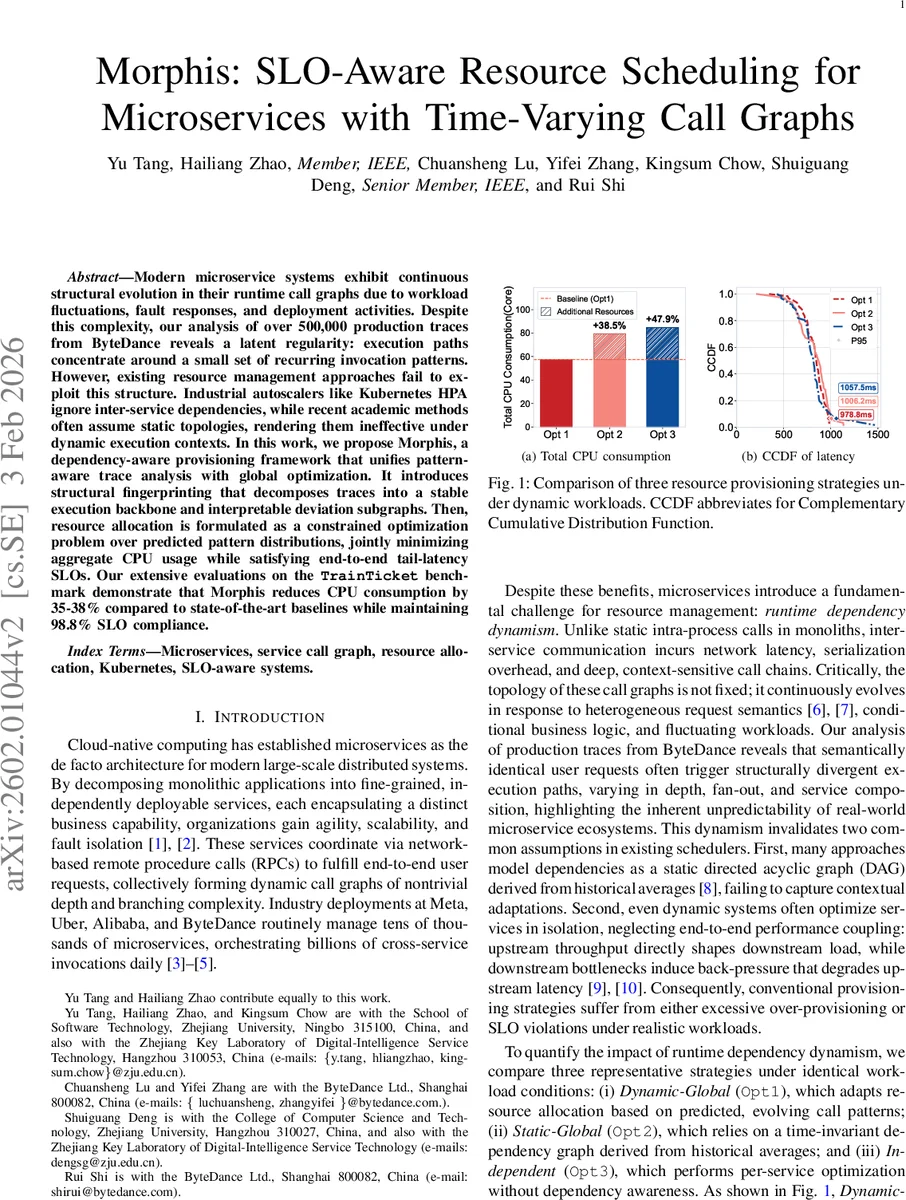

Modern microservice systems exhibit continuous structural evolution in their runtime call graphs due to workload fluctuations, fault responses, and deployment activities. Despite this complexity, our analysis of over 500,000 production traces from ByteDance reveals a latent regularity: execution paths concentrate around a small set of recurring invocation patterns. However, existing resource management approaches fail to exploit this structure. Industrial autoscalers like Kubernetes HPA ignore inter-service dependencies, while recent academic methods often assume static topologies, rendering them ineffective under dynamic execution contexts. In this work, we propose Morphis, a dependency-aware provisioning framework that unifies pattern-aware trace analysis with global optimization. It introduces structural fingerprinting that decomposes traces into a stable execution backbone and interpretable deviation subgraphs. Then, resource allocation is formulated as a constrained optimization problem over predicted pattern distributions, jointly minimizing aggregate CPU usage while satisfying end-to-end tail-latency SLOs. Our extensive evaluations on the TrainTicket benchmark demonstrate that Morphis reduces CPU consumption by 35-38% compared to state-of-the-art baselines while maintaining 98.8% SLO compliance.

💡 Research Summary

The paper tackles a fundamental challenge in modern microservice‑based cloud systems: the runtime call graph of services is not static but continuously evolves due to workload fluctuations, failures, scaling events, and deployment rollouts. While this dynamism makes resource management difficult, the authors’ large‑scale analysis of more than 500,000 production traces from ByteDance uncovers a striking regularity – the seemingly chaotic set of execution graphs actually concentrates around a small, finite collection of recurring invocation patterns (the “happy paths”) plus a handful of structured deviations (retries, fan‑outs, version switches, etc.). Existing resource controllers, such as Kubernetes Horizontal Pod Autoscaler (HPA), operate per‑service on local metrics and ignore these inter‑service dependencies; recent academic proposals either assume a fixed call graph or perform isolated service optimization, thus failing under realistic, time‑varying workloads.

Morphis is introduced as a closed‑loop framework that explicitly models this latent regularity and uses it for SLO‑aware provisioning. It consists of two tightly coupled components: (1) Structural Fingerprinting and (2) Pattern‑driven Global Resource Allocation.

Structural Fingerprinting: For a given request type, all collected traces are represented as directed acyclic graphs (DAGs). Each trace’s root‑to‑leaf paths are broken into overlapping k‑grams (k = 3 by default). The frequency of each k‑gram across the trace set is computed; those exceeding a configurable threshold θ are deemed “core segments.” A criticality score Θ combines latency contribution and span proportion, allowing the algorithm to select a set of core segments that are both frequent and performance‑critical. These core segments are merged into a stable “backbone” graph B that captures the primary business logic. All remaining segments are grouped into deviation subgraphs S using density‑based clustering (e.g., DBSCAN). The resulting signature Ψ = (B, S) satisfies three design goals: stability under minor, non‑semantic variations; discriminability when core execution semantics differ; and human‑readable interpretability for root‑cause analysis.
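The k‑gram step above can be illustrated with a minimal sketch. It only covers path decomposition, frequency thresholding, and merging core segments into backbone edges; the criticality score Θ and the clustering of deviations are omitted. The function names, the per‑trace counting rule, and the sample traces are assumptions made for illustration, not the authors' implementation.

```python
from collections import Counter

def path_kgrams(path, k=3):
    """Return the overlapping k-grams of one root-to-leaf path."""
    return [tuple(path[i:i + k]) for i in range(len(path) - k + 1)]

def core_segments(traces, k=3, theta=0.5):
    """Return k-grams whose per-trace frequency exceeds theta.

    Assumption: a k-gram is counted at most once per trace, and
    frequency is the fraction of traces that contain it.
    """
    counts = Counter()
    for paths in traces:
        seen = set()
        for path in paths:
            seen.update(path_kgrams(path, k))
        counts.update(seen)
    total = len(traces)
    return {gram for gram, c in counts.items() if c / total > theta}

def backbone_edges(segments):
    """Merge core segments into a set of caller -> callee edges."""
    return {(a, b) for seg in segments for a, b in zip(seg, seg[1:])}

# Hypothetical traces: each trace is a list of root-to-leaf paths.
traces = [
    [["gateway", "order", "payment", "bank"]],
    [["gateway", "order", "payment", "bank"]],
    [["gateway", "order", "payment", "bank"],
     ["gateway", "order", "retry", "payment"]],
    [["gateway", "order", "inventory"]],
]

core = core_segments(traces, k=3, theta=0.5)
backbone = backbone_edges(core)
print(sorted(backbone))
```

In this toy input the retry and inventory paths fall below the threshold, so they would be left for the deviation subgraphs rather than the backbone.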

Pattern‑driven Global Allocation: The backbone‑deviation signatures are fed into a short‑term forecasting module (Seasonal ARIMA, Prophet, etc.) that predicts the distribution of patterns over the next scheduling interval. For each predicted pattern p, the expected invocation count λₚ and average end‑to‑end latency Lₚ are derived. The resource provisioning problem is then formulated as a constrained integer linear program: minimize total CPU cost Σᵢ cᵢ·rᵢ (cᵢ is the CPU cost of one replica of service i, rᵢ its replica count) subject to (i) latency SLO constraints for every pattern (e.g., 99th‑percentile latency ≤ 1 s), (ii) integer replica variables, and (iii) overall cluster capacity limits. Because all services are sized in a single problem, the resulting replica allocation accounts for how load propagates between services. The solution is applied through a Kubernetes‑native controller that updates HPA/VPA settings in real time.
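To make the shape of this optimization concrete, the sketch below sizes three services jointly against two predicted patterns. It is a toy under stated assumptions: the latency model (base latency inflated by utilization) is a stand‑in for the per‑pattern tail latency, exhaustive search replaces an integer‑programming solver, and every name and number is hypothetical.

```python
from itertools import product

def service_latency_ms(base_ms, load, replicas, capacity_per_replica):
    """Toy queueing-style latency (assumption); infinite when saturated."""
    utilization = load / (replicas * capacity_per_replica)
    if utilization >= 1.0:
        return float("inf")
    return base_ms / (1.0 - utilization)

def allocate(services, patterns, max_replicas=6, cluster_limit=10):
    """Exhaustively search replica counts for the cheapest SLO-feasible plan."""
    names = sorted(services)
    # Load on a service is the summed rate of all patterns that call it.
    load = {n: sum(p["rate"] for p in patterns if n in p["path"]) for n in names}
    best_cost, best_plan = float("inf"), None
    for counts in product(range(1, max_replicas + 1), repeat=len(names)):
        if sum(counts) > cluster_limit:  # cluster capacity constraint
            continue
        plan = dict(zip(names, counts))
        lat = {
            n: service_latency_ms(services[n]["base_ms"], load[n],
                                  plan[n], services[n]["capacity"])
            for n in names
        }
        # Every pattern must meet its end-to-end latency SLO.
        if any(sum(lat[s] for s in p["path"]) > p["slo_ms"] for p in patterns):
            continue
        cost = sum(services[n]["cost"] * plan[n] for n in names)
        if cost < best_cost:
            best_cost, best_plan = cost, plan
    return best_plan, best_cost

# Hypothetical services: cost per replica, base latency, requests/s per replica.
services = {
    "order":   {"cost": 1.0, "base_ms": 10.0, "capacity": 100.0},
    "payment": {"cost": 2.0, "base_ms": 20.0, "capacity": 50.0},
    "retry":   {"cost": 1.0, "base_ms": 5.0,  "capacity": 100.0},
}
# Hypothetical predicted patterns: a backbone path and a retry deviation.
patterns = [
    {"rate": 80.0, "path": ["order", "payment"], "slo_ms": 70.0},
    {"rate": 20.0, "path": ["order", "retry", "payment"], "slo_ms": 150.0},
]

plan, cost = allocate(services, patterns)
print(plan, cost)
```

Because both patterns share `order` and `payment`, the search has to trade replicas between them. A per‑service autoscaler that sees only local metrics has no view of that coupling. Exhaustive search is only workable at this toy size; the summary's own limitations section notes that solving the integer program at very large scale may become expensive.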

Evaluation: Experiments use TrainTicket, an open‑source train‑ticket booking benchmark composed of roughly 40 microservices, deployed on a production‑grade cluster. Three baselines are compared: (i) Dynamic‑Global (pattern‑aware but without global optimization), (ii) Static‑Global (uses a time‑invariant call graph derived from historical averages), and (iii) Independent (per‑service HPA). Under diverse workloads, including diurnal traffic cycles, sudden spikes, and injected failures, Morphis achieves a 35‑38 % reduction in total CPU allocation relative to the best baseline, while delivering a 5‑10 % improvement in tail latency. SLO compliance reaches 98.8 % (at most 1.2 % of requests violate the SLO), higher than any of the baselines. The framework also adapts quickly to retry cascades and fan‑out expansions, avoiding the over‑provisioning observed in the static and independent approaches.

Contributions: (1) Formalization of runtime dependency dynamism and empirical evidence of its resource impact; (2) Introduction of structural fingerprinting that cleanly separates invariant backbone from context‑sensitive deviations; (3) Integration of pattern prediction with a global SLO‑aware optimizer; (4) Production‑grade implementation and extensive evaluation showing substantial cost savings and high SLO adherence.

Limitations and Future Work: The fingerprinting relies on hyper‑parameters (k‑gram size, frequency threshold θ) that may need tuning per application. The current optimization focuses solely on CPU; extending to multi‑resource (memory, network, storage) constraints is an open direction. Solving the integer program at very large scale may become computationally expensive, suggesting the need for fast approximation or online heuristic methods. The authors propose future research on automated parameter learning, multi‑resource extensions, and scalable solvers.

In summary, Morphis exploits the hidden regularity of dynamic microservice call graphs, treating them as a bounded set of recurring patterns plus structured deviations, to build a principled, SLO‑aware scheduler that sizes all services jointly. In doing so, it bridges the gap between per‑service autoscaling and the reality of inter‑service performance coupling, reducing CPU allocation by 35‑38 % while maintaining 98.8 % SLO compliance on the TrainTicket benchmark.
