DuoGen: Towards General Purpose Interleaved Multimodal Generation

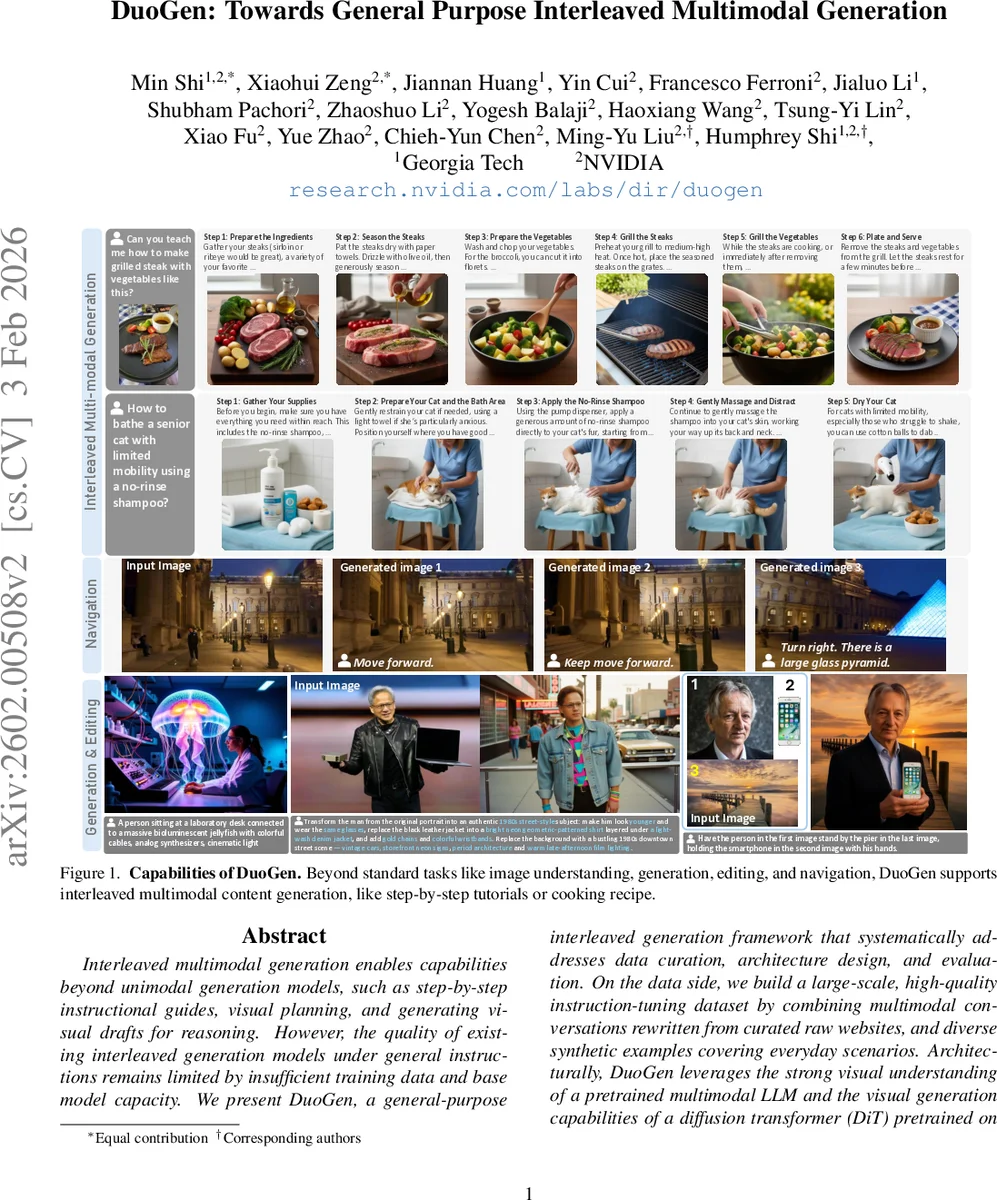

Interleaved multimodal generation enables capabilities beyond unimodal generation models, such as step-by-step instructional guides, visual planning, and generating visual drafts for reasoning. However, the quality of existing interleaved generation models under general instructions remains limited by insufficient training data and base model capacity. We present DuoGen, a general-purpose interleaved generation framework that systematically addresses data curation, architecture design, and evaluation. On the data side, we build a large-scale, high-quality instruction-tuning dataset by combining multimodal conversations rewritten from curated raw websites, and diverse synthetic examples covering everyday scenarios. Architecturally, DuoGen leverages the strong visual understanding of a pretrained multimodal LLM and the visual generation capabilities of a diffusion transformer (DiT) pretrained on video generation, avoiding costly unimodal pretraining and enabling flexible base model selection. A two-stage decoupled strategy first instruction-tunes the MLLM, then aligns DiT with it using curated interleaved image-text sequences. Across public and newly proposed benchmarks, DuoGen outperforms prior open-source models in text quality, image fidelity, and image-context alignment, and also achieves state-of-the-art performance on text-to-image and image editing among unified generation models. Data and code will be released at https://research.nvidia.com/labs/dir/duogen/.

💡 Research Summary

DuoGen addresses the emerging need for interleaved multimodal generation—systems that can seamlessly alternate between text and image outputs within a single interaction. Existing approaches either focus on narrow domains (e.g., visual chain‑of‑thought for math or navigation) or rely on early‑fusion training that demands massive unimodal pre‑training data and compute. DuoGen proposes a holistic solution that spans data curation, model architecture, and training strategy.

Data. The authors construct a 298 k instruction‑tuning dataset composed of two complementary sources. First, a “data engine” scrapes 347 k how‑to and storytelling webpages (StoryBird, Instructables, eHow, plus CoMM data), filters out text‑only or low‑quality pages, and rewrites the remaining content with large language models to remove HTML artifacts and improve coherence. Images are automatically captioned, categorized, and filtered; duplicate or irrelevant images are pruned. The cleaned image‑text sequences are then transformed into realistic user‑assistant dialogues by a multimodal LLM, yielding 268 k high‑quality web‑derived conversations. Second, to compensate for the variable resolution and aesthetic quality of web images, the authors generate 30 k synthetic interleaved samples. They define a hierarchical prompt pool covering eight everyday domains, expand 1 500 seed prompts into 15 270 diverse instructions using OpenAI O3, and synthesize corresponding images with state‑of‑the‑art generators (including 15 k food images from MM‑Food‑100k). The final dataset combines realistic web content with high‑fidelity synthetic visuals, providing both diversity and visual quality. In addition, a massive alignment corpus of 5 M image‑text sequences extracted from video frames is prepared for the second training stage.

Architecture. DuoGen adopts a modular design that leverages (1) a pretrained multimodal large language model (MLLM) for visual understanding and world knowledge, and (2) a Diffusion Transformer (DiT) pretrained on video generation for image synthesis. The MLLM predicts a special token <BOV> (Begin‑of‑Vision) to signal when an image should be generated. When <BOV> appears, the preceding hidden states provide semantic guidance, while all previously generated or user‑provided images are fed as conditioning frames to the DiT. Because DiT has learned temporal consistency from video data, it can produce sequences of images that maintain object and scene coherence across steps. This decoupled architecture allows independent upgrades of either component and avoids costly joint pre‑training from scratch.

Training Strategy. Training proceeds in two stages. Stage 1 fine‑tunes only the MLLM on the curated interleaved instruction data using next‑token prediction. This teaches the model to emit <BOV> at appropriate moments and to continue textual generation after visual outputs. Stage 2 freezes the MLLM and updates the DiT using the large alignment corpus (video‑derived frame transitions) together with open‑source image generation and editing samples. This stage aligns the DiT’s visual generation with the textual cues from the MLLM without degrading the language model’s capabilities.

Evaluation. DuoGen is evaluated on two public benchmarks (CoMM and InterleavedBench) and a newly introduced “Everyday Interleaved Benchmark” that covers a wide range of practical tasks such as cooking instructions, furniture assembly, and pet care. Metrics include text quality (ROUGE, BLEU, GPT‑4 based evaluation), image fidelity (FID, IS), and text‑image alignment (CLIPScore, Recall@K). Across all benchmarks DuoGen outperforms prior open‑source unified models (e.g., NanoBanana, Zebra‑CoT) by 12 %–25 % on average. Notably, on text‑to‑image and image‑editing tasks DuoGen achieves lower FID and higher editing accuracy than leading models like Bagel and OmniGen2, demonstrating the advantage of leveraging a video‑pretrained DiT.

Contributions. 1) A high‑quality 298 k instruction‑tuning dataset plus a massive alignment corpus for interleaved generation. 2) A novel modular architecture that combines strong pretrained MLLMs with a video‑pretrained diffusion transformer, together with a two‑stage decoupled training regimen. 3) A comprehensive benchmark suite for interleaved multimodal generation and extensive empirical comparisons showing state‑of‑the‑art performance.

In summary, DuoGen provides a scalable, data‑efficient pathway to general‑purpose interleaved multimodal generation, opening doors for applications such as step‑by‑step tutorials, visual planning, and iterative reasoning with visual drafts. Future work may explore larger DiT‑MLLM pairings, real‑time user feedback loops, and integration with interactive agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment