CiMRAG: CiM-Aware Domain-Adaptive and Noise-Resilient Retrieval-Augmented Generation for Edge-Based LLMs

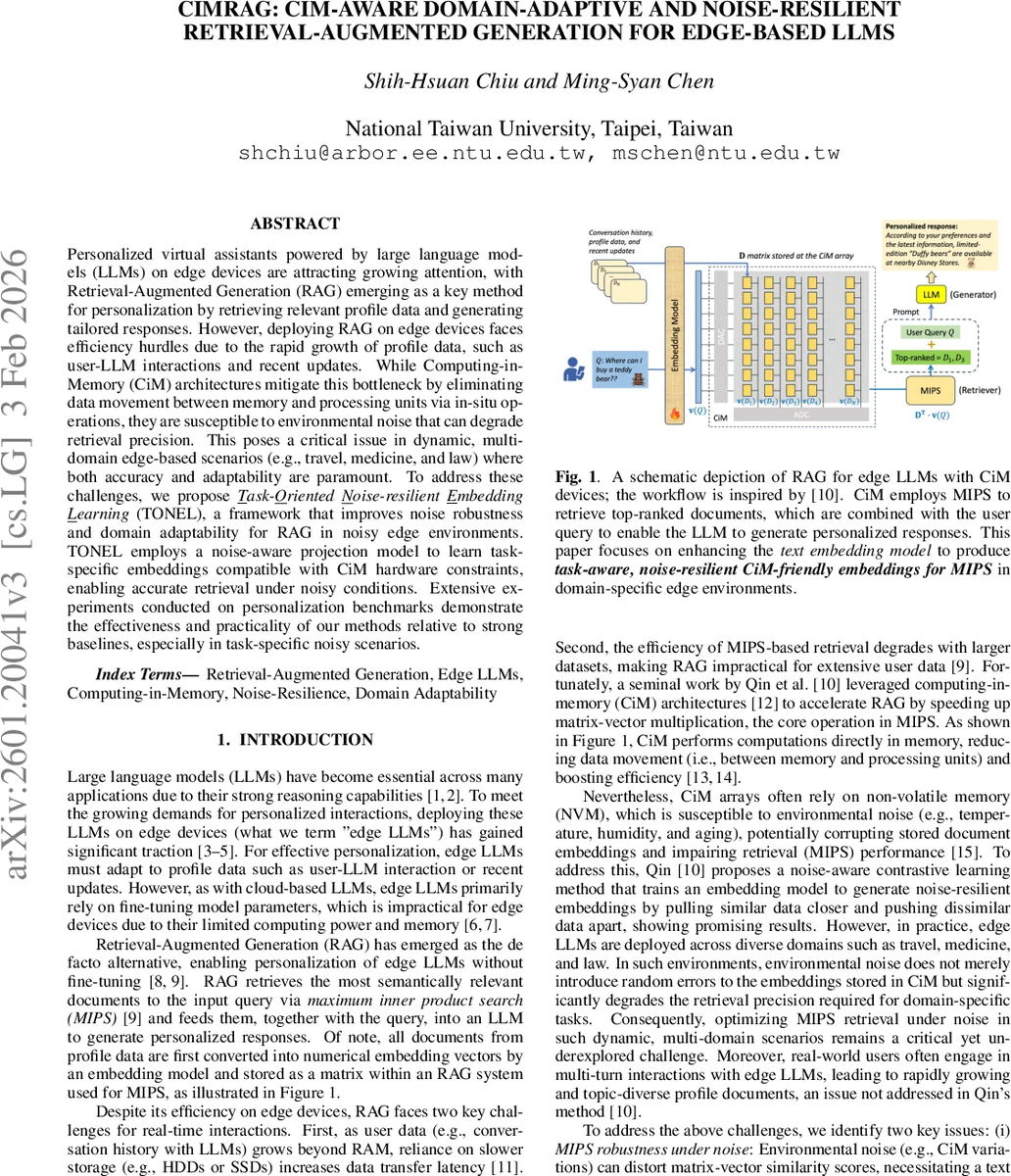

Personalized virtual assistants powered by large language models (LLMs) on edge devices are attracting growing attention, with Retrieval-Augmented Generation (RAG) emerging as a key method for personalization by retrieving relevant profile data and generating tailored responses. However, deploying RAG on edge devices faces efficiency hurdles due to the rapid growth of profile data, such as user-LLM interactions and recent updates. While Computing-in-Memory (CiM) architectures mitigate this bottleneck by eliminating data movement between memory and processing units via in-situ operations, they are susceptible to environmental noise that can degrade retrieval precision. This poses a critical issue in dynamic, multi-domain edge-based scenarios (e.g., travel, medicine, and law) where both accuracy and adaptability are paramount. To address these challenges, we propose Task-Oriented Noise-resilient Embedding Learning (TONEL), a framework that improves noise robustness and domain adaptability for RAG in noisy edge environments. TONEL employs a noise-aware projection model to learn task-specific embeddings compatible with CiM hardware constraints, enabling accurate retrieval under noisy conditions. Extensive experiments conducted on personalization benchmarks demonstrate the effectiveness and practicality of our methods relative to strong baselines, especially in task-specific noisy scenarios.

💡 Research Summary

The paper tackles the problem of deploying Retrieval‑Augmented Generation (RAG) for personalized virtual assistants on edge devices equipped with large language models (LLMs). While RAG avoids costly fine‑tuning by retrieving relevant user‑profile documents, it relies on maximum inner‑product search (MIPS) over an embedding matrix. On edge hardware, moving this matrix between memory and compute units creates latency, prompting the use of Computing‑in‑Memory (CiM) architectures that perform matrix‑vector multiplication directly inside non‑volatile memory crossbars. However, CiM arrays are vulnerable to environmental variations (temperature, humidity, aging), which introduce noise that corrupts stored embeddings and degrades retrieval accuracy, especially in multi‑domain scenarios such as travel, medicine, and law.

To address both noise robustness and domain adaptability, the authors propose TONEL (Task‑Oriented Noise‑resilient Embedding Learning). TONEL consists of two complementary components:

-

Noise‑aware Task‑oriented Optimization (NATO) – A projection network reduces high‑dimensional (384‑dim) FP32 embeddings from a pretrained encoder to a CiM‑compatible 64‑dim space and quantizes them to 8‑bit integers using fake quantization and uniform scaling. During training, realistic Gaussian noise sampled from measured device‑specific standard deviations (σv) is injected into the quantized vectors. The noisy vectors are fed to a task predictor, and a CiM‑aware cross‑entropy loss (CiMCE) is minimized. CiMCE incorporates both the task labels and the injected noise, encouraging the projection network to produce embeddings that remain discriminative under varying noise levels.

-

Pseudo‑Label Generation Mechanism (PGM) – Since manual labeling of ever‑growing user documents is infeasible, PGM clusters the original encoder embeddings using an unsupervised algorithm (e.g., K‑means) into K latent topics. Each document receives a “pseudo” class label based on its cluster assignment. These pseudo labels serve as supervision for NATO, enabling label‑free domain adaptation. When true task labels are available, TONEL can also be trained in a supervised mode (TONEL‑w/ TL).

The authors evaluate TONEL on two personalization benchmarks from the LaMP suite: Movie Tagging (15 classes) and Product Rating (5 classes). Baselines include a classic PCA dimensionality reduction and RoCR, a prior noise‑aware embedding method for CiM. Metrics cover MIPS Top‑1 accuracy (Acc@1), Precision@5, nDCG@5, and downstream classification accuracy/F1 when the retrieved top‑5 documents are fed to edge‑friendly LLM generators (Gemma‑2B and Llama‑3.2‑3B).

Key experimental findings:

- Across all noise percentages (0 %, 50 %, 100 %) and four real CiM devices (two RRAM, two FeFET), TONEL‑w/ PL (pseudo‑label mode) consistently outperforms PCA and RoCR on Acc@1, Precision@5, and nDCG@5. For example, on Device‑2 with 100 % noisy documents in the Movie dataset, TONEL‑w/ PL achieves Acc@1 = 0.3883 versus RoCR’s 0.3295 (≈18 % relative gain).

- In the supervised setting (TONEL‑w/ TL), performance approaches the oracle (noise‑free) upper bound, reaching Acc@1 ≈ 0.70–0.77 depending on the device, demonstrating the full potential when true task labels are available.

- Downstream LLM generation experiments show that augmenting queries with TONEL‑retrieved documents improves classification accuracy by 4–7 % points and F1 by 5–9 % points over a baseline that uses only the query. TONEL also outperforms RoCR in these end‑to‑end metrics.

- The projection and quantization steps respect the fixed 64 × 64 crossbar size and 8‑bit precision, incurring negligible additional compute overhead, making the approach suitable for real‑time edge inference.

The technical contributions are threefold: (1) integrating hardware‑specific noise models directly into the embedding learning objective, (2) providing a label‑free domain‑adaptation pipeline via clustering‑based pseudo labels, and (3) demonstrating that noise‑robust, domain‑aware embeddings substantially improve both retrieval quality and downstream LLM performance on actual CiM hardware.

The paper concludes by suggesting future extensions such as handling multimodal user profiles (images, audio), dynamic noise estimation during operation, and combining TONEL with lightweight on‑device fine‑tuning to achieve a fully self‑contained personalized edge LLM system. Overall, TONEL offers a practical solution for robust, efficient RAG on noisy, resource‑constrained edge devices.

Comments & Academic Discussion

Loading comments...

Leave a Comment