Do Models Hear Like Us? Probing the Representational Alignment of Audio LLMs and Naturalistic EEG

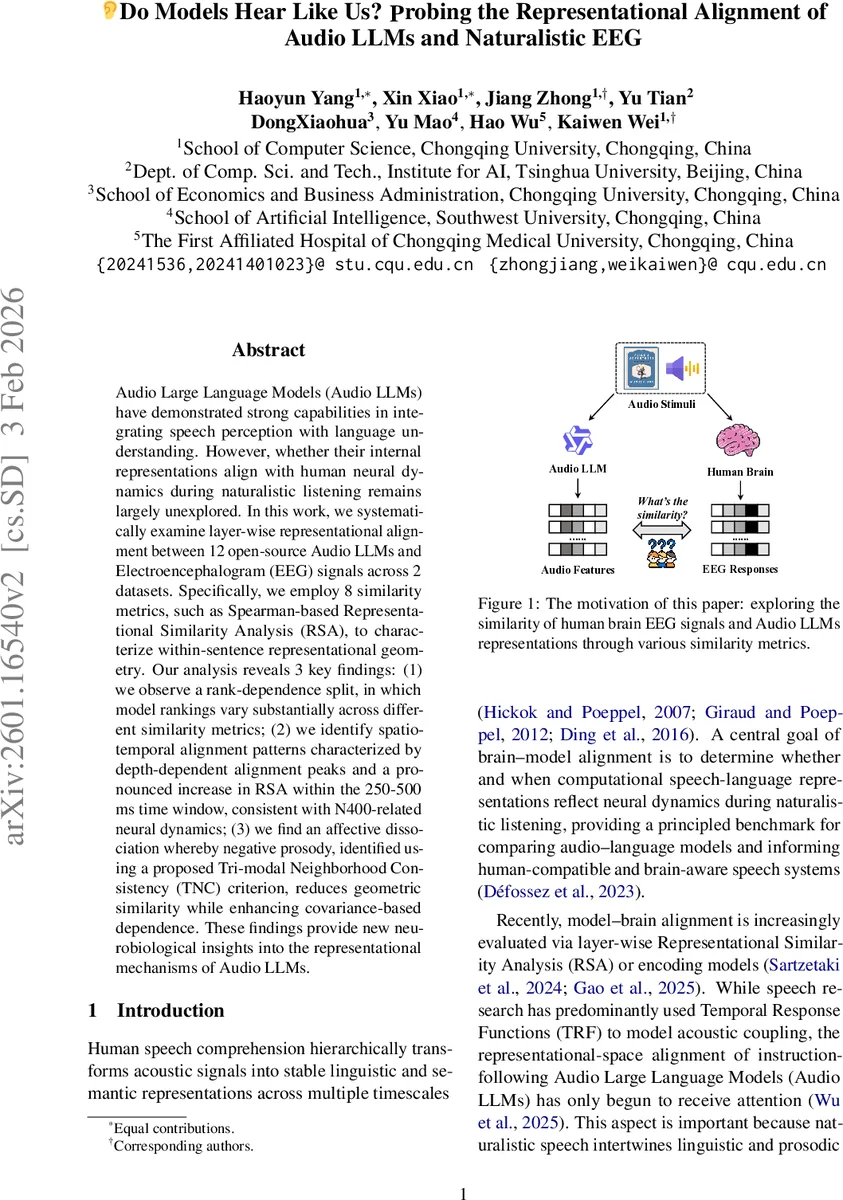

Audio Large Language Models (Audio LLMs) have demonstrated strong capabilities in integrating speech perception with language understanding. However, whether their internal representations align with human neural dynamics during naturalistic listening remains largely unexplored. In this work, we systematically examine layer-wise representational alignment between 12 open-source Audio LLMs and Electroencephalogram (EEG) signals across 2 datasets. Specifically, we employ 8 similarity metrics, such as Spearman-based Representational Similarity Analysis (RSA), to characterize within-sentence representational geometry. Our analysis reveals 3 key findings: (1) we observe a rank-dependence split, in which model rankings vary substantially across different similarity metrics; (2) we identify spatio-temporal alignment patterns characterized by depth-dependent alignment peaks and a pronounced increase in RSA within the 250-500 ms time window, consistent with N400-related neural dynamics; (3) we find an affective dissociation whereby negative prosody, identified using a proposed Tri-modal Neighborhood Consistency (TNC) criterion, reduces geometric similarity while enhancing covariance-based dependence. These findings provide new neurobiological insights into the representational mechanisms of Audio LLMs.

💡 Research Summary

This paper investigates whether the internal representations of modern audio large language models (Audio LLMs) align with human neural dynamics during naturalistic speech listening. The authors systematically compare twelve open‑source Audio LLMs with electroencephalogram (EEG) recordings from two separate datasets, focusing on layer‑wise similarity across time and affective prosody.

First, each sentence‑level audio stimulus is fed into every Audio LLM, and hidden states from all transformer layers are extracted. Because EEG is sampled at a much higher rate than the model's token sequence, the authors linearly interpolate each electrode's time series onto the token grid, yielding a token‑synchronous EEG matrix. For each layer‑sentence pair they construct representational dissimilarity matrices (RDMs) for both modalities and compute eight complementary similarity metrics: Pearson RSA, Spearman RSA, and Kendall's τb (three RDM‑correlation measures, the latter two rank‑based), distance correlation (dCor), the RV coefficient, Gaussian mutual information (MI), and centered kernel alignment with linear (CKA‑L) and RBF (CKA‑RBF) kernels. Together these metrics cover linear and nonlinear dependence, rank‑order geometry, and covariance‑style relationships, providing a multifaceted view of cross‑modal alignment.
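The pipeline described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names, the use of correlation distance for the RDMs, and the evenly spaced token grid are all assumptions made for the example, and only two of the eight metrics (Spearman RSA and linear CKA) are shown.

```python
import numpy as np
from scipy.spatial.distance import pdist
from scipy.stats import spearmanr


def resample_to_tokens(eeg, n_tokens):
    """Linearly interpolate EEG (samples x channels) onto n_tokens points.

    Assumption: tokens are evenly spaced over the sentence duration.
    """
    src = np.linspace(0.0, 1.0, eeg.shape[0])
    dst = np.linspace(0.0, 1.0, n_tokens)
    return np.column_stack(
        [np.interp(dst, src, eeg[:, c]) for c in range(eeg.shape[1])]
    )


def rdm(x):
    """Condensed RDM over rows (tokens); correlation distance is an assumption."""
    return pdist(x, metric="correlation")


def spearman_rsa(a, b):
    """Spearman correlation between the two condensed RDMs."""
    rho, _ = spearmanr(rdm(a), rdm(b))
    return float(rho)


def linear_cka(a, b):
    """Linear centered kernel alignment between two token x feature matrices."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    cross = np.linalg.norm(a.T @ b, "fro") ** 2
    norm = np.linalg.norm(a.T @ a, "fro") * np.linalg.norm(b.T @ b, "fro")
    return float(cross / norm)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    eeg = rng.standard_normal((500, 32))      # hypothetical: 500 samples, 32 electrodes
    hidden = rng.standard_normal((20, 768))   # hypothetical: 20 tokens, one layer
    eeg_tok = resample_to_tokens(eeg, n_tokens=hidden.shape[0])
    print(spearman_rsa(hidden, eeg_tok), linear_cka(hidden, eeg_tok))
```

Both scores are computed within a single sentence over its token positions, which matches the "within‑sentence representational geometry" framing of the abstract; repeating the call for each layer gives a layer‑wise profile.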

Statistical significance is assessed via a non‑parametric time‑shuffle permutation test that disrupts temporal correspondence, with false‑discovery‑rate correction for multiple comparisons.
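A hedged sketch of this kind of test follows. The summary does not specify the shuffling scheme, the number of permutations, or the FDR procedure, so the row permutation of the token‑aligned EEG, the default of 1000 permutations, and the Benjamini‑Hochberg step are assumptions; `score_fn` stands for any similarity function that takes two token‑aligned matrices.

```python
import numpy as np


def permutation_pvalue(score_fn, model, eeg, n_perm=1000, seed=0):
    """One-sided p-value from shuffling EEG time points (rows).

    Shuffling rows breaks the token-to-EEG correspondence while keeping
    the marginal distribution of the EEG features.
    """
    rng = np.random.default_rng(seed)
    observed = score_fn(model, eeg)
    null = np.array(
        [score_fn(model, eeg[rng.permutation(len(eeg))]) for _ in range(n_perm)]
    )
    # The +1 terms count the observed score as part of the null distribution.
    p = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, float(p)


def benjamini_hochberg(pvals, alpha=0.05):
    """Boolean mask of hypotheses rejected at FDR level alpha."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    passed = p[order] <= alpha * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if passed.any():
        k = np.max(np.nonzero(passed)[0])  # largest rank meeting its threshold
        reject[order[: k + 1]] = True
    return reject
```

In a layer‑wise analysis, one p‑value would be computed per layer (and per metric), and the full set passed to `benjamini_hochberg` in a single call.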

The results reveal three major findings.

(1) Rank‑dependence split. Model rankings differ dramatically depending on which similarity metric is used. Geometry‑focused measures (RSA, Kendall's τb) tend to peak in mid‑depth layers, whereas dependence‑focused measures (dCor, RV, CKA‑RBF) often favor deeper layers. This split underscores that a single metric cannot fully characterize model‑brain correspondence.

(2) Spatio‑temporal alignment patterns. When the similarity scores are examined in sliding 10 ms windows, a pronounced peak emerges in the 250–500 ms interval, the canonical N400 window associated with semantic integration. The RSA peak occurs around layers 6–9, suggesting that these intermediate transformer layers encode representations that best match the brain's semantic processing stage. Non‑linear CKA scores, however, peak at deeper layers (layers 10–12), indicating that higher layers capture more abstract linguistic information that aligns with later neural dynamics.

(3) Affective dissociation. The authors partition sentences by affective prosody using eGeMAPS acoustic descriptors (as a valence proxy) and by broader prosodic clusters (duration, energy, pitch, etc.). They introduce a Tri‑modal Neighborhood Consistency (TNC) metric, which averages Spearman RSA correlations over the acoustics‑EEG, EEG‑LLM, and acoustics‑LLM pairs, thereby requiring consistent geometry across all three modalities. Sentences with negative prosody show lower rank‑based RSA similarity but higher covariance‑based scores (dCor, RV, CKA). This suggests that negative affect reshapes the temporal neighborhood structure rather than merely scaling the strength of coupling.
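As described in this summary, TNC is the mean of three pairwise Spearman RSA scores. The sketch below follows that description only; the paper's exact definition may differ, and the function names, the correlation‑distance RDMs, and the assumption that all three matrices share one token‑aligned row axis are illustrative.

```python
import numpy as np
from scipy.spatial.distance import pdist
from scipy.stats import spearmanr


def pairwise_rsa(a, b):
    """Spearman correlation between condensed RDMs of two row-aligned matrices."""
    rho, _ = spearmanr(pdist(a, "correlation"), pdist(b, "correlation"))
    return float(rho)


def tnc(acoustic, eeg, llm):
    """Mean Spearman RSA over the acoustics-EEG, EEG-LLM, and acoustics-LLM pairs.

    All inputs are (time points x features) with the same number of rows.
    """
    pairs = [(acoustic, eeg), (eeg, llm), (acoustic, llm)]
    return float(np.mean([pairwise_rsa(a, b) for a, b in pairs]))
```

Because the score is an average, it is high only when all three pairwise geometries agree; a single strong pair cannot compensate fully for two weak ones.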

Overall, the paper makes three contributions: (i) a comprehensive, multi‑metric, layer‑wise EEG‑Audio LLM similarity benchmark; (ii) a detailed characterization of depth‑ and time‑dependent alignment, linking specific transformer layers to the N400 semantic window; and (iii) the TNC framework for tri‑modal consistency, revealing robust affect‑driven modulation of model‑brain alignment. These findings provide new neuro‑biological insights into how Audio LLMs process speech and open avenues for developing brain‑compatible speech‑language systems.
