AI Twin: Enhancing ESL Speaking Practice through AI Self-Clones of a Better Me

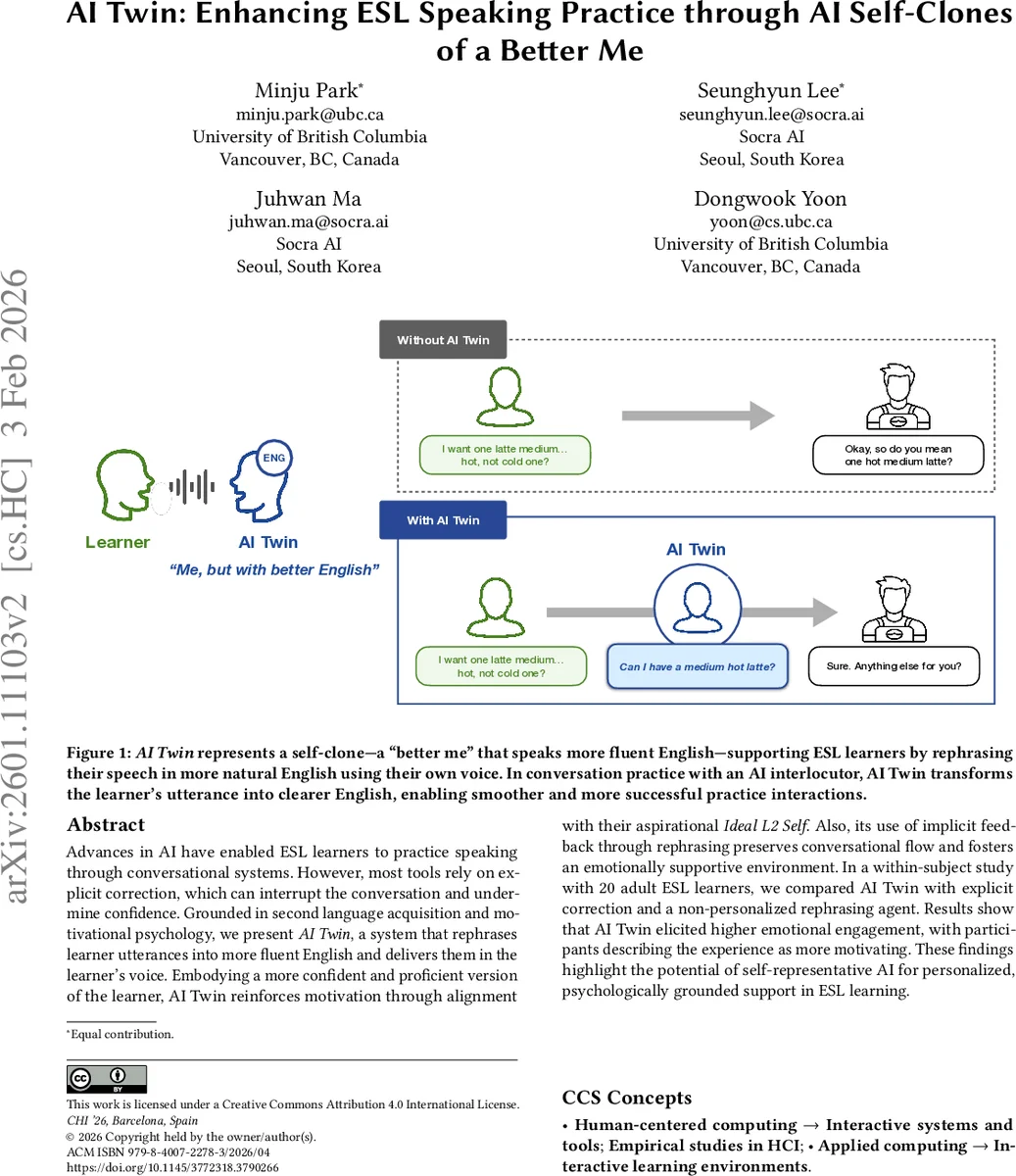

Advances in AI have enabled ESL learners to practice speaking through conversational systems. However, most tools rely on explicit correction, which can interrupt the conversation and undermine confidence. Grounded in second language acquisition and motivational psychology, we present AI Twin, a system that rephrases learner utterances into more fluent English and delivers them in the learner’s voice. Embodying a more confident and proficient version of the learner, AI Twin reinforces motivation through alignment with their aspirational Ideal L2 Self. Its use of implicit feedback through rephrasing also preserves conversational flow and fosters an emotionally supportive environment. In a within-subject study with 20 adult ESL learners, we compared AI Twin with explicit correction and a non-personalized rephrasing agent. Results show that AI Twin elicited higher emotional engagement, with participants describing the experience as more motivating. These findings highlight the potential of self-representative AI for personalized, psychologically grounded support in ESL learning.

💡 Research Summary

The paper introduces AI Twin, an AI‑driven “self‑clone” system that rephrases ESL learners’ spoken utterances into more fluent English and renders the corrected output in the learner’s own voice. Grounded in second‑language acquisition research and motivational psychology, the design combines two well‑established concepts: (1) recasts—implicit corrective feedback that preserves conversational flow—and (2) the Ideal L2 Self—a learner’s aspirational self‑image that drives sustained motivation. By delivering recasts through a personalized voice clone, AI Twin aims to make implicit feedback salient, emotionally supportive, and motivationally aligned with the learner’s self‑concept.

Technical architecture: the learner’s speech is first transcribed by automatic speech recognition (ASR), and the transcript is sent to a large language model (LLM) that rephrases it into more fluent English. The revised text is then fed to a text‑to‑speech (TTS) model that has been fine‑tuned to reproduce the learner’s vocal characteristics (a “voice clone”). The synthesized utterance is sent to the conversational AI interlocutor, allowing the learner to continue the dialogue while hearing a more proficient version of themselves.
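The three processing stages described above can be sketched as a small pipeline. This is only an illustrative outline, not the authors' implementation: the function `run_twin_turn`, the `TwinTurn` record, and the three stand-in stages are hypothetical names, and a real system would plug an actual ASR model, LLM, and voice-cloning TTS model into the same slots.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TwinTurn:
    transcript: str  # what the ASR stage heard
    rephrased: str   # more fluent version produced by the LLM stage
    audio: bytes     # rephrased text rendered in the learner's cloned voice


def run_twin_turn(
    learner_audio: bytes,
    transcribe: Callable[[bytes], str],
    rephrase: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> TwinTurn:
    """Run one learner utterance through ASR -> LLM rephrasing -> cloned-voice TTS."""
    transcript = transcribe(learner_audio)
    rephrased = rephrase(transcript)
    audio = synthesize(rephrased)
    return TwinTurn(transcript, rephrased, audio)


# Stand-in stages (assumptions for illustration only, no real models involved).
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_rephrase(text: str) -> str:
    return text.replace("I go to store yesterday", "I went to the store yesterday")


def fake_synthesize(text: str) -> bytes:
    return b"VOICE:" + text.encode("utf-8")


turn = run_twin_turn(
    b"I go to store yesterday", fake_transcribe, fake_rephrase, fake_synthesize
)
```

Passing the stages in as callables keeps the sketch independent of any particular speech or language model; the resulting `turn.audio` is what would be forwarded to the conversational interlocutor.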

The authors evaluated AI Twin in a within‑subject study with 20 adult ESL learners. Each participant experienced three conditions in counterbalanced order: (a) Explicit Feedback (the system directly corrects errors), (b) AI Proxy (rephrased output spoken by a generic voice), and (c) AI Twin (rephrased output spoken by the learner’s cloned voice). Engagement was measured across three dimensions—emotional (enjoyment, anxiety), cognitive (noticing, strategy use), and behavioral (persistence, participation)—using validated questionnaires, plus pre‑ and post‑session anxiety and confidence scales. Semi‑structured interviews provided qualitative insight.
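The summary does not say which counterbalancing scheme was used. As one possible illustration, the sketch below cycles participants through all six orderings of the three conditions; the function name `assign_orders` is hypothetical. Because 20 is not a multiple of 6, this scheme cannot balance the orderings exactly, which the counts at the end make visible.

```python
from collections import Counter
from itertools import permutations

CONDITIONS = ("Explicit Feedback", "AI Proxy", "AI Twin")


def assign_orders(n_participants, conditions=CONDITIONS):
    """Assign each participant one ordering, cycling through all permutations."""
    orders = list(permutations(conditions))  # 3! = 6 possible orderings
    return [orders[i % len(orders)] for i in range(n_participants)]


schedule = assign_orders(20)

# How often each condition is experienced first across the 20 participants.
first_counts = Counter(order[0] for order in schedule)
```

With 20 participants, two of the six orderings are used four times and the other four three times, so a study of this size would need either a different scheme or an analysis that accounts for order effects.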

Quantitative results showed that AI Twin yielded the highest emotional engagement scores (mean ≈ 4.6/5), significantly outperforming Explicit Feedback (≈ 3.2) and also surpassing the non‑personalized proxy. Cognitive and behavioral engagement followed the same pattern, indicating that learners not only felt better but also processed feedback more deeply and persisted longer. Qualitative data reinforced these findings: participants reported that hearing their own voice delivering “better” English made them feel more confident, reduced the fear of being corrected, and created a vivid sense of interacting with their Ideal L2 Self. In contrast, explicit correction was described as “interruptive” and “demotivating.”

Technical analysis acknowledges current limitations: voice‑cloning TTS still introduces subtle artifacts, and some learners perceived the synthesized voice as slightly artificial. Moreover, the LLM‑based rephrasing can occasionally alter meaning; the authors mitigated this with post‑processing rules that preserve semantic intent and control lexical difficulty.
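The summary does not specify what the post‑processing rules look like. Purely as an assumption, a guard of this kind might accept a rephrasing only when it keeps enough of the learner's content words and introduces only vocabulary from an allowed list, and otherwise fall back to the original utterance. The names `guard_rephrase`, `content_words`, `STOPWORDS`, and the 0.5 threshold are all hypothetical.

```python
import re

# Tiny illustrative stopword list; a real system would use a fuller one.
STOPWORDS = frozenset(
    {"a", "an", "the", "i", "to", "of", "and", "is", "was", "in", "on", "at"}
)


def content_words(text):
    """Lowercased words in the text, minus stopwords."""
    return {w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS}


def guard_rephrase(original, candidate, allowed_vocab, min_retained=0.5):
    """Return the candidate if it stays close to the original, else the original."""
    src = content_words(original)
    out = content_words(candidate)
    # Share of the learner's content words that survive the rephrasing.
    retained = len(src & out) / len(src) if src else 1.0
    # Any newly introduced content word must come from the allowed vocabulary.
    new_words_ok = (out - src) <= set(allowed_vocab)
    return candidate if retained >= min_retained and new_words_ok else original


vocab = {"went", "bought"}
kept = guard_rephrase("I go to store yesterday", "I went to the store yesterday", vocab)
rejected = guard_rephrase(
    "I go to store yesterday", "I purchased groceries at the emporium", vocab
)
```

Word overlap is only a crude proxy for semantic intent; it is used here to keep the sketch dependency‑free, and an embedding‑based similarity check would be a natural substitute.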

Educational implications are threefold. First, feedback modality profoundly influences affective states; implicit, flow‑preserving feedback sustains immersion. Second, embedding learner‑specific identity cues (voice clone) amplifies motivation by linking corrective input to the Ideal L2 Self, turning feedback into a self‑affirming experience rather than external criticism. Third, even with imperfect cloning technology, the combined approach yields measurable gains over traditional explicit correction.

The paper concludes that AI Twin demonstrates a viable path toward emotionally intelligent, identity‑aware language learning tools. Future work should explore long‑term retention and scalability across proficiency levels and cultural contexts, and should improve voice‑cloning fidelity through multimodal training. Overall, AI Twin offers a compelling example of how personalized AI can transform corrective feedback from a disruptive interruption into a supportive, motivating dialogue partner.
