SAIL-RL: Guiding MLLMs in When and How to Think via Dual-Reward RL Tuning

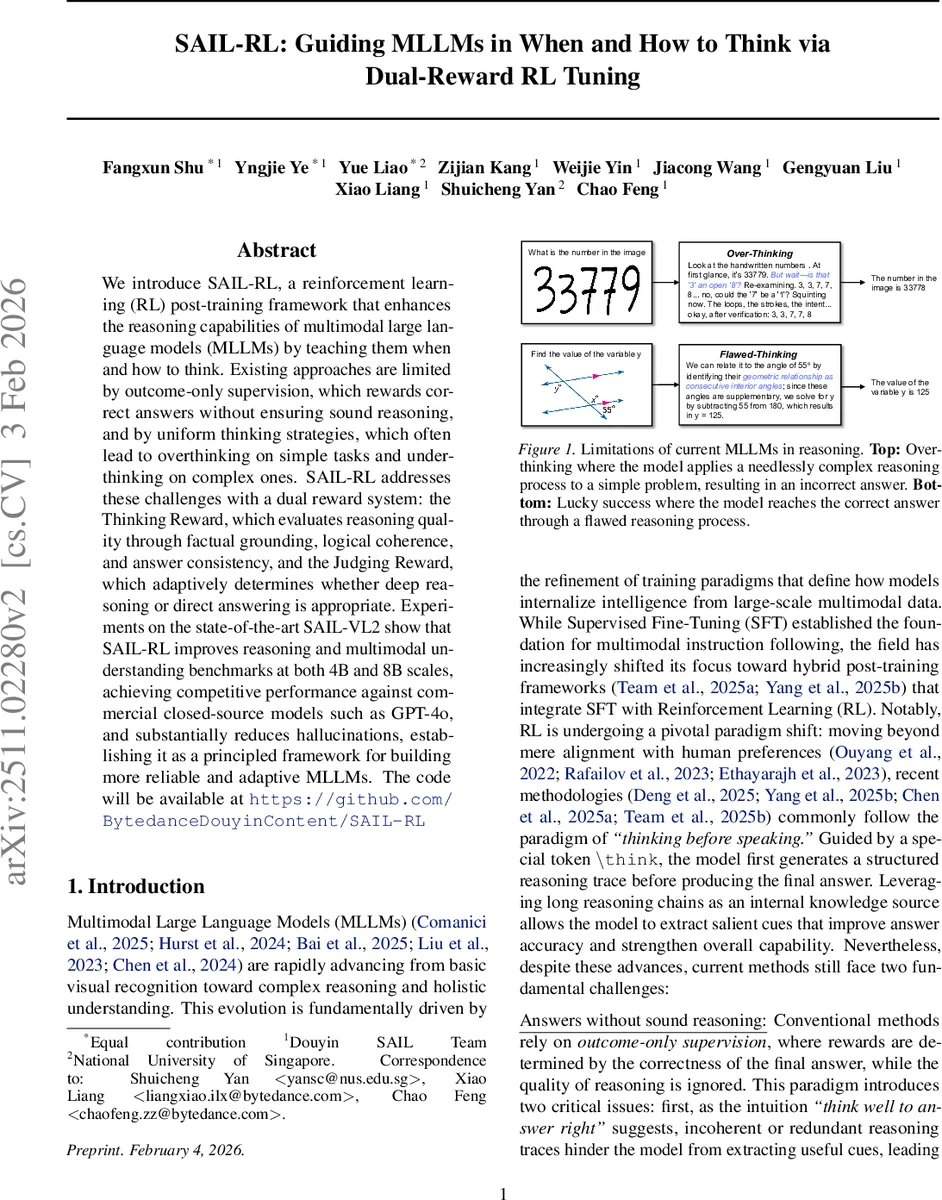

We introduce SAIL-RL, a reinforcement learning (RL) post-training framework that enhances the reasoning capabilities of multimodal large language models (MLLMs) by teaching them when and how to think. Existing approaches are limited by outcome-only supervision, which rewards correct answers without ensuring sound reasoning, and by uniform thinking strategies, which often lead to overthinking on simple tasks and underthinking on complex ones. SAIL-RL addresses these challenges with a dual reward system: the Thinking Reward, which evaluates reasoning quality through factual grounding, logical coherence, and answer consistency, and the Judging Reward, which adaptively determines whether deep reasoning or direct answering is appropriate. Experiments on the state-of-the-art SAIL-VL2 show that SAIL-RL improves reasoning and multimodal understanding benchmarks at both 4B and 8B scales, achieving competitive performance against commercial closed-source models such as GPT-4o, and substantially reduces hallucinations, establishing it as a principled framework for building more reliable and adaptive MLLMs. The code will be available at https://github.com/BytedanceDouyinContent/SAIL-RL.

💡 Research Summary

**

The paper introduces SAIL‑RL, a reinforcement‑learning (RL) post‑training framework designed to endow multimodal large language models (MLLMs) with the ability to decide when and how to think. Existing approaches rely on outcome‑only supervision, rewarding correct final answers while ignoring the quality of the reasoning process. Moreover, they apply a uniform “think‑before‑speak” strategy to all inputs, which leads to over‑thinking on simple tasks (wasting computation and generating noisy chains) and under‑thinking on complex tasks (producing shallow reasoning and errors).

SAIL‑RL tackles these issues with a dual‑reward system:

-

Thinking Reward – evaluates the reasoning trace itself along three dimensions:

- Logical Coherence (structural soundness of problem decomposition and deductive consistency).

- Factual Grounding (verification against visual evidence, textual context, and world knowledge).

- Answer Consistency (ensuring the final answer follows directly from the preceding steps).

Each dimension yields a binary score (0/1); the overall thinking reward is the average of the three.

-

Judging Reward – a binary signal that tells the model whether to engage the reasoning mode. The model emits a special

<think>token before answering; this decision is compared against a ground‑truth difficulty label (simple vs. complex). Correct alignment receives a reward of 1, otherwise 0. This encourages the model to skip CoT for easy queries and to invoke full reasoning for hard ones, directly addressing efficiency.

The two rewards are combined in a Cascading Reward System:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment