Causality Guided Representation Learning for Cross-Style Hate Speech Detection

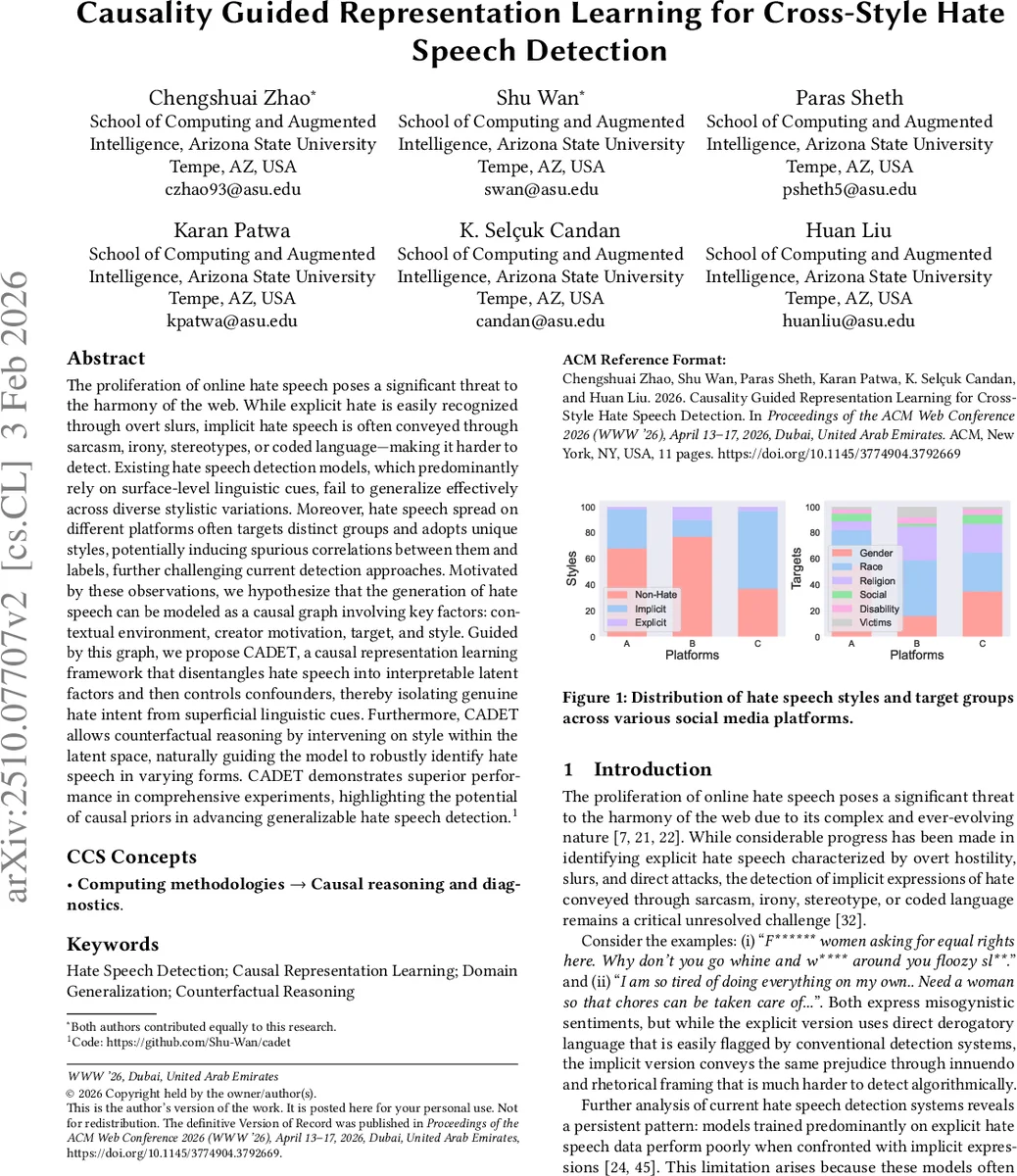

The proliferation of online hate speech poses a significant threat to the harmony of the web. While explicit hate is easily recognized through overt slurs, implicit hate speech is often conveyed through sarcasm, irony, stereotypes, or coded language – making it harder to detect. Existing hate speech detection models, which predominantly rely on surface-level linguistic cues, fail to generalize effectively across diverse stylistic variations. Moreover, hate speech spread on different platforms often targets distinct groups and adopts unique styles, potentially inducing spurious correlations between them and labels, further challenging current detection approaches. Motivated by these observations, we hypothesize that the generation of hate speech can be modeled as a causal graph involving key factors: contextual environment, creator motivation, target, and style. Guided by this graph, we propose CADET, a causal representation learning framework that disentangles hate speech into interpretable latent factors and then controls confounders, thereby isolating genuine hate intent from superficial linguistic cues. Furthermore, CADET allows counterfactual reasoning by intervening on style within the latent space, naturally guiding the model to robustly identify hate speech in varying forms. CADET demonstrates superior performance in comprehensive experiments, highlighting the potential of causal priors in advancing generalizable hate speech detection.

💡 Research Summary

The paper tackles the persistent problem of detecting hate speech that varies in style—particularly the challenge of implicit hate that evades surface‑level lexical cues. The authors argue that existing classifiers, which rely heavily on explicit slurs and overt aggression, fail to generalize across platforms and stylistic shifts because they conflate true hateful intent with spurious contextual signals such as platform policies, audience demographics, or prevailing sociopolitical climates. To address this, they propose a causal generative model of hate speech that includes four key variables: contextual environment (U), creator motivation (M), target group (T), and linguistic style (S). In this graph, U is an unobserved confounder that simultaneously influences M, T, and S, thereby inducing correlations that can mislead a classifier. Crucially, they introduce a latent variable M* representing the creator’s genuine hateful intent, which is assumed to be invariant to U and thus serves as the optimal domain‑invariant predictor of the binary hate label Y.

Building on this causal graph, the authors present CADET (Causality Guided Representation Learning for Cross‑Style Hate Speech Detection). CADET consists of three tightly integrated components:

-

Causally‑Aligned Disentanglement – A pretrained RoBERTa encoder maps each post x to a fixed‑length vector h. From h, four latent factors are inferred: a continuous Gaussian z_u for the confounder, a continuous Gaussian z_m for raw motivation, a discrete Gumbel‑Softmax‑based z_t for the target, and a continuous z_s for style. Orthogonal projection and adversarial objectives enforce independence among these factors, mirroring the causal graph.

-

Confounder Mitigation – A Gradient Reversal Layer (GRL) and a domain discriminator are attached to z_u, encouraging the encoder to discard platform‑specific information. Additional regularization terms penalize statistical dependence between z_u and the other latents, further reducing spurious correlations.

-

Counterfactual Reasoning – Leveraging the causal graph, the model intervenes on the style node S while keeping motivation M and target T fixed, generating counterfactual posts that express the same hateful intent in an alternative style (explicit ↔ implicit). A style‑flipping network implements this transformation. Both original and counterfactual samples are trained with the same hate label, enforcing style‑invariant representations. The overall loss combines reconstruction, confounder‑mitigation, style‑independence, and counterfactual consistency terms, with hyper‑parameters tuned on a validation split.

The authors evaluate CADET on four publicly available hate‑speech corpora spanning multiple platforms (Twitter, Reddit, Gab) and both explicit and implicit styles. They frame cross‑style detection as a domain‑generalization problem: train on one style (source) and test on the other (target). CADET achieves an average macro‑F1 of 0.81, a 13 % relative improvement over the strongest baselines (including adversarial domain‑invariant methods such as MultiFOLD). Ablation studies show that removing any of the three core components degrades performance, with the confounder‑mitigation module having the largest impact on cross‑platform robustness. Visualization of the latent space reveals that the inferred intent latent (z_m*) clusters hateful posts regardless of style, while the style latent (z_s) cleanly separates explicit from implicit expressions, confirming successful disentanglement.

In summary, CADET demonstrates that embedding explicit causal priors into representation learning—through factor disentanglement, confounder control, and counterfactual style intervention—substantially improves the ability of hate‑speech detectors to generalize across stylistic and platform variations. The work opens avenues for extending causal‑guided models to temporal dynamics, multilingual settings, and richer sets of confounders, thereby moving toward more reliable and responsible content moderation systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment